## Bar Chart: Performance Comparison of Llama Models on Question Answering Datasets

### Overview

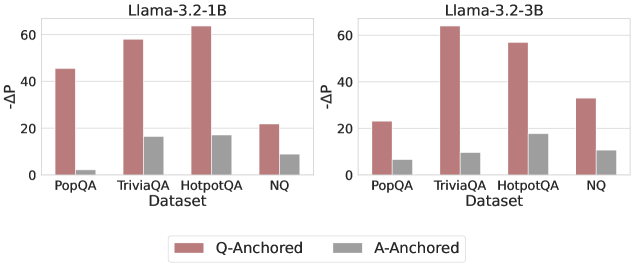

The image presents a grouped bar chart comparing −ΔP (the negated change in a quantity P) for two Llama models, Llama-3.2-1B and Llama-3.2-3B, across four question answering datasets: PopQA, TriviaQA, HotpotQA, and NQ. The chart does not define P; it likely denotes a probability or accuracy score. Each dataset has two bars, one for the "Q-Anchored" approach and one for the "A-Anchored" approach.

### Components/Axes

* **X-axis:** "Dataset" with categories: PopQA, TriviaQA, HotpotQA, NQ.

* **Y-axis:** "−ΔP" (negative Delta P), with labeled ticks from 0 to 60 in steps of 10. The tallest bar (HotpotQA, Llama-3.2-1B) reaches slightly above the top tick.

* **Legend:** Located at the bottom-center of the image.

* "Q-Anchored" – represented by a light red color (approximately #F08080).

* "A-Anchored" – represented by a gray color (approximately #808080).

* **Titles:** The chart has two panels, each titled with its model name: "Llama-3.2-1B" and "Llama-3.2-3B".
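The sign convention matters when reading the bars. Assuming ΔP follows the usual convention of a value after some intervention minus the value before it (an assumption: the chart defines neither P nor the intervention), the plotted quantity is:

$$
-\Delta P = -\left(P_{\text{after}} - P_{\text{before}}\right) = P_{\text{before}} - P_{\text{after}}
$$

Under this assumption, a taller bar means a larger drop in P.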

### Detailed Analysis

The chart is divided into two sections, one for each Llama model.

**Llama-3.2-1B:**

* **PopQA:** Q-Anchored is approximately 44, A-Anchored is approximately 8.

* **TriviaQA:** Q-Anchored is approximately 55, A-Anchored is approximately 16.

* **HotpotQA:** Q-Anchored is approximately 62, A-Anchored is approximately 18.

* **NQ:** Q-Anchored is approximately 28, A-Anchored is approximately 10.

**Llama-3.2-3B:**

* **PopQA:** Q-Anchored is approximately 22, A-Anchored is approximately 6.

* **TriviaQA:** Q-Anchored is approximately 60, A-Anchored is approximately 12.

* **HotpotQA:** Q-Anchored is approximately 54, A-Anchored is approximately 16.

* **NQ:** Q-Anchored is approximately 30, A-Anchored is approximately 8.
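The approximate bar heights above can be collected into a small table to compute the Q-Anchored minus A-Anchored gap for each model and dataset. This is a minimal sketch: the numbers are the visual estimates listed above, not the underlying data, and the names `readings` and `gaps` are illustrative.

```python
# Approximate bar heights (-ΔP) read off the chart; visual estimates only.
# Each entry is (Q-Anchored, A-Anchored).
readings = {
    "Llama-3.2-1B": {
        "PopQA": (44, 8),
        "TriviaQA": (55, 16),
        "HotpotQA": (62, 18),
        "NQ": (28, 10),
    },
    "Llama-3.2-3B": {
        "PopQA": (22, 6),
        "TriviaQA": (60, 12),
        "HotpotQA": (54, 16),
        "NQ": (30, 8),
    },
}


def gaps(data):
    """Return {model: {dataset: Q-Anchored minus A-Anchored}}."""
    return {
        model: {ds: q - a for ds, (q, a) in per_ds.items()}
        for model, per_ds in data.items()
    }


for model, per_ds in gaps(readings).items():
    print(model, per_ds)
```

With these estimates the gap ranges from about 16 (PopQA, Llama-3.2-3B) to about 48 (TriviaQA, Llama-3.2-3B).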

**Trends:**

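* For both models, the Q-Anchored bar is higher than the A-Anchored bar on every dataset, so Q-Anchoring yields a larger −ΔP. Whether a larger −ΔP indicates better performance depends on what P measures, which the chart does not state.

* The Q-Anchored values are highest on TriviaQA and HotpotQA for both models (about 55 and 62 for Llama-3.2-1B, about 60 and 54 for Llama-3.2-3B).

* The A-Anchored values are comparatively low on every dataset, ranging from about 8 to 18 for Llama-3.2-1B and from about 6 to 16 for Llama-3.2-3B.

* Compared with Llama-3.2-1B, Llama-3.2-3B has a markedly lower Q-Anchored value on PopQA (about 22 vs. 44), a somewhat lower value on HotpotQA (about 54 vs. 62), a higher value on TriviaQA (about 60 vs. 55), and a roughly equal value on NQ (about 30 vs. 28).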
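The trend statements can be checked against the same approximate readings. This sketch repeats the estimated bar heights from the Detailed Analysis (visual estimates, not source data); the variable names are illustrative.

```python
# Approximate bar heights (-ΔP) as (Q-Anchored, A-Anchored); visual estimates only.
readings = {
    "Llama-3.2-1B": {"PopQA": (44, 8), "TriviaQA": (55, 16), "HotpotQA": (62, 18), "NQ": (28, 10)},
    "Llama-3.2-3B": {"PopQA": (22, 6), "TriviaQA": (60, 12), "HotpotQA": (54, 16), "NQ": (30, 8)},
}

# Trend 1: Q-Anchored exceeds A-Anchored for every model and dataset.
q_above_a = all(q > a for per_ds in readings.values() for q, a in per_ds.values())

# Trend 2: the two datasets with the tallest Q-Anchored bar, per model.
top_two = {
    model: sorted(per_ds, key=lambda ds: per_ds[ds][0], reverse=True)[:2]
    for model, per_ds in readings.items()
}

# Trend 4: Q-Anchored height of the 3B model minus that of the 1B model, per dataset.
q_diff_3b_minus_1b = {
    ds: readings["Llama-3.2-3B"][ds][0] - readings["Llama-3.2-1B"][ds][0]
    for ds in readings["Llama-3.2-1B"]
}

print(q_above_a)
print(top_two)
print(q_diff_3b_minus_1b)
```

A difference of only a couple of units (NQ, +2) is within the error of reading bar heights by eye, which is why "roughly equal" is the safer description there.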

### Key Observations

* The gap between Q-Anchored and A-Anchored values is large (roughly 16 to 48 points), suggesting that the anchoring method strongly affects −ΔP.

* The values vary considerably across datasets: Q-Anchored ranges from about 28 (NQ) to 62 (HotpotQA) for Llama-3.2-1B and from about 22 (PopQA) to 60 (TriviaQA) for Llama-3.2-3B.

* The two models differ most on PopQA, where the Q-Anchored value for Llama-3.2-3B is about half that of Llama-3.2-1B.

### Interpretation

The data suggest that the "Q-Anchored" approach produces a consistently larger −ΔP than the "A-Anchored" approach across all tested datasets for both Llama models. If a larger −ΔP corresponds to better performance, this would imply that anchoring the model's attention or processing on the question is more effective than anchoring it on the answer; the chart alone does not establish that reading. The variation across datasets indicates that the effect is dataset-dependent, possibly because of differences in question complexity, answer format, or the domain knowledge required. The larger model does not uniformly raise −ΔP: Llama-3.2-3B is lower than Llama-3.2-1B on PopQA (sharply) and HotpotQA, higher on TriviaQA, and about equal on NQ, so model scale alone does not explain the pattern, and the chart does not show why the 3B model drops so much on PopQA. Under the same reading of the metric, the uniformly low A-Anchored values would suggest that answer anchoring is a less suitable strategy for these question answering tasks.