
## Bar Chart: Accuracy vs. Difficulty Level for Different Models

### Overview

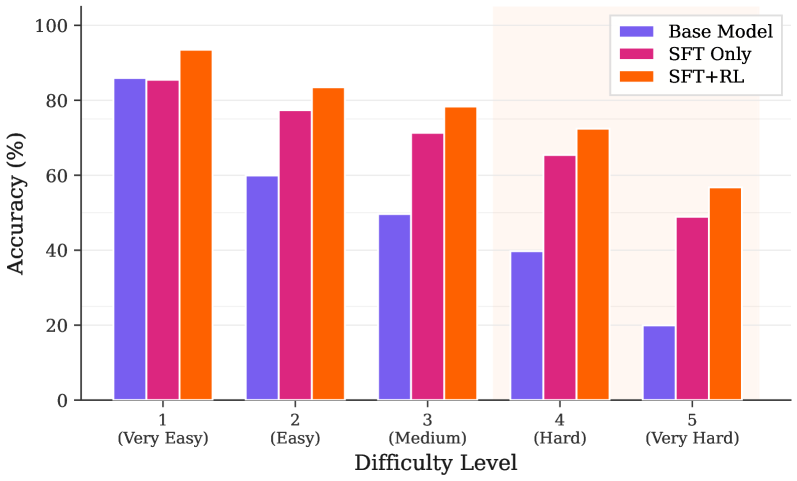

This grouped bar chart compares the accuracy of three models (Base Model, SFT Only, and SFT+RL) across five difficulty levels: 1 (Very Easy), 2 (Easy), 3 (Medium), 4 (Hard), and 5 (Very Hard). Accuracy is reported as a percentage on a scale from 0% to 100%.

### Components/Axes

* **X-axis:** Difficulty Level, with categories: 1 (Very Easy), 2 (Easy), 3 (Medium), 4 (Hard), 5 (Very Hard).

* **Y-axis:** Accuracy (%), ranging from 0 to 100.

* **Legend:** Located in the top-left corner, identifying the three data series:

    * Base Model (Purple)

    * SFT Only (Magenta/Pink)

    * SFT+RL (Orange)

### Detailed Analysis

The chart consists of five groups of three bars, one for each model at each difficulty level.

**Difficulty Level 1 (Very Easy):**

* Base Model: Approximately 84% accuracy.

* SFT Only: Approximately 88% accuracy.

* SFT+RL: Approximately 94% accuracy.

**Difficulty Level 2 (Easy):**

* Base Model: Approximately 58% accuracy.

* SFT Only: Approximately 76% accuracy.

* SFT+RL: Approximately 82% accuracy.

**Difficulty Level 3 (Medium):**

* Base Model: Approximately 48% accuracy.

* SFT Only: Approximately 69% accuracy.

* SFT+RL: Approximately 76% accuracy.

**Difficulty Level 4 (Hard):**

* Base Model: Approximately 40% accuracy.

* SFT Only: Approximately 65% accuracy.

* SFT+RL: Approximately 68% accuracy.

**Difficulty Level 5 (Very Hard):**

* Base Model: Approximately 30% accuracy.

* SFT Only: Approximately 52% accuracy.

* SFT+RL: Approximately 62% accuracy.

**Trends:**

* **Base Model:** Accuracy decreases at every step as difficulty increases, from about 84% at level 1 to about 30% at level 5.

* **SFT Only:** Accuracy also decreases with difficulty, but more slowly than the Base Model: about 36 points in total, compared with about 54 for the Base Model.

* **SFT+RL:** Accuracy decreases with difficulty by about 32 points in total, and this model remains the most accurate at every difficulty level.
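The trends above can be checked directly against the approximate readings listed earlier. The following is a minimal sketch, assuming the estimated bar heights are taken at face value; the names `ACCURACY`, `total_drop`, and `is_strictly_decreasing` are illustrative and do not come from the chart.

```python
# Approximate accuracies (%) read off the chart for difficulty levels 1..5.
# These are the estimates listed in the analysis, not exact measurements.
ACCURACY = {
    "Base Model": [84, 58, 48, 40, 30],
    "SFT Only": [88, 76, 69, 65, 52],
    "SFT+RL": [94, 82, 76, 68, 62],
}


def total_drop(values):
    """Percentage points lost between the easiest and hardest level."""
    return values[0] - values[-1]


def is_strictly_decreasing(values):
    """True if accuracy falls at every step from one level to the next."""
    return all(a > b for a, b in zip(values, values[1:]))


drops = {model: total_drop(values) for model, values in ACCURACY.items()}

for model, drop in drops.items():
    decreasing = is_strictly_decreasing(ACCURACY[model])
    print(f"{model}: drop of {drop} points, strictly decreasing: {decreasing}")
```

On these estimates, the Base Model loses the most accuracy between level 1 and level 5, and SFT+RL loses the least.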

### Key Observations

* SFT+RL consistently outperforms both the Base Model and SFT Only across all difficulty levels.

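* The Base Model exhibits the largest drop in accuracy as difficulty increases (about 54 points from level 1 to level 5).

* The gap between the Base Model and SFT+RL grows with difficulty, from about 10 points at level 1 to about 32 points at level 5. The gap between SFT Only and SFT+RL is smaller and less regular, ranging from about 3 points at level 4 to about 10 points at level 5.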

* All models show a clear negative correlation between difficulty level and accuracy.
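To make the comparison between models concrete, the per-level gaps can be computed from the approximate readings. This is a hedged sketch using the estimated values from the analysis; the helper `gap` and the variable names are hypothetical and chosen only for illustration.

```python
# Approximate accuracies (%) read off the chart for difficulty levels 1..5.
ACCURACY = {
    "Base Model": [84, 58, 48, 40, 30],
    "SFT Only": [88, 76, 69, 65, 52],
    "SFT+RL": [94, 82, 76, 68, 62],
}
LEVELS = [1, 2, 3, 4, 5]


def gap(model_a, model_b):
    """Per-level accuracy difference (model_a minus model_b), in points."""
    return [a - b for a, b in zip(ACCURACY[model_a], ACCURACY[model_b])]


sft_gain = gap("SFT Only", "Base Model")    # gain from supervised fine-tuning
rl_gain = gap("SFT+RL", "SFT Only")         # extra gain from adding RL
total_gain = gap("SFT+RL", "Base Model")    # combined gain over the base

# SFT+RL is the top model at a level if it beats both other models there.
sft_rl_always_best = all(
    ACCURACY["SFT+RL"][i] > max(ACCURACY["Base Model"][i], ACCURACY["SFT Only"][i])
    for i in range(len(LEVELS))
)

for level, s, r, t in zip(LEVELS, sft_gain, rl_gain, total_gain):
    print(f"Level {level}: SFT gain {s}, RL gain {r}, total gain {t}")
print("SFT+RL best at every level:", sft_rl_always_best)
```

Separating the SFT gain from the additional RL gain shows how much of the total improvement each training stage contributes at each difficulty level.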

### Interpretation

The data suggests that adding Reinforcement Learning (RL) on top of Supervised Fine-Tuning (SFT) improves accuracy at every difficulty level. The Base Model, which has had no fine-tuning, degrades fastest as difficulty increases, indicating that adapting the model to the task matters most on harder problems. SFT Only improves on the Base Model by roughly 4 points at level 1 and by 18 to 25 points at levels 2 through 5, so supervised fine-tuning accounts for most of the gain. SFT+RL adds a further 3 to 10 points over SFT Only, with its largest margin (about 10 points) at level 5.

Declining accuracy with increasing difficulty is expected, since harder tasks require more sophisticated reasoning and problem-solving. The gap between the Base Model and the fine-tuned models widens at higher difficulty levels, while the additional gain from RL is less uniform: it is smallest at level 4 and largest at level 5. Overall, the chart supports the idea that combining supervised and reinforcement learning yields more robust and adaptable models than the Base Model or SFT alone.