
## Diagram: Kimi-VL Training Pipeline

### Overview

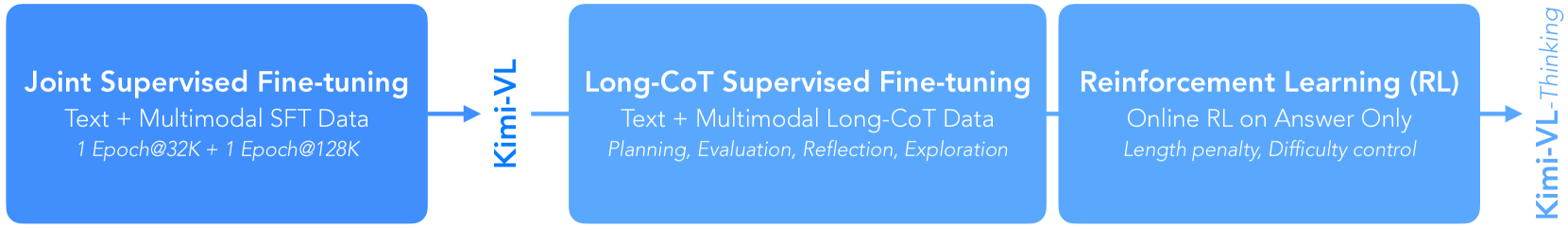

The image shows the training pipeline for Kimi-VL, a multimodal large language model, as a sequential diagram. The pipeline consists of three stages: Joint Supervised Fine-tuning, Long-CoT Supervised Fine-tuning, and Reinforcement Learning (RL). An arrow indicates the flow of training from the first stage to the second, and then to the third. The "Kimi-VL Thinking" logo appears on the right side of the diagram.

### Components/Axes

The diagram is composed of three rectangular blocks, each representing a training stage. Each block contains text describing the stage's methodology and data used. There are no axes in this diagram.

### Content Details

**Block 1: Joint Supervised Fine-tuning**

* **Title:** Joint Supervised Fine-tuning

* **Description:** Text + Multimodal SFT Data

* **Details:** 1 Epoch@32K + 1 Epoch@128K

**Block 2: Long-CoT Supervised Fine-tuning**

* **Title:** Long-CoT Supervised Fine-tuning

* **Description:** Text + Multimodal Long-CoT Data

* **Details:** Planning, Evaluation, Reflection, Exploration

**Block 3: Reinforcement Learning (RL)**

* **Title:** Reinforcement Learning (RL)

* **Description:** Online RL on Answer Only

* **Details:** Length penalty, Difficulty control

**Arrow:** A blue arrow links the blocks in order, indicating the sequential flow of training from the first stage through the third.

**Kimi-VL Logo:** The logo "Kimi-VL Thinking" is present on the right side of the diagram, vertically aligned with the three blocks.

### Key Observations

The diagram highlights a three-stage training process. The first stage is supervised fine-tuning on text and multimodal data, run for one epoch at 32K followed by one epoch at 128K (presumably sequence lengths in tokens). The second stage is supervised fine-tuning on long chain-of-thought (Long-CoT) data and lists planning, evaluation, reflection, and exploration. The final stage applies online reinforcement learning to the answer only, with a length penalty and difficulty control.
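As a reading aid, the three blocks can be written down as an ordered configuration. This is only a sketch: the `Stage` class, the `PIPELINE` tuple, and the `run_order` helper are hypothetical names introduced here, and the strings are copied from the diagram labels rather than from any real training configuration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Stage:
    """One block of the diagram (hypothetical container, not a real config class)."""
    name: str                 # block title
    data: str                 # "Description" line of the block
    details: Tuple[str, ...]  # "Details" line, split into separate items


# Order matters: each stage starts from the model produced by the previous one.
PIPELINE: Tuple[Stage, ...] = (
    Stage(
        name="Joint Supervised Fine-tuning",
        data="Text + Multimodal SFT Data",
        details=("1 Epoch@32K", "1 Epoch@128K"),
    ),
    Stage(
        name="Long-CoT Supervised Fine-tuning",
        data="Text + Multimodal Long-CoT Data",
        details=("Planning", "Evaluation", "Reflection", "Exploration"),
    ),
    Stage(
        name="Reinforcement Learning (RL)",
        data="Online RL on Answer Only",
        details=("Length penalty", "Difficulty control"),
    ),
)


def run_order(pipeline: Tuple[Stage, ...]) -> List[str]:
    """Return the stage names numbered in execution order."""
    return [f"{i}. {stage.name}" for i, stage in enumerate(pipeline, start=1)]


if __name__ == "__main__":
    for line in run_order(PIPELINE):
        print(line)
```

Keeping the stages in an immutable tuple mirrors the strictly sequential flow that the arrow conveys.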

### Interpretation

This diagram illustrates a progressive training strategy for Kimi-VL. It begins with joint supervised fine-tuning on text and multimodal data to establish a baseline, then moves to supervised fine-tuning on long chain-of-thought (Long-CoT) data, and finally refines the model through online reinforcement learning applied to the answer only. The "Planning, Evaluation, Reflection, Exploration" label in the second stage suggests that the Long-CoT data is meant to teach these reasoning behaviors. The "Length penalty" and "Difficulty control" labels in the RL stage suggest an effort to discourage overly long responses and to manage the difficulty of the training problems. The sequential layout suggests that each stage builds on the previous one. The diagram is a high-level overview: the only quantitative details it gives are the Stage 1 epoch counts and their 32K and 128K settings.
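To make the RL block's labels concrete, the following is a minimal sketch of what "answer only" rewards with a length penalty and difficulty control could look like. The diagram names these ideas but gives no formulas, so everything here is an assumption for illustration: the exact-match check, the linear penalty, the `budget` and `weight` parameters, and the pass-rate thresholds are all hypothetical and should not be read as the method actually used for Kimi-VL.

```python
def answer_reward(predicted: str, reference: str) -> float:
    """Score only the final answer: 1.0 on a normalized exact match, else 0.0.

    Assumption: the reasoning text is not scored at all ("answer only").
    """
    return 1.0 if predicted.strip().lower() == reference.strip().lower() else 0.0


def length_penalty(num_tokens: int, budget: int, weight: float = 0.5) -> float:
    """Hypothetical linear penalty on tokens beyond `budget`, capped at `weight`."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    overflow = max(0, num_tokens - budget)
    return weight * min(1.0, overflow / budget)


def shaped_reward(
    predicted: str,
    reference: str,
    num_tokens: int,
    budget: int = 1024,
    weight: float = 0.5,
) -> float:
    """Answer reward minus the length penalty for one sampled response."""
    return answer_reward(predicted, reference) - length_penalty(num_tokens, budget, weight)


def keep_prompt(pass_rate: float, low: float = 0.2, high: float = 0.8) -> bool:
    """Hypothetical difficulty control: keep prompts that are neither
    almost always solved nor almost never solved by the current model."""
    return low <= pass_rate <= high


if __name__ == "__main__":
    # Correct and within budget: no penalty is applied.
    print(shaped_reward("42", " 42 ", num_tokens=512))
    # Correct but 50% over budget: half of the maximum penalty is applied.
    print(shaped_reward("42", "42", num_tokens=1536))
    # A prompt solved in every sample is filtered out as too easy.
    print(keep_prompt(1.0))
```

Under these assumptions, a correct but verbose response still earns less than a correct concise one, and prompts at the extremes of difficulty are dropped from the training pool.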