## Flowchart: Kimi-VL Training Pipeline

### Overview

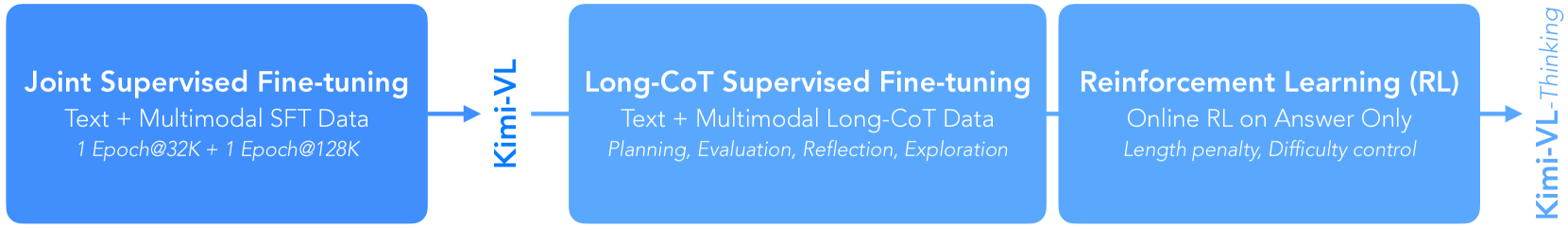

The image depicts a three-stage training pipeline for the Kimi-VL model, visualized as a horizontal flowchart with three blue rectangular blocks connected by arrows. Each block represents a distinct training phase, with technical specifications and objectives labeled in white text. Arrows between blocks indicate progression and data flow.

### Components/Axes

1. **Blocks (Training Phases)**:

- **Left Block**: "Joint Supervised Fine-tuning"

Subtext: "Text + Multimodal SFT Data\n1 Epoch@32K + 1 Epoch@128K"

- **Middle Block**: "Long-CoT Supervised Fine-tuning"

Subtext: "Text + Multimodal Long-CoT Data\nPlanning, Evaluation, Reflection, Exploration"

- **Right Block**: "Reinforcement Learning (RL)"

Subtext: "Online RL on Answer Only\nLength penalty, Difficulty control"

2. **Arrows (Flow Direction)**:

- Left-to-middle arrow labeled "Kimi-VL"

- Middle-to-right arrow labeled "Kimi-VL-Thinking"
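The blocks listed above can be captured as a small data structure, which makes the stage order and labels easy to inspect. This is only a sketch that transcribes the figure's text; the `Stage` class, the `PIPELINE` tuple, and the `describe` helper are hypothetical names introduced for illustration and do not come from any Kimi-VL code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One block of the flowchart (hypothetical container, not an official API)."""
    name: str                 # block title as shown in the figure
    data: str                 # first line of the block's subtext
    details: tuple[str, ...]  # remaining subtext items


# Stages in left-to-right order, transcribed from the figure labels.
PIPELINE = (
    Stage(
        "Joint Supervised Fine-tuning",
        "Text + Multimodal SFT Data",
        ("1 Epoch@32K", "1 Epoch@128K"),
    ),
    Stage(
        "Long-CoT Supervised Fine-tuning",
        "Text + Multimodal Long-CoT Data",
        ("Planning", "Evaluation", "Reflection", "Exploration"),
    ),
    Stage(
        "Reinforcement Learning (RL)",
        "Online RL on Answer Only",
        ("Length penalty", "Difficulty control"),
    ),
)


def describe(pipeline: tuple[Stage, ...]) -> str:
    """Join the stage names in order to show the progression."""
    return " -> ".join(stage.name for stage in pipeline)


if __name__ == "__main__":
    print(describe(PIPELINE))
```

Keeping the stages in an ordered, immutable tuple mirrors the strictly sequential flow shown by the arrows.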

### Detailed Analysis

- **Block 1 (Joint Supervised Fine-tuning)**:

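Applies supervised fine-tuning (SFT) on text and multimodal data in two passes: one epoch at 32K followed by one epoch at 128K. The "@32K" and "@128K" labels denote the context (sequence) length in tokens used for each epoch, not the size of the epoch. "SFT" indicates standard supervised learning on labeled examples.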

- **Block 2 (Long-CoT Supervised Fine-tuning)**:

Builds on the first phase by fine-tuning on long Chain-of-Thought (Long-CoT) data, again spanning text and multimodal examples. The subtext names four reasoning behaviors: planning, evaluation, reflection, and exploration. This suggests the phase targets structured, multi-step reasoning.

- **Block 3 (Reinforcement Learning)**:

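Shifts to online RL with answer-only feedback and names two additional mechanisms: a length penalty and difficulty control. The length penalty presumably discourages unnecessarily long responses, while difficulty control presumably governs which problems are used for training; the figure gives no further detail on either.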
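To make the idea of answer-only feedback with a length penalty concrete, here is a minimal sketch of one possible reward function. Everything in it is an assumption made for illustration: the figure does not specify the reward formula, so the `Answer:` marker convention, the whitespace token count, the `max_tokens` budget, and the `penalty_weight` value are all hypothetical and not Kimi-VL's actual method.

```python
def answer_only_reward(
    response: str,
    reference: str,
    max_tokens: int = 1024,
    penalty_weight: float = 0.5,
) -> float:
    """Score a response on its final answer only, minus a length penalty.

    Assumptions (not from the figure): the final answer follows the last
    "Answer:" marker, tokens are approximated by whitespace splitting, and
    the penalty grows linearly once the response exceeds `max_tokens`.
    """
    # Only the text after the last "Answer:" marker is compared, so the
    # reasoning that precedes it does not affect correctness.
    answer = response.rsplit("Answer:", 1)[-1].strip()
    correct = 1.0 if answer == reference.strip() else 0.0

    # Penalize the fraction by which the response exceeds the budget,
    # capped at 1.0 so the penalty never exceeds `penalty_weight`.
    n_tokens = len(response.split())
    overflow = max(0, n_tokens - max_tokens) / max_tokens
    return correct - penalty_weight * min(1.0, overflow)


if __name__ == "__main__":
    print(answer_only_reward("First add, then check. Answer: 42", "42"))
```

Under these assumptions a correct response within the budget scores 1.0, and the same correct answer wrapped in an over-long response scores less, which is the trade-off the block's subtext hints at.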

### Key Observations

1. **Progressive Complexity**: Each phase adds specialized components (CoT data, RL constraints) to enhance the model's capabilities.

2. **Data Flow**: The pipeline transitions from supervised learning (Blocks 1-2) to reinforcement learning (Block 3), indicating a hybrid approach.

3. **Technical Specificity**: The only quantities stated are the epoch counts and context lengths in the first block (1 epoch at 32K, 1 epoch at 128K). The RL mechanisms (length penalty, difficulty control) are named but not quantified.

### Interpretation

The flowchart illustrates a staged methodology for training Kimi-VL, where each phase addresses specific limitations of the prior. The progression from supervised fine-tuning to RL reflects a common pattern in LLM development:

- **Phase 1** establishes foundational knowledge via SFT.

- **Phase 2** enhances reasoning via CoT data, critical for complex tasks.

- **Phase 3** refines the model through online RL, balancing answer quality against response length.

The explicit mention of "Answer Only" in the RL block most plausibly means that feedback is based on the final answer alone, not on the intermediate reasoning, while "Difficulty control" implies that training is adapted to task difficulty; the figure does not elaborate on either. This pipeline likely aims to balance breadth (multimodal data) and depth (reasoning/RL) in Kimi-VL's capabilities.