## Diagram: Comparison of Generated Hypotheses for Two Scenarios

### Overview

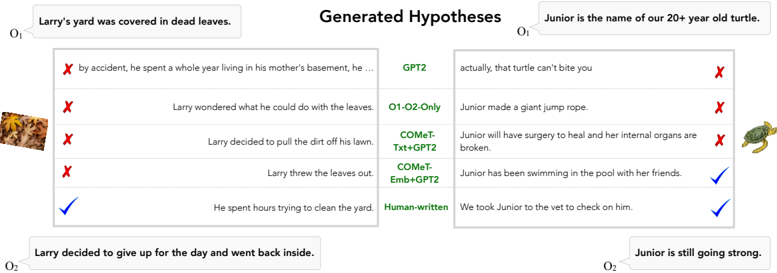

The image is a structured comparison chart that evaluates how well different AI models generate plausible hypotheses for two distinct narrative scenarios. It presents the scenarios side by side, one on the left and one on the right. For each scenario it lists the hypothesis produced by each of four models alongside a human-written hypothesis, and marks every hypothesis as correct (✓) or incorrect (✗).

### Components/Axes

* **Structure:** The diagram is organized into two vertical scenario columns, one per narrative scenario, flanking a central column of model names.

* **Left Column (Scenario 1):**
  * **Context (O1):** "Larry's yard was covered in dead leaves."
  * **Context (O2):** "Larry decided to give up for the day and went back inside."
  * **Image:** A small thumbnail of a leaf-covered yard is placed to the left of the hypothesis list.

* **Right Column (Scenario 2):**
  * **Context (O1):** "Junior is the name of our 20+ year old turtle."
  * **Context (O2):** "Junior is still going strong."
  * **Image:** A small thumbnail of a turtle is placed to the right of the hypothesis list.

* **Central Column (Models):** Lists the five sources of hypotheses, vertically aligned between the two scenario columns.
  * GPT2
  * O1-O2-Only
  * COMET-Txt+GPT2
  * COMET-Emb+GPT2
  * Human-written

* **Hypothesis Lists:** For each scenario, five hypotheses are listed, each aligned with a model name. A symbol (✓ or ✗) is placed on the outer edge (left for Larry, right for Junior) to indicate correctness.

* **Legend:** Located at the bottom center, it defines the symbols:
  * ✓ = Correct
  * ✗ = Incorrect
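The layout above maps onto a small record per scenario: two observations plus one judged hypothesis per source. The Python sketch below is a hypothetical representation (the class and field names are illustrative, not taken from the source). It also shows how a hypothesis is meant to be read, as the middle sentence between O1 and O2.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    source: str    # model name or "Human-written"
    text: str      # the generated (or written) hypothesis
    correct: bool  # True for ✓, False for ✗


@dataclass
class Scenario:
    o1: str  # first observation (context before the hypothesis)
    o2: str  # second observation (context after the hypothesis)
    hypotheses: list[Hypothesis] = field(default_factory=list)

    def as_story(self, h: Hypothesis) -> str:
        """Place a hypothesis between O1 and O2 to read it as a three-sentence story."""
        return f"{self.o1} {h.text} {self.o2}"


# Only the human-written hypothesis is filled in here, for brevity.
larry = Scenario(
    o1="Larry's yard was covered in dead leaves.",
    o2="Larry decided to give up for the day and went back inside.",
    hypotheses=[
        Hypothesis("Human-written", "He spent hours trying to clean the yard.", True),
    ],
)

print(larry.as_story(larry.hypotheses[0]))
```

Reading each hypothesis in the O1, hypothesis, O2 order makes it easier to see why some candidates break the story even when they mention the right topic.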

### Detailed Analysis

**Scenario 1: Larry's Yard**

* **Trend:** The hypotheses generally attempt to explain what Larry did about the leaves. Read top to bottom, the list moves from an off-topic continuation (GPT2), through a restatement of the problem and two implausible or inconsistent actions, to the human-written hypothesis, the only one marked correct.

* **Data Points (Hypotheses & Judgments):**

1. **GPT2:** "by accident, he spent a whole year living in his mother's basement, he ..." (✗ - Incorrect, irrelevant to the context).

2. **O1-O2-Only:** "Larry wondered what he could do with the leaves." (✗ - Incorrect, this is a restatement of the problem, not a resolution).

3. **COMET-Txt+GPT2:** "Larry decided to pull the dirt off his lawn." (✗ - Incorrect, illogical action).

4. **COMET-Emb+GPT2:** "Larry threw the leaves out." (✗ - Incorrect, implies the job was finished, which conflicts with O2, where he gives up).

5. **Human-written:** "He spent hours trying to clean the yard." (✓ - Correct, a plausible action that fits between O1 and O2).

**Scenario 2: Junior the Turtle**

* **Trend:** The hypotheses attempt to bridge Junior's age (O1) and the statement that Junior is still going strong (O2). Three of the models produce irrelevant, fantastical, or overly dramatic content, while COMET-Emb+GPT2 and the human-written hypothesis offer plausible, everyday explanations.

* **Data Points (Hypotheses & Judgments):**

1. **GPT2:** "actually, that turtle can't bite you" (✗ - Incorrect, irrelevant to the narrative context).

2. **O1-O2-Only:** "Junior made a giant jump rope." (✗ - Incorrect, fantastical and illogical).

3. **COMET-Txt+GPT2:** "Junior will have surgery to heal and her internal organs are broken." (✗ - Incorrect, overly dramatic and contradicts O2 "still going strong").

4. **COMET-Emb+GPT2:** "Junior has been swimming in the pool with her friends." (✓ - Correct, a plausible, positive activity consistent with a healthy turtle).

5. **Human-written:** "We took Junior to the vet to check on him." (✓ - Correct, a logical and responsible action for a pet owner).

### Key Observations

1. **Performance Disparity:** The "Human-written" hypotheses are consistently marked correct for both scenarios, serving as the gold standard.

2. **Model Variability:** Model performance is inconsistent. For example, `COMET-Emb+GPT2` fails on the first scenario but succeeds on the second, while `GPT2`, `O1-O2-Only`, and `COMET-Txt+GPT2` fail both.

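3. **Nature of Errors:** The model errors fall into several categories: irrelevance (GPT2 on both scenarios), restating the problem (O1-O2-Only on Larry), fantastical invention (O1-O2-Only on Junior), logical inconsistency (COMET-Txt+GPT2 on Larry), and contradiction of O2 (COMET-Emb+GPT2 on Larry, COMET-Txt+GPT2 on Junior).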

4. **Spatial Layout:** The design uses symmetry and alignment to support direct comparison of the models across the two scenarios. The legend and images sit at the periphery so they do not clutter the core data.
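The per-model pattern in observations 1 and 2 can be checked with a simple tally. This Python sketch hard-codes the ✓/✗ marks as listed in the Detailed Analysis; the dictionary layout and the `summarize` helper are illustrative, not part of the source.

```python
# Judgments transcribed from the figure: ✓ -> True, ✗ -> False.
JUDGMENTS = {
    "GPT2":           {"Larry": False, "Junior": False},
    "O1-O2-Only":     {"Larry": False, "Junior": False},
    "COMET-Txt+GPT2": {"Larry": False, "Junior": False},
    "COMET-Emb+GPT2": {"Larry": False, "Junior": True},
    "Human-written":  {"Larry": True,  "Junior": True},
}


def summarize(judgments):
    """Group sources by how many of the scenarios they got right."""
    summary = {"correct on all": [], "mixed": [], "incorrect on all": []}
    for source, marks in judgments.items():
        n_correct = sum(marks.values())  # True counts as 1, False as 0
        if n_correct == len(marks):
            summary["correct on all"].append(source)
        elif n_correct == 0:
            summary["incorrect on all"].append(source)
        else:
            summary["mixed"].append(source)
    return summary


for group, sources in summarize(JUDGMENTS).items():
    print(f"{group}: {', '.join(sources)}")
```

Running it lists Human-written as the only source correct on both scenarios, COMET-Emb+GPT2 as mixed, and the other three models as incorrect on both.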

### Interpretation

This diagram presents qualitative examples from an evaluation of narrative reasoning and commonsense inference in AI models. It shows that a model such as `COMET-Emb+GPT2` can generate a contextually plausible hypothesis in one scenario yet fail in another. The human-written hypotheses provide a baseline of logical, context-aware reasoning that none of the models matches in both scenarios.

The chart suggests that the task requires understanding cause and effect, character motivation, and real-world plausibility, areas where the models shown here struggle. The two distinct scenarios (a mundane chore and a pet-care situation) give a small-scale view of whether the models' reasoning generalizes across contexts. The explicit ✓/✗ marks make the performance gaps immediately apparent and highlight the ongoing challenge of achieving robust, human-like narrative understanding in AI.