## Diagram: Robotic Manipulation Pipeline with Tokenized State Representation

### Overview

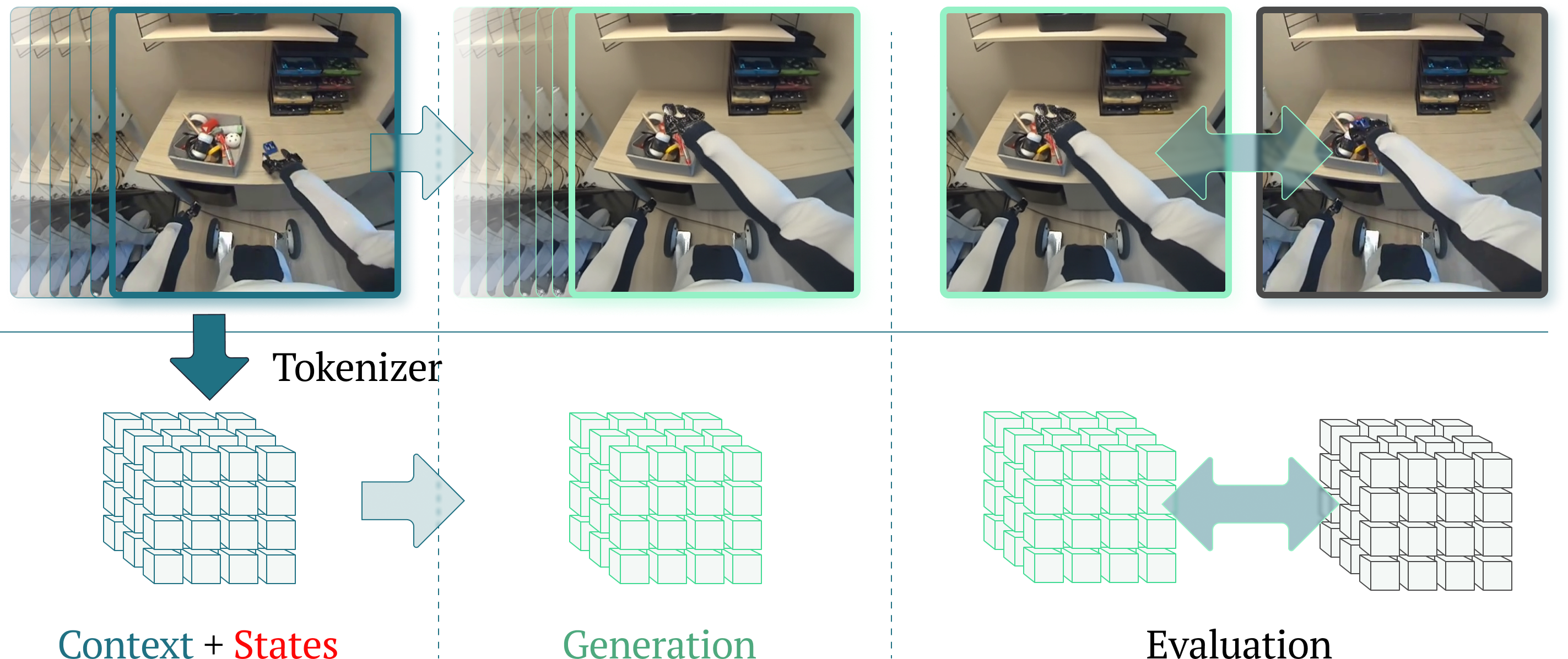

The image is a technical diagram illustrating a three-stage pipeline for processing visual data from a robotic manipulation task. It shows the transformation of raw camera images into abstract tokenized representations, followed by a generation step and a final evaluation comparison. The diagram is divided into three vertical panels separated by dashed lines, each representing a distinct phase of the process.

### Components/Flow

The diagram is organized into three main vertical sections, each containing two horizontal layers:

1. **Top Layer (Visual Data):** Shows first-person perspective images from a robot's camera.

2. **Bottom Layer (Abstract Representation):** Shows 3D grid structures (cubes) representing tokenized data.

**Flow Direction:** The process flows from left to right, indicated by large, light-blue arrows connecting the stages. A secondary, double-headed arrow appears in the final stage.

**Text Labels (Transcribed):**

* **"Tokenizer"**: Located in the top-left section, with a dark teal arrow pointing down from the first image panel to the first cube grid.

* **"Context + States"**: Located at the bottom-left, below the first cube grid. The word "States" is highlighted in red.

* **"Generation"**: Located at the bottom-center, below the second cube grid.

* **"Evaluation"**: Located at the bottom-right, below the third cube grid.

### Detailed Analysis

**Panel 1: Context + States (Left Section)**

* **Top (Visual Data):** A sequence of images (approximately 5-6 frames) is shown, with the foremost frame highlighted by a dark teal border. The image depicts a robot's white and black arm reaching towards a desk. On the desk is a grey tray containing various small objects (tools, parts). In the background, there is a shelving unit with drawers.

* **Bottom (Abstract Representation):** A 3D grid of cubes (approximately 4×4×4) is shown in a dark teal wireframe. A large, dark teal arrow labeled "Tokenizer" points from the highlighted image above to this grid, indicating the conversion of visual data into a tokenized format.

* **Flow:** A large, light-blue arrow points from this panel to the next.
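The diagram does not specify the tokenizer's architecture. As a minimal sketch, assume a VQ-style tokenizer that cuts a grayscale clip into non-overlapping spatiotemporal patches and assigns each patch to its nearest entry in a fixed codebook. Under that assumption, the mapping from frames to a roughly 4×4×4 grid of token ids could look like the code below. The patch sizes, the two-entry codebook, and the names `patchify`, `quantize`, and `tokenize` are all hypothetical; a learned tokenizer would replace the fixed codebook and raw-pixel distances with trained encoder features.

```python
from itertools import product

# Hypothetical VQ-style tokenizer sketch: fixed codebook, raw-pixel distances.
# A real tokenizer would use a learned encoder and a learned codebook.


def patchify(video, pt, ph, pw):
    """Split a T x H x W grayscale clip (nested lists) into non-overlapping
    pt x ph x pw patches, keyed by their grid coordinate (t, i, j)."""
    T, H, W = len(video), len(video[0]), len(video[0][0])
    if T % pt or H % ph or W % pw:
        raise ValueError("clip dimensions must be divisible by the patch size")
    patches = {}
    for t, i, j in product(range(T // pt), range(H // ph), range(W // pw)):
        patches[(t, i, j)] = [
            video[t * pt + dt][i * ph + di][j * pw + dj]
            for dt, di, dj in product(range(pt), range(ph), range(pw))
        ]
    return patches


def quantize(vec, codebook):
    """Return the index of the codebook entry nearest to vec (squared L2)."""
    return min(range(len(codebook)),
               key=lambda k: sum((a - b) ** 2 for a, b in zip(vec, codebook[k])))


def tokenize(video, codebook, pt=1, ph=2, pw=2):
    """Map a clip to a 3D grid of token ids, indexed grid[t][i][j]."""
    patches = patchify(video, pt, ph, pw)
    gt, gh, gw = len(video) // pt, len(video[0]) // ph, len(video[0][0]) // pw
    return [[[quantize(patches[(t, i, j)], codebook) for j in range(gw)]
             for i in range(gh)]
            for t in range(gt)]


# 4 frames of 8 x 8 pixels: left half bright (1.0), right half dark (0.0)
video = [[[1.0 if x < 4 else 0.0 for x in range(8)] for _ in range(8)] for _ in range(4)]
codebook = [[0.0] * 4, [1.0] * 4]  # entry 0 = dark patch, entry 1 = bright patch
grid = tokenize(video, codebook)   # 4 x 4 x 4 grid of token ids
print(grid[0][0])                  # [1, 1, 0, 0]
```

Each token here stands for a 1×2×2 patch, so 256 pixel values per frame collapse into 16 ids; a learned codebook would have many more entries, but the patch-then-quantize structure is the same.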

**Panel 2: Generation (Center Section)**

* **Top (Visual Data):** A similar sequence of images is shown, now with the foremost frame highlighted by a light green border. The robot arm's position has changed slightly, suggesting progression in the task.

* **Bottom (Abstract Representation):** A 3D grid of cubes, identical in structure to the first, is shown in a light green wireframe. This represents the generated state or prediction based on the tokenized context.

* **Flow:** A large, light-blue arrow points from this panel to the final panel.
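The diagram only shows that a generation step turns the context grid into a new (green) grid; it does not name the model. Assuming an autoregressive world model that predicts one token frame at a time, the generation loop has the shape sketched below. `majority_predictor` is a deliberately trivial, hypothetical stand-in for the learned network (it ignores actions entirely), and `rollout` is likewise an illustrative name; the point is the structure of feeding each prediction back in as context, not the predictor itself.

```python
from collections import Counter


def majority_predictor(context):
    """Hypothetical stand-in for a learned world model: predict each cell of the
    next token frame as its most common id across the context frames.
    Ties go to the id seen first, per Counter.most_common ordering."""
    rows, cols = len(context[0]), len(context[0][0])
    return [[Counter(frame[i][j] for frame in context).most_common(1)[0][0]
             for j in range(cols)]
            for i in range(rows)]


def rollout(context, predictor, steps):
    """Autoregressively generate `steps` token frames, appending each
    prediction to the context before predicting the next one."""
    frames = list(context)  # copy so the caller's context is not mutated
    generated = []
    for _ in range(steps):
        next_frame = predictor(frames)
        generated.append(next_frame)
        frames.append(next_frame)
    return generated


# Three 2 x 2 context frames of token ids; the last one is a noisy outlier.
context = [[[0, 1], [2, 3]], [[0, 1], [2, 3]], [[5, 1], [2, 4]]]
future = rollout(context, majority_predictor, steps=2)
print(future)  # [[[0, 1], [2, 3]], [[0, 1], [2, 3]]]
```

Stacking the generated frames along the time axis gives a 3D grid with the same layout as the context grid, which matches the figure's depiction of the green grid as "identical in structure to the first."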

**Panel 3: Evaluation (Right Section)**

* **Top (Visual Data):** Two images are shown side-by-side. The left image has a light green border (matching the "Generation" panel), and the right image has a dark grey border. A large, double-headed, light-blue arrow connects them, indicating a comparison or evaluation step between the generated state and a target or ground truth state.

* **Bottom (Abstract Representation):** Two 3D cube grids are shown side-by-side. The left grid is in light green wireframe (matching the "Generation" output), and the right grid is in dark grey wireframe. The same double-headed arrow connects them, mirroring the comparison shown in the visual data layer above.
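The figure does not specify which metrics drive the comparison. Assuming it is made at both levels the figure shows, two common choices are exact-match accuracy over token ids (bottom layer) and peak signal-to-noise ratio, PSNR = 10·log10(MAX² / MSE), over decoded pixels (top layer). The sketch below implements both in plain Python; `token_accuracy` and `psnr` are illustrative names, not functions from the system in the diagram.

```python
import math


def token_accuracy(pred, target):
    """Fraction of cells in two equally shaped 3D token grids whose ids match."""
    pairs = [(p, t)
             for pf, tf in zip(pred, target)
             for pr, tr in zip(pf, tf)
             for p, t in zip(pr, tr)]
    return sum(p == t for p, t in pairs) / len(pairs)


def psnr(pred, target, max_val=1.0):
    """Peak signal-to-noise ratio (dB) between two equally sized 2D frames
    with pixel values in [0, max_val]."""
    sq_errs = [(p - t) ** 2
               for pr, tr in zip(pred, target)
               for p, t in zip(pr, tr)]
    mse = sum(sq_errs) / len(sq_errs)
    return math.inf if mse == 0 else 10 * math.log10(max_val ** 2 / mse)


generated = [[[1, 2], [3, 4]]]
reference = [[[1, 2], [3, 0]]]
print(token_accuracy(generated, reference))  # 0.75
print(psnr([[0.0, 0.0], [0.0, 0.0]], [[0.1, 0.1], [0.1, 0.1]]))  # ~20.0 dB
```

Token accuracy rewards only exact matches, so it is strict for near-miss predictions; pixel metrics such as PSNR give partial credit but require decoding tokens back to frames first.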

### Key Observations

1. **Color-Coded Stages:** The diagram uses a consistent color scheme to link stages: dark teal for the initial "Context + States," light green for "Generation," and dark grey for the evaluation target.

2. **Parallel Representation:** The top (visual) and bottom (abstract) layers in each panel are presented in parallel, emphasizing that the tokenized grid is a direct representation of the visual scene.

3. **Evaluation is a Comparison:** The final stage is explicitly a comparison between two entities: the generated output (green) and a reference or ground truth (grey), applied at both the visual and token levels.

4. **Spatial Grounding:** The diagram has no separate legend; instead, colors are co-located consistently. The green border and green cubes appear together in the "Generation" panel, and the grey border and grey cubes appear together on the right side of the "Evaluation" panel.

### Interpretation

This diagram illustrates a core framework for a **vision-based robotic learning or planning system**. The process can be interpreted as follows:

1. **Tokenization as State Encoding:** The system first converts high-dimensional visual input (pixels from the robot's camera) into a lower-dimensional, structured "tokenized" state (the cube grid). Discretizing video this way is a common machine-learning technique: each token typically summarizes a small spatiotemporal patch, so downstream models process far fewer tokens than there are pixel values. The label "Context + States", with "States" highlighted in red, suggests that this token grid encodes both the environmental context and the current state of the robot and objects.

2. **Generative Prediction:** The "Generation" phase likely involves a model (e.g., a world model or policy network) that takes the tokenized context as input and predicts the next state or a sequence of actions. The output is another tokenized representation (the green grid).

3. **Evaluation via Comparison:** The final "Evaluation" phase compares the generated/predicted state (green) against a target state (grey). This target could be a desired goal state, a ground-truth next frame from training data, or the output of a different model. The double-headed arrow most plausibly denotes a loss function or similarity metric computed between the two representations. Such a comparison would supply the training signal for the generative model (via backpropagation) or serve to assess how well a robotic plan performs.
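If the double-headed arrow does stand for a training loss, a standard choice for discrete token targets is the cross-entropy between the model's predicted distribution over codebook entries at each grid position and the reference token id at that position. The sketch below computes that value in plain Python; `token_cross_entropy` is a hypothetical name, and in practice a deep-learning framework would compute both the loss and its gradients for backpropagation.

```python
import math


def token_cross_entropy(logits, targets):
    """Mean cross-entropy between per-position logits and ground-truth token ids.

    logits:  N score vectors, one per token position (length = codebook size)
    targets: N integer token ids taken from the reference (grey) grid
    """
    total = 0.0
    for scores, target in zip(logits, targets):
        m = max(scores)  # shift by the max for a numerically stable log-sum-exp
        log_z = m + math.log(sum(math.exp(s - m) for s in scores))
        total += log_z - scores[target]  # equals -log(softmax(scores)[target])
    return total / len(targets)


print(token_cross_entropy([[0.0, 0.0, 0.0, 0.0]], [2]))  # log(4) ≈ 1.386
print(token_cross_entropy([[10.0, -10.0]], [0]))         # close to 0
print(token_cross_entropy([[10.0, -10.0]], [1]))         # about 20
```

A uniform prediction over four codes costs log 4, a confident correct prediction costs almost nothing, and a confident wrong one is penalized heavily, which is the behavior that pushes the green grid toward the grey one during training.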

**Underlying Concept:** The diagram emphasizes a **learned, abstract state-space approach** to robotics. Instead of operating directly on pixels, the system learns a compressed representation (tokens) and performs prediction and evaluation within this latent space. Working with a small grid of tokens rather than full frames is typically cheaper computationally, and it can make the model more robust to pixel-level noise. The parallel visual and token layers suggest that the learned representation is meant to stay faithful to the scene it encodes.
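To make the efficiency argument concrete, the arithmetic below compares the size of a raw clip with that of its token grid. The frame count roughly follows the five to six frames visible in the figure, but the 256 × 256 RGB resolution and the 1024-entry codebook are assumptions chosen only for illustration; the diagram gives neither. `sequence_sizes` is a hypothetical helper.

```python
import math


def sequence_sizes(frames, height, width, channels, grid, codebook_size):
    """Compare a raw clip with its token grid.

    Returns (raw pixel values, number of tokens, raw bits / token bits),
    assuming 8-bit pixels and ceil(log2(codebook_size)) bits per token id.
    """
    raw_values = frames * height * width * channels
    n_tokens = math.prod(grid)
    bits_per_token = math.ceil(math.log2(codebook_size))
    ratio = (raw_values * 8) / (n_tokens * bits_per_token)
    return raw_values, n_tokens, ratio


# Illustrative assumptions: 6 RGB frames at 256 x 256, a 4 x 4 x 4 grid, 1024 codes.
raw, tokens, ratio = sequence_sizes(6, 256, 256, 3, (4, 4, 4), 1024)
print(raw, tokens, ratio)  # 1179648 64 14745.6
```

Under these assumptions a sequence model handles 64 tokens instead of about 1.18 million pixel values, roughly 14,700 times fewer bits. The exact ratio shifts with resolution and codebook size, but a gap of this scale is the main reason latent-space prediction tends to be cheaper.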