## Line Chart: Model Accuracy vs. Reasoning Complexity

### Overview

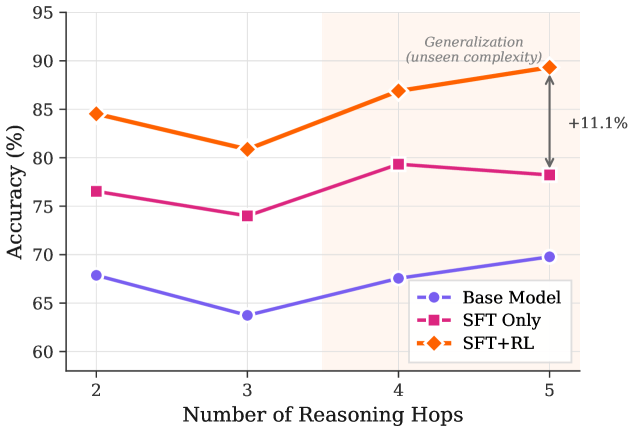

The image is a line chart comparing the accuracy of three models (Base Model, SFT Only, and SFT+RL) on a task that requires an increasing number of reasoning steps. It shows how accuracy changes as reasoning complexity, measured in "hops", increases, with a focus on generalization to higher, unseen complexity levels.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:** Labeled **"Number of Reasoning Hops"**. It has discrete integer markers at 2, 3, 4, and 5.

* **Y-Axis:** Labeled **"Accuracy (%)"**. The scale runs from 60 to 95, with major gridlines at intervals of 5% (60, 65, 70, 75, 80, 85, 90, 95).

* **Legend:** Located in the bottom-right corner of the plot area. It defines three data series:

    * **Base Model:** Represented by a purple line with circular markers (●).

    * **SFT Only:** Represented by a pink/magenta line with square markers (■).

    * **SFT+RL:** Represented by an orange line with diamond markers (◆).

* **Annotations:**

    * A shaded beige region spans the x-axis from 4 to 5 hops. Text within this region reads: **"Generalization (unseen complexity)"**.

    * A double-headed vertical arrow connects the final data points of the "SFT+RL" and "Base Model" series at x=5. A label next to it reads: **"+11.1%"**.
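The components listed above can be approximated in a short plotting sketch. This is an illustrative re-creation, not the code that produced the original figure: the names `HOPS`, `SERIES`, and `plot_chart` are hypothetical, the colors are rough matches for the described ones, the accuracy values are the approximate readings given under Detailed Analysis, and matplotlib is assumed to be installed.

```python
# Approximate readings taken from the chart description; not exact source data.
HOPS = [2, 3, 4, 5]
SERIES = {
    "Base Model": {"acc": [68, 64, 68, 70], "color": "purple", "marker": "o"},
    "SFT Only": {"acc": [77, 74, 79, 78], "color": "magenta", "marker": "s"},
    "SFT+RL": {"acc": [85, 81, 87, 89], "color": "orange", "marker": "D"},
}


def plot_chart(path="accuracy_vs_hops.png"):
    """Draw an approximate re-creation of the chart and save it to `path`."""
    import matplotlib
    matplotlib.use("Agg")  # render off-screen so no display is needed
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots(figsize=(7, 4.5))
    for name, s in SERIES.items():
        ax.plot(HOPS, s["acc"], color=s["color"], marker=s["marker"], label=name)

    # Shaded region for the hop counts labeled as unseen complexity.
    ax.axvspan(4, 5, color="beige", alpha=0.6)
    ax.text(4.5, 94, "Generalization\n(unseen complexity)", ha="center", va="top")

    # Double-headed arrow at x=5 between SFT+RL and Base Model, as described.
    top = SERIES["SFT+RL"]["acc"][-1]
    bottom = SERIES["Base Model"]["acc"][-1]
    ax.annotate("", xy=(5, top), xytext=(5, bottom),
                arrowprops={"arrowstyle": "<->"})
    ax.text(4.95, (top + bottom) / 2, "+11.1%", ha="right", va="center")

    ax.set_xlabel("Number of Reasoning Hops")
    ax.set_ylabel("Accuracy (%)")
    ax.set_xticks(HOPS)
    ax.set_ylim(60, 95)
    ax.set_yticks(list(range(60, 100, 5)))
    ax.grid(True, axis="y", alpha=0.4)
    ax.legend(loc="lower right")
    fig.savefig(path, dpi=150, bbox_inches="tight")
    plt.close(fig)
    return path
```

Calling `plot_chart("chart.png")` writes a PNG that should resemble the described layout. Exact line positions will differ from the original wherever the approximate readings are off.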

### Detailed Analysis

**Data Series Trends and Approximate Values:**

1. **Base Model (Purple, ●):**

    * **Trend:** Shows a slight dip from 2 to 3 hops, followed by a gradual, steady increase from 3 to 5 hops.

    * **Data Points (Approximate):**

        * 2 Hops: ~68%

        * 3 Hops: ~64%

        * 4 Hops: ~68%

        * 5 Hops: ~70%

2. **SFT Only (Pink, ■):**

    * **Trend:** Dips from 2 to 3 hops like the Base Model, recovers to its peak at 4 hops, then slips by about a point at 5 hops. It consistently performs better than the Base Model.

    * **Data Points (Approximate):**

        * 2 Hops: ~77%

        * 3 Hops: ~74%

        * 4 Hops: ~79%

        * 5 Hops: ~78%

3. **SFT+RL (Orange, ◆):**

    * **Trend:** Also exhibits a dip from 2 to 3 hops, but then shows the strongest upward trajectory from 3 to 5 hops. It is the top-performing model at every data point.

    * **Data Points (Approximate):**

        * 2 Hops: ~85%

        * 3 Hops: ~81%

        * 4 Hops: ~87%

        * 5 Hops: ~89%

**Performance Gap:**

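The annotation "+11.1%" marks the advantage of the **SFT+RL** model at the highest complexity level (5 reasoning hops), described here as its gap over the **Base Model**. The approximate readings do not match that pairing: they place SFT+RL about 19 points above the Base Model at 5 hops and about 11 points above SFT Only, so either the readings or the identification of the arrow's lower endpoint may be off. The gap between the top (SFT+RL) and middle (SFT Only) lines stays at roughly 7 to 8 points from 2 to 4 hops and widens to about 11 points at 5 hops.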
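The pairwise gaps can be checked with a few lines of arithmetic. This is a minimal sketch that assumes the approximate readings listed in the data points are close to the plotted values. The names `ACC` and `gap` are illustrative, and the results are differences in percentage points, not relative improvements.

```python
# Approximate accuracy readings (%) at 2, 3, 4, and 5 hops.
HOPS = [2, 3, 4, 5]
ACC = {
    "Base Model": [68, 64, 68, 70],
    "SFT Only": [77, 74, 79, 78],
    "SFT+RL": [85, 81, 87, 89],
}


def gap(upper, lower):
    """Per-hop difference in percentage points between two series."""
    return [u - l for u, l in zip(ACC[upper], ACC[lower])]


rl_vs_base = gap("SFT+RL", "Base Model")
rl_vs_sft = gap("SFT+RL", "SFT Only")
sft_vs_base = gap("SFT Only", "Base Model")

for hops, a, b, c in zip(HOPS, rl_vs_base, rl_vs_sft, sft_vs_base):
    print(f"{hops} hops: SFT+RL-Base={a:+d}  SFT+RL-SFT={b:+d}  SFT-Base={c:+d}")
```

Because the inputs are visual estimates, each difference carries an uncertainty of at least a point or so.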

### Key Observations

1. **Universal Dip at 3 Hops:** All three models lose roughly 3 to 4 points of accuracy when moving from 2 to 3 reasoning hops, suggesting this specific increase in complexity poses a common challenge.

2. **Consistent Performance Hierarchy:** The ranking of the models (SFT+RL > SFT Only > Base Model) is maintained across all tested levels of reasoning complexity.

3. **Generalization Performance:** The shaded "Generalization (unseen complexity)" region indicates that the 4- and 5-hop tasks were likely not seen during training. All three models score higher at 4 hops than at 3 hops. Base Model and SFT+RL continue to improve at 5 hops, while SFT Only slips by about a point. This suggests the models generalize to the unseen hop counts, most clearly SFT+RL.

4. **Widening Advantage:** The gap between SFT+RL and the Base Model is largest in the generalization region (about 19 points at 4 and 5 hops versus about 17 points at 2 and 3 hops), suggesting the benefit of combining SFT and RL holds or grows slightly with task difficulty.

### Interpretation

This chart supports the effectiveness of a training pipeline that combines Supervised Fine-Tuning (SFT) with Reinforcement Learning (RL) for complex reasoning tasks.

* **The Data Suggests:** SFT alone lifts accuracy by roughly 8 to 11 points over the base model, and adding RL on top of SFT yields a further 7 to 11 points, with the largest additional gain at 5 hops. The dip at 3 hops might indicate a point where the reasoning structure becomes non-trivial, challenging all models before they adapt to longer chains.

* **Element Relationships:** The x-axis (number of reasoning hops) is the complexity variable, and the y-axis (accuracy) is the measured outcome. The legend distinguishes the training methods being compared, and the annotations call out the main finding: the advantage of SFT+RL at higher, unseen complexity.

* **Notable Anomaly/Trend:** The most significant trend is not just the higher accuracy of SFT+RL, but its steeper positive slope from 3 to 5 hops: a rise of about 8 points, compared with about 6 for the Base Model and about 4 for SFT Only. This suggests it is not only better but also *more robust* to increasing complexity, a property that matters for problems requiring multi-step deduction. The "+11.1%" annotation gives a concrete measure of this advantage at the limit of the tested data.
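The slope comparison from 3 to 5 hops can be made explicit with a small calculation. This sketch again assumes the approximate readings from the data points. The helper `mean_slope` is an illustrative name, and the result is the average change in accuracy (percentage points) per additional hop.

```python
# Approximate accuracy readings (%) at 2, 3, 4, and 5 hops.
HOPS = [2, 3, 4, 5]
ACC = {
    "Base Model": [68, 64, 68, 70],
    "SFT Only": [77, 74, 79, 78],
    "SFT+RL": [85, 81, 87, 89],
}


def mean_slope(series, start_hop, end_hop):
    """Average accuracy change per hop between two hop counts."""
    i, j = HOPS.index(start_hop), HOPS.index(end_hop)
    return (series[j] - series[i]) / (end_hop - start_hop)


slopes = {name: mean_slope(acc, 3, 5) for name, acc in ACC.items()}

for name, slope in slopes.items():
    print(f"{name}: {slope:+.1f} points per hop (3 to 5 hops)")
```

With only two hop counts in the shaded region and readings estimated by eye, these slopes are indicative at best.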