
## Line Chart: Answer Accuracy vs. Layer for Llama Models

### Overview

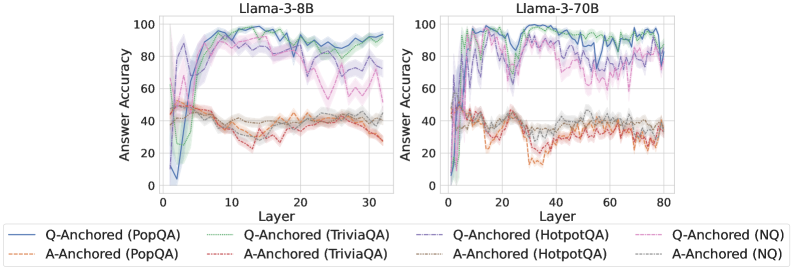

This image presents two line charts comparing answer accuracy across layers for two Llama models, Llama-3-8B and Llama-3-70B. The comparison covers two anchoring strategies (question-anchored and answer-anchored) on four question-answering (QA) datasets. The x-axis is the layer number and the y-axis is answer accuracy, from 0 to 100. Each chart contains eight lines, one for each combination of dataset and anchoring strategy.

### Components/Axes

* **X-axis:** Layer (ranging from approximately 0 to 30 for Llama-3-8B and 0 to 80 for Llama-3-70B).

* **Y-axis:** Answer Accuracy (ranging from 0 to 100).

* **Models:** Llama-3-8B (left chart), Llama-3-70B (right chart).

* **Anchoring Strategies/Datasets (Legend):**
    * Q-Anchored (PopQA) - Blue line
    * A-Anchored (PopQA) - Orange line
    * Q-Anchored (TriviaQA) - Purple line
    * A-Anchored (TriviaQA) - Brown line
    * Q-Anchored (HotpotQA) - Light Green dashed line
    * A-Anchored (HotpotQA) - Yellow dashed line
    * Q-Anchored (NQ) - Teal line
    * A-Anchored (NQ) - Gray line

### Detailed Analysis or Content Details

**Llama-3-8B (Left Chart):**

* **Q-Anchored (PopQA):** Starts at approximately 0% accuracy at layer 0, rapidly increases to around 90-95% accuracy by layer 10, and remains relatively stable around 90-95% for the rest of the layers.

* **A-Anchored (PopQA):** Starts at approximately 0% accuracy at layer 0, increases to around 40-50% accuracy by layer 10, and plateaus around 40-50% for the remaining layers.

* **Q-Anchored (TriviaQA):** Starts at approximately 0% accuracy at layer 0, increases to around 80-90% accuracy by layer 10, and remains relatively stable around 80-90% for the rest of the layers.

* **A-Anchored (TriviaQA):** Starts at approximately 0% accuracy at layer 0, increases to around 50-60% accuracy by layer 10, and plateaus around 50-60% for the remaining layers.

* **Q-Anchored (HotpotQA):** Starts at approximately 0% accuracy at layer 0, increases to around 80-90% accuracy by layer 10, and remains relatively stable around 80-90% for the rest of the layers.

* **A-Anchored (HotpotQA):** Starts at approximately 0% accuracy at layer 0, increases to around 40-50% accuracy by layer 10, and plateaus around 40-50% for the remaining layers.

* **Q-Anchored (NQ):** Starts at approximately 0% accuracy at layer 0, increases to around 80-90% accuracy by layer 10, and remains relatively stable around 80-90% for the rest of the layers.

* **A-Anchored (NQ):** Starts at approximately 0% accuracy at layer 0, increases to around 40-50% accuracy by layer 10, and plateaus around 40-50% for the remaining layers.
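The plateau ranges above can be turned into a rough estimate of the gap between the two anchoring strategies. The Python sketch below assumes that the midpoint of each visually estimated range is representative. The dictionary `PLATEAU_8B` and the helpers `midpoint` and `anchoring_gaps` are hypothetical names introduced for illustration, and the numbers are the approximate readings from the bullets, not measured results.

```python
# Approximate plateau ranges (percent) for the Llama-3-8B panel, copied from the
# bullets above. These are visual estimates read off the chart, not measured values.
PLATEAU_8B = {
    "PopQA": {"Q": (90, 95), "A": (40, 50)},
    "TriviaQA": {"Q": (80, 90), "A": (50, 60)},
    "HotpotQA": {"Q": (80, 90), "A": (40, 50)},
    "NQ": {"Q": (80, 90), "A": (40, 50)},
}


def midpoint(rng):
    """Midpoint of a (low, high) range; assumed to be a fair point estimate."""
    low, high = rng
    return (low + high) / 2


def anchoring_gaps(plateaus):
    """Q-anchored minus A-anchored plateau, in percentage points, per dataset."""
    return {
        dataset: midpoint(ranges["Q"]) - midpoint(ranges["A"])
        for dataset, ranges in plateaus.items()
    }


if __name__ == "__main__":
    for dataset, gap in anchoring_gaps(PLATEAU_8B).items():
        print(f"{dataset}: Q-A gap of roughly {gap:.1f} points")
```

Under these assumptions the estimated gap is about 30 points for TriviaQA, whose A-anchored curve plateaus highest, and about 40 to 47.5 points for the other three datasets.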

**Llama-3-70B (Right Chart):**

* **Q-Anchored (PopQA):** Starts at approximately 0% accuracy at layer 0, rapidly increases to around 90-95% accuracy by layer 10, and remains relatively stable around 90-95% for the rest of the layers.

* **A-Anchored (PopQA):** Starts at approximately 0% accuracy at layer 0, increases to around 40-50% accuracy by layer 10, and plateaus around 40-50% for the remaining layers.

* **Q-Anchored (TriviaQA):** Starts at approximately 0% accuracy at layer 0, increases to around 80-90% accuracy by layer 10, and remains relatively stable around 80-90% for the rest of the layers.

* **A-Anchored (TriviaQA):** Starts at approximately 0% accuracy at layer 0, increases to around 50-60% accuracy by layer 10, and plateaus around 50-60% for the remaining layers.

* **Q-Anchored (HotpotQA):** Starts at approximately 0% accuracy at layer 0, increases to around 80-90% accuracy by layer 10, and remains relatively stable around 80-90% for the rest of the layers.

* **A-Anchored (HotpotQA):** Starts at approximately 0% accuracy at layer 0, increases to around 40-50% accuracy by layer 10, and plateaus around 40-50% for the remaining layers.

* **Q-Anchored (NQ):** Starts at approximately 0% accuracy at layer 0, increases to around 80-90% accuracy by layer 10, and remains relatively stable around 80-90% for the rest of the layers.

* **A-Anchored (NQ):** Starts at approximately 0% accuracy at layer 0, increases to around 40-50% accuracy by layer 10, and plateaus around 40-50% for the remaining layers.

### Key Observations

* **Q-Anchored methods consistently outperform A-Anchored methods** across all QA datasets and both model sizes.

* **Accuracy generally increases rapidly in the initial layers (0-10)** and then plateaus.

* **The 70B model shows similar trends to the 8B model**, but the x-axis extends to layer 80, indicating a deeper model.

* **PopQA, TriviaQA, HotpotQA, and NQ datasets all exhibit similar accuracy curves** for the Q-Anchored methods.

* **A-Anchored methods consistently plateau at a lower accuracy level** (around 40-60%) compared to Q-Anchored methods (around 80-95%).
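The "rapid rise, then plateau" shape can be made concrete with a schematic Python sketch. It assumes a linear rise from 0% at layer 0 to the plateau at layer 10. The charts only show that the plateau is reached by about layer 10, not the exact shape of the rise. It also assumes depths of 32 layers for Llama-3-8B and 80 layers for Llama-3-70B. `schematic_curve` and `plateau_onset` are hypothetical helpers, not part of any library, and the curve is an idealization rather than data from the figure.

```python
def schematic_curve(plateau, n_layers, ramp_end=10):
    """Schematic accuracy-per-layer curve (percent).

    Assumption: accuracy rises linearly from 0% at layer 0 to `plateau`
    at layer `ramp_end`, then stays flat for all remaining layers.
    """
    if n_layers <= ramp_end:
        raise ValueError("n_layers must be greater than ramp_end")
    return [plateau * min(layer, ramp_end) / ramp_end for layer in range(n_layers)]


def plateau_onset(curve, tol=1e-9):
    """Return the first layer index whose value is within `tol` of the final value."""
    final = curve[-1]
    for layer, value in enumerate(curve):
        if abs(value - final) <= tol:
            return layer
    # Fallback only; the last element always matches itself, so the loop returns first.
    return len(curve) - 1


if __name__ == "__main__":
    # Assumed depths: 32 layers (Llama-3-8B) and 80 layers (Llama-3-70B).
    for name, depth in [("Llama-3-8B", 32), ("Llama-3-70B", 80)]:
        # 92.5 is the midpoint of the ~90-95% Q-Anchored (PopQA) plateau.
        curve = schematic_curve(92.5, depth)
        onset = plateau_onset(curve)
        print(f"{name}: plateau from layer {onset} ({onset / depth:.1%} of depth)")
```

If the layer-10 readings are accurate, the plateau starts about 10/32 ≈ 31% of the way through Llama-3-8B but only 10/80 = 12.5% of the way through Llama-3-70B. The larger model would therefore reach its plateau at a proportionally earlier depth.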

### Interpretation

The data suggest that question-anchored methods yield substantially higher answer accuracy than answer-anchored methods in both Llama models. One possible explanation is that anchoring on the question provides more relevant context for producing an accurate answer, although the charts alone do not establish the cause.

Accuracy rises sharply over roughly the first ten layers, which suggests that early layers capture much of the information these tasks depend on. The plateau after about layer 10 suggests either that later layers contribute little additional accuracy on this measure or that performance has saturated on these datasets.

The curves are similar across all four datasets, so the pattern appears to be a general property of the models rather than of any single dataset. The 70B model shows the same trends as the 8B model, which suggests that increasing model size alone does not substantially improve accuracy or close the gap between the two anchoring strategies. This consistent gap between Q- and A-anchored methods highlights the importance of choosing the anchoring strategy carefully when training and deploying large language models for question answering.