TECHNICAL ASSET FINGERPRINT

2322f6cd5f7a6f94567a57a4


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Bar Chart and Flow Diagram: Transformer Publication Trend and Model Compression Pipeline

### Overview

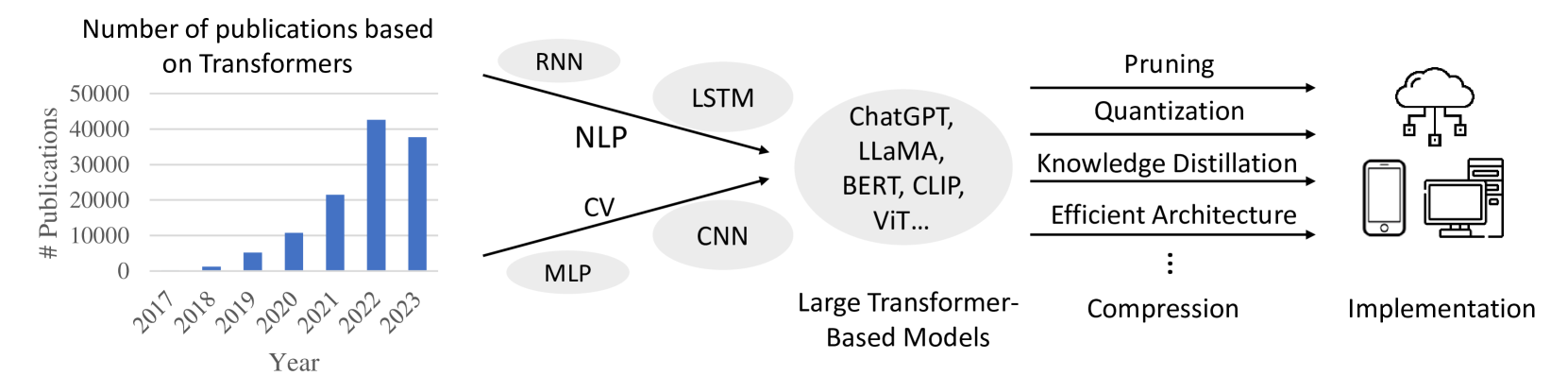

The image presents a combination of a bar chart illustrating the increasing number of publications based on Transformers over the years, and a diagram depicting the pipeline from various neural network architectures to large transformer-based models, their compression techniques, and final implementation.

### Components/Axes

**Bar Chart:**

* **Title:** Number of publications based on Transformers

* **Y-axis:** # Publications, ranging from 0 to 50000, with increments of 10000.

* **X-axis:** Year, ranging from 2017 to 2023.

**Diagram:**

* **Input Models:** RNN, LSTM, CNN, MLP

* **Intermediate Stage:** Large Transformer-Based Models (ChatGPT, LLaMA, BERT, CLIP, ViT...)

* **Compression Techniques:** Pruning, Quantization, Knowledge Distillation, Efficient Architecture

* **Output:** Implementation (Cloud, Mobile, Desktop)

### Detailed Analysis

**Bar Chart Data:**

* **2017:** Approximately 0 publications.

* **2018:** Approximately 1000 publications.

* **2019:** Approximately 5000 publications.

* **2020:** Approximately 8000 publications.

* **2021:** Approximately 11000 publications.

* **2022:** Approximately 21000 publications.

* **2023:** Approximately 42000 publications.
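The readings above can be checked for how steady the growth actually is. The following is a minimal sketch, assuming the approximate bar heights listed in this description; the function name `growth_factors` is hypothetical and used only for illustration.

```python
# Approximate bar heights as listed in this description (not exact counts).
readings = {2017: 0, 2018: 1000, 2019: 5000, 2020: 8000,
            2021: 11000, 2022: 21000, 2023: 42000}

def growth_factors(counts):
    """Return {year: count / previous year's count}.

    Years whose predecessor is zero are skipped, because the ratio is undefined.
    """
    years = sorted(counts)
    factors = {}
    for prev, cur in zip(years, years[1:]):
        if counts[prev] > 0:
            factors[cur] = counts[cur] / counts[prev]
    return factors

factors = growth_factors(readings)
for year, factor in factors.items():
    print(f"{year}: x{factor:.2f}")
```

A strictly exponential series would give the same factor every year. With these readings the factors vary between roughly 1.4 and 5.0, so "exponential" is best read as a loose description of the trend rather than a fitted model.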

**Diagram Flow:**

1. **Input Models:**

* RNN and LSTM are associated with NLP (Natural Language Processing).

* CNN and MLP are associated with CV (Computer Vision).

2. **Large Transformer-Based Models:**

* The input models feed into a stage of Large Transformer-Based Models, including examples such as ChatGPT, LLaMA, BERT, CLIP, and ViT.

3. **Compression Techniques:**

* These large models are then subjected to compression techniques like Pruning, Quantization, Knowledge Distillation, and Efficient Architecture.

4. **Implementation:**

* Finally, the compressed models are implemented on various platforms, including cloud, mobile devices, and desktop computers.
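Of the compression techniques named in the diagram, pruning is the simplest to illustrate. The sketch below shows unstructured magnitude pruning on a flat list of weights. This is one common variant, chosen here as an assumption; the figure does not say which form of pruning is meant, and the name `magnitude_prune` is hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    k = int(len(weights) * sparsity)  # number of weights to remove
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]

weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)
```

In practice pruning is usually applied per layer to tensors and followed by fine-tuning to recover accuracy; the flat list here only demonstrates the selection rule.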

### Key Observations

* The number of publications based on Transformers rose from roughly 0 in 2017 to about 42000 in 2023, roughly doubling in each of the last two years (about 11000, then 21000, then 42000).

* The diagram illustrates a common pipeline for developing and deploying large transformer-based models, emphasizing the importance of compression techniques for practical implementation.

### Interpretation

The data suggests rapidly growing research activity around Transformers, which likely reflects the expanding capabilities and applications of transformer-based models in various domains. The diagram traces the progression from earlier neural network architectures (RNN, LSTM, CNN, MLP) to large transformer models and then to compressed implementations. This indicates a trend towards larger, more complex models that must be optimized before they can be deployed on diverse platforms.

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

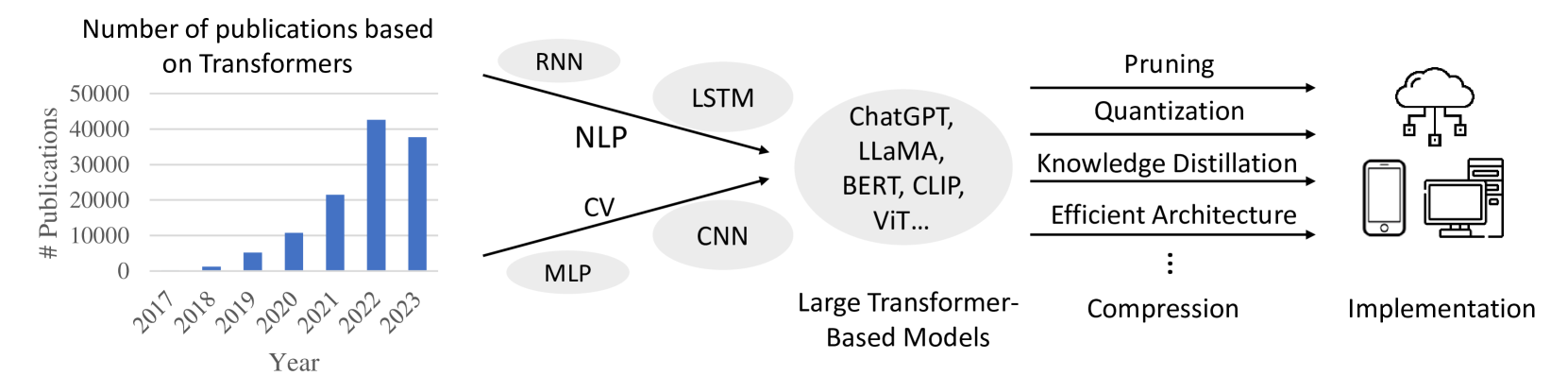

## Bar Chart and Flow Diagram: Publications on Transformers and Their Development

### Overview

This image presents two distinct but related sections. The left section is a bar chart showing the number of publications based on Transformers over the years from 2017 to 2023. The right section is a flow diagram illustrating the progression from various model types and applications to large Transformer-based models, followed by compression techniques, and finally, implementation on different devices.

### Components/Axes

**Bar Chart:**

* **Title:** "Number of publications based on Transformers"

* **Y-axis Title:** "# Publications"

* **Y-axis Scale:** Ranges from 0 to 50,000, with major tick marks at 0, 10,000, 20,000, 30,000, 40,000, and 50,000.

* **X-axis Title:** "Year"

* **X-axis Markers:** 2017, 2018, 2019, 2020, 2021, 2022, 2023.

**Flow Diagram:**

* **Input Categories (Left):**

    * Ovals containing: "RNN", "NLP", "CV", "CNN", "MLP". Arrows point from these towards "Large Transformer-Based Models".

* **Intermediate Stage:**

    * A large oval labeled "Large Transformer-Based Models" containing text: "ChatGPT, LLaMA, BERT, CLIP, ViT...".

* **Compression Techniques (Middle):**

    * A list of techniques with arrows pointing from "Large Transformer-Based Models" to them, and then to "Implementation":

        * "Pruning"

        * "Quantization"

        * "Knowledge Distillation"

        * "Efficient Architecture"

        * "..." (ellipsis indicating more techniques)

        * "Compression" (positioned below the ellipsis, most likely the group label for the techniques above).

* **Output Stage (Right):**

    * Icons representing implementation:

        * A cloud icon with connected squares, representing cloud-based implementation.

        * A smartphone icon.

        * A desktop computer icon.

    * A label below these icons: "Implementation".

### Detailed Analysis

**Bar Chart Data:**

The bar chart displays the following approximate publication counts for each year:

* **2017:** Approximately 1,000 publications.

* **2018:** Approximately 2,000 publications.

* **2019:** Approximately 8,000 publications.

* **2020:** Approximately 22,000 publications.

* **2021:** Approximately 42,000 publications.

* **2022:** Approximately 42,000 publications.

* **2023:** Approximately 38,000 publications.

**Flow Diagram Progression:**

The flow diagram illustrates a conceptual pathway:

1. **Foundation Models/Applications:** Traditional model types and application areas like RNN, NLP, CV, CNN, and MLP are shown as inputs that contribute to or are related to the development of Transformer models.

2. **Emergence of Large Transformer Models:** These foundational elements lead to the development of significant Transformer-based models, exemplified by names like ChatGPT, LLaMA, BERT, CLIP, and ViT.

3. **Optimization and Efficiency:** To make these large models practical, various compression and efficiency techniques are applied, including Pruning, Quantization, Knowledge Distillation, and the development of Efficient Architectures. The ellipsis and "Compression" label suggest this is a multifaceted area.

4. **Deployment:** The final stage is "Implementation," depicted by icons for cloud, mobile, and desktop/server environments, indicating the diverse platforms where these optimized Transformer models can be deployed.

### Key Observations

* **Exponential Growth in Publications:** The bar chart shows a dramatic increase in the number of publications related to Transformers, particularly from 2019 (about 8,000) to 2021 (about 42,000), indicating a surge in research and development in this area.

* **Peak and Slight Decline:** Publications plateau at about 42,000 in 2021 and 2022, then fall to about 38,000 in 2023. The decrease could be a temporary fluctuation, a sign of a maturing research field, or a shift in focus.

* **Interconnectedness of AI Fields:** The flow diagram highlights how older AI paradigms (RNN, CNN, MLP) and application domains (NLP, CV) paved the way for or are integrated with the rise of Transformer models.

* **Focus on Practicality:** The inclusion of compression techniques underscores the ongoing effort to make powerful Transformer models more efficient and deployable across various hardware and software environments.

### Interpretation

The image effectively communicates the trajectory of Transformer-based models in artificial intelligence. The bar chart quantifies the explosive growth in research interest and output concerning Transformers, demonstrating their significant impact on the AI landscape. The rapid ascent from 2019 to 2021 suggests a paradigm shift driven by the capabilities of these models.

The flow diagram provides a conceptual framework for understanding the evolution and application of Transformers. It suggests that while foundational AI concepts and models were crucial, the advent of large Transformer architectures like BERT and GPT has revolutionized the field. The subsequent emphasis on compression and efficient implementation highlights the practical challenges and ongoing innovations required to translate these powerful models into real-world applications across diverse platforms, from cloud services to personal devices. The diagram implies a progression from theoretical development and model creation to optimization and widespread deployment, reflecting the maturity and impact of Transformer technology. The slight dip in publications in 2023, while needing further context, could indicate a stabilization after rapid growth or a transition to more applied research and deployment rather than foundational exploration.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Transformer Publication Growth & Implementation

### Overview

The image is a diagram illustrating the growth in publications related to Transformer models from 2017 to 2023, and the subsequent methods for implementing these models. The diagram is split into three main sections: a bar chart showing publication numbers, a central node representing Large Transformer-Based Models and their connections to various NLP and CV architectures, and a right-hand side depicting implementation techniques.

### Components/Axes

* **Left Section (Bar Chart):**

    * X-axis: Year (2017, 2018, 2019, 2020, 2021, 2022, 2023)

    * Y-axis: # Publications (Scale from 0 to 50000)

* **Central Section (Node Diagram):**

    * Central Node: "Large Transformer-Based Models"

    * Connected Nodes: "NLP", "CV"

    * Sub-Nodes (connected to NLP): "RNN", "LSTM"

    * Sub-Nodes (connected to CV): "CNN"

    * Additional Node: "MLP"

    * Examples within the "Large Transformer-Based Models" node: "ChatGPT, LLaMA, BERT, CLIP, ViT..."

* **Right Section (Implementation):**

    * "Compression"

    * "Implementation"

    * Compression Techniques: "Pruning", "Quantization", "Knowledge Distillation", "Efficient Architecture", "..."

    * Arrows indicate flow/relationship between components.

### Detailed Analysis

* **Publication Growth (Bar Chart):**

* 2017: Approximately 1000 publications.

* 2018: Approximately 2000 publications.

* 2019: Approximately 4000 publications.

* 2020: Approximately 12000 publications.

* 2021: Approximately 25000 publications.

* 2022: Approximately 35000 publications.

* 2023: Approximately 33000 publications.

* The trend shows a steep increase in publications from 2017 to 2022, with a slight decrease in 2023.

* **Model Architecture Connections:**

* NLP (Natural Language Processing) is connected to RNN (Recurrent Neural Networks) and LSTM (Long Short-Term Memory).

* CV (Computer Vision) is connected to CNN (Convolutional Neural Networks).

* MLP (Multilayer Perceptron) is directly connected to "Large Transformer-Based Models".

* **Implementation Flow:**

* "Large Transformer-Based Models" are compressed using techniques like Pruning, Quantization, Knowledge Distillation, and Efficient Architecture.

* Compressed models are then implemented, with icons representing cloud servers and mobile devices.
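Quantization, the second technique in the implementation flow, can be illustrated with a small round trip. The sketch below assumes simple min-max (affine) quantization to unsigned 8-bit integers, which is only one of several schemes; the figure does not specify one, and the names `quantize` and `dequantize` are hypothetical.

```python
def quantize(values, bits=8):
    """Map floats to unsigned `bits`-bit integers using the min-max range.

    Returns (integers, scale, offset) so the values can be approximately restored.
    """
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1              # 255 for 8 bits
    scale = (hi - lo) / levels if hi > lo else 1.0
    q = [round((v - lo) / scale) for v in values]
    return q, scale, lo

def dequantize(q, scale, offset):
    """Approximate the original floats from their integer codes."""
    return [offset + scale * x for x in q]

values = [-1.0, -0.25, 0.0, 0.5, 1.0]
q, scale, offset = quantize(values)
restored = dequantize(q, scale, offset)
max_error = max(abs(a - b) for a, b in zip(values, restored))
print(q, max_error)
```

Each value is stored in one byte instead of four (for 32-bit floats), at the cost of a rounding error of at most half a quantization step.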

### Key Observations

* The number of publications on Transformers has increased dramatically in recent years, peaking in 2022.

* Transformers are being applied to both NLP and CV tasks, domains previously served by architectures such as RNNs, LSTMs, and CNNs.

* Model compression is a crucial step in deploying large Transformer models, enabling implementation on resource-constrained devices.

* The diagram highlights the progression from research (publications) to practical application (implementation).

### Interpretation

The diagram illustrates the rapid growth and increasing importance of Transformer-based models in the field of artificial intelligence. The surge in publications indicates a high level of research activity and innovation. The connections between Transformers and established architectures suggest that Transformers are not replacing existing methods entirely, but rather building upon them. The emphasis on model compression highlights the challenges of deploying these large models in real-world applications, and the need for efficient implementation strategies. The inclusion of cloud and mobile device icons suggests a desire to make these models accessible across a wide range of platforms. The slight dip in publications in 2023 could indicate a maturation of the field, with a shift from rapid exploration to more focused refinement and application. The diagram effectively communicates the lifecycle of Transformer models, from initial research to practical deployment.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Composite Diagram: Evolution and Deployment of Transformer-Based AI Models

### Overview

The image is a composite technical diagram illustrating the growth of Transformer-based research, the evolution of AI model architectures leading to large Transformer-based models, and the subsequent pipeline for compressing and deploying these models. It consists of three main sections arranged horizontally from left to right: a bar chart, a flow diagram of model evolution, and a deployment pipeline.

### Components/Axes

**1. Left Section: Bar Chart**

* **Title:** "Number of publications based on Transformers"

* **X-axis:** Labeled "Year". Categories: 2017, 2018, 2019, 2020, 2021, 2022, 2023.

* **Y-axis:** Labeled "# Publications". Scale markers: 0, 10000, 20000, 30000, 40000, 50000.

* **Data Series:** A single series of blue vertical bars representing the publication count for each year.

**2. Center Section: Model Evolution Flow Diagram**

* **Left Cluster (Pre-Transformer Architectures):** Six light gray ovals containing text:

* Top: "RNN"

* Upper Middle: "LSTM"

* Middle: "NLP" (connected via arrow to central oval)

* Lower Middle: "CV" (connected via arrow to central oval)

* Bottom: "MLP"

* Bottom Right: "CNN"

* **Central Oval (Target Models):** A large, light gray oval containing the text: "ChatGPT, LLaMA, BERT, CLIP, ViT..." The ellipsis (...) indicates this is a non-exhaustive list.

* **Label Below Central Oval:** "Large Transformer-Based Models"

* **Arrows:** Two black arrows originate from the "NLP" and "CV" ovals, pointing directly to the central "Large Transformer-Based Models" oval.

**3. Right Section: Compression and Implementation Pipeline**

* **Compression Techniques:** Four horizontal black arrows point from left to right. Above each arrow is a label:

* "Pruning"

* "Quantization"

* "Knowledge Distillation"

* "Efficient Architecture"

* **Ellipsis:** Below the fourth arrow, three vertical dots ("...") indicate additional, unspecified compression techniques.

* **Label Below Arrows:** "Compression"

* **Implementation Icons:** On the far right, three black line-art icons represent deployment targets:

* Top: A cloud with network connections (representing cloud/server deployment).

* Middle: A smartphone (representing mobile deployment).

* Bottom: A desktop computer and server tower (representing edge/desktop deployment).

* **Label Below Icons:** "Implementation"

### Detailed Analysis

**Bar Chart Data (Approximate Values):**

* **Trend Verification:** The data series shows a clear, steep upward trend from 2017 to 2022, followed by a slight decline in 2023.

* **2017:** ~0 publications (bar is barely visible).

* **2018:** ~1,000 publications.

* **2019:** ~5,000 publications.

* **2020:** ~11,000 publications.

* **2021:** ~21,000 publications.

* **2022:** ~42,000 publications (peak).

* **2023:** ~37,000 publications.

**Flow Diagram Relationships:**

* The diagram positions "NLP" (Natural Language Processing) and "CV" (Computer Vision) as the primary application domains driving the development of large Transformer-based models, as indicated by the direct arrows.

* Other architectures like RNN, LSTM, MLP, and CNN are shown as related or predecessor technologies in the same conceptual space but without direct arrows to the central models.

**Compression Pipeline:**

* The four listed techniques (Pruning, Quantization, Knowledge Distillation, Efficient Architecture) are presented as parallel or sequential methods for model compression.

* The flow is linear: Large Transformer-Based Models → Compression Techniques → Implementation on various hardware (cloud, mobile, desktop).

### Key Observations

1. **Exponential Growth:** The publication chart demonstrates explosive, near-exponential growth in Transformer research from 2018 to 2022.

2. **Peak and Plateau:** The year 2022 represents the peak of publication volume, with a noticeable but not drastic decrease in 2023, suggesting a potential maturation or consolidation phase in the field.

3. **Central Role of Transformers:** The diagram's structure places "Large Transformer-Based Models" as the central, convergent outcome of prior work in NLP and CV.

4. **Deployment Focus:** The rightmost section highlights that a major current challenge in the field is not just creating large models, but compressing them for practical "Implementation" across diverse computing environments.
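The "near-exponential" observation can be tested with a log-linear fit. The following sketch assumes the approximate 2018 to 2022 readings listed in this description and fits `log(count) = a + b * year` by ordinary least squares; the function name `fit_exponential` is hypothetical.

```python
import math

# Approximate readings for 2018-2022 from this description.
# 2017 is left out because its bar is ~0 and log(0) is undefined.
readings = {2018: 1000, 2019: 5000, 2020: 11000, 2021: 21000, 2022: 42000}

def fit_exponential(counts):
    """Least-squares fit of log(count) against year.

    Returns (annual growth factor, doubling time in years).
    """
    xs = list(counts)
    ys = [math.log(c) for c in counts.values()]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return math.exp(slope), math.log(2) / slope

growth, doubling_years = fit_exponential(readings)
print(f"growth factor per year: {growth:.2f}, doubling time: {doubling_years:.2f} years")
```

With these readings the fitted growth factor comes out at roughly 2.4 per year. The individual year-over-year ratios are not constant (the 2018 to 2019 jump is much larger than the later ones), so the fit is a summary of the trend, not evidence of a strictly exponential process.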

### Interpretation

This diagram tells a concise story of a technological paradigm shift. The bar chart provides quantitative evidence of a research revolution centered on Transformers, peaking around 2022. The flow diagram contextualizes this revolution, showing how it synthesized advances from both language (NLP) and vision (CV) domains, moving beyond older architectures like RNNs and CNNs.

The most critical insight lies in the pipeline's conclusion: the creation of massive models (like ChatGPT, LLaMA) is not the end goal. The diagram argues that the current frontier is **compression**—making these powerful but resource-intensive models efficient enough for real-world **implementation** on everyday devices (phones, desktops) and cloud infrastructure. The ellipsis under "Compression" acknowledges this is an active, open area of research with many emerging techniques. The overall message is one of rapid theoretical advancement now grappling with the practical engineering challenges of deployment.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Bar Chart: Number of Publications Based on Transformers (2017–2023)

### Overview

A vertical bar chart shows rapid growth in publications related to Transformers from 2017 to 2022, followed by a small decline in 2023. The y-axis represents the number of publications (0–50,000), while the x-axis spans the years 2017–2023. A secondary diagram on the right contextualizes the evolution of Transformer-based models and their implementation techniques.

### Components/Axes

- **Bar Chart**:

- **X-axis (Year)**: Labeled "Year" with ticks for 2017–2023.

- **Y-axis (# Publications)**: Labeled "# Publications" with increments of 10,000 up to 50,000.

- **Bars**: Blue-colored bars represent annual publication counts.

- **Diagram**:

- **Left Side**: Earlier model families (RNN, LSTM, CNN, MLP) and the application domains NLP and CV, shown as inputs to "Large Transformer-Based Models."

- **Center**: Arrows connect traditional models to large Transformer-based models (ChatGPT, LLaMA, BERT, CLIP, ViT...).

- **Right Side**: Compression techniques (Pruning, Quantization, Knowledge Distillation, Efficient Architecture) under the label "Compression," linked to deployment icons (cloud, devices).

### Detailed Analysis

- **Bar Chart Trends**:

- **2017**: 0 publications (no data).

- **2018**: ~1,000 publications (smallest bar).

- **2019**: ~5,000 publications.

- **2020**: ~10,000 publications.

- **2021**: ~20,000 publications.

- **2022**: ~40,000 publications (peak).

- **2023**: ~35,000 publications (slight decline from 2022).

- **Trend Verification**: Bars show a consistent upward trajectory until 2022, followed by a minor drop in 2023.

- **Diagram Flow**:

- Traditional models (RNN, LSTM, etc.) feed into NLP/CV domains.

- Large Transformer-based models (ChatGPT, BERT, etc.) dominate the center, indicating their centrality in modern AI.

- Implementation techniques (pruning, quantization, etc.) are positioned as downstream optimizations for deployment.

### Key Observations

1. **Exponential Growth**: Publications surged from ~1,000 (2018) to ~40,000 (2022), reflecting rapid adoption of Transformers.

2. **2023 Dip**: A decline of about 12.5% from 2022 (~40,000) to 2023 (~35,000), which may reflect a maturing field or a shifting research focus; the chart itself gives no cause.

3. **Model Evolution**: The diagram highlights the transition from classical ML to Transformer-based architectures, emphasizing their dominance in NLP/CV.

4. **Implementation Focus**: Techniques like pruning and quantization address computational challenges, critical for real-world deployment.
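The 12.5% figure for the 2023 dip follows directly from the approximate readings in this description (~40,000 in 2022 and ~35,000 in 2023). A minimal check, with the hypothetical helper name `percent_change`:

```python
def percent_change(old, new):
    """Relative change from `old` to `new`, in percent (negative means a decline)."""
    return (new - old) / old * 100.0

# Approximate readings from this description: 2022 and 2023.
change = percent_change(40_000, 35_000)
print(f"{change:.1f}%")
```

Because both inputs are eyeballed from bar heights, the percentage inherits their uncertainty; a reading that is off by a few thousand publications shifts the result by several points.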

### Interpretation

- **Research Trajectory**: The bar chart underscores the explosive growth of Transformer research, likely driven by breakthroughs in NLP (e.g., BERT, GPT). The 2023 dip may reflect consolidation or a pivot toward practical applications.

- **Technical Ecosystem**: The diagram maps the lifecycle of Transformer models, from foundational algorithms (RNN/LSTM) to advanced implementations (pruning, compression). This suggests a maturation phase where efficiency and scalability are prioritized.

- **Anomalies**: The 2023 decline warrants investigation—could it signal a shift toward specialized models (e.g., vision transformers) or ethical/regulatory constraints?

- **Implications**: The emphasis on implementation techniques highlights the industry’s focus on deploying large models in resource-constrained environments (e.g., edge devices).

## Diagram: Evolution of Transformer-Based Models and Implementation Techniques

### Overview

A flowchart-style diagram traces the progression from traditional ML models to large Transformer-based architectures and their optimization strategies for deployment.

### Components/Axes

- **Left Side**: Earlier model families (RNN, LSTM, CNN, MLP) and the application domains NLP and CV, shown as inputs to "Large Transformer-Based Models."

- **Center**: Arrows connect traditional models to Transformer variants (ChatGPT, LLaMA, BERT, CLIP, ViT...).

- **Right Side**: Compression techniques (Pruning, Quantization, Knowledge Distillation, Efficient Architecture) under the label "Compression," linked to deployment icons (cloud, devices).

### Content Details

- **Traditional Models**: RNN, LSTM, CNN, and MLP represent pre-Transformer paradigms.

- **Transformer Variants**:

- **NLP**: ChatGPT, LLaMA, BERT.

- **CV**: CLIP, ViT.

- **Implementation Techniques**:

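- **Pruning/Quantization**: Pruning removes weights or structures that contribute little; quantization stores weights at lower numeric precision. Both reduce model size.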

- **Knowledge Distillation**: Transfer knowledge from large to smaller models.

- **Efficient Architecture**: Design model structures that need less computation from the outset.

- **Compression/Implementation**: Deploy models on cloud/edge devices.
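Knowledge distillation, as summarized above, trains a small student model to match a large teacher. A common formulation (assumed here; the figure does not specify one) compares temperature-softened output distributions with a KL divergence scaled by the squared temperature. The sketch below uses made-up logits and hypothetical function names.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by `temperature`."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by temperature^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))
    return kl * temperature ** 2

# Illustrative logits only; they do not come from any real model.
teacher = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]
far_student = [0.5, 1.0, 4.0]

loss_close = distillation_loss(teacher, close_student)
loss_far = distillation_loss(teacher, far_student)
print(loss_close, loss_far)
```

A student whose outputs resemble the teacher's gets a smaller loss than one that ranks the classes differently. In training, this term is typically combined with the ordinary loss on the true labels.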

### Key Observations

1. **Model Specialization**: Transformers dominate NLP (ChatGPT, BERT) and CV (CLIP, ViT), reflecting their versatility.

2. **Optimization Pipeline**: Techniques like pruning and quantization address the computational demands of large models.

3. **Deployment Focus**: Icons (cloud, devices) emphasize real-world applicability, bridging research and practice.

### Interpretation

- **Technical Maturity**: The diagram illustrates a shift from theoretical exploration (2017–2022) to practical deployment (2023+), aligning with the bar chart’s publication trends.

- **Interdisciplinary Impact**: Transformers bridge NLP and CV, enabling cross-domain applications (e.g., multimodal models like CLIP).

- **Future Directions**: The emphasis on compression and efficient architecture suggests ongoing efforts to democratize access to large models via lightweight variants.

## Final Notes

- **Data Limitations**: Publication counts are approximate because the chart provides no numeric labels for the bars.

- **Contextual Gaps**: The 2023 dip lacks explanatory context—further analysis of publication topics (e.g., ethics, hardware constraints) could clarify this anomaly.

- **Strategic Insight**: The ecosystem depicted in the diagram highlights the need for interdisciplinary collaboration to advance Transformer-based AI responsibly.
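The data limitation noted above is visible when the five sets of bar readings on this page are placed side by side. The sketch below collects the approximate values as listed under each description and reports the minimum, median, and maximum per year; the helper name `summarize` is hypothetical.

```python
from statistics import median

years = [2017, 2018, 2019, 2020, 2021, 2022, 2023]

# Approximate bar readings as listed under each description on this page.
readings = {
    "gemini-2.0-flash":      [0, 1000, 5000, 8000, 11000, 21000, 42000],
    "gemini-2.5-flash-lite": [1000, 2000, 8000, 22000, 42000, 42000, 38000],
    "gemma-3-27b-it":        [1000, 2000, 4000, 12000, 25000, 35000, 33000],
    "healer-alpha":          [0, 1000, 5000, 11000, 21000, 42000, 37000],
    "nemotron":              [0, 1000, 5000, 10000, 20000, 40000, 35000],
}

def summarize(series_by_source, year_labels):
    """Return {year: (min, median, max)} across all sources."""
    summary = {}
    for i, year in enumerate(year_labels):
        column = [series[i] for series in series_by_source.values()]
        summary[year] = (min(column), median(column), max(column))
    return summary

summary = summarize(readings, years)
for year, (low, mid, high) in summary.items():
    print(f"{year}: min={low} median={mid} max={high}")
```

The 2021 readings, for example, range from about 11,000 to about 42,000, so any trend statement drawn from a single reading should be treated with caution.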