
## Screenshot: Reinforcement Learning Environment

### Overview

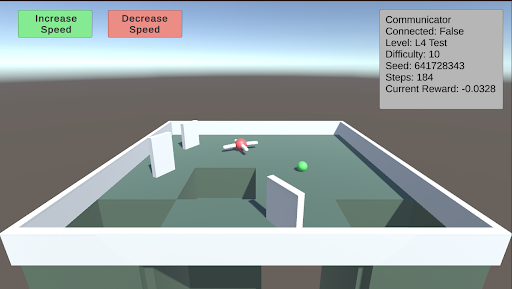

The image is a screenshot of a 3D reinforcement learning environment: a simulated arena with a white rectangular boundary, a green spherical agent, and a more complex, multi-colored object (likely the target or goal). Several white rectangular obstacles are placed inside the arena. Two speed-control buttons sit in the top-left corner, and a status panel in the top-right corner lists the run's parameters.

### Components

The screenshot contains the following elements:

* **Environment:** A 3D arena with a white floor and walls.

* **Agent:** A green sphere.

* **Target/Goal:** A complex object with red, white, and black components.

* **Obstacles:** White rectangular prisms scattered throughout the arena.

* **Control Buttons:** Two rectangular buttons in the top-left corner, labeled "Increase Speed" (green) and "Decrease Speed" (red).

* **Status Panel:** A rectangular panel in the top-right corner displaying the following information:

    * Communicator: False

    * Connected: False

    * Level: L4 Test

    * Difficulty: 10

    * Seed: 641728343

    * Steps: 184

    * Current Reward: -0.0328

### Detailed Analysis

The environment appears to be an obstacle course. The agent (green sphere) is positioned near the center of the arena, and the target (red/white/black object) is located slightly below and to the right of the agent. The obstacles are arranged in a non-uniform pattern, so the agent cannot always take a straight path to the target.

The status panel provides the following specific values:

* **Communicator:** False - Indicates no communication is active.

* **Connected:** False - Indicates the environment is not connected to an external system.

* **Level:** L4 Test - Indicates the current level is a test level labeled "L4".

* **Difficulty:** 10 - Indicates the difficulty level is set to 10.

* **Seed:** 641728343 - A random seed used for environment initialization.

* **Steps:** 184 - The number of steps taken in the current episode.

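* **Current Reward:** -0.0328 - The reward value shown at step 184. The panel does not say whether this is the reward for the current step only or the total accumulated over the episode.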
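The panel values listed above can be captured in a typed record, which makes the distinction between flags, integers, and the floating-point reward explicit. This is a minimal sketch: the `StatusPanel` class, the `parse_status_panel` helper, and the "Key: Value" text form are assumptions made for illustration, not part of the environment shown in the screenshot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StatusPanel:
    """Typed view of the values read off the screenshot's status panel."""
    communicator: bool
    connected: bool
    level: str
    difficulty: int
    seed: int
    steps: int
    current_reward: float


def parse_status_panel(text: str) -> StatusPanel:
    """Parse 'Key: Value' lines (a hypothetical text form of the panel)."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(":")
        # "Current Reward" -> "current_reward"
        fields[key.strip().lower().replace(" ", "_")] = value.strip()
    return StatusPanel(
        communicator=fields["communicator"] == "True",
        connected=fields["connected"] == "True",
        level=fields["level"],
        difficulty=int(fields["difficulty"]),
        seed=int(fields["seed"]),
        steps=int(fields["steps"]),
        current_reward=float(fields["current_reward"]),
    )


# Values transcribed from the screenshot.
PANEL_TEXT = """
Communicator: False
Connected: False
Level: L4 Test
Difficulty: 10
Seed: 641728343
Steps: 184
Current Reward: -0.0328
"""

panel = parse_status_panel(PANEL_TEXT)
print(panel)
```

Keeping the record frozen reflects that it is a snapshot of one frame rather than live state.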

### Key Observations

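* The small negative reward (-0.0328 at step 184) suggests the agent is being penalized during the episode, for example through a per-step time cost or inefficient movement. The screenshot alone does not show the cause.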

* The "Communicator" and "Connected" flags being set to "False" suggest the simulation is running standalone, with no external process attached.

* The seed value allows for reproducibility of the environment.

* The level is a test level, suggesting it is used for evaluation or debugging.
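The reproducibility point can be illustrated with a seeded generator: the same seed yields the same layout, while a different seed yields a different one. This is only a sketch using Python's standard `random` module. The `sample_obstacle_positions` function, the obstacle count, and the arena size are hypothetical, and the actual environment may derive its layout from the seed in an entirely different way.

```python
import random


def sample_obstacle_positions(seed, count=5, arena_size=10.0):
    """Return `count` (x, y) positions drawn deterministically from `seed`.

    Hypothetical stand-in for however the real environment places obstacles.
    """
    rng = random.Random(seed)  # local generator, leaves global state untouched
    return [
        (rng.uniform(0.0, arena_size), rng.uniform(0.0, arena_size))
        for _ in range(count)
    ]


SEED = 641728343  # seed value shown in the status panel

first = sample_obstacle_positions(SEED)
second = sample_obstacle_positions(SEED)
other = sample_obstacle_positions(SEED + 1)

print(first == second)  # same seed, same layout
print(first == other)   # different seed, different layout
```

Using a local `random.Random` instance rather than the module-level functions keeps the layout independent of any other random draws in the program.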

### Interpretation

The screenshot shows a reinforcement learning environment in which an agent navigates an obstacle course. The agent's goal is likely to reach the target object while avoiding collisions with the obstacles. The reward function presumably incentivizes successful navigation, with negative rewards potentially indicating collisions or inefficient paths. The negative current reward may mean the agent is still learning or is struggling with this layout. The status panel reports the environment's configuration (level, difficulty, seed) and the agent's progress (steps, current reward). The "Test" level name suggests a controlled setting for assessing performance, likely within a larger system for training and evaluating reinforcement learning algorithms, and the seed makes it possible to regenerate the same environment across experiments.
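A reward function of the kind described here might be sketched as follows. Every name and constant in this block is an assumption chosen for illustration. The screenshot does not reveal the actual reward terms, nor whether the displayed reward is per-step or cumulative.

```python
def step_reward(reached_target: bool, collided: bool,
                step_penalty: float = -0.001,
                collision_penalty: float = -0.1,
                goal_bonus: float = 1.0) -> float:
    """Hypothetical per-step reward for an obstacle-course task."""
    reward = step_penalty          # small cost for every step taken
    if collided:
        reward += collision_penalty  # discourage hitting obstacles
    if reached_target:
        reward += goal_bonus         # reward reaching the target
    return reward


# Values read off the status panel.
observed_reward = -0.0328
observed_steps = 184

# IF the displayed reward were cumulative and came only from a constant
# per-step cost (an assumption, not something the screenshot confirms),
# the implied cost per step would be about -0.000178.
implied_step_penalty = observed_reward / observed_steps

print(step_reward(reached_target=False, collided=False))
print(implied_step_penalty)
```

Under that cumulative reading, a reward of -0.0328 after 184 steps would reflect elapsed time rather than collisions. Under a per-step reading, it would be the penalty for a single action.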