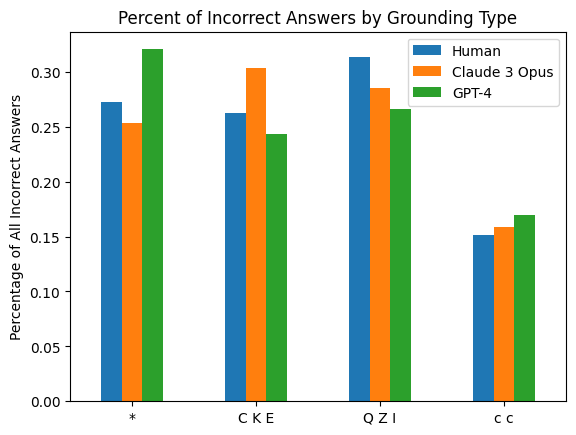

## Bar Chart: Percent of Incorrect Answers by Grounding Type

### Overview

This bar chart compares how the incorrect answers of Humans, Claude 3 Opus, and GPT-4 are distributed across four grounding types: '*', 'CKE', 'QZI', and 'cc'. The y-axis, labeled "Percentage of All Incorrect Answers", is expressed as a fraction and ranges from 0.00 to 0.35. The x-axis shows the grounding types. Each grounding type has three bars, one per group (Human, Claude 3 Opus, GPT-4).

### Components/Axes

* **Title:** "Percent of Incorrect Answers by Grounding Type" (centered at the top)

* **X-axis Label:** "Grounding Type" (centered at the bottom)

* **Y-axis Label:** "Percentage of All Incorrect Answers" (left side, vertical)

* **Y-axis Scale:** 0.00, 0.05, 0.10, 0.15, 0.20, 0.25, 0.30, 0.35

* **Legend:** Located in the top-right corner.

    * Human (Blue)

    * Claude 3 Opus (Orange)

    * GPT-4 (Green)

### Detailed Analysis

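The chart consists of four groups of three bars: one group per grounding type, with one bar each for Human, Claude 3 Opus, and GPT-4. The values below are approximate readings from the chart.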

* **Grounding Type '*':**

    * Human: Approximately 0.27 (±0.01)

    * Claude 3 Opus: Approximately 0.26 (±0.01)

    * GPT-4: Approximately 0.32 (±0.01)

* **Grounding Type 'CKE':**

    * Human: Approximately 0.25 (±0.01)

    * Claude 3 Opus: Approximately 0.31 (±0.01)

    * GPT-4: Approximately 0.24 (±0.01)

* **Grounding Type 'QZI':**

    * Human: Approximately 0.28 (±0.01)

    * Claude 3 Opus: Approximately 0.32 (±0.01)

    * GPT-4: Approximately 0.27 (±0.01)

* **Grounding Type 'cc':**

    * Human: Approximately 0.14 (±0.01)

    * Claude 3 Opus: Approximately 0.17 (±0.01)

    * GPT-4: Approximately 0.16 (±0.01)

**Trends:**

* For grounding type '*', GPT-4 has the highest percentage of incorrect answers, while Claude 3 Opus and Human have similar, lower percentages.

* For grounding type 'CKE', Claude 3 Opus has the highest percentage of incorrect answers, followed by Human, and GPT-4 has the lowest.

* For grounding type 'QZI', Claude 3 Opus has the highest percentage of incorrect answers, followed by Human, and GPT-4 has the lowest.

* For grounding type 'cc', all three groups have comparatively low values (about 0.14 to 0.17), with Claude 3 Opus slightly higher than the others.

### Key Observations

* The 'cc' grounding type has the lowest value for all three groups (about 0.14 to 0.17).

* Claude 3 Opus has the highest value on 'CKE' and 'QZI' (and marginally on 'cc'), but the lowest on '*'.

* GPT-4 has the highest value of the three groups on '*' (about 0.32), which is also its own highest value across grounding types.

* The gaps between groups are larger for '*', 'CKE', and 'QZI' (about 0.05 to 0.07) than for 'cc' (about 0.03).
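The rankings and gaps noted above can be recomputed from the approximate bar readings. The following is a minimal Python sketch; the dictionary `VALUES` and the helper names `highest`, `lowest`, and `spread` are illustrative assumptions rather than anything defined by the chart, and every number carries the same ±0.01 reading uncertainty.

```python
# Approximate bar heights read from the chart (each about ±0.01).
VALUES = {
    "*":   {"Human": 0.27, "Claude 3 Opus": 0.26, "GPT-4": 0.32},
    "CKE": {"Human": 0.25, "Claude 3 Opus": 0.31, "GPT-4": 0.24},
    "QZI": {"Human": 0.28, "Claude 3 Opus": 0.32, "GPT-4": 0.27},
    "cc":  {"Human": 0.14, "Claude 3 Opus": 0.17, "GPT-4": 0.16},
}


def highest(grounding_type):
    """Return the group with the tallest bar for a grounding type."""
    bars = VALUES[grounding_type]
    return max(bars, key=bars.get)


def lowest(grounding_type):
    """Return the group with the shortest bar for a grounding type."""
    bars = VALUES[grounding_type]
    return min(bars, key=bars.get)


def spread(grounding_type):
    """Return the gap between the tallest and shortest bar, rounded to 2 places."""
    bars = VALUES[grounding_type].values()
    return round(max(bars) - min(bars), 2)


if __name__ == "__main__":
    for grounding_type in VALUES:
        print(
            f"{grounding_type!r}: highest={highest(grounding_type)}, "
            f"lowest={lowest(grounding_type)}, spread={spread(grounding_type):.2f}"
        )
```

Because each reading is only accurate to about ±0.01, rankings that rest on a 0.01 difference (for example Human versus Claude 3 Opus on '*', or Human versus GPT-4 on 'CKE' and 'QZI') should be treated as ties rather than firm orderings.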

### Interpretation

The chart suggests that the distribution of incorrect answers depends on the grounding type. The y-axis appears to report each group's share of its incorrect answers, so a lower bar means that a grounding type accounts for a smaller portion of that group's errors. It does not by itself show a lower error rate, because the chart does not indicate how many questions fall under each grounding type. With that caveat, 'cc' accounts for the smallest share of errors for every group (about 0.14 to 0.17). Claude 3 Opus has the largest share on 'CKE', 'QZI', and 'cc', which may indicate a relative weakness with these grounding types. GPT-4's errors are most concentrated on '*' (about 0.32, versus 0.16 to 0.27 on the other types), suggesting a specific vulnerability with this type of information. The low share for 'cc' could indicate that this grounding type provides clearer or more structured information, or simply that it covers fewer questions. Further investigation into the nature of each grounding type is needed to explain these differences.
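One way to sanity-check how the y-axis should be read is to sum each group's bars: if the values are shares of that group's incorrect answers, each total should be close to 1. The sketch below is a minimal check under that assumption; the names `SHARES` and `total_share` are hypothetical, and the inputs are the approximate readings listed earlier.

```python
# Approximate bar heights per group, keyed by grounding type (each about ±0.01).
SHARES = {
    "Human":         {"*": 0.27, "CKE": 0.25, "QZI": 0.28, "cc": 0.14},
    "Claude 3 Opus": {"*": 0.26, "CKE": 0.31, "QZI": 0.32, "cc": 0.17},
    "GPT-4":         {"*": 0.32, "CKE": 0.24, "QZI": 0.27, "cc": 0.16},
}


def total_share(group):
    """Sum a group's bars across all grounding types, rounded to 2 places."""
    return round(sum(SHARES[group].values()), 2)


if __name__ == "__main__":
    for group in SHARES:
        print(f"{group}: total={total_share(group):.2f}")
```

With these readings the totals come to 0.94, 1.06, and 0.99, which is roughly consistent with a per-group distribution of errors given the reading uncertainty, though not conclusive.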