
## Line Chart: Loss vs. Training Tokens for Two Language Models

### Overview

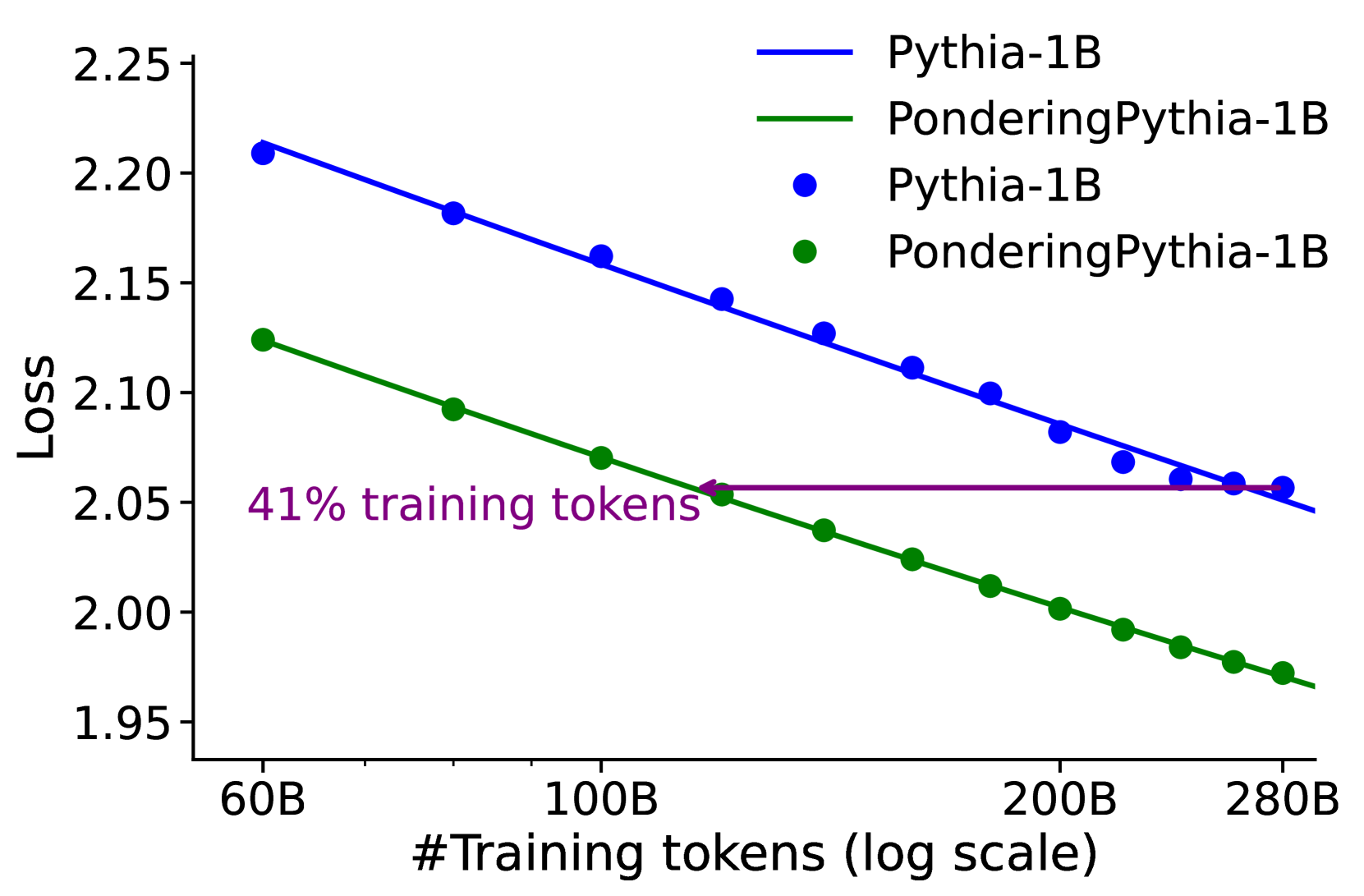

This image is a line chart comparing the training loss of two language models, "Pythia-1B" and "PonderingPythia-1B," as a function of the number of training tokens. The chart shows that "PonderingPythia-1B" achieves a lower loss at every measured point and, per the chart's annotation, needs only about 41% of the training tokens to reach the final loss of the baseline "Pythia-1B" model (approximately 2.05).

### Components/Axes

* **Chart Type:** Line chart with data points marked by circular markers.

* **Y-Axis:**

    * **Label:** "Loss"

    * **Scale:** Linear, ranging from 1.95 to 2.25, with major ticks at 0.05 intervals.

* **X-Axis:**

    * **Label:** "#Training tokens (log scale)"

    * **Scale:** Logarithmic. Major labeled ticks are at 60B, 100B, 200B, and 280B (where "B" denotes billions).

* **Legend:** Located in the top-right corner of the plot area.

    * **Blue line with blue circle markers:** "Pythia-1B"

    * **Green line with green circle markers:** "PonderingPythia-1B"

* **Annotation:** A purple text label and arrow.

    * **Text:** "41% training tokens"

    * **Placement & Function:** The text is positioned in the center-left area of the plot. A purple arrow originates from the text and points to a specific data point on the green "PonderingPythia-1B" line. This point corresponds to a loss value of approximately 2.05.

### Detailed Analysis

**Data Series 1: Pythia-1B (Blue Line)**

* **Trend:** The line shows a consistent, nearly linear downward slope on this log-linear plot, indicating that loss decreases as the number of training tokens increases.

* **Approximate Data Points (Loss vs. Tokens):**

    * 60B tokens: ~2.21

    * ~80B tokens: ~2.18

    * 100B tokens: ~2.16

    * ~120B tokens: ~2.14

    * ~140B tokens: ~2.125

    * ~160B tokens: ~2.11

    * ~180B tokens: ~2.10

    * 200B tokens: ~2.08

    * ~220B tokens: ~2.07

    * ~240B tokens: ~2.06

    * ~260B tokens: ~2.055

    * 280B tokens: ~2.05

**Data Series 2: PonderingPythia-1B (Green Line)**

* **Trend:** This line also shows a consistent downward slope, parallel to but strictly below the blue line, indicating superior performance (lower loss) at all training stages.

* **Approximate Data Points (Loss vs. Tokens):**

    * 60B tokens: ~2.125

    * ~80B tokens: ~2.09

    * 100B tokens: ~2.07

    * ~120B tokens: ~2.05 (This is the point indicated by the purple annotation arrow).

    * ~140B tokens: ~2.04

    * ~160B tokens: ~2.025

    * ~180B tokens: ~2.01

    * 200B tokens: ~2.00

    * ~220B tokens: ~1.99

    * ~240B tokens: ~1.98

    * ~260B tokens: ~1.975

    * 280B tokens: ~1.97

**Annotation Analysis:**

The annotation "41% training tokens" highlights a key comparison. The green line (PonderingPythia-1B) reaches a loss of ~2.05 at approximately 120B tokens. The blue line (Pythia-1B) reaches the same loss level of ~2.05 only at approximately 280B tokens, its final measured point. Using these approximate readings, 120/280 ≈ 0.43, which is close to the annotated 41%; the small difference is consistent with the imprecision of values read off the chart. Either way, the Pondering model reaches this loss with less than half the training tokens.
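The comparison above can be checked with a few lines of Python. This is only a sketch: the data are the approximate values read off the chart, and the helper `tokens_to_reach` (a hypothetical name, not from the source) interpolates linearly in log-token space, which is an assumption that matches the log-scaled x-axis rather than anything stated by the chart's authors.

```python
import math

# Approximate (tokens in billions, loss) pairs read off the chart.
PYTHIA = [(60, 2.21), (80, 2.18), (100, 2.16), (120, 2.14),
          (140, 2.125), (160, 2.11), (180, 2.10), (200, 2.08),
          (220, 2.07), (240, 2.06), (260, 2.055), (280, 2.05)]
PONDERING = [(60, 2.125), (80, 2.09), (100, 2.07), (120, 2.05),
             (140, 2.04), (160, 2.025), (180, 2.01), (200, 2.00),
             (220, 1.99), (240, 1.98), (260, 1.975), (280, 1.97)]


def tokens_to_reach(series, target_loss):
    """Return the token count (in billions) at which `series` first reaches
    `target_loss`, interpolating linearly in log(tokens) between neighbouring
    points. Returns None if the target lies outside the measured range."""
    for (t0, l0), (t1, l1) in zip(series, series[1:]):
        if l0 >= target_loss >= l1:
            if l0 == l1:  # flat segment: avoid dividing by zero
                return float(t0)
            frac = (l0 - target_loss) / (l0 - l1)
            log_t = math.log(t0) + frac * (math.log(t1) - math.log(t0))
            return math.exp(log_t)
    return None


pondering_tokens = tokens_to_reach(PONDERING, 2.05)
pythia_tokens = tokens_to_reach(PYTHIA, 2.05)
ratio = pondering_tokens / pythia_tokens

print(f"PonderingPythia-1B reaches 2.05 at ~{pondering_tokens:.0f}B tokens")
print(f"Pythia-1B reaches 2.05 at ~{pythia_tokens:.0f}B tokens")
print(f"Token ratio: {ratio:.1%}")
```

Because the inputs are eyeballed from the plot, the resulting ratio should be treated as a rough cross-check of the annotated 41%, not as a replacement for it.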

### Key Observations

1. **Consistent Performance Gap:** The green line is uniformly below the blue line. The vertical gap between them stays at roughly 0.08 to 0.09 in loss across the log-scale x-axis, indicating a nearly constant absolute improvement.

2. **Parallel Trajectories:** The slopes of the two lines are very similar, indicating that both models learn at a comparable rate relative to the logarithm of training data, but PonderingPythia-1B starts from and maintains a better loss value.

3. **Efficiency Highlight:** The central message, emphasized by the annotation, is the training efficiency of PonderingPythia-1B. It reaches the target loss (~2.05) with roughly 41% of the training tokens the baseline needs.
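The first two observations can be quantified with a simple least-squares fit of loss against log10(tokens). The sketch below uses the approximate chart readings; `fit_line` is an illustrative helper (ordinary least squares written out by hand), not something taken from the source.

```python
import math

# Approximate chart readings; tokens are in billions.
TOKENS_B = [60, 80, 100, 120, 140, 160, 180, 200, 220, 240, 260, 280]
PYTHIA_LOSS = [2.21, 2.18, 2.16, 2.14, 2.125, 2.11,
               2.10, 2.08, 2.07, 2.06, 2.055, 2.05]
PONDERING_LOSS = [2.125, 2.09, 2.07, 2.05, 2.04, 2.025,
                  2.01, 2.00, 1.99, 1.98, 1.975, 1.97]


def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


log_tokens = [math.log10(t) for t in TOKENS_B]
slope_base, intercept_base = fit_line(log_tokens, PYTHIA_LOSS)
slope_pond, intercept_pond = fit_line(log_tokens, PONDERING_LOSS)

# Vertical gap (baseline loss minus Pondering loss) at each token count.
gaps = [b - p for b, p in zip(PYTHIA_LOSS, PONDERING_LOSS)]

print(f"Slope per decade of tokens: baseline {slope_base:.3f}, pondering {slope_pond:.3f}")
print(f"Loss gap: min {min(gaps):.3f}, max {max(gaps):.3f}")
```

Similar fitted slopes support the "parallel trajectories" reading, and a narrow range of gaps supports the "consistent gap" reading, within the precision of values read from a plot.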

### Interpretation

This chart provides empirical evidence for the effectiveness of the "Pondering" modification to the Pythia-1B architecture. The data suggests that PonderingPythia-1B is not only a better-performing model at any given training-token budget (lower loss for the same number of tokens) but is also significantly more **data-efficient**.

The key takeaway is the 41% figure. In the context of large language model training, where data and compute are primary cost drivers, achieving the same loss with 59% fewer tokens represents a major efficiency gain. Provided the per-token cost of the Pondering mechanism is not substantially higher (the chart does not report this), the gain could translate to reduced training time, lower computational cost, or better performance with the same budget. The chart implies that the "Pondering" mechanism allows the model to extract more learning signal from each token, leading to faster convergence in terms of data consumption. The parallel slopes suggest the fundamental scaling behavior is preserved: on a log-scaled token axis, a constant horizontal offset between the curves corresponds to a constant multiplicative improvement in token efficiency.
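The link between parallel lines and a constant multiplicative gain can be made explicit with a short derivation. It assumes, as an idealization of the chart rather than a result stated in the source, that both curves are straight lines in $\ln N$ with a shared slope $-b$ (with $b > 0$), where $N$ is the number of training tokens and $a_{\text{base}}$, $a_{\text{pond}}$ are the two intercepts:

$$
\begin{aligned}
L_{\text{base}}(N) &= a_{\text{base}} - b \ln N, \\
L_{\text{pond}}(N) &= a_{\text{pond}} - b \ln N, \qquad \Delta = a_{\text{base}} - a_{\text{pond}} > 0 .
\end{aligned}
$$

Setting $L_{\text{pond}}(N_{\text{pond}}) = L_{\text{base}}(N_{\text{base}})$ and solving for the token ratio gives

$$
\ln \frac{N_{\text{pond}}}{N_{\text{base}}} = -\frac{\Delta}{b}
\quad\Longrightarrow\quad
\frac{N_{\text{pond}}}{N_{\text{base}}} = e^{-\Delta / b} .
$$

Under this assumption the ratio depends only on the vertical gap $\Delta$ and the shared slope $b$, not on the loss level chosen for the comparison, which is why a single figure such as 41% can summarize the whole plot.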