TECHNICAL ASSET FINGERPRINT

23a4caba4700b63d63df457e

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

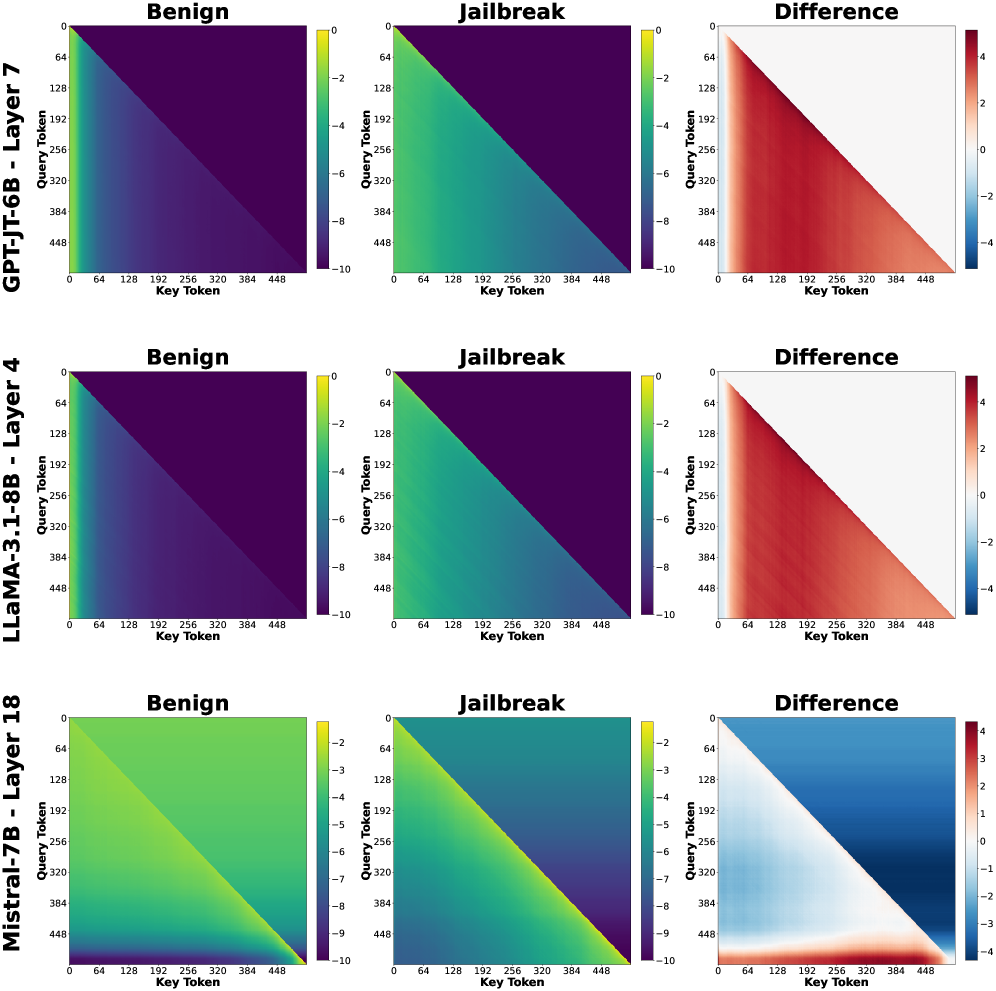

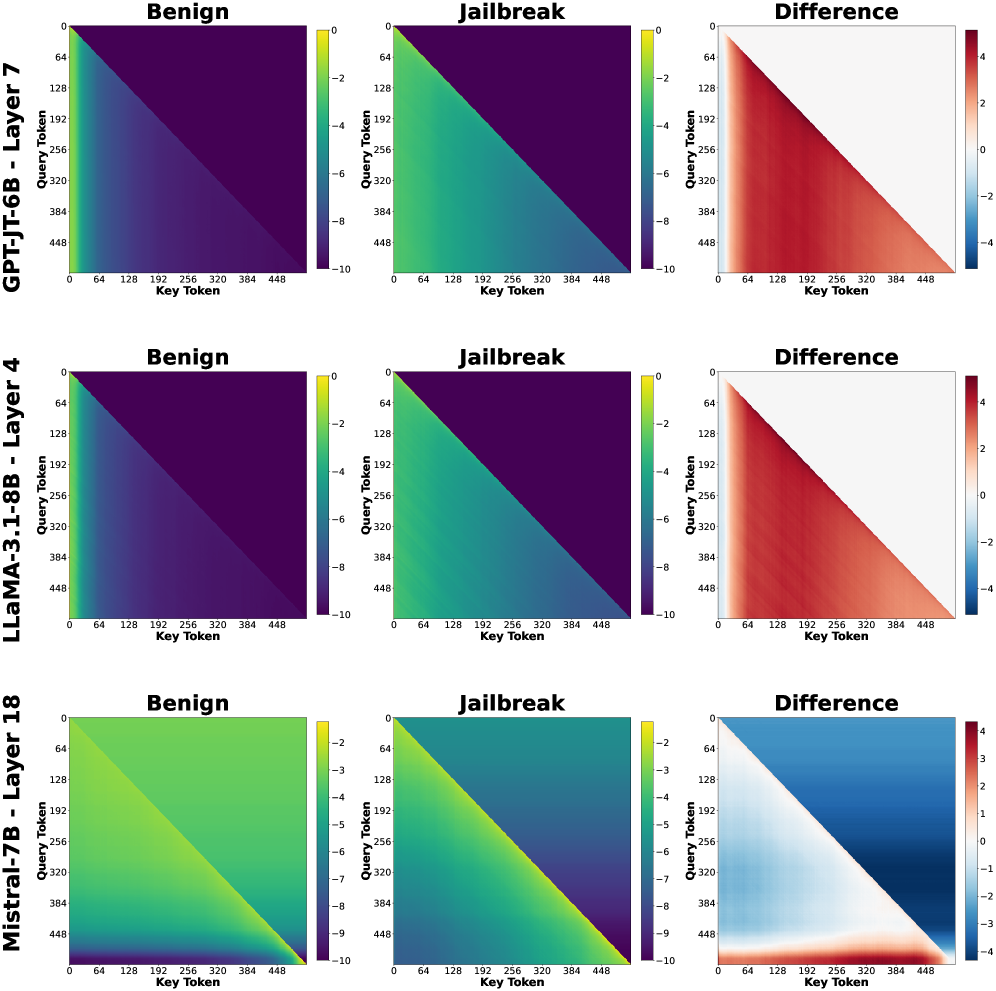

## Heatmap Comparison: Model Behavior on Benign vs. Jailbreak Prompts

### Overview

The image presents a series of heatmaps comparing the behavior of three different language models (GPT-JT-6B, LLaMA-3.1-8B, and Mistral-7B) when processing benign and jailbreak prompts. Each row corresponds to a different model, and each column represents a different condition: "Benign" prompt, "Jailbreak" prompt, and the "Difference" between the two. The heatmaps visualize the interaction between "Query Token" and "Key Token" within specific layers of each model.

### Components/Axes

* **Rows (Models):**

  * GPT-JT-6B - Layer 7

  * LLaMA-3.1-8B - Layer 4

  * Mistral-7B - Layer 18

* **Columns (Conditions):**

  * Benign

  * Jailbreak

  * Difference

* **X-axis (Key Token):** Ranges from 0 to 448, with tick marks at 0, 64, 128, 192, 256, 320, 384, and 448.

* **Y-axis (Query Token):** Ranges from 0 to 448, with tick marks at 0, 64, 128, 192, 256, 320, 384, and 448.

* **Color Scale (Benign & Jailbreak):** Ranges from -10 (dark purple) to 0 (yellow).

* **Color Scale (Difference):** Ranges from -4 (dark blue) to 4 (dark red), with 0 being white.

### Detailed Analysis

**GPT-JT-6B - Layer 7**

* **Benign:** The heatmap shows a gradient, with values decreasing from yellow (0) at the top-left corner to dark purple (-10) towards the bottom-right.

* **Jailbreak:** Similar to the "Benign" condition, the heatmap shows a gradient from yellow (0) to dark purple (-10).

* **Difference:** The heatmap shows a clear separation. The upper-left triangle is predominantly blue (negative difference), while the lower-right triangle is predominantly red (positive difference).

**LLaMA-3.1-8B - Layer 4**

* **Benign:** Similar to GPT-JT-6B, the heatmap shows a gradient from yellow (0) to dark purple (-10).

* **Jailbreak:** Similar to the "Benign" condition, the heatmap shows a gradient from yellow (0) to dark purple (-10).

* **Difference:** The heatmap shows a clear separation. The upper-left triangle is predominantly blue (negative difference), while the lower-right triangle is predominantly red (positive difference).

**Mistral-7B - Layer 18**

* **Benign:** The heatmap shows a gradient, with values decreasing from yellow (0) at the top-left corner to dark purple (-10) towards the bottom-right.

* **Jailbreak:** Similar to the "Benign" condition, the heatmap shows a gradient from yellow (0) to dark purple (-10).

* **Difference:** The heatmap shows a clear separation. The upper-left triangle is predominantly blue (negative difference), while the lower-right triangle is predominantly red (positive difference).

### Key Observations

* The "Benign" and "Jailbreak" heatmaps for each model are visually similar, suggesting that the overall attention patterns are not drastically different between the two conditions.

* The "Difference" heatmaps highlight specific regions where the attention patterns diverge between the "Benign" and "Jailbreak" prompts.

* The "Difference" heatmaps consistently show a separation, with negative differences (blue) in the upper-left triangle and positive differences (red) in the lower-right triangle.

### Interpretation

The heatmaps visualize the attention patterns within different layers of the language models when processing benign and jailbreak prompts. The similarity between the "Benign" and "Jailbreak" heatmaps suggests that the overall attention mechanisms are not fundamentally altered by the jailbreak prompts. However, the "Difference" heatmaps reveal subtle but significant variations in attention patterns.

The consistent separation observed in the "Difference" heatmaps, with negative differences in the upper-left triangle and positive differences in the lower-right triangle, indicates that the jailbreak prompts may be causing the models to shift their attention towards different tokens or regions within the input sequence. This shift in attention could be a contributing factor to the models' susceptibility to jailbreak attacks.

The specific layers chosen for analysis (Layer 7 for GPT-JT-6B, Layer 4 for LLaMA-3.1-8B, and Layer 18 for Mistral-7B) may represent layers where these differences are most pronounced. Further investigation could explore other layers to understand the full extent of the attention pattern variations.
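The suggestion to explore other layers can be sketched as a scan that scores each layer by how far its Jailbreak map departs from its Benign map. This is a minimal sketch, assuming per-layer score matrices are already available as a dict keyed by layer index. The names `layer_divergence` and `most_divergent_layer` and the toy data are hypothetical, and nothing here implies the figure's layers were chosen this way.

```python
from typing import Dict, List, Tuple

Matrix = List[List[float]]


def layer_divergence(benign: Matrix, jailbreak: Matrix) -> float:
    """Mean absolute element-wise difference between two equally shaped maps."""
    total = 0.0
    count = 0
    for b_row, j_row in zip(benign, jailbreak):
        for b, j in zip(b_row, j_row):
            total += abs(j - b)
            count += 1
    return total / count


def most_divergent_layer(maps: Dict[int, Tuple[Matrix, Matrix]]) -> int:
    """Return the layer index whose (benign, jailbreak) pair differs the most."""
    return max(maps, key=lambda layer: layer_divergence(*maps[layer]))


# Toy data for three layers (hypothetical values, not read off the figure).
maps = {
    0: ([[-1.0, -2.0]], [[-1.0, -2.0]]),
    1: ([[-1.0, -2.0]], [[-3.0, -2.0]]),
    2: ([[-1.0, -2.0]], [[-1.5, -2.0]]),
}
best = most_divergent_layer(maps)
```

Mean absolute difference is only one possible score; a signed mean or a maximum would emphasize different aspects of the divergence.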

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Heatmaps: Activation Patterns for Language Models

### Overview

The image presents a 3x3 grid of heatmaps visualizing activation patterns for three different language models (GPT-JT-6B, LLaMA-3.1-8B, and Mistral 7B) under two conditions: "Benign" and "Jailbreak". The third heatmap in each row shows the "Difference" between the "Benign" and "Jailbreak" activations. Each heatmap plots activation values against "Key Token" and "Query Token" dimensions.

### Components/Axes

Each heatmap shares the following components:

* **Title:** Indicates the model and layer being visualized (e.g., "GPT-JT-6B - Layer 7").

* **X-axis:** Labeled "Key Token", ranging from 0 to 448, with markers at intervals of 64.

* **Y-axis:** Labeled "Query Token", ranging from 0 to 448, with markers at intervals of 64.

* **Color Scale:** A continuous color scale ranging from approximately -10 to 4, with colors transitioning from dark purple/blue (low values) to yellow/red (high values).

* **Sub-Titles:** "Benign", "Jailbreak", and "Difference" indicate the condition being visualized.

### Detailed Analysis

**Row 1: GPT-JT-6B - Layer 7**

* **Benign:** A diagonal band of high activation (yellow/red) extends from the bottom-left to the top-right corner. Values appear to peak around 3-4. Activation is low (dark purple) elsewhere.

* **Jailbreak:** A mostly uniform dark purple/blue color indicates low activation across the entire heatmap. There's a slight diagonal band of slightly higher activation (purple) but significantly lower than the "Benign" case. Values are around -2 to -8.

* **Difference:** A diagonal band of positive values (yellow/red) mirrors the "Benign" heatmap, indicating a significant increase in activation in this region when the input is benign. Values range from approximately -2 to 4.

**Row 2: LLaMA-3.1-8B - Layer 4**

* **Benign:** A strong diagonal band of high activation (yellow/red) is present, similar to GPT-JT-6B, but more concentrated. Values peak around 3-4.

* **Jailbreak:** Predominantly dark purple/blue, indicating low activation. A faint diagonal band of slightly higher activation (purple) is visible. Values are around -2 to -8.

* **Difference:** A diagonal band of positive values (yellow/red) corresponds to the "Benign" heatmap, showing increased activation in the same region. Values range from approximately -2 to 4.

**Row 3: Mistral 7B - Layer 18**

* **Benign:** A diagonal band of high activation (yellow/red) is present, but it's broader and less sharply defined than in the other models. Values peak around 3-4.

* **Jailbreak:** A diagonal band of negative activation (dark blue/purple) is visible, contrasting with the "Benign" case. Values range from approximately -10 to -2.

* **Difference:** A diagonal band of positive values (yellow/red) indicates increased activation in the same region as the "Benign" heatmap. Values range from approximately -2 to 4.

### Key Observations

* All three models exhibit a strong diagonal activation pattern in the "Benign" condition.

* The "Jailbreak" condition consistently results in lower overall activation across all models.

* The "Difference" heatmaps highlight the regions where activation is most significantly affected by the "Jailbreak" condition.

* Mistral 7B shows a negative activation band in the "Jailbreak" condition, which is different from the other two models.

### Interpretation

The heatmaps suggest that the "Jailbreak" condition significantly alters the activation patterns within these language models. The strong diagonal activation in the "Benign" condition likely represents the model's normal processing of coherent input. The suppression of activation in the "Jailbreak" condition could indicate that the model is recognizing and attempting to mitigate potentially harmful or undesirable input. The "Difference" heatmaps visually confirm this, showing that the regions of highest activation in the "Benign" condition are most affected by the "Jailbreak" condition.

The negative activation band observed in Mistral 7B during the "Jailbreak" condition is particularly interesting. It could suggest a different mechanism for handling adversarial inputs compared to GPT-JT-6B and LLaMA-3.1-8B, possibly due to differences in architecture or training data.

The consistent diagonal pattern across all models and conditions suggests a fundamental aspect of how these models process information, potentially related to attention mechanisms or token relationships. The data suggests that jailbreaking attempts disrupt this normal processing pattern, leading to altered activation landscapes.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap Grid: Attention Pattern Analysis Across Language Models

### Overview

The image displays a 3x3 grid of heatmaps analyzing attention patterns in three different Large Language Models (LLMs) under two conditions ("Benign" and "Jailbreak") and the calculated difference between them. Each row corresponds to a specific model and layer, while each column represents a condition. The heatmaps visualize the attention scores between query tokens and key tokens.

### Components/Axes

**Global Structure:**

- **Rows (Models & Layers):**

  1. Top Row: `GPT-JT-6B - Layer 7`

  2. Middle Row: `LLaMA-3.1-8B - Layer 4`

  3. Bottom Row: `Mistral-7B - Layer 18`

- **Columns (Conditions):**

  1. Left Column: `Benign`

  2. Middle Column: `Jailbreak`

  3. Right Column: `Difference`

**Axes (Identical for all 9 heatmaps):**

- **X-axis (Bottom):** `Key Token`. Scale ranges from 0 to 448, with major tick marks at 0, 64, 128, 192, 256, 320, 384, 448.

- **Y-axis (Left):** `Query Token`. Scale ranges from 0 to 448, with major tick marks at 0, 64, 128, 192, 256, 320, 384, 448.

**Color Bars (Legends):**

- **For "Benign" and "Jailbreak" columns (Left & Middle):** A vertical color bar is positioned to the right of each heatmap. The scale represents attention scores, ranging from approximately **-10 (dark purple/blue)** to **0 (yellow)**. The gradient transitions from dark purple/blue (low/negative attention) through teal and green to yellow (high/zero attention).

- **For the "Difference" column (Right):** A vertical color bar is positioned to the right of each heatmap. The scale represents the change in attention score (Jailbreak minus Benign), ranging from approximately **-4 (dark blue)** to **+4 (dark red)**, with **0 (white/light gray)** at the center. The gradient transitions from dark blue (decrease) through light blue/white to dark red (increase).
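The "Difference" column can be read as an element-wise subtraction of two score matrices. A minimal sketch in plain Python, assuming each heatmap is a square list-of-lists indexed as `scores[query][key]`; the function name `difference_map` and the toy values are illustrative and not taken from the figure.

```python
from typing import List

Matrix = List[List[float]]


def difference_map(jailbreak: Matrix, benign: Matrix) -> Matrix:
    """Element-wise Jailbreak minus Benign, matching the color bar's sign convention."""
    if len(jailbreak) != len(benign):
        raise ValueError("matrices must have the same number of rows")
    diff = []
    for j_row, b_row in zip(jailbreak, benign):
        if len(j_row) != len(b_row):
            raise ValueError("rows must have the same length")
        diff.append([j - b for j, b in zip(j_row, b_row)])
    return diff


# Toy 2x2 example (hypothetical values, not read off the figure).
benign = [[-1.0, -9.0], [-3.0, -2.0]]
jailbreak = [[-0.5, -9.0], [-4.0, -1.0]]
diff = difference_map(jailbreak, benign)
```

With this sign convention, positive cells (red) mean the score is higher under Jailbreak and negative cells (blue) mean it is lower.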

### Detailed Analysis

**Row 1: GPT-JT-6B - Layer 7**

- **Benign (Top-Left):** The heatmap shows a strong triangular pattern. High attention scores (yellow/green, ~0 to -2) are concentrated along the main diagonal (where Query Token index ≈ Key Token index) and in the upper-left triangle (where Query Token index < Key Token index). The lower-right triangle (where Query Token index > Key Token index) is dominated by very low attention scores (dark purple, ~-8 to -10).

- **Jailbreak (Top-Middle):** The pattern is visually similar to the Benign condition, maintaining the same triangular structure. The intensity of the high-attention region (yellow/green) appears slightly more pronounced or extended along the diagonal compared to Benign.

- **Difference (Top-Right):** This heatmap is predominantly red, indicating a positive difference (Jailbreak > Benign) across most of the upper-left triangle and diagonal. The strongest increases (dark red, ~+4) are concentrated in the region where both Query and Key Token indices are low (approximately 0-128). The lower-right triangle shows minimal change (white/light gray, ~0).

**Row 2: LLaMA-3.1-8B - Layer 4**

- **Benign (Middle-Left):** Exhibits a similar triangular attention pattern to GPT-JT-6B. High attention (yellow/green) is in the upper-left triangle and along the diagonal. Low attention (dark purple) fills the lower-right triangle.

- **Jailbreak (Middle-Middle):** Again, the pattern is structurally identical to its Benign counterpart. The high-attention region appears slightly brighter or more extensive.

- **Difference (Middle-Right):** This map is also largely red, showing a widespread increase in attention scores under the Jailbreak condition. The increase is most significant (dark red) in the upper-left quadrant (low token indices). The lower-right triangle shows near-zero change.

**Row 3: Mistral-7B - Layer 18**

- **Benign (Bottom-Left):** The pattern differs notably. While still triangular, the high-attention region (yellow/green) is much broader and extends further into the matrix. The gradient from high to low attention is smoother. The lowest attention scores (dark blue/purple) are confined to the very bottom-right corner.

- **Jailbreak (Bottom-Middle):** The pattern changes significantly. The high-attention region (yellow) becomes more concentrated along the main diagonal and the top edge (low Key Token indices). A larger portion of the matrix, especially the central and lower-left areas, shifts to moderate attention scores (teal/green, ~-4 to -6).

- **Difference (Bottom-Right):** This heatmap shows a complex pattern. A large, central region (spanning roughly Query 128-384 and Key 0-256) is blue, indicating a *decrease* in attention under Jailbreak. The top-left corner and a strip along the bottom edge (high Query Token indices) show red, indicating an *increase*. The diagonal shows mixed or minimal change.

### Key Observations

1. **Consistent Triangular Structure:** All "Benign" and "Jailbreak" heatmaps display a causal attention pattern, where tokens primarily attend to themselves and previous tokens (upper-left triangle), with little to no attention to future tokens (lower-right triangle).

2. **Model-Specific Baseline:** GPT-JT-6B and LLaMA-3.1-8B show very similar baseline ("Benign") attention distributions in the selected layers. Mistral-7B's baseline attention in Layer 18 is more diffuse.

3. **Jailbreak Impact - General Increase:** For GPT-JT-6B and LLaMA-3.1-8B, the "Jailbreak" condition leads to a general, widespread *increase* in attention scores within the causally allowed region (upper-left triangle), most pronounced for early tokens.

4. **Jailbreak Impact - Mistral's Redistribution:** Mistral-7B shows a different response. The Jailbreak condition causes a *redistribution* of attention, not just a uniform increase. Attention decreases in a large central region and increases in specific areas (early tokens and late query tokens).

5. **Spatial Focus of Change:** In all "Difference" maps, the most significant changes (whether increase or decrease) are concentrated in the regions corresponding to lower token indices (top-left of the matrices).
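The causal structure described in observation 1 can be illustrated with a masked log-softmax. This is a sketch under stated assumptions: the -10 to 0 color scale is assumed here to represent log-probabilities (the legend does not say so), the rule that a query at index q may attend only to keys at indices up to q is the standard causal mask rather than something read from the image, and `causal_log_attention` is a hypothetical helper.

```python
import math
from typing import List


def causal_log_attention(logits: List[List[float]]) -> List[List[float]]:
    """Row-wise log-softmax where query q only sees keys k <= q.

    Masked (future) positions are reported as -inf.
    """
    out = []
    for q, row in enumerate(logits):
        visible = row[: q + 1]
        m = max(visible)  # subtract the max for numerical stability
        log_z = m + math.log(sum(math.exp(x - m) for x in visible))
        out.append(
            [x - log_z if k <= q else float("-inf") for k, x in enumerate(row)]
        )
    return out


# Uniform logits: each query spreads attention evenly over its visible keys.
log_attn = causal_log_attention([[0.0] * 3 for _ in range(3)])
```

Under this assumption every value is at most 0, and later queries show lower per-key values simply because their probability mass is spread over more visible keys.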

### Interpretation

This visualization provides a technical, layer-specific view of how "jailbreaking" prompts alter the internal attention mechanisms of different LLMs.

* **What the data suggests:** The jailbreak technique appears to modify the model's focus. For GPT-JT and LLaMA, it generally amplifies attention within the standard causal window, potentially making the model more sensitive to or reliant on the context provided by earlier tokens in the sequence when processing a jailbreak prompt. For Mistral, the effect is more nuanced, suggesting a strategic reallocation of attention resources—perhaps suppressing certain internal relationships while enhancing others to bypass safety training.

* **How elements relate:** The "Difference" column is the critical analytical output, directly isolating the effect of the jailbreak from the model's baseline behavior. The consistency of the triangular structure confirms the underlying causal attention mask is unchanged; the jailbreak alters the *strength* of attention, not its fundamental direction.

* **Notable anomalies/outliers:** The stark contrast between the response of Mistral-7B (Layer 18) and the other two models is the primary anomaly. This could be due to differences in model architecture, the specific layer analyzed (18 vs. 4/7), or the jailbreak's effectiveness/mechanism on that model. The concentration of change in low-index tokens across all models is also notable, suggesting the initial context of the prompt is a critical battleground during jailbreak attempts.

* **Peircean investigative reading:** The heatmaps are an *index* of the model's internal state, pointing directly to a physical change (attention score) caused by the jailbreak stimulus. They are also a *symbol* representing a complex computational process. The pattern suggests that successful jailbreaking may not require a complete overhaul of the model's processing, but rather a subtle, targeted modulation of its existing attention patterns, particularly in early processing stages. This has implications for detection and defense strategies, which could focus on monitoring for these characteristic attention shifts.
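One way to quantify the "concentration of change in low-index tokens" noted above is to compare the mean absolute difference inside an early-token block with the mean over the whole map. A rough sketch, assuming the difference map is a square list-of-lists; `mean_abs_change`, the cutoff, and the toy matrix are illustrative choices and not values from the figure.

```python
from typing import List, Optional


def mean_abs_change(diff: List[List[float]], cutoff: Optional[int] = None) -> float:
    """Mean |difference| over the block where query and key indices are < cutoff.

    With cutoff=None the whole (square) map is used.
    """
    n = len(diff) if cutoff is None else cutoff
    if n <= 0 or n > len(diff):
        raise ValueError("cutoff must be between 1 and the map size")
    cells = [abs(diff[q][k]) for q in range(n) for k in range(n)]
    return sum(cells) / len(cells)


# Hypothetical 4x4 difference map with change concentrated at low indices.
diff = [
    [4.0, 2.0, 0.0, 0.0],
    [2.0, 2.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
early = mean_abs_change(diff, cutoff=2)
overall = mean_abs_change(diff)
```

A ratio of `early` to `overall` well above 1 would be consistent with change concentrated in the early-token region. Any threshold for treating it as a detection signal would have to be chosen empirically.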

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap Comparison: Model Layer Vulnerability Analysis

### Overview

The image presents a comparative analysis of three language models (GPT-JT-6B, LLaMA-3.1-8B, Mistral-7B) at different layers (7, 4, and 18, respectively) using three heatmaps per model: Benign, Jailbreak, and Difference. Each heatmap visualizes token interaction patterns through color gradients, and all panels share a consistent layout.

### Components/Axes

1. **Models/Layers**:

   - Top row: GPT-JT-6B - Layer 7

   - Middle row: LLaMA-3.1-8B - Layer 4

   - Bottom row: Mistral-7B - Layer 18

2. **Axes**:

   - X-axis: Key Token (0-448 range)

   - Y-axis: Query Token (0-448 range)

   - Color scales:

     - Benign/Jailbreak: -10 (dark purple) to 0 (yellow)

     - Difference: -4 (blue) to 4 (red)

3. **Legend Placement**:

   - Right-aligned color bars with numerical ranges

   - Spatial consistency across all panels

### Detailed Analysis

1. **GPT-JT-6B - Layer 7**:

   - Benign: Dark purple gradient (values -10 to -6)

   - Jailbreak: Green gradient (values -8 to -2)

   - Difference: Red gradient (values 2 to 4)

   - Key tokens: 64-448 show strongest differences

2. **LLaMA-3.1-8B - Layer 4**:

   - Benign: Purple gradient (values -10 to -4)

   - Jailbreak: Teal gradient (values -8 to -4)

   - Difference: Mixed red/blue (values -3 to 3)

   - Notable: 192-384 key tokens show highest variability

3. **Mistral-7B - Layer 18**:

   - Benign: Light green gradient (values -5 to -1)

   - Jailbreak: Dark green gradient (values -9 to -5)

   - Difference: Blue gradient (values -4 to 0)

   - Key observation: Uniform negative differences across all tokens

### Key Observations

1. **Vulnerability Patterns**:

   - GPT-JT-6B Layer 7 shows highest jailbreak susceptibility (red difference gradient)

   - Mistral-7B Layer 18 demonstrates strongest resistance (blue difference gradient)

   - LLaMA-3.1-8B Layer 4 exhibits mixed vulnerability (bipolar difference values)

2. **Token Interaction**:

   - All models show diagonal patterns in Benign/Jailbreak heatmaps

   - Difference heatmaps reveal model-specific interaction shifts:

     - GPT-JT: Consistent positive differences (security vulnerability)

     - LLaMA: Mixed positive/negative differences (context-dependent vulnerability)

     - Mistral: Consistent negative differences (resilience)
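The three labels used above (consistently positive, mixed, consistently negative) amount to a sign summary of each Difference map. A small sketch, assuming a difference map stored as a list-of-lists; the function name `sign_profile`, the `tolerance` parameter, and the toy matrices are illustrative. It only describes the sign pattern and makes no claim about vulnerability or resilience.

```python
from typing import List


def sign_profile(diff: List[List[float]], tolerance: float = 0.0) -> str:
    """Label a difference map as 'positive', 'negative', 'mixed', or 'flat'."""
    has_pos = any(v > tolerance for row in diff for v in row)
    has_neg = any(v < -tolerance for row in diff for v in row)
    if has_pos and has_neg:
        return "mixed"
    if has_pos:
        return "positive"
    if has_neg:
        return "negative"
    return "flat"


# Hypothetical maps, one per label (values are not read off the figure).
labels = [
    sign_profile([[2.0, 3.5], [4.0, 2.5]]),
    sign_profile([[-3.0, 1.0], [2.0, -0.5]]),
    sign_profile([[-4.0, -1.0], [0.0, -2.0]]),
]
```

Raising `tolerance` treats small-magnitude cells as unchanged, which keeps noise around zero from turning a one-sided map into a "mixed" one.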

### Interpretation

The data suggests significant architectural differences in how these models handle adversarial inputs:

1. **GPT-JT-6B Layer 7** appears most vulnerable to jailbreaking, with consistent positive differences indicating predictable token manipulation patterns.

2. **Mistral-7B Layer 18** shows architectural robustness, with uniform negative differences suggesting effective token interaction safeguards.

3. **LLaMA-3.1-8B Layer 4** demonstrates context-dependent vulnerabilities, with mixed difference values indicating potential for both exploitation and mitigation through input framing.

The consistent diagonal patterns across Benign/Jailbreak heatmaps suggest shared architectural constraints in token processing, while the Difference heatmaps reveal critical layer-specific security characteristics. These findings highlight the importance of layer-specific security considerations in model deployment.
