## Bar Chart Grid: Causal vs. Non-Causal Error Analysis

### Overview

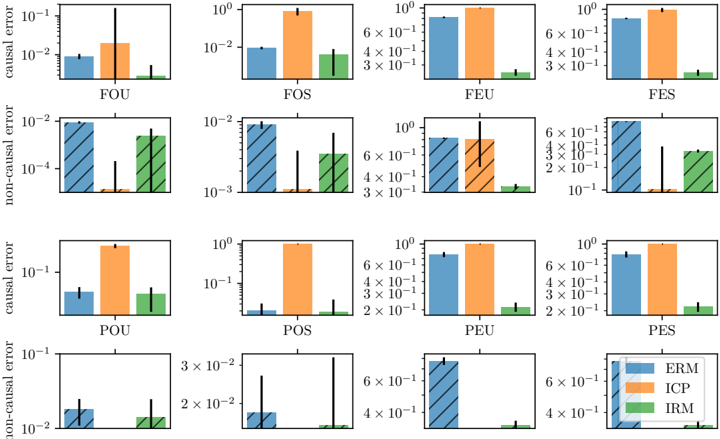

The image shows a 4×4 grid of bar charts comparing three machine learning methods (ERM, ICP, IRM) across eight experimental conditions. The rows alternate between "causal error" (rows 1 and 3) and "non-causal error" (rows 2 and 4). Each chart is labeled with a three-letter condition code (e.g., FOU, FOS); rows 1-2 cover the F-series codes and rows 3-4 the P-series codes. All y-axes use logarithmic scales. A single legend is located in the bottom-right corner of the figure.

### Components/Axes

* **Legend:** Positioned in the bottom-right corner of the grid. It defines three bar colors:
    * **Blue:** ERM
    * **Orange:** ICP
    * **Green:** IRM

* **Y-Axis Labels:**
    * Rows 1 & 3: "causal error"
    * Rows 2 & 4: "non-causal error"

* **Y-Axis Scales:** All charts use a logarithmic scale. The specific range varies per chart (e.g., from 10⁻⁴ to 10⁻², or 10⁻² to 10⁰).

* **X-Axis Labels (Condition Codes):** Each chart has a three-letter code below its x-axis. The same four codes appear in both rows of a series, so the 16 charts cover eight distinct conditions:
    * **Rows 1 & 2:** FOU, FOS, FEU, FES
    * **Rows 3 & 4:** POU, POS, PEU, PES

* **Bar Styles:**
    * **Solid Bars:** Used for "causal error" charts (Rows 1 & 3).
    * **Hatched (Diagonal Lines) Bars:** Used for "non-causal error" charts (Rows 2 & 4).

### Detailed Analysis

**Row 1 (Causal Error - F-series):**

* **FOU:** ERM ≈ 10⁻², ICP ≈ 2×10⁻² (higher), IRM ≈ 10⁻³ (lowest).

* **FOS:** ERM ≈ 10⁻², ICP ≈ 10⁰ (much higher), IRM ≈ 10⁻² (similar to ERM).

* **FEU:** ERM ≈ 10⁰, ICP ≈ 10⁰ (similar), IRM ≈ 3×10⁻¹ (lower).

* **FES:** ERM ≈ 10⁰, ICP ≈ 10⁰ (similar), IRM ≈ 3×10⁻¹ (lower).

**Row 2 (Non-Causal Error - F-series, Hatched Bars):**

* **FOU:** ERM ≈ 10⁻², ICP ≈ 10⁻⁴ (very low), IRM ≈ 10⁻³ (low).

* **FOS:** ERM ≈ 10⁻², ICP ≈ 10⁻³ (low), IRM ≈ 10⁻³ (low).

* **FEU:** ERM ≈ 10⁰, ICP ≈ 10⁰ (similar), IRM ≈ 10⁻¹ (lower).

* **FES:** ERM ≈ 10⁰, ICP ≈ 10⁻¹ (lower), IRM ≈ 10⁻¹ (similar to ICP).

**Row 3 (Causal Error - P-series):**

* **POU:** ERM ≈ 5×10⁻², ICP ≈ 10⁰ (much higher), IRM ≈ 5×10⁻² (similar to ERM).

* **POS:** ERM ≈ 10⁻¹, ICP ≈ 10⁰ (higher), IRM ≈ 10⁻¹ (similar to ERM).

* **PEU:** ERM ≈ 10⁰, ICP ≈ 10⁰ (similar), IRM ≈ 2×10⁻¹ (lower).

* **PES:** ERM ≈ 10⁰, ICP ≈ 10⁰ (similar), IRM ≈ 2×10⁻¹ (lower).

**Row 4 (Non-Causal Error - P-series, Hatched Bars):**

* **POU:** ERM ≈ 10⁻², ICP ≈ 10⁻² (similar), IRM ≈ 10⁻² (similar).

* **POS:** ERM ≈ 10⁻², ICP ≈ 10⁻³ (very low), IRM ≈ 10⁻³ (very low).

* **PEU:** ERM ≈ 10⁰, ICP ≈ 10⁻¹ (lower), IRM ≈ 10⁻¹ (lower).

* **PES:** ERM ≈ 10⁰, ICP ≈ 10⁻¹ (lower), IRM ≈ 10⁻¹ (lower).
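The readings above can be collected into a small lookup table, which makes it easier to check statements about which method is lowest in a given chart. The following is a minimal sketch: the numbers are the approximate visual estimates listed in this section, not exact data, and the names `READINGS` and `lowest_methods` are illustrative only.

```python
# Approximate bar heights read off the figure, stored as (ERM, ICP, IRM).
# These are visual estimates from log-scale axes, not exact measurements.
METHODS = ("ERM", "ICP", "IRM")

READINGS = {
    ("causal", "FOU"): (1e-2, 2e-2, 1e-3),
    ("causal", "FOS"): (1e-2, 1e0, 1e-2),
    ("causal", "FEU"): (1e0, 1e0, 3e-1),
    ("causal", "FES"): (1e0, 1e0, 3e-1),
    ("non-causal", "FOU"): (1e-2, 1e-4, 1e-3),
    ("non-causal", "FOS"): (1e-2, 1e-3, 1e-3),
    ("non-causal", "FEU"): (1e0, 1e0, 1e-1),
    ("non-causal", "FES"): (1e0, 1e-1, 1e-1),
    ("causal", "POU"): (5e-2, 1e0, 5e-2),
    ("causal", "POS"): (1e-1, 1e0, 1e-1),
    ("causal", "PEU"): (1e0, 1e0, 2e-1),
    ("causal", "PES"): (1e0, 1e0, 2e-1),
    ("non-causal", "POU"): (1e-2, 1e-2, 1e-2),
    ("non-causal", "POS"): (1e-2, 1e-3, 1e-3),
    ("non-causal", "PEU"): (1e0, 1e-1, 1e-1),
    ("non-causal", "PES"): (1e0, 1e-1, 1e-1),
}


def lowest_methods(metric, code):
    """Return the method(s) with the smallest reading in one chart, keeping ties."""
    values = READINGS[(metric, code)]
    smallest = min(values)
    return [name for name, value in zip(METHODS, values) if value == smallest]


if __name__ == "__main__":
    for metric, code in READINGS:
        winners = ", ".join(lowest_methods(metric, code))
        print(f"{metric:>10} {code}: lowest = {winners}")
```

Because the readings are rounded to roughly one significant figure, ties reported here mean "visually indistinguishable on the chart", not exact equality in the underlying results.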

### Key Observations

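1. **Performance Disparity:** ICP (orange) has the highest "causal error" in every chart, either outright or tied with ERM. Its gap over ERM ranges from about 2× (FOU) through one order of magnitude (POS, POU) to two orders of magnitude (FOS). Its "non-causal error" is at or below ERM's in every chart.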

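2. **IRM Consistency:** IRM (green) matches or beats ERM on "causal error" in every chart, and its "non-causal error" is likewise at or below ERM's. In the FEU, FES, PEU, and PES conditions its errors are still sizeable in absolute terms (≈ 10⁻¹ to 3×10⁻¹).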

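3. **Error Type Contrast:** For a given condition, the "non-causal error" values are often lower than the "causal error" values. The contrast is clearest for ICP (e.g., FOU: ≈ 2×10⁻² causal vs. ≈ 10⁻⁴ non-causal; FOS: ≈ 10⁰ vs. ≈ 10⁻³). For IRM the gap is smaller and sometimes absent (FOU: ≈ 10⁻³ on both), and for ERM the two readings are roughly equal in most conditions.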

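4. **F-series vs. P-series:** The pattern for the "F" conditions (Rows 1-2) differs from the "P" conditions (Rows 3-4). In the P-series causal error charts (Row 3), ICP's error is consistently high (~10⁰), whereas in the F-series it is only ≈ 2×10⁻² in FOU. ERM and IRM are lower than ICP and similar to each other in POU and POS; in PEU and PES, ERM rises to ~10⁰ alongside ICP and only IRM stays lower (≈ 2×10⁻¹).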

5. **Scale Variance:** The y-axis ranges differ, indicating varying magnitudes of error across conditions. For example, FOS causal error spans 10⁻² to 10⁰, while FOU non-causal error spans 10⁻⁴ to 10⁻².
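On logarithmic axes, a gap between two bars is most naturally expressed in orders of magnitude, i.e. the base-10 logarithm of their ratio. The sketch below applies that to the ICP and ERM causal-error readings listed in this description. The values are approximate visual estimates, and the names `CAUSAL_ERM_ICP` and `decades_apart` are illustrative only.

```python
import math

# Approximate causal-error readings per condition, stored as (ERM, ICP).
# Visual estimates taken from the figure description, not exact data.
CAUSAL_ERM_ICP = {
    "FOU": (1e-2, 2e-2),
    "FOS": (1e-2, 1e0),
    "FEU": (1e0, 1e0),
    "FES": (1e0, 1e0),
    "POU": (5e-2, 1e0),
    "POS": (1e-1, 1e0),
    "PEU": (1e0, 1e0),
    "PES": (1e0, 1e0),
}


def decades_apart(a, b):
    """Gap between two positive values in orders of magnitude: log10(a / b)."""
    return math.log10(a / b)


# Positive values mean ICP's causal error is above ERM's.
gaps = {
    code: decades_apart(icp, erm)
    for code, (erm, icp) in CAUSAL_ERM_ICP.items()
}

if __name__ == "__main__":
    for code, gap in gaps.items():
        print(f"{code}: ICP is {gap:.2f} orders of magnitude above ERM")
```

With these readings the largest gap is in FOS (about two orders of magnitude), followed by POU (about 1.3) and POS (about one), while the four E-conditions show no visible gap.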

### Interpretation

This grid likely presents results from a machine learning study evaluating how robust ERM, ICP, and IRM are to distributional shifts or confounding factors. The figure itself does not define the three-letter codes. Each letter position takes one of two values (F/P, O/E, U/S), which yields exactly the eight codes shown and suggests a 2×2×2 design over three binary experimental factors. Expansions such as **F**eature/**O**utcome/**U**nconfounded or **P**redictive/**S**purious correlations are guesses that the image alone cannot confirm.
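One way to examine the structure of the condition codes is to treat each letter position as a two-level factor and enumerate all combinations. This is a sketch under the assumption that the positions are independent binary factors; the figure does not say what the letters mean, so the factors are identified only by position, and the names `POSITION_LETTERS`, `all_codes`, and `split_code` are illustrative.

```python
from itertools import product

# Letters observed in each position of the condition codes in the figure.
# Their meaning is not stated in the figure, so they are left unnamed here.
POSITION_LETTERS = ("FP", "OE", "US")

# Codes as they appear in the figure, rows 1-2 first, then rows 3-4.
FIGURE_CODES = ["FOU", "FOS", "FEU", "FES", "POU", "POS", "PEU", "PES"]


def all_codes(position_letters=POSITION_LETTERS):
    """Enumerate every combination of one letter per position."""
    return ["".join(letters) for letters in product(*position_letters)]


def split_code(code):
    """Split a three-letter code into its positional factor levels."""
    first, second, third = code
    return {"position_1": first, "position_2": second, "position_3": third}


if __name__ == "__main__":
    generated = all_codes()
    print("generated:", generated)
    print("matches figure:", generated == FIGURE_CODES)
    print("PES ->", split_code("PES"))
```

The enumeration reproduces the eight codes in the same order they appear in the figure, which is consistent with a full factorial layout but does not by itself reveal what each factor represents.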

The data suggests a key trade-off:

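* **ICP** appears to suffer from high **causal error** (misestimating true causal relationships) while achieving low **non-causal error**. The figure does not define either metric, so the reading depends on what they measure: if non-causal error reflects fit to surface-level patterns, the combination may indicate that ICP is overfitting to spurious, non-causal signals in the data.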

* **IRM** demonstrates a more balanced profile: its causal error is the lowest or tied for lowest in every chart, and its non-causal error stays at or below ERM's. This suggests it is more successful at isolating invariant, causal mechanisms.

* **ERM** serves as a baseline. Its causal error falls between IRM's and ICP's or ties with one of them, while its non-causal error is the highest or tied for highest in every chart.

The stark difference between causal and non-causal error for methods like ICP highlights the danger of evaluating models solely on predictive accuracy (non-causal error) without testing for causal understanding. The "P-series" conditions seem particularly challenging for ICP's causal estimation. The overall message underscores the importance of methods like IRM that explicitly target invariant feature learning for reliable causal inference.