## Log-Log Scatter Plot with Linear Fits: Gradient Updates vs. Dimension

### Overview

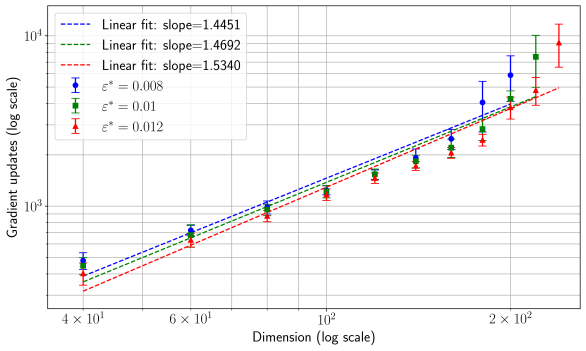

The image is a log-log scatter plot with error bars and overlaid linear-fit lines. It shows how the number of gradient updates required (y-axis) depends on the problem dimension (x-axis) for three values of a parameter denoted ε* (epsilon star). The points for each series fall close to a straight line on the log-log axes, which is consistent with a power-law scaling relationship.

### Components/Axes

* **Chart Type:** Log-log scatter plot with linear fits.

* **X-Axis:**

    * **Label:** "Dimension (log scale)"

    * **Scale:** Logarithmic.

    * **Major Tick Markers:** `4 × 10¹`, `6 × 10¹`, `10²`, `2 × 10²`. These correspond to the numerical values 40, 60, 100, and 200.

* **Y-Axis:**

    * **Label:** "Gradient updates (log scale)"

    * **Scale:** Logarithmic.

    * **Major Tick Markers:** `10³`, `10⁴`. These correspond to the numerical values 1000 and 10,000.

* **Legend (Position: Top-Left Corner):**

    * **Linear Fit Entries (Dashed Lines):**

        * Blue dashed line: "Linear fit: slope=1.4451"

        * Green dashed line: "Linear fit: slope=1.4692"

        * Red dashed line: "Linear fit: slope=1.5340"

    * **Data Series Entries (Points with Error Bars):**

        * Blue circle marker: "ε* = 0.008"

        * Green square marker: "ε* = 0.01"

        * Red triangle marker: "ε* = 0.012"

* **Grid:** A light gray grid is present, aligned with the major tick marks on both axes.

### Detailed Analysis

**Data Series and Trends:**

1. **Blue Series (ε* = 0.008):**

    * **Visual Trend:** The data points (blue circles) follow a clear upward linear trend on the log-log plot. The associated blue dashed linear fit line has a slope of 1.4451.

    * **Data Points (Approximate from visual inspection):**

        * At Dimension ~40: Gradient updates ~300

        * At Dimension ~60: Gradient updates ~600

        * At Dimension ~100: Gradient updates ~1,200

        * At Dimension ~200: Gradient updates ~4,000

    * **Error Bars:** The vertical error bars (representing uncertainty or variance) are relatively small for lower dimensions and increase moderately with dimension.

2. **Green Series (ε* = 0.01):**

    * **Visual Trend:** The data points (green squares) also follow a strong upward linear trend, slightly steeper than the blue series. The green dashed linear fit line has a slope of 1.4692.

    * **Data Points (Approximate):**

        * At Dimension ~40: Gradient updates ~350

        * At Dimension ~60: Gradient updates ~700

        * At Dimension ~100: Gradient updates ~1,500

        * At Dimension ~200: Gradient updates ~5,500

    * **Error Bars:** Error bars are larger than those for the blue series at corresponding dimensions, indicating greater variance.

3. **Red Series (ε* = 0.012):**

    * **Visual Trend:** The data points (red triangles) show the steepest upward linear trend of the three series. The red dashed linear fit line has the highest slope of 1.5340.

    * **Data Points (Approximate):**

        * At Dimension ~40: Gradient updates ~400

        * At Dimension ~60: Gradient updates ~800

        * At Dimension ~100: Gradient updates ~1,800

        * At Dimension ~200: Gradient updates ~7,000

    * **Error Bars:** This series exhibits the largest error bars, which grow significantly with dimension, suggesting the highest variability in the number of updates required.

**Linear Fits:**

All three linear fits (dashed lines) align well with their respective data series, which is consistent with a power-law relationship: `Gradient updates ∝ (Dimension)^slope`, where the slope of each line on the log-log axes is the exponent. The slope increases with ε*.
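The slope of such a fit can be checked with an ordinary least-squares regression on the logarithms of both axes. The sketch below is a minimal illustration, not the method used to produce the chart: it uses only the approximate points read off the plot above, and the helper name `loglog_slope` is hypothetical.

```python
import math

# Approximate values read off the chart by eye (not the underlying data).
DIMENSIONS = [40, 60, 100, 200]
UPDATES = {
    0.008: [300, 600, 1200, 4000],
    0.010: [350, 700, 1500, 5500],
    0.012: [400, 800, 1800, 7000],
}
# Slopes reported in the chart legend, for comparison.
LEGEND_SLOPES = {0.008: 1.4451, 0.010: 1.4692, 0.012: 1.5340}


def loglog_slope(xs, ys):
    """Least-squares slope of log(y) against log(x) (hypothetical helper)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    mean_x = sum(lx) / len(lx)
    mean_y = sum(ly) / len(ly)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(lx, ly))
    sxx = sum((a - mean_x) ** 2 for a in lx)
    return sxy / sxx


fitted = {eps: loglog_slope(DIMENSIONS, ys) for eps, ys in UPDATES.items()}
for eps, slope in fitted.items():
    print(f"eps*={eps}: fitted slope {slope:.2f}, legend slope {LEGEND_SLOPES[eps]}")
```

With these readings the fitted slopes come out at roughly 1.6 to 1.8, somewhat above the legend values of 1.45 to 1.53, while preserving the same ordering across ε*. The gap most likely reflects how imprecise it is to read values off a log-scale plot by eye.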

### Key Observations

1. **Consistent Scaling Law:** All three data series exhibit a linear relationship on the log-log plot, indicating a power-law scaling between problem dimension and computational cost (gradient updates).

2. **Slope Increases with ε*:** The slope of the linear fit increases from 1.4451 (ε*=0.008) through 1.4692 (ε*=0.01) to 1.5340 (ε*=0.012). Because every slope exceeds 1, the required gradient updates grow *super-linearly* with dimension, and the exponent is somewhat larger for larger values of ε*.

3. **Variance Increases with ε* and Dimension:** The size of the error bars increases both with the parameter ε* and with the dimension. The red series (ε*=0.012) at the highest dimension (200) shows the largest uncertainty.

4. **Absolute Values:** For any given dimension, a larger ε* value results in a higher number of gradient updates. For example, at dimension 200, the updates range from ~4,000 (ε*=0.008) to ~7,000 (ε*=0.012).
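The link between a straight line on log-log axes and a power law, and the resulting cost of doubling the dimension, can be written out explicitly. Here $N$ denotes the number of gradient updates, $d$ the dimension, $\alpha$ the fitted slope, and $C$ an unknown prefactor; these symbols are introduced for illustration and do not appear on the chart.

$$
\log N = \alpha \log d + \log C
\quad\Longleftrightarrow\quad
N = C\, d^{\alpha},
\qquad
\frac{N(2d)}{N(d)} = 2^{\alpha}.
$$

Substituting the three legend slopes gives:

$$
2^{1.4451} \approx 2.72, \qquad 2^{1.4692} \approx 2.77, \qquad 2^{1.5340} \approx 2.90.
$$

For comparison, a linear relationship ($\alpha = 1$) would give a factor of exactly 2.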

### Interpretation

This chart likely comes from the field of optimization or machine learning, analyzing the convergence rate of an algorithm (e.g., for training a model or solving a high-dimensional problem).

* **What the data suggests:** The number of iterations (gradient updates) needed for the algorithm to converge scales as a power law with the problem's dimensionality. The exponent of this power law (the slope, about 1.45 to 1.53 here) is greater than 1, so the cost grows faster than the dimension itself.

* **Role of ε*:** The chart does not define ε*; it may be a tolerance- or step-size-related parameter. A larger ε* (0.012 vs. 0.008) is associated with:

    1. **More updates needed** (higher y-values). A looser stopping tolerance would usually require fewer updates, so this trend suggests that ε* is not a plain convergence tolerance; its exact role cannot be determined from the chart alone.

    2. **A steeper scaling slope**, meaning the penalty for increasing dimension is more severe.

    3. **Greater variability** in the number of updates required, as shown by the larger error bars. This could imply that with a larger ε*, the algorithm's performance becomes more sensitive to the specific instance of the problem at a given dimension.

* **Practical Implication:** The fitted lines give a quantitative model for estimating computational cost within the plotted range (dimensions of roughly 40 to 200). Given a problem's dimension and a value of ε*, one can estimate the expected number of gradient updates and the associated uncertainty. The super-linear scaling (slope > 1) matters for scalability: with slopes of about 1.45 to 1.53, doubling the dimension multiplies the required updates by roughly 2.7 to 2.9 rather than 2.
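One way to turn a fitted slope into a rough cost estimate is to scale from a known reference point. The sketch below assumes the power law holds exactly between the reference and target dimensions. `estimate_updates` is a hypothetical helper, and the reference value (about 1,200 updates at dimension 100 for ε* = 0.008) is one of the approximate readings listed earlier, not measured data.

```python
def estimate_updates(ref_dim, ref_updates, slope, target_dim):
    """Scale a reference cost by (target_dim / ref_dim) ** slope.

    Hypothetical helper: assumes updates = C * dim ** slope holds exactly.
    """
    if ref_dim <= 0 or target_dim <= 0:
        raise ValueError("dimensions must be positive")
    return ref_updates * (target_dim / ref_dim) ** slope


# Blue series (eps* = 0.008): approximate reading at dimension 100, legend slope.
REF_DIM, REF_UPDATES, SLOPE = 100, 1200, 1.4451

at_200 = estimate_updates(REF_DIM, REF_UPDATES, SLOPE, 200)
at_40 = estimate_updates(REF_DIM, REF_UPDATES, SLOPE, 40)
print(f"dimension 200: about {at_200:.0f} updates")
print(f"dimension 40: about {at_40:.0f} updates")
```

The estimates (roughly 3,300 updates at dimension 200 and 320 at dimension 40) land in the same range as the values read off the chart (~4,000 and ~300). Extrapolating beyond the plotted dimensions would rest on the untested assumption that the same exponent continues to hold.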