## Line Chart: LM Loss vs. MoBA Block Segmentation Settings

### Overview

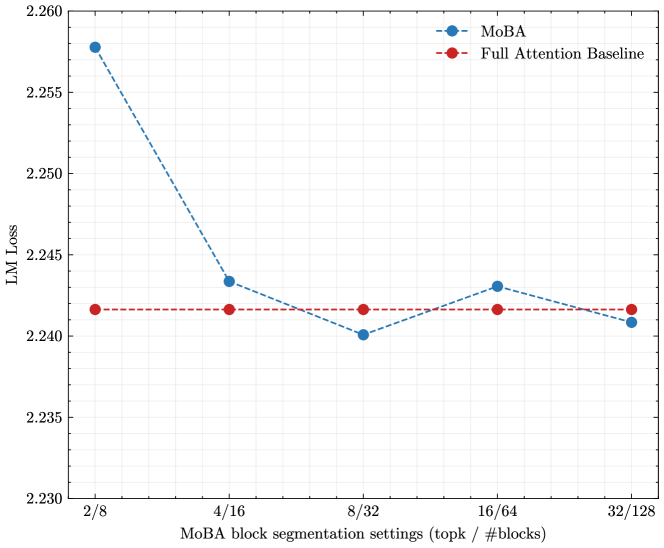

The image is a line chart comparing the language model (LM) loss of a MoBA model with that of a Full Attention Baseline across five MoBA block segmentation settings. The x-axis shows the segmentation setting (topk / #blocks), and the y-axis shows the LM loss.

### Components/Axes

* **Title:** None shown; the chart plots LM Loss against MoBA block segmentation settings.

* **X-axis:** "MoBA block segmentation settings (topk / #blocks)"

  * X-axis markers: 2/8, 4/16, 8/32, 16/64, 32/128

* **Y-axis:** "LM Loss"

  * Y-axis markers: 2.230, 2.235, 2.240, 2.245, 2.250, 2.255, 2.260

* **Legend:** Located in the top-right corner.

  * Blue line with circular markers: "MoBA"

  * Red line with circular markers: "Full Attention Baseline"

### Detailed Analysis

**MoBA (Blue Line):**

The MoBA line starts high, decreases, then fluctuates slightly.

* 2/8: Approximately 2.258

* 4/16: Approximately 2.243

* 8/32: Approximately 2.240

* 16/64: Approximately 2.243

* 32/128: Approximately 2.241

**Full Attention Baseline (Red Line):**

The Full Attention Baseline line is relatively flat.

* 2/8: Approximately 2.242

* 4/16: Approximately 2.242

* 8/32: Approximately 2.242

* 16/64: Approximately 2.242

* 32/128: Approximately 2.241

### Key Observations

* The MoBA model's LM Loss drops by about 0.018 between the 2/8 and 8/32 settings (from approximately 2.258 to 2.240), with most of the drop occurring between 2/8 and 4/16.

* The Full Attention Baseline maintains a relatively constant LM Loss across all segmentation settings.

* From the 4/16 setting onward, the LM Loss for MoBA stays within about 0.002 of the Full Attention Baseline; at 32/128 the two readings are approximately equal (about 2.241).
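
The gap between the two curves can be tabulated directly from the approximate readings listed under Detailed Analysis. The following is a minimal sketch; the values are visual estimates from the chart rather than exact measurements, and the variable names are illustrative.

```python
# Approximate values read off the chart (visual estimates, not exact data).
settings = ["2/8", "4/16", "8/32", "16/64", "32/128"]
moba = [2.258, 2.243, 2.240, 2.243, 2.241]
baseline = [2.242, 2.242, 2.242, 2.242, 2.241]

# Gap = MoBA loss minus baseline loss; a positive gap means MoBA is worse.
gaps = {s: round(m - b, 3) for s, m, b in zip(settings, moba, baseline)}

for s, g in gaps.items():
    print(f"{s:>6}: {g:+.3f}")

# Largest absolute gap once the coarsest setting (2/8) is excluded.
max_gap_after_first = max(abs(g) for s, g in gaps.items() if s != "2/8")
print(f"max |gap| beyond 2/8: {max_gap_after_first:.3f}")
```

Because the readings are only precise to about three decimal places, gaps of 0.001 to 0.002 are within reading error and should not be over-interpreted.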

### Interpretation

The chart suggests that MoBA's performance, as measured by LM Loss, is sensitive to block segmentation granularity. Every setting selects the same fraction of blocks (topk / #blocks = 1/4), so what changes along the x-axis is how finely the context is divided, not how much of it is attended to. At the coarsest setting (2/8), the loss is highest, at about 2.258, indicating poorer performance. With finer segmentation the loss falls to about 2.240 at 8/32, then fluctuates within roughly 0.003 of that value. The Full Attention Baseline is not affected by the MoBA block segmentation settings and serves as a near-constant reference at about 2.242. From 4/16 onward MoBA's loss stays within about 0.002 of the baseline, which suggests that with sufficiently fine-grained blocks, MoBA can achieve comparable performance.
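
Each x-axis label encodes two quantities, and unpacking them helps separate what varies from what stays fixed. The sketch below parses the labels and computes the fraction of blocks selected per setting. The context length of 8192 tokens is a hypothetical value chosen only to illustrate block size, and it assumes blocks partition the context evenly; neither detail comes from the chart.

```python
from fractions import Fraction

settings = ["2/8", "4/16", "8/32", "16/64", "32/128"]

# Hypothetical context length, used only to illustrate block size.
CONTEXT_LEN = 8192


def parse(setting):
    """Split a 'topk/#blocks' label into two integers."""
    topk, n_blocks = (int(part) for part in setting.split("/"))
    return topk, n_blocks


rows = []
for s in settings:
    topk, n_blocks = parse(s)
    selected = Fraction(topk, n_blocks)   # fraction of blocks selected
    block_size = CONTEXT_LEN // n_blocks  # assumes an even partition
    rows.append((s, selected, block_size))
    print(f"{s:>6}: selected={selected}, block_size={block_size}")
```

Under these assumptions the selected fraction is 1/4 for every setting, while the block size shrinks as the number of blocks grows, so the x-axis effectively sweeps granularity at a fixed selection ratio.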