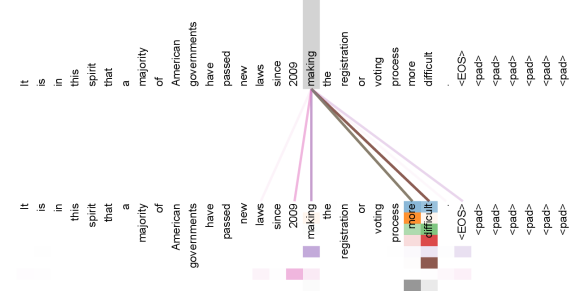

## Diagram: Attention Visualization for a Sentence in a Neural Network

### Overview

The image is a visualization of attention weights from a neural network model, likely a transformer, analyzing an English sentence. It displays how the model's attention mechanism connects the word "difficult" to other words in the same sentence, using colored lines to represent different attention heads or layers. The diagram is structured with two parallel representations of the sentence and a legend at the bottom.

### Components/Axes

* **Primary Text Blocks:** Two identical blocks of English text are presented.

    * **Left Block:** Text is arranged horizontally in a single line.

    * **Right Block:** The same text is arranged vertically, with each word or token on a new line.

* **Text Content (Identical in both blocks):**

    `It is in this spirit that a majority of American governments have passed new laws since 2009 making the registration or voting process more difficult. <EOS> <pad> <pad> <pad> <pad>`

    * `<EOS>`: End-of-sequence token.

    * `<pad>`: Padding tokens (four instances).

* **Connection Lines:** Multiple colored lines originate from the word "difficult" in the left horizontal block and connect to specific words in the right vertical block.

* **Legend:** Located at the bottom center of the image. It consists of four colored squares (from left to right: light purple, pink, brown, green) which correspond to the colors of the connection lines. No textual labels are provided for the legend colors.

### Detailed Analysis

**Spatial Grounding & Connection Mapping:**

The word "difficult" in the left block is the source point for all attention lines. The lines connect to the following words in the right vertical block, listed from top to bottom:

1. **Light Purple Lines:** Connect to the words: `making`, `the`, `registration`, `or`, `voting`, `process`, `more`, `difficult`.

2. **Pink Lines:** Connect to the words: `since`, `2009`, `making`, `the`, `registration`, `or`, `voting`, `process`, `more`, `difficult`.

3. **Brown Lines:** Connect to the words: `laws`, `since`, `2009`, `making`, `the`, `registration`, `or`, `voting`, `process`, `more`, `difficult`.

4. **Green Lines:** Connect to the words: `passed`, `new`, `laws`, `since`, `2009`, `making`, `the`, `registration`, `or`, `voting`, `process`, `more`, `difficult`.
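
The mapping above can be checked mechanically. The following is a minimal sketch that transcribes the four target lists and computes which words every color reaches, and whether each span is contained in the next wider one. The names `PHRASE`, `TARGETS`, `shared_targets`, and `is_nested` are hypothetical and chosen only for illustration; the word lists are copied from the list above, not read from the model.

```python
# Target words per line color, transcribed from the connection mapping.
# Nothing here comes from a real model; it only restates the description.
PHRASE = ["making", "the", "registration", "or", "voting",
          "process", "more", "difficult"]

TARGETS = {
    "light purple": PHRASE,
    "pink": ["since", "2009"] + PHRASE,
    "brown": ["laws", "since", "2009"] + PHRASE,
    "green": ["passed", "new", "laws", "since", "2009"] + PHRASE,
}


def shared_targets(targets):
    """Return the words reached by every color, in sentence order."""
    lists = list(targets.values())
    common = set.intersection(*(set(words) for words in lists))
    widest = max(lists, key=len)  # the widest span fixes the word order
    return [word for word in widest if word in common]


def is_nested(targets):
    """Return True if each span is a suffix of the next wider span."""
    spans = sorted(targets.values(), key=len)
    return all(wide[-len(narrow):] == narrow
               for narrow, wide in zip(spans, spans[1:]))


shared = shared_targets(TARGETS)
nested = is_nested(TARGETS)
print("shared by all colors:", " ".join(shared))
print("spans are nested:", nested)
```

Running this shows that the words common to all four colors are exactly the phrase `making the registration or voting process more difficult`, and that each color's span is a suffix of the next wider one.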

**Trend Verification:**

The lines fan out from the single word "difficult" to itself and to a contiguous run of preceding words. The connections are not random; they trace the sentence structure backwards from the adjective "difficult" through the verb phrase ("making ... more difficult") and the prepositional phrase ("since 2009") to the verb and its object ("passed new laws").

### Key Observations

* **Focal Point:** The word "difficult" is the single source of every attention line, so the diagram shows the attention pattern for this one token only, not for the whole sentence.

* **Hierarchical Attention:** The four colors, presumably distinct attention heads, cover progressively wider spans of the sentence:

    * The **light purple** lines stay within the verb phrase that contains "difficult" ("making ... more difficult").

    * The **pink** lines extend to the temporal phrase ("since 2009").

    * The **brown** lines extend further to the object of "passed" ("laws").

    * The **green** lines have the broadest scope, reaching the verb "passed" and its full object phrase ("new laws").

* **Redundancy:** All four colors converge on the sequence `making the registration or voting process more difficult`, which suggests that this phrase carries most of the context the model uses for the word "difficult".

* **Padding Tokens:** The `<pad>` tokens at the end of the sequence receive no attention lines, which is expected because they carry no content. The `<EOS>` token does not appear in any of the four target lists either.
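
The description does not say how the underlying model treats padding. One common approach, shown here only as an assumption, is to mask padded positions before the softmax so that they receive exactly zero weight. The sketch below uses plain Python; `masked_softmax`, the token list, and the raw scores are all made up for illustration.

```python
import math


def masked_softmax(scores, mask):
    """Softmax over `scores`, with zero weight wherever `mask` is False."""
    kept = [s for s, keep in zip(scores, mask) if keep]
    if not kept:
        raise ValueError("mask excludes every position")
    peak = max(kept)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) if keep else 0.0
            for s, keep in zip(scores, mask)]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical tail of the sequence and made-up raw attention scores.
tokens = ["more", "difficult", "<EOS>", "<pad>", "<pad>"]
scores = [1.0, 2.0, 0.5, 3.0, 3.0]

# Keep every real token; drop padding regardless of its raw score.
mask = [token != "<pad>" for token in tokens]
weights = masked_softmax(scores, mask)

for token, weight in zip(tokens, weights):
    print(f"{token:>10s}  {weight:.3f}")
```

Even though the padded positions have the highest raw scores in this toy input, they end up with zero weight, and the remaining weights still sum to one. Note that missing lines in the diagram could equally reflect weights that are merely too small to draw, so this is one possible explanation, not a confirmed one.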

### Interpretation

This diagram is a technical visualization from the field of Natural Language Processing (NLP). It demonstrates the **interpretability of a transformer-based model's attention mechanism**.

* **What it Suggests:** The model is attempting to understand the contextual meaning of the word "difficult" by relating it to other parts of the sentence. The attention pattern reveals a logical, syntactically-aware process: to understand *what* is difficult, the model looks at the action causing it ("making"), the specific processes involved ("registration or voting process"), and the historical context ("since 2009", "passed new laws").

* **How Elements Relate:** The left block presents the sentence as a flat sequence and marks the source token. The right block lists the same tokens as possible targets, and each colored line marks a token that "difficult" attends to. The legend is unlabeled, but the four colors most plausibly represent distinct attention heads within the model, each having learned a slightly different pattern of relationships.

* **Notable Insight:** The visualization suggests that the model's attention is not arbitrary. For this token it follows a coherent, human-interpretable path that resembles how a person might analyze the sentence: linking the adjective to the verb that governs it, and then to the broader circumstances described in the sentence. A single example cannot prove it, but the pattern is consistent with the model having learned meaningful syntactic and semantic structure from its training data.