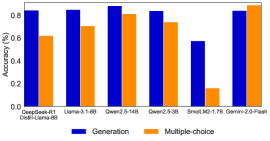

## Bar Chart: Model Accuracy Comparison Across Generation and Multiple-choice Tasks

### Overview

This image displays a bar chart comparing the accuracy of several language models across two distinct task types: "Generation" and "Multiple-choice". Each model is represented by a pair of bars, with blue indicating "Generation" accuracy and orange indicating "Multiple-choice" accuracy. The Y-axis represents accuracy as a percentage.

### Components/Axes

* **Chart Type**: Vertical Bar Chart.

* **Y-axis**:

    * **Title**: "Accuracy (%)"

    * **Scale**: Tick labels are fractions rather than percentages, despite the "(%)" in the axis title. Major tick marks appear at 0.0, 0.2, 0.4, 0.6, and 0.8. The tallest bar extends slightly above 0.8, so the axis itself runs to roughly 0.9.

* **X-axis**:

    * **Labels**: Represents different language models or model configurations. From left to right, these are:

        1. DeepSeek-R1 Distil-Llama-6B

        2. Llama-3.1-8B

        3. Qwen2.5-14B

        4. Qwen2.5-3B

        5. SmolLM2-1.7B

        6. Gemini-2.0-Flash

* **Legend**: Located at the bottom-center of the chart.

    * A blue square icon is labeled "Generation".

    * An orange square icon is labeled "Multiple-choice".

### Detailed Analysis

The chart presents accuracy percentages for six different models across two task types.

1. **DeepSeek-R1 Distil-Llama-6B**:

    * **Trend**: Generation accuracy is notably higher than Multiple-choice accuracy.

    * **Generation (Blue)**: Approximately 0.83 (83%).

    * **Multiple-choice (Orange)**: Approximately 0.62 (62%).

2. **Llama-3.1-8B**:

    * **Trend**: Generation accuracy is higher than Multiple-choice accuracy.

    * **Generation (Blue)**: Approximately 0.84 (84%).

    * **Multiple-choice (Orange)**: Approximately 0.70 (70%).

3. **Qwen2.5-14B**:

    * **Trend**: Generation accuracy is slightly higher than Multiple-choice accuracy. This model shows the highest Generation accuracy among all models.

    * **Generation (Blue)**: Approximately 0.87 (87%).

    * **Multiple-choice (Orange)**: Approximately 0.81 (81%).

4. **Qwen2.5-3B**:

    * **Trend**: Generation accuracy is higher than Multiple-choice accuracy.

    * **Generation (Blue)**: Approximately 0.83 (83%).

    * **Multiple-choice (Orange)**: Approximately 0.73 (73%).

5. **SmolLM2-1.7B**:

    * **Trend**: Both accuracies are significantly lower than those of every other model, and Generation accuracy is substantially higher than Multiple-choice accuracy. This model shows the lowest performance overall.

    * **Generation (Blue)**: Approximately 0.58 (58%).

    * **Multiple-choice (Orange)**: Approximately 0.16 (16%).

6. **Gemini-2.0-Flash**:

    * **Trend**: Multiple-choice accuracy is slightly higher than Generation accuracy, a reversal of the trend seen in every other model. This model shows the highest Multiple-choice accuracy.

    * **Generation (Blue)**: Approximately 0.83 (83%).

    * **Multiple-choice (Orange)**: Approximately 0.85 (85%).
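The per-model gap between the two task types can be worked out directly from the approximate readings above. The following is a minimal sketch, not part of the original figure: the names `ACCURACY`, `gap_in_points`, and `gaps` are illustrative, and the numbers are the estimates listed in this section rather than exact values.

```python
# Approximate values read off the chart, as fractions of 1.0:
# model -> (generation, multiple_choice). These are estimates, not exact data.
ACCURACY = {
    "DeepSeek-R1 Distil-Llama-6B": (0.83, 0.62),
    "Llama-3.1-8B": (0.84, 0.70),
    "Qwen2.5-14B": (0.87, 0.81),
    "Qwen2.5-3B": (0.83, 0.73),
    "SmolLM2-1.7B": (0.58, 0.16),
    "Gemini-2.0-Flash": (0.83, 0.85),
}


def gap_in_points(generation, multiple_choice):
    """Generation minus Multiple-choice, in whole percentage points."""
    return round((generation - multiple_choice) * 100)


gaps = {model: gap_in_points(gen, mc) for model, (gen, mc) in ACCURACY.items()}

# Print the largest Generation advantage first; a negative gap means
# Multiple-choice accuracy is the higher of the two.
for model, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(f"{model:<30} {gap:+d} pts")
```

With these readings the gap ranges from about 42 points in favor of "Generation" (SmolLM2-1.7B) to about 2 points in favor of "Multiple-choice" (Gemini-2.0-Flash).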

### Key Observations

* **Dominant Trend**: For most models (DeepSeek-R1 Distil-Llama-6B, Llama-3.1-8B, Qwen2.5-14B, Qwen2.5-3B, SmolLM2-1.7B), "Generation" tasks yield higher accuracy than "Multiple-choice" tasks.

* **Outlier Performance**: SmolLM2-1.7B exhibits significantly lower accuracy in both tasks compared to all other models, particularly in "Multiple-choice" where its accuracy drops to about 16%.

* **Reversed Trend**: Gemini-2.0-Flash is the only model where "Multiple-choice" accuracy (approx. 85%) surpasses "Generation" accuracy (approx. 83%).

* **Top Performers**:

    * Qwen2.5-14B achieves the highest "Generation" accuracy (approx. 87%).

    * Gemini-2.0-Flash achieves the highest "Multiple-choice" accuracy (approx. 85%).

* **Consistency**: DeepSeek-R1 Distil-Llama-6B, Llama-3.1-8B, Qwen2.5-14B, Qwen2.5-3B, and Gemini-2.0-Flash generally perform well, reaching roughly 70% or higher on both tasks. The one exception is the "Multiple-choice" score of DeepSeek-R1 Distil-Llama-6B (approx. 62%).
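These observations can be checked mechanically against the approximate readings. The sketch below is illustrative only: `READINGS`, `THRESHOLD`, and the other variable names are assumptions introduced here, and the percentages are the estimates from the Detailed Analysis section.

```python
# Approximate chart readings in percent: model -> (generation, multiple_choice).
READINGS = {
    "DeepSeek-R1 Distil-Llama-6B": (83, 62),
    "Llama-3.1-8B": (84, 70),
    "Qwen2.5-14B": (87, 81),
    "Qwen2.5-3B": (83, 73),
    "SmolLM2-1.7B": (58, 16),
    "Gemini-2.0-Flash": (83, 85),
}
THRESHOLD = 70  # the "performs well" cut-off used in the Consistency bullet

# Top performer for each task type.
best_generation = max(READINGS, key=lambda m: READINGS[m][0])
best_multiple_choice = max(READINGS, key=lambda m: READINGS[m][1])

# Models where Multiple-choice beats Generation (the reversed trend).
reversed_models = [m for m, (gen, mc) in READINGS.items() if mc > gen]

# Every (model, task) score that falls below the threshold.
below_threshold = [
    (model, task)
    for model, scores in READINGS.items()
    for task, score in zip(("generation", "multiple_choice"), scores)
    if score < THRESHOLD
]

print("Best Generation:      ", best_generation)
print("Best Multiple-choice: ", best_multiple_choice)
print("Reversed trend:       ", reversed_models)
print("Below threshold:      ", below_threshold)
```

Only three scores fall below the threshold: the "Multiple-choice" score of DeepSeek-R1 Distil-Llama-6B and both scores of SmolLM2-1.7B.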

### Interpretation

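The data suggest that the gap between the two task types varies widely by model, from about 2 points in favor of "Multiple-choice" (Gemini-2.0-Flash) to about 42 points in favor of "Generation" (SmolLM2-1.7B).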

The general trend of "Generation" tasks yielding higher accuracy than "Multiple-choice" tasks for five of the six models could imply that these models are better suited to generative tasks, or that the specific "Multiple-choice" tasks presented are inherently more challenging or require reasoning skills that these models handle less well. The chart alone does not distinguish between these explanations.

The stark underperformance of SmolLM2-1.7B suggests that model size may be a factor: at 1.7B parameters, it is the smallest of the models whose size appears in the label (the others are 3B, 6B, 8B, and 14B). Size alone does not explain the gap, however, since Qwen2.5-3B is less than twice as large yet reaches approximately 83% and 73%. The particularly low "Multiple-choice" score of SmolLM2-1.7B (approx. 16%) suggests a severe limitation in selecting the correct option, possibly due to weaker reasoning or factual recall compared to larger models.
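To compare size with accuracy, the parameter counts can be read from the model labels themselves. This is a rough sketch under stated assumptions: it treats a trailing `<number>B` in the label as the size in billions of parameters, the helper `size_in_billions` is hypothetical, and the accuracies are the approximate chart readings. Gemini-2.0-Flash carries no size in its label, so it is left out of the ordering.

```python
import re

# Approximate chart readings in percent: model -> (generation, multiple_choice).
READINGS = {
    "DeepSeek-R1 Distil-Llama-6B": (83, 62),
    "Llama-3.1-8B": (84, 70),
    "Qwen2.5-14B": (87, 81),
    "Qwen2.5-3B": (83, 73),
    "SmolLM2-1.7B": (58, 16),
    "Gemini-2.0-Flash": (83, 85),
}


def size_in_billions(name):
    """Return the size encoded as a trailing '<number>B' in the label, or None."""
    match = re.search(r"(\d+(?:\.\d+)?)B$", name)
    return float(match.group(1)) if match else None


sizes = {name: size_in_billions(name) for name in READINGS}

# Order the models with a known size from smallest to largest.
sized = sorted(
    (name for name in READINGS if sizes[name] is not None),
    key=lambda name: sizes[name],
)

for name in sized:
    gen, mc = READINGS[name]
    print(f"{sizes[name]:>5.1f}B  gen={gen}%  mc={mc}%  {name}")
```

Listed this way, accuracy does not rise steadily with size: Qwen2.5-3B matches the "Generation" reading of the 6B model and exceeds its "Multiple-choice" reading.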

The performance of Gemini-2.0-Flash is particularly interesting because it bucks the trend, scoring slightly higher on "Multiple-choice" tasks (approx. 85%) than on "Generation" tasks (approx. 83%). This could indicate that Gemini-2.0-Flash possesses strong discriminative capabilities, perhaps excelling at understanding context and selecting the best option from a given set, or that its training data or architecture is geared towards such evaluative tasks. Because the margin is only about two points and the values are read off the chart, the reversal should be treated as tentative. Even so, the model's strong "Multiple-choice" performance, combined with competitive "Generation" accuracy, positions it as a versatile performer.

Overall, the chart provides insights into the strengths and weaknesses of different language models, suggesting that model architecture, size, and potentially training objectives may all influence performance on distinct NLP tasks. For applications requiring high accuracy in "Multiple-choice" scenarios, Gemini-2.0-Flash appears to be a strong candidate, while Qwen2.5-14B leads in "Generation" tasks.