## Heatmap Analysis: Cross-Lingual Attention Patterns

### Overview

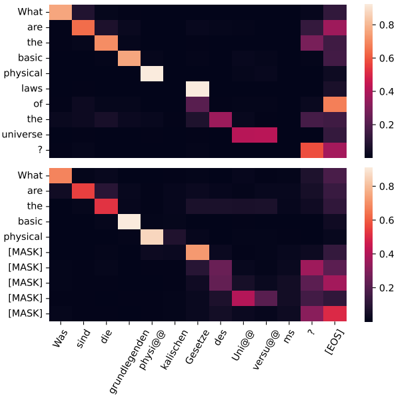

The image displays two vertically stacked heatmaps, likely visualizing attention weights or probability distributions from a neural machine translation or cross-lingual language model. The top heatmap shows attention between the tokens of an English source sentence and the tokens of its German translation. The bottom heatmap uses the same German tokens, but four of the English source tokens are replaced by `[MASK]`, suggesting a masked language modeling or infilling scenario. A single color scale bar on the right side is shared by both heatmaps.

### Components/Axes

**Color Scale (Legend):**

* **Position:** Right side, spanning the vertical height of both heatmaps.

* **Scale:** A vertical gradient bar ranging from dark purple/black (0.0) to bright orange/white (0.8).

* **Labels:** Numerical markers at 0.0, 0.2, 0.4, 0.6, and 0.8.

**Top Heatmap:**

* **Y-Axis (Rows - Source/English):** Labeled with the words of the English sentence: "What", "are", "the", "basic", "physical", "laws", "of", "the", "universe", "?".

* **X-Axis (Columns - Target/German):** Labeled with the words of the German translation: "Wie", "sind", "die", "grundlegenden", "physi@lischen", "Gesetze", "des", "Uni@vers", "mo", "?", `[EOS]`.

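* *Note:* The German words "physikalischen" and "Universums" appear to be split into subword tokens ("physi@lischen", "Uni@vers"). The "@" is probably a subword continuation marker, and the "mo" column that follows "Uni@vers" is presumably the remainder of "Universums"; the labels may be partly garbled in the figure.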

**Bottom Heatmap:**

* **Y-Axis (Rows - Source/Masked):** Labeled with a modified version of the English sentence: "What", "are", "the", "basic", "physical", `[MASK]`, `[MASK]`, `[MASK]`, `[MASK]`, "?".

* **X-Axis (Columns - Target/German):** Identical to the top heatmap: "Wie", "sind", "die", "grundlegenden", "physi@lischen", "Gesetze", "des", "Uni@vers", "mo", "?", `[EOS]`.
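The axis layout described above can be written down as plain data. The following is a minimal sketch, assuming the token labels exactly as read from the figure; the helper name `mask_positions` is hypothetical. It only shows how the bottom heatmap's row labels relate to the top heatmap's, not how the model's input was actually prepared.

```python
# Token labels as read from the figure (subword labels are kept verbatim).
SOURCE_EN = ["What", "are", "the", "basic", "physical",
             "laws", "of", "the", "universe", "?"]
TARGET_DE = ["Wie", "sind", "die", "grundlegenden", "physi@lischen",
             "Gesetze", "des", "Uni@vers", "mo", "?", "[EOS]"]


def mask_positions(tokens, rows, mask_token="[MASK]"):
    """Return a copy of `tokens` with the given 1-based rows replaced by `mask_token`.

    Hypothetical helper, for illustration only.
    """
    rows = set(rows)
    return [mask_token if i in rows else tok
            for i, tok in enumerate(tokens, start=1)]


# Rows 6-9 ("laws", "of", "the", "universe") are masked in the bottom heatmap.
MASKED_EN = mask_positions(SOURCE_EN, rows=range(6, 10))

# Each heatmap is a 10 x 11 grid: one row per English token,
# one column per German token (including [EOS]).
GRID_SHAPE = (len(SOURCE_EN), len(TARGET_DE))
```

Keeping the rows 1-based matches the row and column numbers used in the analysis that follows.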

### Detailed Analysis

**Top Heatmap (Full Sentence Attention):**

* **Trend:** Strong diagonal alignment is visible, indicating a high degree of one-to-one word alignment between the English source and German translation.

* **Key Data Points (peak attention per row; bright orange/white, >0.6, unless a value is given):**

    * "What" (row 1) aligns strongly with "Wie" (col 1).

    * "are" (row 2) aligns strongly with "sind" (col 2).

    * "the" (row 3) aligns strongly with "die" (col 3).

    * "basic" (row 4) aligns strongly with "grundlegenden" (col 4).

    * "physical" (row 5) aligns strongly with "physi@lischen" (col 5).

    * "laws" (row 6) aligns strongly with "Gesetze" (col 6).

    * "of" (row 7) shows moderate-high attention (~0.5) with "des" (col 7).

    * "the" (row 8) shows moderate attention (~0.4) with both "des" (col 7) and "Uni@vers" (col 8).

    * "universe" (row 9) aligns strongly with "Uni@vers" (col 8).

    * "?" (row 10) aligns strongly with "?" (col 10).

* **Lower Attention Areas:** The model shows weaker attention (dark purple, <0.2) between non-corresponding words, as expected in a well-aligned translation.
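Reading off the strongest cell in each row, as done in the list above, amounts to a row-wise argmax. The following is a minimal sketch of that procedure; the function names are hypothetical, and the 3 x 3 matrix uses made-up weights for illustration, not values measured from the figure.

```python
def peak_alignment(attn, row_labels, col_labels):
    """Return one (row_label, col_label, weight) triple per row: the row's largest weight."""
    triples = []
    for label, row in zip(row_labels, attn):
        j = max(range(len(row)), key=lambda k: row[k])  # column index of the row's peak
        triples.append((label, col_labels[j], row[j]))
    return triples


def is_diagonal(attn):
    """True if every row i peaks at column i (a one-to-one, in-order alignment)."""
    return all(max(range(len(row)), key=lambda k: row[k]) == i
               for i, row in enumerate(attn))


# Toy weights (illustrative placeholders, NOT read from the heatmap).
toy_attn = [
    [0.7, 0.2, 0.1],
    [0.1, 0.8, 0.1],
    [0.2, 0.1, 0.7],
]
toy_rows = ["What", "are", "the"]
toy_cols = ["Wie", "sind", "die"]

alignment = peak_alignment(toy_attn, toy_rows, toy_cols)
diagonal = is_diagonal(toy_attn)
```

A strict diagonal check like `is_diagonal` would fail on the full figure, because the German axis has one more column than the English axis has rows and row 9 peaks at column 8; it is only meant to make the idea of "diagonal alignment" concrete.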

**Bottom Heatmap (Masked Sentence Attention):**

* **Trend:** The strong diagonal pattern is disrupted. Attention becomes more diffuse, especially for the rows containing `[MASK]` tokens. The model appears to be using context from the unmasked words ("What", "are", "the", "basic", "physical", "?") and the available German words to infer the masked tokens.

* **Key Data Points & Shifts:**

    * The unmasked words ("What", "are", "the", "basic", "physical", "?") retain strong, focused attention on their German counterparts, similar to the top heatmap.

    * The `[MASK]` tokens (rows 6-9) show broad, distributed attention across multiple German words.

        * **Row 6 (`[MASK]`):** Highest attention (~0.5) is on "Gesetze" (col 6), which corresponds to "laws" in the original sentence.

        * **Row 7 (`[MASK]`):** Highest attention (~0.4) is on "des" (col 7), corresponding to "of the".

        * **Row 8 (`[MASK]`):** Highest attention (~0.5) is on "Uni@vers" (col 8), the German token for "universe". In the original sentence this row held "the", which split its attention between "des" and "Uni@vers".

        * **Row 9 (`[MASK]`):** Shows a notable attention peak (~0.6) on the final "?" (col 10) rather than on "Uni@vers" (col 8), where the original "universe" aligned. This suggests the model is considering the sentence's interrogative nature for this masked position.

    * The `[EOS]` token (col 11) receives some scattered, low-level attention from several `[MASK]` rows.
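One common way to put a number on "focused" versus "diffuse" attention is the Shannon entropy of each row. The sketch below is an assumption about how such a comparison could be made, not something shown in the figure; the two example rows are made-up weights, and rows are renormalized because values read off a heatmap need not sum to 1.

```python
import math


def row_entropy(row):
    """Shannon entropy (in nats) of one attention row, after normalizing it to sum to 1."""
    total = sum(row)
    if total <= 0:
        raise ValueError("row must contain at least one positive weight")
    probs = [w / total for w in row if w > 0]
    return -sum(p * math.log(p) for p in probs)


# Illustrative placeholder rows (NOT values from the figure).
focused_row = [0.90, 0.05, 0.05]   # most weight on one column, like an unmasked word
diffuse_row = [0.40, 0.30, 0.30]   # weight spread out, like a [MASK] row

focused_h = row_entropy(focused_row)
diffuse_h = row_entropy(diffuse_row)
```

Entropy is 0 when all weight sits on a single column and reaches `log(n)` for a uniform row over `n` columns, so higher values for the `[MASK]` rows would correspond to the diffuse pattern described above.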

### Key Observations

1. **Diagonal vs. Diffuse Patterns:** The top heatmap exhibits a classic, strong diagonal alignment pattern typical of word-level translation attention. In the bottom heatmap the diagonal survives for the unmasked rows but breaks down in the `[MASK]` rows, where attention is spread across several German tokens.

2. **Contextual Inference:** The model successfully uses the unmasked context words to anchor its attention. For example, "physical" (row 5) still strongly attends to "physi@lischen" (col 5) in both charts.

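3. **Masked Token Behavior:** The attention for `[MASK]` tokens is not random. In rows 6-8 it peaks at German tokens that the original English words also attended to ("Gesetze", "des", "Uni@vers"). Row 9 is the exception: its peak falls on "?" rather than on "Uni@vers", where "universe" aligned in the top heatmap. Overall this suggests the model can recover much of the masked content from the German side.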

4. **Punctuation Handling:** The question mark "?" maintains a strong, direct alignment in both scenarios, indicating the model correctly identifies and preserves sentence-level punctuation.
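The comparison between the two heatmaps for the masked rows can be tabulated directly from the peaks reported in the Detailed Analysis. This is a small sketch using only those reported row and column numbers (1-based); the function name `peak_preserved` is hypothetical.

```python
# Peak columns per row in the top heatmap, as reported above.
# Row 8 ("the") is listed with two comparable peaks: "des" (col 7) and "Uni@vers" (col 8).
TOP_PEAKS = {6: {6}, 7: {7}, 8: {7, 8}, 9: {8}}

# Peak column per [MASK] row in the bottom heatmap, as reported above.
BOTTOM_PEAKS = {6: 6, 7: 7, 8: 8, 9: 10}


def peak_preserved(top, bottom):
    """Map each masked row to True if its bottom-heatmap peak is one of its top-heatmap peaks."""
    return {row: bottom[row] in top[row] for row in sorted(bottom)}


preserved = peak_preserved(TOP_PEAKS, BOTTOM_PEAKS)
```

Under this reading, rows 6-8 keep a peak they already had in the top heatmap, while row 9 moves from "Uni@vers" (col 8) to "?" (col 10).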

### Interpretation

This visualization offers a view into how a cross-lingual language model distributes attention. The **top heatmap** indicates that the model has learned a strong, interpretable alignment between English and German words for this specific sentence, which is fundamental for accurate translation.

The **bottom heatmap** is more revealing. It shows how the model behaves when faced with **incomplete input**. By directing attention from most `[MASK]` rows to the corresponding German words, the model appears to be performing **cross-lingual infilling**: it uses the visible German translation as a "hint" to deduce what the masked English words must have been. This suggests the model's internal representations are not just mapping words, but are capturing **shared semantic concepts** across languages. The strong attention (~0.6) from the last `[MASK]` row to the question mark is particularly interesting, as it suggests the model also considers syntactic and pragmatic features (like sentence type) during reconstruction.

In essence, the image provides visual evidence, for this one sentence, that the model is not just a word-for-word translator but can draw on parallel linguistic context to reason about missing information.