## Heatmap: Attention Visualization of Machine Translation

### Overview

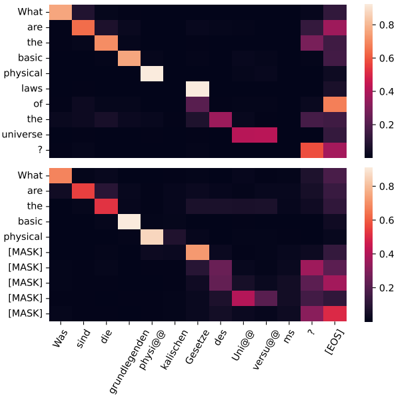

The image presents two heatmaps visualizing the attention mechanism in a machine translation model. The top heatmap shows the attention weights between the English source sentence "What are the basic physical laws of the universe ?" and its subword-segmented German translation. The bottom heatmap shows the attention weights when the last five source tokens ("laws of the universe ?") are replaced with "[MASK]" tokens. Together, the heatmaps illustrate how the model aligns tokens between the source and target languages.

### Components/Axes

* **Y-axis (Left):**

  * **Top Heatmap:** English sentence: "What are the basic physical laws of the universe ?"

  * **Bottom Heatmap:** English sentence: "What are the basic physical [MASK] [MASK] [MASK] [MASK] [MASK]"

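* **X-axis (Bottom):** German translation: "Was sind die grundlegenden physi@@ kalischen Gesetze des Uni@@ versu@@ ms ? [EOS]". The "@@" suffix appears to mark a subword piece that continues into the next token, so "physi@@ kalischen" reads as "physikalischen" and "Uni@@ versu@@ ms" as "Universums".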

* **Color Scale (Right):** Represents attention weights, ranging from 0.2 to 0.8. Darker shades indicate lower attention weights, while lighter shades indicate higher attention weights.
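The X-axis labels can be read back as whole words by joining the subword pieces. The following is a minimal sketch, assuming the "@@" suffix is a BPE-style continuation marker (the figure does not name the tokenizer); the helper name `merge_bpe` is hypothetical.

```python
def merge_bpe(tokens, marker="@@"):
    """Join subword pieces: a token ending in `marker` continues into the next token."""
    words, current = [], ""
    for tok in tokens:
        if tok.endswith(marker):
            # Continuation piece: strip the marker and keep accumulating.
            current += tok[: -len(marker)]
        else:
            # Final piece of a word (or a whole word): emit it.
            words.append(current + tok)
            current = ""
    if current:  # dangling continuation piece at the end of the sequence
        words.append(current)
    return words


# X-axis labels as given in the figure description.
x_axis = "Was sind die grundlegenden physi@@ kalischen Gesetze des Uni@@ versu@@ ms ? [EOS]".split()
words = merge_bpe(x_axis)
print(words)
```

Merging the labels this way makes it easier to compare the German columns with the English rows word by word.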

### Detailed Analysis

**Top Heatmap:**

* **"What"** strongly attends to **"Was"**.

* **"are"** strongly attends to **"sind"**.

* **"the"** strongly attends to **"die"**.

* **"basic"** strongly attends to **"grundlegenden"**.

* **"physical"** strongly attends to **"physi@@"** and **"kalischen"**.

* **"laws"** strongly attends to **"Gesetze"**.

* **"of"** strongly attends to **"des"**.

* **"the"** strongly attends to **"Uni@@"**.

* **"universe"** strongly attends to **"versu@@"** and **"ms"**.

* **"?"** strongly attends to **"?"**.

**Bottom Heatmap:**

* **"What"** strongly attends to **"Was"**.

* **"are"** strongly attends to **"sind"**.

* **"the"** strongly attends to **"die"**.

* **"basic"** strongly attends to **"grundlegenden"**.

* **"physical"** strongly attends to **"physi@@"**.

* The "[MASK]" tokens show some attention to the later parts of the German sentence, particularly "Uni@@", "versu@@", "ms", and "[EOS]".
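Pairings like those listed above can be read off a weight matrix by taking, for each English row, the German column with the largest weight. The sketch below assumes rows correspond to English tokens and columns to German tokens, as in the figure; the weights are illustrative toy values, not numbers read from the heatmaps, and `hard_alignment` is a hypothetical helper name.

```python
def hard_alignment(weights, source_tokens, target_tokens):
    """For each source row, return (source, best target, weight) by argmax."""
    pairs = []
    for src, row in zip(source_tokens, weights):
        best = max(range(len(row)), key=row.__getitem__)
        pairs.append((src, target_tokens[best], row[best]))
    return pairs


source = ["What", "are", "the"]
target = ["Was", "sind", "die"]

# Toy weights for illustration only; NOT taken from the figure.
toy_weights = [
    [0.8, 0.1, 0.1],
    [0.1, 0.7, 0.2],
    [0.2, 0.1, 0.7],
]

pairs = hard_alignment(toy_weights, source, target)
for src, tgt, weight in pairs:
    print(f"{src} -> {tgt} ({weight:.1f})")
```

A plain argmax keeps only one German token per English token, so it would miss cases such as "physical" aligning with both "physi@@" and "kalischen"; a threshold on the weights is one alternative.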

### Key Observations

* The attention mechanism effectively aligns words between the English and German sentences in the top heatmap.

* Masking the last five English tokens in the bottom heatmap removes the clear alignments for those positions, but the "[MASK]" tokens still show some attention to the later German tokens ("Uni@@", "versu@@", "ms", and "[EOS]").

* The attention weights are far from uniform: in the top heatmap, each English token concentrates its attention on one or two German tokens rather than spreading it evenly across the sentence.
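How concentrated or diffuse a row of attention weights is can be quantified, for example with Shannon entropy: a sharply peaked row has low entropy and a uniform row has the maximum. This is a minimal sketch of that idea, not a measurement shown in the figure; the example rows are illustrative values and `row_entropy` is a hypothetical helper name.

```python
import math


def row_entropy(row):
    """Shannon entropy (in nats) of one row of non-negative attention weights."""
    total = sum(row)
    # Normalize so the row sums to 1, skipping zeros (0 * log 0 is taken as 0).
    probs = [w / total for w in row if w > 0]
    return -sum(p * math.log(p) for p in probs)


# Illustrative rows only; NOT values read from the heatmaps.
peaked_row = [0.90, 0.05, 0.03, 0.02]   # one clear alignment
uniform_row = [0.25, 0.25, 0.25, 0.25]  # no preferred target token

print(row_entropy(peaked_row) < row_entropy(uniform_row))
```

Comparing the entropy of corresponding rows in the two heatmaps would be one way to put a number on how much masking diffuses the attention.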

### Interpretation

The heatmaps visualize the attention mechanism of a machine translation model, which lets the model focus on the most relevant parts of the source sentence when generating each part of the target sentence. The top heatmap shows that the model has learned a largely word-by-word alignment between English and German, with single English words mapped onto several German subword pieces where needed. The bottom heatmap shows how the attention changes when the end of the input is masked: the unmasked tokens largely keep their alignments, while the "[MASK]" tokens still show some attention to the later German tokens. This indicates that the model relies on context and suggests a degree of robustness to missing input.
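The weights shown in such heatmaps are typically produced by scoring each source position against a query and normalizing the scores with a softmax. The sketch below uses scaled dot-product attention as an assumption, since the figure does not say which attention variant the model uses; the vectors are toy values and the function names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)  # one row of an attention heatmap
    dim = len(values[0])
    # Context vector: weighted sum of the value vectors.
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, context


# Toy 2-dimensional vectors for three source positions (illustrative only).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = keys
query = [2.0, -1.0]

weights, context = attend(query, keys, values)
print([round(w, 3) for w in weights])
```

Each call yields one row of weights that sums to 1; stacking the rows for all queries gives a matrix of the kind plotted in the heatmaps.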