## Scatter Plot: Relation Scores of Language Models on Various Tasks

### Overview

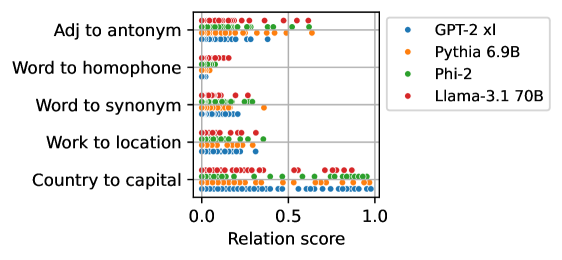

The image is a horizontal scatter plot comparing the performance of four large language models (LLMs) on five different linguistic or knowledge-based tasks. Performance is measured by a "Relation score" on a scale from 0.0 to 1.0. Each model is represented by a distinct color, and each task is a separate horizontal row on the y-axis. The plot visualizes the distribution of scores for each model-task combination.

### Components/Axes

* **Chart Type:** Horizontal Scatter Plot / Dot Plot.

* **Y-Axis (Categories):** Lists five tasks. From top to bottom:

1. `Adj to antonym`

2. `Word to homophone`

3. `Word to synonym`

4. `Work to location` (Note: Likely a typo for "Word to location").

5. `Country to capital`

* **X-Axis (Metric):** Labeled `Relation score`. The axis has major tick marks at `0.0`, `0.5`, and `1.0`.

* **Legend:** Positioned in the top-right corner, outside the main plot area. It maps colors to model names:

* Blue dot: `GPT-2 xl`

* Orange dot: `Pythia 6.9B`

* Green dot: `Phi-2`

* Red dot: `Llama-3.1 70B`

### Detailed Analysis

The analysis is segmented by task (y-axis category), describing the visual trend and approximate data distribution for each model.

**1. Task: Adj to antonym (Top Row)**

* **Trend:** Scores are widely dispersed across the entire range for all models, indicating high variability in performance on this task.

* **Data Distribution:**

* **GPT-2 xl (Blue):** Points are scattered from ~0.1 to ~0.9, with a slight clustering below 0.5.

* **Pythia 6.9B (Orange):** Similar wide spread from ~0.1 to ~0.9.

* **Phi-2 (Green):** Points are densely clustered between ~0.2 and ~0.8.

* **Llama-3.1 70B (Red):** Shows a broad distribution from ~0.1 to ~0.9, with several points near the high end (~0.8-0.9).

**2. Task: Word to homophone (Second Row)**

* **Trend:** All models perform very poorly, with scores tightly clustered near the low end of the scale.

* **Data Distribution:**

* All four models (Blue, Orange, Green, Red) have their data points concentrated in a narrow band between `0.0` and approximately `0.2`. No model achieves a score above ~0.25.

**3. Task: Word to synonym (Third Row)**

* **Trend:** Low-to-moderate performance. The models' score ranges overlap substantially, and most points fall in the low-to-mid range.

* **Data Distribution:**

* **GPT-2 xl (Blue):** Clustered between ~0.1 and ~0.4.

* **Pythia 6.9B (Orange):** Shows the widest spread in this row, from ~0.1 to ~0.6, with one notable outlier near `0.6`.

* **Phi-2 (Green):** Tightly grouped between ~0.2 and ~0.4.

* **Llama-3.1 70B (Red):** Points are concentrated between ~0.2 and ~0.5.

**4. Task: Work to location (Fourth Row)**

* **Trend:** Performance is generally low, similar to the homophone task, but with slightly more spread.

* **Data Distribution:**

* All models have points primarily between `0.0` and `0.4`.

* **GPT-2 xl (Blue)** and **Pythia 6.9B (Orange)** are clustered below `0.3`.

* **Phi-2 (Green)** and **Llama-3.1 70B (Red)** show a slightly higher reach, with some points approaching `0.4`.

**5. Task: Country to capital (Bottom Row)**

* **Trend:** This task shows the highest overall scores and the most significant performance differentiation between models.

* **Data Distribution:**

* **GPT-2 xl (Blue):** Scores are spread across the entire range from `0.0` to `1.0`, indicating inconsistent performance.

* **Pythia 6.9B (Orange):** Points are mostly between `0.0` and `0.7`, with a cluster in the mid-range.

* **Phi-2 (Green):** Shows strong performance, with a dense cluster of points between `0.5` and `1.0`.

* **Llama-3.1 70B (Red):** Demonstrates the best and most consistent performance, with the vast majority of points tightly clustered between `0.7` and `1.0`.
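
The approximate ranges listed above can be collected into a small table for side-by-side comparison. The following is a minimal sketch, assuming the eyeballed bounds in this section stand in for the real data points, which are not available here. The names `APPROX_RANGES`, `summarize`, `narrowest_model`, and `task_ceiling` are hypothetical helpers introduced for illustration.

```python
# Approximate (low, high) score bounds per task and model, transcribed from
# the description above. These are visual estimates, not the plotted data.
APPROX_RANGES = {
    "Adj to antonym": {
        "GPT-2 xl": (0.1, 0.9), "Pythia 6.9B": (0.1, 0.9),
        "Phi-2": (0.2, 0.8), "Llama-3.1 70B": (0.1, 0.9),
    },
    "Word to homophone": {
        "GPT-2 xl": (0.0, 0.2), "Pythia 6.9B": (0.0, 0.2),
        "Phi-2": (0.0, 0.2), "Llama-3.1 70B": (0.0, 0.2),
    },
    "Word to synonym": {
        "GPT-2 xl": (0.1, 0.4), "Pythia 6.9B": (0.1, 0.6),
        "Phi-2": (0.2, 0.4), "Llama-3.1 70B": (0.2, 0.5),
    },
    "Work to location": {
        "GPT-2 xl": (0.0, 0.3), "Pythia 6.9B": (0.0, 0.3),
        "Phi-2": (0.0, 0.4), "Llama-3.1 70B": (0.0, 0.4),
    },
    "Country to capital": {
        "GPT-2 xl": (0.0, 1.0), "Pythia 6.9B": (0.0, 0.7),
        "Phi-2": (0.5, 1.0), "Llama-3.1 70B": (0.7, 1.0),
    },
}


def summarize(ranges):
    """Return (task, model, midpoint, width) rows for every range."""
    rows = []
    for task, per_model in ranges.items():
        for model, (low, high) in per_model.items():
            rows.append((task, model, round((low + high) / 2, 2), round(high - low, 2)))
    return rows


def narrowest_model(ranges, task):
    """Return the model whose approximate range on `task` is tightest."""
    per_model = ranges[task]
    return min(per_model, key=lambda m: per_model[m][1] - per_model[m][0])


def task_ceiling(ranges):
    """Return the highest approximate upper bound reached on each task."""
    return {
        task: max(high for _, high in per_model.values())
        for task, per_model in ranges.items()
    }


for row in summarize(APPROX_RANGES):
    print(row)
```

Because the inputs are bounds rather than points, the midpoint and width are only rough proxies for central tendency and spread. They cannot capture clustering within a range.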

### Key Observations

1. **Task Difficulty Hierarchy:** "Word to homophone" and "Work to location" are the most challenging tasks: no model scores above ~0.25 on the former, and scores stay mostly below ~0.4 on the latter. "Country to capital" is the easiest, with Phi-2 and Llama-3.1 70B clustering in the upper half of the scale.

2. **Model Performance Patterns:**

* **Llama-3.1 70B (Red)** is the clear top performer on "Country to capital" and has several of the highest points on "Adj to antonym," the two tasks where high scores are possible.

* **Phi-2 (Green)** shows strong, consistent performance on "Country to capital" and mid-range performance on others.

* **GPT-2 xl (Blue)** exhibits the most variance, especially on "Country to capital," where its scores span the entire scale.

* **Pythia 6.9B (Orange)** generally performs in the middle of the pack but has a notable high outlier on "Word to synonym."

3. **Notable Anomaly:** The "Word to homophone" task acts as a performance floor, with no model showing any significant capability.

### Interpretation

This chart provides a comparative snapshot of LLM capabilities across distinct types of relational knowledge. The data suggests that:

* **Factual Recall vs. Linguistic Skill:** The stronger models (Phi-2 and Llama-3.1 70B) score highly on the factual recall task "Country to capital," while GPT-2 xl and Pythia 6.9B are far less consistent on it. Every model struggles with the phonological "homophone" relation and with the "location" relation. This points to a potential gap in training data or architecture for these specific relation types.

* **Model Evolution:** The newer, larger model (Llama-3.1 70B) shows a clear advantage in tasks where high performance is achievable, most visibly on "Country to capital." This is consistent with scaling and/or architectural improvements leading to better mastery of certain knowledge domains, although the plot alone cannot separate those factors.

* **Task-Specific Evaluation is Crucial:** A single aggregate score would be misleading. The wide dispersion of scores for GPT-2 xl on "Country to capital" indicates that its knowledge is patchy or unreliable for that specific task, even if it can sometimes get the answer right. The tight clustering of Llama-3.1 70B on the same task indicates robust and consistent knowledge.

* **The "Homophone" Barrier:** The uniformly low scores on "Word to homophone" point to a fundamental limitation in the models' understanding of sound-based word relationships, which may be underrepresented in text-centric training corpora.
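
The point about aggregate scores can be illustrated with a short sketch. The two score lists below are hypothetical values chosen for illustration, not data read from the chart. They share the same mean but differ sharply in spread, which is the pattern the GPT-2 xl versus Llama-3.1 70B comparison describes qualitatively.

```python
from statistics import mean, pstdev

# Hypothetical per-relation scores for two imaginary models.
# These are illustrative numbers only, not values from the figure.
patchy_scores = [0.0, 0.1, 0.5, 0.9, 1.0]
steady_scores = [0.4, 0.45, 0.5, 0.55, 0.6]


def describe(scores):
    """Summarize a list of scores by mean, population std dev, and range."""
    return {
        "mean": round(mean(scores), 3),
        "std": round(pstdev(scores), 3),
        "min": min(scores),
        "max": max(scores),
    }


print("patchy:", describe(patchy_scores))
print("steady:", describe(steady_scores))
```

Reporting only the mean would make the two lists look identical. The standard deviation and the min/max range expose the difference in reliability.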

In essence, the visualization moves beyond average benchmarks to reveal the nuanced strengths and weaknesses of different LLMs, showing that performance is highly dependent on the specific nature of the task.