# Technical Data Extraction: Task Length Comparison (Single Turn)

This document contains a detailed extraction of data from two bar charts, labeled **(a)** and **(b)**, comparing the task lengths of various Large Language Models (LLMs) in a single-turn context.

---

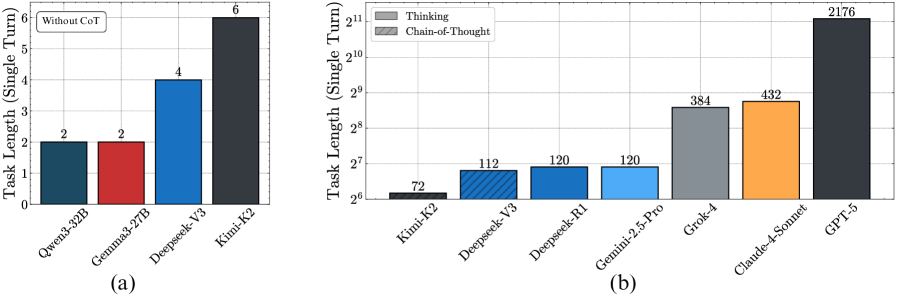

## Chart (a): Without Chain-of-Thought (CoT)

### Metadata and Layout

- **Header/Label:** A text box in the top-left corner contains the text "Without CoT".

- **Y-Axis Title:** "Task Length (Single Turn)"

- **Y-Axis Scale:** Linear, ranging from 0 to 6.

- **X-Axis Labels:** Model names oriented at a 45-degree angle.

- **Trend:** Task length is non-decreasing across the four listed models: Qwen3-32B and Gemma3-27B are tied at 2, Deepseek-V3 reaches 4, and Kimi-K2 peaks at 6.

### Data Table (Reconstructed)

| Model Name | Bar Color | Task Length Value |
| :--- | :--- | :--- |
| Qwen3-32B | Dark Blue/Teal | 2 |
| Gemma3-27B | Red | 2 |
| Deepseek-V3 | Blue | 4 |
| Kimi-K2 | Dark Grey/Black | 6 |

---

## Chart (b): Thinking vs. Chain-of-Thought

### Metadata and Layout

- **Legend [Top-Left]:**

  - Solid Grey Square: "Thinking"

  - Hatched/Diagonal Striped Grey Square: "Chain-of-Thought"

- **Y-Axis Title:** "Task Length (Single Turn)"

- **Y-Axis Scale:** Logarithmic (Base 2), ranging from $2^6$ (64) to $2^{11}$ (2048).

- **X-Axis Labels:** Model names oriented at a 45-degree angle.

- **Trend:** Task length rises across the listed models in three distinct tiers. The first four models (Kimi-K2 through Gemini-2.5-Pro) fall in a narrow band (72 to 120); Grok-4 (384) and Claude-4-Sonnet (432) are roughly three to four times the top of that band; and GPT-5 (2176) is a clear outlier at about five times the Claude-4-Sonnet value.

### Component Isolation: Bar Styles

- **Hatched Bar:** Only **Deepseek-V3** uses a diagonal hatched pattern, which according to the legend signifies "Chain-of-Thought".

- **Solid Bars:** All other models use solid colors, signifying "Thinking" processes.

### Data Table (Reconstructed)

| Model Name | Bar Color/Style | Task Length Value |
| :--- | :--- | :--- |
| Kimi-K2 | Dark Grey (Solid) | 72 |
| Deepseek-V3 | Blue (Hatched) | 112 |
| Deepseek-R1 | Blue (Solid) | 120 |
| Gemini-2.5-Pro | Light Blue (Solid) | 120 |
| Grok-4 | Medium Grey (Solid) | 384 |
| Claude-4-Sonnet | Orange (Solid) | 432 |
| GPT-5 | Dark Grey/Black (Solid) | 2176 |
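Because chart (b) uses a base-2 logarithmic axis, it can help to see where each reconstructed value falls in log space. The following is a minimal sketch that only restates the table values; the names `chart_b` and `log2_position` are illustrative and not part of the source. Note that the reconstructed GPT-5 value (2176) sits slightly above $2^{11}$ (2048), the upper end of the stated axis range.

```python
import math

# Values transcribed from the reconstructed table for chart (b).
chart_b = {
    "Kimi-K2": 72,
    "Deepseek-V3": 112,
    "Deepseek-R1": 120,
    "Gemini-2.5-Pro": 120,
    "Grok-4": 384,
    "Claude-4-Sonnet": 432,
    "GPT-5": 2176,
}


def log2_position(value: float) -> float:
    """Return the height of a value on a base-2 logarithmic axis."""
    return math.log2(value)


# Print each model with its raw value and its position on the log2 axis.
for model, value in chart_b.items():
    print(f"{model:16s} {value:5d}  log2 = {log2_position(value):.2f}")
```

On this axis, equal vertical distances correspond to equal multiplicative factors, so the visual gap between bars reflects ratios rather than absolute differences.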

---

## Comparative Analysis Summary

- **Scale Difference:** Chart (a) uses a small linear scale (0-6) for non-CoT tasks. Chart (b) uses a logarithmic scale to accommodate values ranging from 72 to over 2000.

- **Top Performer:** In both charts, the dark grey/black bar represents the highest value. In chart (a) this is **Kimi-K2** (Value: 6), and in chart (b) this is **GPT-5** (Value: 2176).

- **Methodology Note:** Deepseek-V3 is the only model in chart (b) marked as "Chain-of-Thought" (hatched pattern); all other models in that chart are marked as "Thinking".
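Two models, Deepseek-V3 and Kimi-K2, appear in both charts, so their values can be compared directly. The sketch below computes the ratio of the chart (b) value to the chart (a) value for those models. It is an illustrative calculation on the reconstructed tables only; the variable names are hypothetical, and the source does not itself report these ratios.

```python
# Values transcribed from the reconstructed tables above.
chart_a = {"Qwen3-32B": 2, "Gemma3-27B": 2, "Deepseek-V3": 4, "Kimi-K2": 6}
chart_b = {
    "Kimi-K2": 72,
    "Deepseek-V3": 112,
    "Deepseek-R1": 120,
    "Gemini-2.5-Pro": 120,
    "Grok-4": 384,
    "Claude-4-Sonnet": 432,
    "GPT-5": 2176,
}

# Models that appear in both charts.
shared = sorted(set(chart_a) & set(chart_b))

# Ratio of the chart (b) value to the chart (a) value for each shared model.
ratios = {model: chart_b[model] / chart_a[model] for model in shared}

for model in shared:
    print(f"{model}: {chart_a[model]} -> {chart_b[model]} ({ratios[model]:.0f}x)")
```

For the reconstructed values this gives a 28x ratio for Deepseek-V3 (4 to 112) and a 12x ratio for Kimi-K2 (6 to 72).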