## Diagram: Transformer Architecture Comparison

### Overview

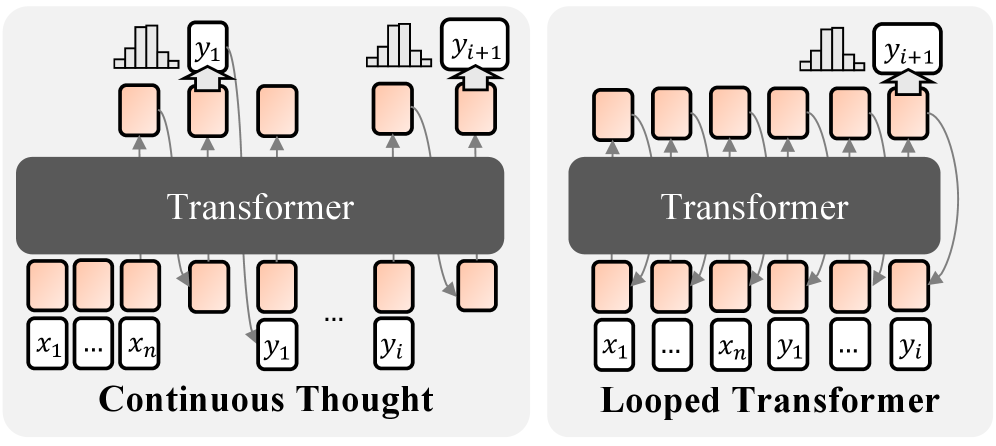

The image displays two side-by-side technical diagrams illustrating different architectural approaches for processing sequences with a Transformer model. The left diagram is labeled "Continuous Thought" and the right is labeled "Looped Transformer." Both diagrams use a consistent visual language: a central dark gray block representing the "Transformer," with sequences of light orange rounded rectangles representing data tokens or hidden states, and arrows indicating data flow.

### Components

**Common Elements:**

* **Central Block:** A dark gray, horizontally oriented rectangle labeled "Transformer" in white text. This is the core processing unit in both diagrams.

* **Data Tokens:** Light orange, rounded rectangles. These represent input tokens, output tokens, or intermediate hidden states.

* **Arrows:** Gray lines with arrowheads indicating the direction of data flow between tokens and the Transformer block.

* **Probability Distributions:** Small bar chart icons placed above certain output tokens, indicating a predicted probability distribution over a vocabulary.

**Left Diagram: "Continuous Thought"**

* **Title:** "Continuous Thought" in bold, black text at the bottom.

* **Input Sequence (Bottom Row):** A sequence of tokens labeled `x₁`, `...`, `xₙ`, followed by `y₁`, `...`, `yᵢ`. The ellipsis (`...`) indicates a variable-length sequence.

* **Output Sequence (Top Row):** A sequence of tokens. The first token has a probability distribution icon above it and is labeled `y₁`. The last token has a probability distribution icon above it and is labeled `yᵢ₊₁`.

* **Data Flow:**

    1. The initial input sequence (`x₁...xₙ`) is fed into the Transformer.

    2. The Transformer produces an output sequence. The first output token is `y₁`.

    3. A critical feedback loop is shown: the output token `y₁` is fed back into the Transformer as part of the input for the next step.

    4. This process continues iteratively. The diagram shows the token `yᵢ` being fed back to help produce the next output, `yᵢ₊₁`.

    5. The final output shown is `yᵢ₊₁`.
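The feedback loop in the steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `toy_model` is a hypothetical stand-in for the "Transformer" block (it is not a real model), and the fed-back items are treated as discrete tokens, as the description reads. If the rectangles in the figure are hidden states instead, the fed-back value would be a vector rather than an integer, but the loop structure would be the same.

```python
from typing import Callable, List


def toy_model(sequence: List[int]) -> int:
    """Hypothetical stand-in for the Transformer block.

    Maps the whole sequence seen so far to a single next token.
    The arithmetic is arbitrary; it only makes the output depend
    on every earlier element.
    """
    return (3 * sum(sequence) + len(sequence)) % 7


def generate(model: Callable[[List[int]], int],
             prompt: List[int],
             steps: int) -> List[int]:
    """Run the feedback loop: each output is appended to the input."""
    sequence = list(prompt)        # x_1 ... x_n
    outputs: List[int] = []        # y_1 ... y_i
    for _ in range(steps):
        next_token = model(sequence)   # one sequential model call
        outputs.append(next_token)
        sequence.append(next_token)    # feedback: output becomes input
    return outputs


ys = generate(toy_model, [1, 2, 3], steps=4)
print(ys)
```

Each pass through the loop needs the previous pass's result, which is why the calls cannot be reordered or run side by side.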

**Right Diagram: "Looped Transformer"**

* **Title:** "Looped Transformer" in bold, black text at the bottom.

* **Input Sequence (Bottom Row):** A single, combined sequence of tokens labeled `x₁`, `...`, `xₙ`, `y₁`, `...`, `yᵢ`.

* **Output Sequence (Top Row):** A sequence of tokens. The final token has a probability distribution icon above it and is labeled `yᵢ₊₁`.

* **Data Flow:**

    1. The entire combined sequence (`x₁...xₙ y₁...yᵢ`) is presented as input to the Transformer in a single pass.

    2. The Transformer processes this entire sequence.

    3. The diagram shows multiple arrows originating from the Transformer block and pointing to various positions within the input sequence row, suggesting internal recurrence or iterative refinement within the model's processing.

    4. The final output token `yᵢ₊₁` is generated from the end of the processed sequence.
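One way to read the arrows described above is that the same block is applied several times to the states of every position before the last position is read out. The sketch below assumes exactly that; the figure itself does not confirm it. `shared_block`, `looped_forward`, and `num_loops` are hypothetical names, and the averaging rule is a placeholder for real attention and feed-forward layers.

```python
from typing import List


def shared_block(states: List[float]) -> List[float]:
    """Hypothetical shared block (same 'weights' on every loop).

    Each position is mixed with the mean of all states at or before
    it, which is a crude stand-in for causal attention.
    """
    updated: List[float] = []
    running_sum = 0.0
    for position, value in enumerate(states):
        running_sum += value
        prefix_mean = running_sum / (position + 1)
        updated.append(0.5 * value + 0.5 * prefix_mean)
    return updated


def looped_forward(states: List[float], num_loops: int) -> float:
    """Apply the shared block num_loops times, then read the last position."""
    for _ in range(num_loops):
        states = shared_block(states)   # all positions are updated together
    return states[-1]                   # readout used to predict y_{i+1}


# States for the combined sequence [x_1 ... x_n, y_1 ... y_i], here two positions.
readout = looped_forward([1.0, 3.0], num_loops=2)
print(readout)
```

In this reading, the loop count controls how much computation is spent per output without changing the length of the input sequence.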

### Detailed Analysis

**Layout by Region:**

* **Top Row:** Contains the output tokens and their associated probability distribution icons. In the "Continuous Thought" diagram, outputs are generated sequentially (`y₁` then later `yᵢ₊₁`). In the "Looped Transformer," only the final output `yᵢ₊₁` is explicitly shown.

* **Center:** Dominated by the "Transformer" block. The density of connecting arrows differs significantly. The "Continuous Thought" diagram has a clear, sequential loop on the right side. The "Looped Transformer" has a denser web of arrows connecting the Transformer to multiple points in the input sequence.

* **Bottom Row:** Contains the input sequences and the diagram titles. The "Continuous Thought" input is split into an initial context (`x`) and a growing sequence of generated thoughts (`y`). The "Looped Transformer" input is a single concatenated sequence.

**Flow Comparison:**

* **Continuous Thought Flow:** `x₁...xₙ` → Transformer → `y₁` → (feed `y₁` back) → Transformer → ... → `yᵢ` → (feed `yᵢ` back) → Transformer → `yᵢ₊₁`. This is an **autoregressive, sequential generation** process where each new output depends on all previous outputs.

* **Looped Transformer Flow:** `[x₁...xₙ, y₁...yᵢ]` → Transformer (with internal loops/recurrence) → `yᵢ₊₁`. This suggests a **parallel or iterative refinement** process where the model can revisit and update its internal states for all tokens in the sequence before producing the final output.
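The two flows can also be written in notation. This is an assumed formalization, not something shown in the figure: the symbols $p_\theta$, $f_\theta$, $\mathrm{Embed}$, $W$, the hidden states $h^{(t)}$, and the loop count $T$ are introduced here for illustration only.

```latex
% Continuous Thought: each output is conditioned on the input and all earlier outputs.
y_{i+1} \sim p_\theta\left(\,\cdot \mid x_1, \dots, x_n,\; y_1, \dots, y_i \right)

% Looped Transformer: the same block f_\theta is applied T times to the
% states of the whole sequence, then the last position (index n+i) is read out.
h^{(0)} = \mathrm{Embed}(x_1, \dots, x_n,\, y_1, \dots, y_i), \qquad
h^{(t)} = f_\theta\!\left(h^{(t-1)}\right) \quad (t = 1, \dots, T), \qquad
y_{i+1} \sim \mathrm{softmax}\!\left(W\, h^{(T)}_{n+i}\right)
```

Both forms condition `yᵢ₊₁` on the same inputs; they differ in where the repetition happens (across outputs in the first, across applications of the block in the second).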

### Key Observations

1. **Architectural Distinction:** The core difference is where the repetition happens. "Continuous Thought" repeats across outputs: it is sequential and autoregressive, with one pass per new output. "Looped Transformer" appears to repeat inside the model, implying recurrent processing over the whole sequence before an output is produced.

2. **Input Representation:** In both diagrams the generated tokens (`y`) appear in the input row. "Continuous Thought" draws them as fed back one at a time for subsequent steps, while "Looped Transformer" draws the original input `x` and the generated tokens `y` as a single concatenated sequence.

3. **Output Granularity:** The "Continuous Thought" diagram explicitly shows intermediate outputs (`y₁`). The "Looped Transformer" diagram only highlights the final output (`yᵢ₊₁`), emphasizing its end-to-end nature.

4. **Visual Complexity:** The "Looped Transformer" diagram has a more complex arrow pattern, visually representing its more intricate internal connectivity compared to the straightforward loop of the "Continuous Thought" model.

### Interpretation

This diagram contrasts two fundamental approaches to sequence modeling and generation with Transformers.

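The **"Continuous Thought"** architecture, as drawn, follows the **autoregressive decoding** pattern used in models like GPT. It generates outputs one at a time, with each new output conditioned on the input and all previous outputs. This is simple and effective but can be slow for long outputs, because producing `m` outputs requires `O(m)` sequential model calls. Since the rectangles may stand for hidden states rather than tokens, the fed-back value could be a continuous vector instead of a sampled token; the loop structure is the same in either case.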

The **"Looped Transformer"** architecture suggests a **recurrent or iterative** design. The model takes the entire sequence (including already generated outputs) and appears to use internal loops to refine its hidden states over several iterations before emitting `yᵢ₊₁`. The figure does not show how many iterations are used, and it does not by itself indicate faster inference or non-causal dependencies; those would depend on details that are not drawn.

The key implication is a trade-off between the simplicity of a purely output-by-output loop ("Continuous Thought") and the extra per-output computation that internal iteration can provide ("Looped Transformer"). The "Looped Transformer" concept aligns with research into models like Universal Transformers, which apply the same block repeatedly, and with other architectures that incorporate recurrence to improve reasoning and generalization beyond standard feed-forward processing.