
## Diagram: Continuous Thought vs. Looped Transformer

### Overview

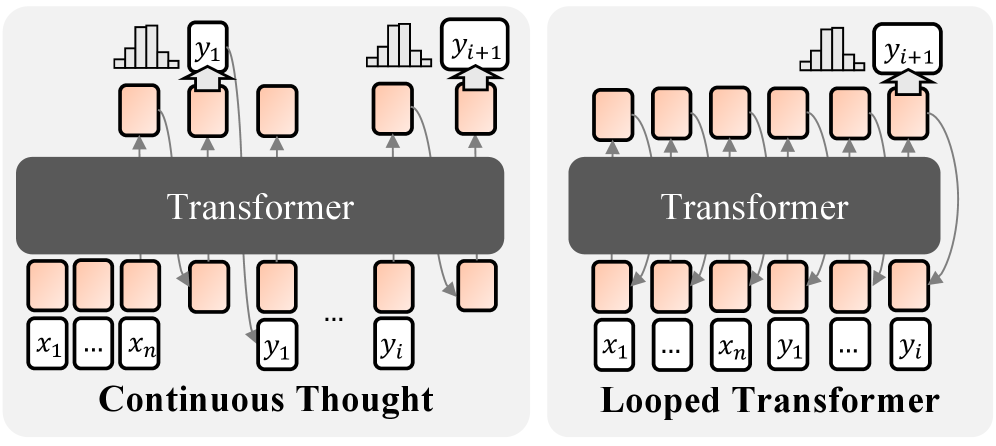

The image compares two ways of feeding a Transformer's output back into the model when processing sequential data: "Continuous Thought" and "Looped Transformer". Both panels show a Transformer that takes an input sequence (x1...xn) and produces outputs (y1...yi, then yi+1). They differ in what is fed back into the model between steps.

### Components

The diagram consists of the following components:

* **Transformer Block:** A large, dark gray rectangle representing the Transformer model. The text "Transformer" is centered within each block.

* **Input Sequence:** Represented by a series of rectangular boxes labeled "x1...xn" at the bottom of each diagram.

* **Output Sequence:** Represented by a series of rectangular boxes labeled "y1...yi" and "yi+1" at the top of each diagram.

* **Intermediate States:** Represented by a series of oval-shaped boxes with arrows indicating the flow of information.

* **Labels:** "Continuous Thought" and "Looped Transformer" are labels placed below each respective diagram.

### Detailed Analysis

**Continuous Thought (Left Diagram):**

* The input sequence "x1...xn" is fed into the Transformer.

* The Transformer generates an output sequence "y1...yi".

* The output "yi" is then fed back into the Transformer, without re-feeding the original input, to generate the next output "yi+1".

* The arrows indicate a sequential flow of information from input to output and then back into the input for the next iteration.

**Looped Transformer (Right Diagram):**

* The input sequence "x1...xn" is fed into the Transformer.

* The Transformer generates an output sequence "y1...yi".

* The output "yi" is then fed back into the Transformer *along with the original input* to generate the next output "yi+1".

* The arrows indicate a sequential flow of information from input to output and then back into the input for the next iteration.

The key difference is what accompanies the fed-back output: in the "Looped Transformer" diagram, the entire input sequence "x1...xn" is re-fed into the Transformer along with the previous output "yi" to generate "yi+1", whereas in "Continuous Thought" only the previous output "yi" is fed back in.
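The two feedback schemes, as this description reads them, can be sketched with a toy stand-in for the model. Everything here is an assumption made for illustration: `toy_transformer` simply sums its inputs, and the function names `feed_back_output_only` and `feed_back_with_input` are hypothetical. The sketch mirrors the wording above, not the actual architectures behind the diagram.

```python
from typing import Callable, List

# Hypothetical type: any function mapping a token sequence to one output value.
Model = Callable[[List[int]], int]


def toy_transformer(tokens: List[int]) -> int:
    """Toy stand-in for a Transformer (assumption): returns the sum of its inputs."""
    return sum(tokens)


def feed_back_output_only(model: Model, xs: List[int], steps: int) -> List[int]:
    """Feedback as described for "Continuous Thought": only the previous output is fed back."""
    ys = [model(xs)]                  # first pass sees x1...xn
    for _ in range(steps - 1):
        ys.append(model([ys[-1]]))    # later passes see only y_i
    return ys


def feed_back_with_input(model: Model, xs: List[int], steps: int) -> List[int]:
    """Feedback as described for "Looped Transformer": x1...xn is re-fed with y_i."""
    ys = [model(xs)]                  # first pass sees x1...xn
    for _ in range(steps - 1):
        ys.append(model(xs + [ys[-1]]))  # later passes see x1...xn plus y_i
    return ys


xs = [1, 2, 3]
print(feed_back_output_only(toy_transformer, xs, steps=3))  # [6, 6, 6]
print(feed_back_with_input(toy_transformer, xs, steps=3))   # [6, 12, 18]
```

With the summing toy model, the output-only loop stops changing after the first pass, while the loop that re-feeds the input keeps folding x1...xn into every step. This is a property of the toy function only and says nothing about how the real models behave.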

### Key Observations

The diagrams highlight a fundamental difference in how the models handle sequential dependencies. The "Continuous Thought" model appears to be more memory-efficient, as it only passes the previous output back into the model. The "Looped Transformer" model, on the other hand, maintains the entire input sequence in memory, potentially allowing it to capture longer-range dependencies but at a higher computational cost.
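The cost argument can be made concrete by counting how many tokens the model receives at each step under the two schemes as described here. This is a rough sketch under stated assumptions: the helper `tokens_per_step` and its parameters are hypothetical, each fed-back output counts as one token, and the count ignores any caching or internal state a real implementation might use.

```python
from typing import List


def tokens_per_step(n: int, steps: int, refeed_input: bool) -> List[int]:
    """Input length seen by the model at each step (illustrative assumption).

    n            -- length of the input sequence x1...xn
    steps        -- number of passes through the model
    refeed_input -- True if x1...xn is re-fed with the previous output,
                    False if only the previous output is fed back
    """
    later = n + 1 if refeed_input else 1
    return [n] + [later] * (steps - 1)


output_only = tokens_per_step(n=100, steps=4, refeed_input=False)
with_input = tokens_per_step(n=100, steps=4, refeed_input=True)

print(output_only, sum(output_only))  # [100, 1, 1, 1] 103
print(with_input, sum(with_input))    # [100, 101, 101, 101] 403
```

Under these assumptions the gap grows with both the input length and the number of steps, which is the higher computational cost the paragraph above attributes to re-feeding the input.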

### Interpretation

The diagram illustrates two architectural choices for implementing recurrent behavior in Transformer models. "Continuous Thought" is the more streamlined approach: each update is incremental and based solely on the previous output, which may suit tasks where only recent history is relevant. "Looped Transformer" is the more comprehensive approach: by re-introducing the entire input sequence at each step, it maintains a more complete state representation, which may suit tasks requiring broader contextual understanding. The choice between them likely depends on the application and on the trade-off between computational cost and performance. This difference in state management could affect each model's ability to capture long-range dependencies and handle complex sequential patterns.