
## Diagram: Transformer Model Architecture

### Overview

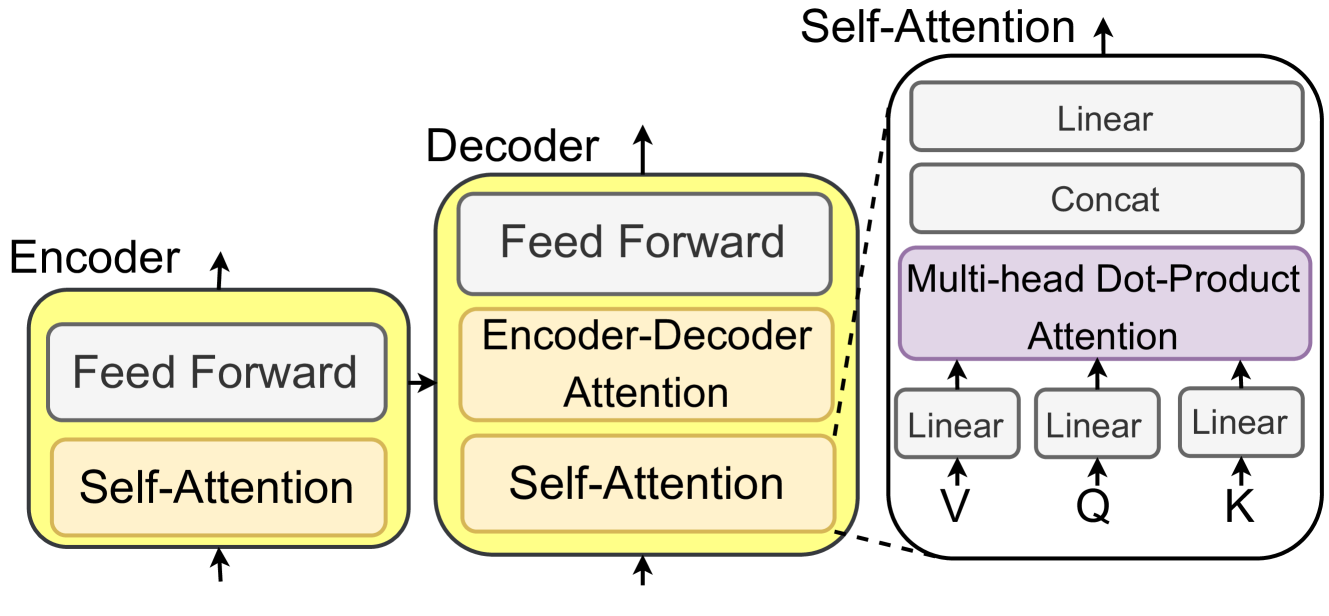

The image is a high-level block diagram of the Transformer, a neural network architecture commonly used in natural language processing. It shows the Encoder, Decoder, and Self-Attention components as functional blocks, along with the flow of information between them.

### Components

The diagram is composed of three main sections: Encoder (left), Decoder (center), and Self-Attention (right). Each section contains several blocks representing different layers or operations. The blocks are labeled with their respective functions. Arrows indicate the direction of data flow.

* **Encoder:** Contains "Self-Attention" and "Feed Forward" blocks.

* **Decoder:** Contains "Self-Attention", "Encoder-Decoder Attention", and "Feed Forward" blocks.

* **Self-Attention:** Contains "Linear", "Concat", "Multi-head Dot-Product Attention", and further "Linear" blocks for Q, K, and V.

* **Labels within Self-Attention:** Q, K, V.

### Detailed Analysis

The diagram shows a sequential flow of information within each section.

1. **Encoder:** Data flows upwards from "Self-Attention" to "Feed Forward" and then back to "Self-Attention" in a loop.

2. **Decoder:** Data flows upwards from "Self-Attention" to "Encoder-Decoder Attention", then to "Feed Forward", and finally back to "Self-Attention" in a loop. The "Encoder-Decoder Attention" block receives input from the Encoder.

3. **Self-Attention:** The "Self-Attention" block is further broken down into a series of operations. Data flows from "Linear" blocks to "Multi-head Dot-Product Attention". The "Multi-head Dot-Product Attention" block receives inputs labeled "Q", "K", and "V" from separate "Linear" blocks. The output of "Multi-head Dot-Product Attention" is concatenated ("Concat") and then passed through another "Linear" layer.
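
The Self-Attention data flow in item 3 can be sketched in a few lines of NumPy. This is a minimal illustration, not the implementation behind the diagram: the function name `multi_head_self_attention`, the weight names `w_q`, `w_k`, `w_v`, `w_o`, the tensor shapes, and the scaling of the scores by the square root of the per-head dimension are all assumptions introduced here.

```python
import numpy as np


def softmax(x, axis=-1):
    # Subtract the max along the axis for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_self_attention(x, w_q, w_k, w_v, w_o, num_heads):
    """x: (seq_len, d_model); every weight matrix: (d_model, d_model)."""
    seq_len, d_model = x.shape
    assert d_model % num_heads == 0, "d_model must be divisible by num_heads"
    d_head = d_model // num_heads

    # The three "Linear" blocks that produce Q, K, and V.
    q, k, v = x @ w_q, x @ w_k, x @ w_v

    def split_heads(t):
        # (seq_len, d_model) -> (num_heads, seq_len, d_head)
        return t.reshape(seq_len, num_heads, d_head).transpose(1, 0, 2)

    q, k, v = split_heads(q), split_heads(k), split_heads(v)

    # "Multi-head Dot-Product Attention": one score matrix per head.
    # Assumption: scores are scaled by sqrt(d_head).
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)
    weights = softmax(scores, axis=-1)   # (num_heads, seq_len, seq_len)
    heads = weights @ v                  # (num_heads, seq_len, d_head)

    # "Concat": merge the heads back into one (seq_len, d_model) matrix.
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)

    # Final "Linear" block.
    return concat @ w_o, weights


# Usage with random inputs and weights (illustrative sizes only).
rng = np.random.default_rng(0)
seq_len, d_model, num_heads = 5, 8, 2
x = rng.normal(size=(seq_len, d_model))
w_q, w_k, w_v, w_o = (rng.normal(size=(d_model, d_model)) for _ in range(4))
out, weights = multi_head_self_attention(x, w_q, w_k, w_v, w_o, num_heads)
```

The output has the same shape as the input, which is what allows the block to be followed directly by "Feed Forward" or by another attention block.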

The dashed line connecting the "Self-Attention" block in the Decoder to the "Self-Attention" section on the right most likely indicates that the right-hand section is an expanded view of that block's internals.

### Key Observations

* The diagram emphasizes the repeated use of "Self-Attention" and "Feed Forward" layers in both the Encoder and Decoder.

* The "Encoder-Decoder Attention" block in the Decoder is a key component for integrating information from the Encoder.

* The Self-Attention block is highly detailed, showing the internal operations of the attention mechanism.

* The labels Q, K, and V within the Self-Attention block represent Query, Key, and Value, which are fundamental concepts in attention mechanisms.
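
For reference, the roles of Query, Key, and Value can be written as a formula. The diagram itself only labels the block "Multi-head Dot-Product Attention", so the scaled form below, as defined in the original Transformer paper, is an assumption about what the block computes. The symbols $X$ (input to the block), $d_k$ (key dimension), $h$ (number of heads), and the weight matrices $W_i^Q$, $W_i^K$, $W_i^V$, $W^O$ are introduced here and are not labels from the figure.

$$
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
$$

$$
\mathrm{head}_i = \mathrm{Attention}\!\left(X W_i^Q,\; X W_i^K,\; X W_i^V\right), \qquad
\mathrm{MultiHead}(X) = \mathrm{Concat}(\mathrm{head}_1, \dots, \mathrm{head}_h)\, W^O
$$

Each row of the softmax output sums to 1, so every output position is a weighted average of the Value vectors, with weights determined by how well its Query matches each Key.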

### Interpretation

The diagram illustrates the core architecture of the Transformer model, which relies on attention mechanisms to process sequential data. The Encoder transforms the input sequence into a representation, and the Decoder generates the output sequence from that representation. Self-attention, built from the Q, K, and V projections, lets the model weigh the importance of different positions in the sequence when making predictions, and the repeated Self-Attention and Feed Forward layers let it learn complex relationships between input and output.

The diagram also highlights the modularity of the architecture: each block performs a specific function. It is a simplified, conceptual illustration rather than a quantitative representation of data, and it omits details such as residual connections and layer normalization, but it effectively conveys the overall structure and flow of information within the model.