TECHNICAL ASSET FINGERPRINT

268537a33a7802cecb3cbebd

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

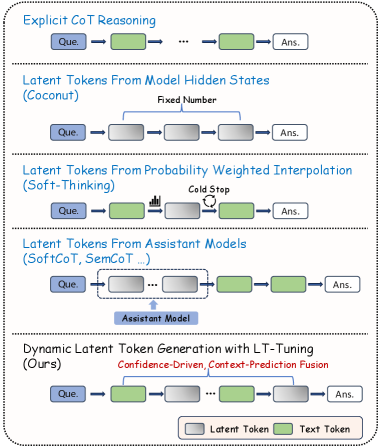

## Diagram: Latent Token Generation Methods

### Overview

The image presents a comparison of different methods for latent token generation in language models. It illustrates five approaches: Explicit CoT Reasoning, Latent Tokens from Model Hidden States (Coconut), Latent Tokens from Probability Weighted Interpolation (Soft-Thinking), Latent Tokens from Assistant Models (SoftCoT, SemCoT...), and Dynamic Latent Token Generation with LT-Tuning (Ours). The diagram uses a consistent visual language to represent each method, highlighting the flow of information and the role of latent tokens.

### Components/Axes

* **Nodes:** Rectangular boxes labeled "Que." (Question) and "Ans." (Answer) represent the input and output, respectively. Other rectangular boxes, colored either gray or green, represent latent tokens or text tokens.

* **Arrows:** Arrows indicate the flow of information between nodes.

* **Text Labels:** Descriptive text provides the name of each method and additional details about its operation.

* **Legend:** Located at the bottom-right, the legend defines the color scheme: Gray boxes represent "Latent Token," and green boxes represent "Text Token."

### Detailed Analysis

**1. Explicit CoT Reasoning:**

* Starts with a "Que." (Question) node.

* Flows through a green "Text Token" node.

* Continues through a series of green "Text Token" nodes, indicated by "...".

* Ends with an "Ans." (Answer) node.

* Trend: Question -> Text Tokens -> Answer

**2. Latent Tokens From Model Hidden States (Coconut):**

* Starts with a "Que." (Question) node.

* Flows through a gray "Latent Token" node.

* Continues through a fixed number of gray "Latent Token" nodes.

* Ends with an "Ans." (Answer) node.

* Label: "Fixed Number" above the latent tokens.

* Trend: Question -> Latent Tokens (Fixed Number) -> Answer

**3. Latent Tokens From Probability Weighted Interpolation (Soft-Thinking):**

* Starts with a "Que." (Question) node.

* Flows through a green "Text Token" node.

* Flows through a gray "Latent Token" node.

* A "Cold Stop" label is associated with a circular arrow pointing back to the previous latent token.

* Ends with an "Ans." (Answer) node.

* Trend: Question -> Text Token -> Latent Token (with Cold Stop) -> Answer

**4. Latent Tokens From Assistant Models (SoftCoT, SemCoT...):**

* Starts with a "Que." (Question) node.

* Flows through a series of gray "Latent Token" nodes, indicated by "...". These latent tokens are enclosed in a dashed rectangle labeled "Assistant Model".

* Flows through a green "Text Token" node.

* Ends with an "Ans." (Answer) node.

* Trend: Question -> Latent Tokens (Assistant Model) -> Text Token -> Answer

**5. Dynamic Latent Token Generation with LT-Tuning (Ours):**

* Starts with a "Que." (Question) node.

* Flows through a green "Text Token" node.

* Flows through a series of gray "Latent Token" nodes, indicated by "...".

* Ends with an "Ans." (Answer) node.

* Label: "Confidence-Driven, Context-Prediction Fusion" above the latent tokens.

* Trend: Question -> Text Token -> Latent Tokens -> Answer

### Key Observations

* All methods start with a "Que." node and end with an "Ans." node.

* The primary difference between the methods lies in how the latent tokens are generated and integrated into the process.

* Some methods use a fixed number of latent tokens, while others use a dynamic or context-dependent approach.

* The "Soft-Thinking" method introduces a "Cold Stop" mechanism.

* The "Assistant Models" method uses an external model to generate latent tokens.

* The "Ours" method uses "Confidence-Driven, Context-Prediction Fusion" for latent token generation.

### Interpretation

The diagram illustrates the evolution of latent token generation techniques in language models. It highlights the shift from explicit reasoning chains to more sophisticated methods that leverage model hidden states, probability-weighted interpolation, assistant models, and dynamic tuning. The "Ours" method, which uses confidence-driven and context-prediction fusion, represents a more advanced approach to latent token generation. The diagram suggests that the field is moving towards more flexible and adaptive methods for incorporating latent information into language models.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

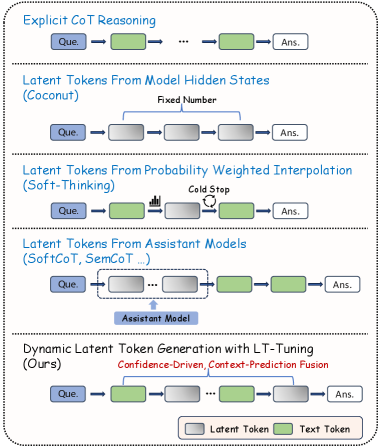

## Diagram: Chain-of-Thought Reasoning Approaches

### Overview

The image is a diagram illustrating five different approaches to Chain-of-Thought (CoT) reasoning in large language models. Each approach is presented as a sequential flow from "Que." (presumably Query) to "Ans." (Answer), with intermediate steps represented by different types of tokens. The diagram visually differentiates between "Latent Tokens" (grey) and "Text Tokens" (green).

### Components/Axes

The diagram is structured into five horizontal sections, each representing a different CoT reasoning method. Each section contains the following elements:

* **Que.** (Query): The starting point of the reasoning process.

* **Arrows:** Representing the flow of information.

* **Tokens:** Representing intermediate reasoning steps. These are either "Latent Tokens" (grey squares) or "Text Tokens" (green rectangles).

* **Ans.** (Answer): The final output of the reasoning process.

* **Titles:** Each section has a title describing the CoT approach.

* **Annotations:** Some sections have additional text annotations describing specific aspects of the method.

* **Legend:** Located at the bottom-right, defining the colors for "Latent Token" and "Text Token".

The five approaches are:

1. Explicit CoT Reasoning

2. Latent Tokens From Model Hidden States (Coconut)

3. Latent Tokens From Probability Weighted Interpolation (Soft-Thinking)

4. Latent Tokens From Assistant Models (SoftCoT, SemCoT ...)

5. Dynamic Latent Token Generation with LT-Tuning (Ours)

### Detailed Analysis or Content Details

Let's analyze each section individually:

1. **Explicit CoT Reasoning:**

* Flow: Que. -> Text Token -> ... -> Text Token -> Ans.

* The flow consists of a series of green "Text Tokens" between the query and the answer. The "..." indicates an unspecified number of intermediate steps.

2. **Latent Tokens From Model Hidden States (Coconut):**

* Flow: Que. -> Latent Token -> Latent Token -> ... -> Latent Token -> Ans.

* Annotation: "Fixed Number" is placed above the latent tokens.

* The flow consists of a series of grey "Latent Tokens" between the query and the answer. The "..." indicates an unspecified number of intermediate steps.

3. **Latent Tokens From Probability Weighted Interpolation (Soft-Thinking):**

* Flow: Que. -> Latent Token -> (Waveform) -> (Circle with question mark) -> Latent Token -> Ans.

* Annotation: "Cold Stop" is placed above the waveform and circle.

* The flow includes a waveform symbol and a circle with a question mark within the latent token sequence.

4. **Latent Tokens From Assistant Models (SoftCoT, SemCoT ...):**

* Flow: Que. -> Latent Token -> Latent Token -> ... -> Latent Token -> Text Token -> Ans.

* Annotation: "Assistant Model" is placed below the latent tokens.

* The flow includes a combination of grey "Latent Tokens" and a final green "Text Token" before the answer.

5. **Dynamic Latent Token Generation with LT-Tuning (Ours):**

* Flow: Que. -> Latent Token -> Latent Token -> ... -> Latent Token -> Text Token -> Ans.

* Annotations: "Confidence-Driven, Context-Prediction Fusion" is placed above the latent tokens.

* The flow consists of a series of grey "Latent Tokens" and a final green "Text Token" before the answer. The "..." indicates an unspecified number of intermediate steps.

### Key Observations

* The primary distinction between the approaches lies in the type of tokens used for intermediate reasoning steps (Text vs. Latent).

* The "Explicit CoT Reasoning" approach uses only text tokens, while the others utilize latent tokens.

* The "Soft-Thinking" approach introduces visual elements (waveform and question mark) within the latent token sequence.

* The "Assistant Models" and "Dynamic Latent Token Generation" approaches combine latent and text tokens.

* The "Ours" approach (Dynamic Latent Token Generation) is highlighted with annotations indicating its key features.

### Interpretation

This diagram presents a comparative overview of different methods for enhancing Chain-of-Thought reasoning in language models. The approaches vary in how they represent and utilize intermediate reasoning steps. The shift from explicit text-based CoT to latent token-based approaches suggests an effort to capture more nuanced and potentially hidden reasoning processes within the model. The inclusion of visual elements in "Soft-Thinking" and the combination of latent and text tokens in the other methods indicate attempts to bridge the gap between internal model representations and human-interpretable reasoning steps. The "Ours" approach, with its emphasis on confidence-driven and context-prediction fusion, likely represents a novel contribution aimed at improving the accuracy and efficiency of latent token generation. The diagram effectively communicates the core concepts and differences between these approaches, providing a valuable visual aid for understanding the evolving landscape of CoT reasoning techniques. The use of "Que." and "Ans." is a simplification, but effectively conveys the input-process-output nature of these methods.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of Latent Token Generation Methods

### Overview

The image is a technical diagram comparing five different approaches for generating latent tokens in AI models, particularly for reasoning tasks. Each method is presented as a horizontal flowchart showing the sequence of processing from a question ("Que.") to an answer ("Ans."). The diagram uses a consistent visual language with colored boxes and arrows to represent different types of tokens and processing steps.

### Components/Axes

The diagram is divided into five horizontal sections, each with a title and a flowchart. A legend at the bottom defines the token types:

* **Gray Box**: Latent Token

* **Green Box**: Text Token

**Section Titles (from top to bottom):**

1. Explicit CoT Reasoning

2. Latent Tokens From Model Hidden States (Coconut)

3. Latent Tokens From Probability Weighted Interpolation (Soft-Thinking)

4. Latent Tokens From Assistant Models (SoftCoT, SemCoT ...)

5. Dynamic Latent Token Generation with LT-Tuning (Ours)

**Flowchart Elements:**

* **Que.**: Blue box, representing the input question.

* **Ans.**: White box, representing the output answer.

* **Arrows**: Indicate the flow of information or processing sequence.

* **Text Labels**: Annotations describing specific processes or constraints within a method.

### Detailed Analysis

**1. Explicit CoT Reasoning**

* **Flow**: `Que.` -> [Green Token] -> ... -> [Green Token] -> `Ans.`

* **Description**: This represents a standard Chain-of-Thought (CoT) approach in which the reasoning steps are explicit: intermediate text tokens (green) are generated between the question and the final answer.

**2. Latent Tokens From Model Hidden States (Coconut)**

* **Flow**: `Que.` -> [Gray Token] -> [Gray Token] -> [Gray Token] -> `Ans.`

* **Annotation**: "Fixed Number" is written above the sequence of three gray latent tokens.

* **Description**: This method (Coconut) generates a fixed number of latent tokens (gray) derived from the model's hidden states. These tokens are not human-readable text but are used internally for reasoning before producing the final answer.
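As a rough illustration of "a fixed number of latent tokens derived from hidden states", the sketch below feeds each hidden state back in as the next input for a preset number of steps. The `toy_step` function, the `weights` matrix, and the `num_latent` parameter are all hypothetical stand-ins for a real language model; this is not Coconut's actual implementation.

```python
import math

def toy_step(hidden, weights):
    """One toy forward pass: a tanh layer standing in for the model (assumption)."""
    return [math.tanh(sum(w * h for w, h in zip(row, hidden))) for row in weights]

def latent_rollout(question_embedding, weights, num_latent=3):
    """Reuse each hidden state as the next input for a fixed number of steps."""
    hidden = question_embedding
    trace = []
    for _ in range(num_latent):
        hidden = toy_step(hidden, weights)  # hidden state becomes the next input
        trace.append(hidden)
    return trace

# Hypothetical 2-dimensional example values.
weights = [[0.5, -0.2], [0.1, 0.8]]
trace = latent_rollout([1.0, 0.0], weights, num_latent=3)
print(len(trace))  # number of latent steps taken
```

The point of the sketch is that no text token is decoded between steps, and that the step count is chosen in advance rather than by the model.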

**3. Latent Tokens From Probability Weighted Interpolation (Soft-Thinking)**

* **Flow**: `Que.` -> [Green Token] -> [Gray Token with a small bar chart icon above it] -> [Green Token] -> `Ans.`

* **Annotation**: "Cold Stop" is written above a circular arrow pointing back to the gray token.

* **Description**: The Soft-Thinking method involves a mix of text and latent tokens. The gray token is annotated with a bar chart, suggesting it's generated via probability weighting or interpolation. The "Cold Stop" loop indicates a potential iterative or stopping condition based on confidence or probability.
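One plausible reading of "probability weighted interpolation" is that the latent token is the expectation of the token embeddings under the model's next-token distribution. The sketch below shows that arithmetic only; the tiny `embedding_table` and the function names are assumptions for illustration, and the "Cold Stop" condition is not modelled.

```python
import math

def softmax(logits):
    """Convert logits to probabilities in a numerically stable way."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def soft_token(probs, embedding_table):
    """Probability-weighted average of the rows of the embedding table."""
    dim = len(embedding_table[0])
    return [sum(p * row[d] for p, row in zip(probs, embedding_table))
            for d in range(dim)]

# Hypothetical 3-token vocabulary with 2-dimensional embeddings.
embedding_table = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
probs = softmax([0.0, 0.0, 0.0])          # equal logits give a uniform distribution
mixed = soft_token(probs, embedding_table)
print(mixed)
```

With a one-hot distribution the result collapses to a single token's embedding, which is why this kind of token can be seen as a soft generalisation of an ordinary text token.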

**4. Latent Tokens From Assistant Models (SoftCoT, SemCoT ...)**

* **Flow**: `Que.` -> [Dashed box containing two gray tokens] -> [Green Token] -> [Green Token] -> `Ans.`

* **Annotation**: An arrow points from a blue box labeled "Assistant Model" to the dashed box containing the latent tokens.

* **Description**: This approach uses separate "Assistant Models" to generate the initial latent tokens (gray). These tokens are then processed by the main model to produce explicit text tokens (green) leading to the answer. The dashed box groups the assistant-generated latent tokens.

**5. Dynamic Latent Token Generation with LT-Tuning (Ours)**

* **Flow**: `Que.` -> [Gray Token] -> [Green Token] -> ... -> [Gray Token] -> [Green Token] -> `Ans.`

* **Annotation**: "Confidence-Driven, Context-Prediction Fusion" is written in red above the sequence.

* **Description**: The proposed method ("Ours") features a dynamic, interleaved sequence of latent (gray) and text (green) tokens. The red annotation specifies the core mechanisms: generation is driven by model confidence and involves fusing context predictions. This suggests an adaptive process where the model decides when to use latent vs. explicit reasoning steps.
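The diagram only names the mechanisms, so the following is a guess at what a confidence-driven switch with a fusion step could look like, not the authors' LT-Tuning procedure. The threshold rule, the linear blend, and the names `choose_token_type`, `fuse`, `threshold`, and `alpha` are all assumptions.

```python
def choose_token_type(probs, threshold=0.7):
    """Emit a text token when the top probability is high enough, else a latent one.

    The threshold rule is an illustrative assumption, not taken from the source.
    """
    return "text" if max(probs) >= threshold else "latent"

def fuse(context_vec, predicted_vec, alpha=0.5):
    """Blend a context vector with a prediction vector (hypothetical fusion rule)."""
    return [alpha * c + (1 - alpha) * p for c, p in zip(context_vec, predicted_vec)]

# Hypothetical next-token distributions for three consecutive steps.
steps = [[0.9, 0.05, 0.05], [0.4, 0.35, 0.25], [0.8, 0.1, 0.1]]
plan = [choose_token_type(p) for p in steps]
print(plan)
print(fuse([1.0, 0.0], [0.0, 1.0]))
```

Under these assumptions the number and position of latent tokens depend on the input, which is the contrast with the "Fixed Number" annotation on the Coconut row.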

### Key Observations

1. **Progression of Complexity**: The diagram shows an evolution from purely explicit text reasoning (CoT) to purely latent reasoning (Coconut), then to hybrid models (Soft-Thinking, Assistant Models), culminating in a dynamic, interleaved approach (LT-Tuning).

2. **Token Type Legend**: The consistent use of green for text tokens and gray for latent tokens is critical for interpreting each method's strategy.

3. **Spatial Grounding**: The legend is positioned at the bottom-center. Each method's flowchart is left-aligned within its section. Annotations are placed directly above the relevant part of the flow they describe.

4. **Proposed Method Distinction**: The final method is labeled "(Ours)" and uses red text for its key mechanism, visually setting it apart as the authors' contribution. Its flow is the most complex, showing a non-uniform, interleaved pattern of token types.
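The explicit / latent / hybrid grouping in these observations can be checked mechanically by encoding each flow as a list of token types. The sketch below uses the flows as described in this section, with the elided "..." steps simply left out; the shortened method names and the `classify` helper are illustrative choices.

```python
# Token-type sequences as described in the Detailed Analysis above.
# Steps elided with "..." in the diagram are omitted here, not filled in.
FLOWS = {
    "Explicit CoT Reasoning": ["text", "text"],
    "Coconut": ["latent", "latent", "latent"],
    "Soft-Thinking": ["text", "latent", "text"],
    "Assistant Models": ["latent", "latent", "text", "text"],
    "LT-Tuning": ["latent", "text", "latent", "text"],
}

def classify(flow):
    """Label a flow by which token types appear in it."""
    kinds = set(flow)
    if kinds == {"text"}:
        return "explicit"
    if kinds == {"latent"}:
        return "latent"
    return "hybrid"

labels = {name: classify(flow) for name, flow in FLOWS.items()}
for name, label in labels.items():
    print(f"{name}: {label}")
```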

### Interpretation

This diagram serves as a conceptual taxonomy and a pitch for a new method. It visually argues that existing approaches to latent reasoning fall into distinct categories: fully explicit, fully latent, or hybrid with fixed patterns. The proposed "LT-Tuning" method is presented as a more advanced, dynamic alternative.

The core innovation suggested is **adaptive reasoning granularity**. Instead of committing to a fixed number of latent steps (Coconut) or a simple alternation, the model using LT-Tuning can flexibly generate latent tokens for internal computation and text tokens for explicit reasoning at points where they are most useful, guided by confidence and context prediction. This aims to combine the interpretability of chain-of-thought with the efficiency and power of latent reasoning, potentially leading to more robust and capable AI reasoning systems. The diagram effectively communicates that the field is moving from static reasoning pathways toward dynamic, model-controlled reasoning processes.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

# Technical Document Extraction: Latent Token Generation Methods

## Diagram Overview

The image presents a comparative analysis of latent token generation methodologies in language models, structured as a multi-stage flowchart. The diagram uses color-coded rectangles to represent different token types and processes.

## Key Components

1. **Token Representation**

   - **Latent Tokens**: Gray rectangles (Legend: "Latent Token")

   - **Text Tokens**: Green rectangles (Legend: "Text Token")

   - **Question Input**: Blue rectangle labeled "Que."

   - **Answer Output**: White rectangle labeled "Ans."

2. **Methodology Sections**

### 1. Explicit CoT Reasoning

- Flow: `Que. → Text Token → ... → Text Token → Ans.`

- Description: Traditional Chain-of-Thought reasoning using explicit text tokens

### 2. Latent Tokens From Model Hidden States (Coconut)

- Flow: `Que. → Gray Token (Fixed Number) → ... → Gray Token → Ans.`

- Description: A fixed number of latent tokens derived from the model's hidden states

### 3. Latent Tokens From Probability Weighted Interpolation (Soft-Thinking)

- Flow: `Que. → Text Token → Gray Token (Probability Weighted) → ... → Text Token → Ans.`

- Description: Soft-thinking approach using probability-weighted latent token interpolation with cold stopping

### 4. Latent Tokens From Assistant Models (SoftCoT, SemCoT ...)

- Flow: `Que. → Gray Token → ... → Gray Token → Text Token → ... → Text Token → Ans.`

- Description: Hybrid approach combining assistant models with text token generation

### 5. Dynamic Latent Token Generation with LT-Tuning (Ours)

- Flow: `Que. → Text Token → Gray Token → ... → Text Token → Ans.`

- Description: Confidence-driven, context-prediction fusion approach with LT-Tuning

- Unique Feature: Red text annotation "Confidence-Driven, Context-Prediction Fusion"

## Spatial Analysis

- **Legend Position**: Bottom-right corner

- **Color Consistency**:

  - All gray rectangles match "Latent Token" legend

  - All green rectangles match "Text Token" legend

## Methodological Progression

1. **Baseline**: Explicit text-based reasoning (CoT)

2. **Intermediate**:

   - Fixed latent token extraction (Coconut)

   - Probability-weighted latent tokens (Soft-Thinking)

   - Assistant model integration (SoftCoT/SemCoT)

3. **Advanced**: Dynamic LT-Tuning with confidence-driven fusion

## Technical Implications

- Demonstrates evolution from purely text-based reasoning to hybrid latent-text approaches

- Highlights increasing complexity in token generation strategies

- Emphasizes confidence and context prediction in state-of-the-art methods

## Limitations

- No quantitative performance metrics provided

- No explicit comparison of computational efficiency

- No error analysis or failure modes discussed

## Conclusion

The diagram illustrates a progression toward more sophisticated latent token generation methods, culminating in the proposed LT-Tuning approach that combines confidence-driven mechanisms with context prediction fusion.
