
## Diagram: Chain-of-Thought Reasoning Approaches

### Overview

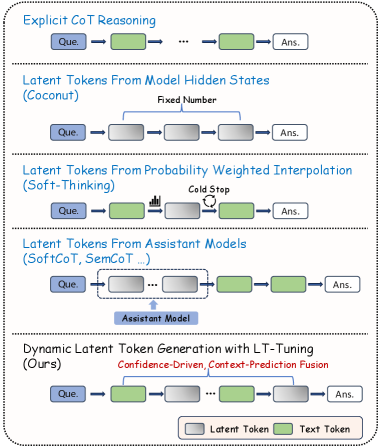

The diagram compares five approaches to Chain-of-Thought (CoT) reasoning in large language models. Each approach is drawn as a sequential flow from "Que." (presumably the query or question) to "Ans." (the answer), with the intermediate reasoning steps drawn as tokens. Two token types are distinguished by color: "Latent Tokens" (grey) and "Text Tokens" (green).

### Components/Axes

The diagram is structured into five horizontal sections, each representing a different CoT reasoning method. Each section contains the following elements:

* **Que.** (Query): The starting point of the reasoning process.

* **Arrows:** Representing the flow of information.

* **Tokens:** Representing intermediate reasoning steps. These are either "Latent Tokens" (grey squares) or "Text Tokens" (green rectangles).

* **Ans.** (Answer): The final output of the reasoning process.

* **Titles:** Each section has a title describing the CoT approach.

* **Annotations:** Some sections have additional text annotations describing specific aspects of the method.

* **Legend:** Located at the bottom-right, defining the colors for "Latent Token" and "Text Token".

The five approaches are:

1. Explicit CoT Reasoning

2. Latent Tokens From Model Hidden States (Coconut)

3. Latent Tokens From Probability Weighted Interpolation (Soft-Thinking)

4. Latent Tokens From Assistant Models (SoftCoT, SemCoT ...)

5. Dynamic Latent Token Generation with LT-Tuning (Ours)

### Detailed Analysis

Each of the five sections is described in turn:

1. **Explicit CoT Reasoning:**

* Flow: Que. -> Text Token -> ... -> Text Token -> Ans.

* The flow consists of a series of green "Text Tokens" between the query and the answer. The "..." indicates an unspecified number of intermediate steps.

2. **Latent Tokens From Model Hidden States (Coconut):**

* Flow: Que. -> Latent Token -> Latent Token -> ... -> Latent Token -> Ans.

* Annotation: "Fixed Number" is placed above the latent tokens.

* The flow consists of a series of grey "Latent Tokens" between the query and the answer. The "..." stands for latent tokens omitted from the drawing; per the "Fixed Number" annotation, their count is fixed in advance rather than variable.

3. **Latent Tokens From Probability Weighted Interpolation (Soft-Thinking):**

* Flow: Que. -> Latent Token -> (Waveform) -> (Circle with question mark) -> Latent Token -> Ans.

* Annotation: "Cold Stop" is placed above the waveform and circle.

* The flow includes a waveform symbol and a circle with a question mark within the latent token sequence.

4. **Latent Tokens From Assistant Models (SoftCoT, SemCoT ...):**

* Flow: Que. -> Latent Token -> Latent Token -> ... -> Latent Token -> Text Token -> Ans.

* Annotation: "Assistant Model" is placed below the latent tokens.

* The flow includes a combination of grey "Latent Tokens" and a final green "Text Token" before the answer.

5. **Dynamic Latent Token Generation with LT-Tuning (Ours):**

* Flow: Que. -> Latent Token -> Latent Token -> ... -> Latent Token -> Text Token -> Ans.

* Annotations: "Confidence-Driven, Context-Prediction Fusion" is placed above the latent tokens.

* The flow consists of a series of grey "Latent Tokens" and a final green "Text Token" before the answer. The "..." indicates an unspecified number of intermediate steps.
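Two of the titles above name their mechanism directly, and a toy sketch may make the contrast concrete. The code below is an illustration only, not the implementation of any of the methods in the diagram: `toy_step`, `EMBEDDINGS`, the dimensions, and every numeric value are hypothetical. It shows (a) a fixed number of latent steps in which a hidden state is fed back as the next input, as the Coconut row suggests, and (b) a latent token formed as a probability-weighted mix of token embeddings, as the Soft-Thinking title suggests.

```python
import math

def softmax(logits):
    """Turn raw scores into a probability distribution."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def soft_token(probs, embedding_table):
    """Probability-weighted mix of token embeddings: one latent token."""
    dim = len(embedding_table[0])
    return [sum(p * row[d] for p, row in zip(probs, embedding_table))
            for d in range(dim)]

def toy_step(state):
    """Hypothetical stand-in for one model forward pass on a latent input."""
    return [0.5 * x + 0.1 for x in state]

def fixed_latent_rollout(start, num_steps):
    """Feed each hidden state back as the next input, num_steps times."""
    states, state = [], start
    for _ in range(num_steps):
        state = toy_step(state)
        states.append(state)
    return states

# Toy vocabulary of three tokens with 2-dimensional embeddings (made-up values).
EMBEDDINGS = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

probs = softmax([2.0, 1.0, 0.0])
latent = soft_token(probs, EMBEDDINGS)         # a blend of all three embeddings
rollout = fixed_latent_rollout([1.0, 0.0], 3)  # exactly three latent states
```

In this sketch a one-hot distribution reproduces a single token's embedding, so an ordinary text token is the special case in which all probability mass sits on one vocabulary entry.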

### Key Observations

* The primary distinction between the approaches lies in the type of tokens used for intermediate reasoning steps (Text vs. Latent).

* The "Explicit CoT Reasoning" approach uses only text tokens, while the others utilize latent tokens.

* The "Soft-Thinking" approach is the only one whose flow contains non-token symbols: a waveform and a circle with a question mark, annotated "Cold Stop".

* The "Assistant Models" and "Dynamic Latent Token Generation" approaches combine latent and text tokens.

* The "Ours" approach (Dynamic Latent Token Generation) carries the annotation "Confidence-Driven, Context-Prediction Fusion", which names its key features.
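The observations above can be checked mechanically by encoding each row as data. The sketch below transcribes the five "Flow" descriptions into lists; the short row names and the step labels (`"latent"`, `"text"`, `"symbol"`) are shorthand chosen for this example, and the "..." ellipses are left out.

```python
# Each row of the diagram as an ordered list of intermediate step kinds.
# "symbol" marks the waveform and question-mark circle in the Soft-Thinking row.
FLOWS = {
    "Explicit CoT": ["text", "text"],
    "Coconut": ["latent", "latent", "latent"],
    "Soft-Thinking": ["latent", "symbol", "symbol", "latent"],
    "Assistant Models": ["latent", "latent", "latent", "text"],
    "LT-Tuning (Ours)": ["latent", "latent", "latent", "text"],
}

def token_kinds(flow):
    """Return the set of token types in a flow, ignoring non-token symbols."""
    return {step for step in flow if step in ("latent", "text")}

# Rows that use text tokens only.
text_only = [name for name, flow in FLOWS.items()
             if token_kinds(flow) == {"text"}]

# Rows that combine latent and text tokens.
mixed = [name for name, flow in FLOWS.items()
         if token_kinds(flow) == {"latent", "text"}]

print(text_only)  # ['Explicit CoT']
print(mixed)      # ['Assistant Models', 'LT-Tuning (Ours)']
```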

### Interpretation

The diagram compares methods for representing the intermediate steps of Chain-of-Thought reasoning in language models. Explicit CoT writes every step as text, whereas the other four approaches move some or all of the reasoning into latent tokens. This shift suggests an effort to carry reasoning in the model's internal representations rather than in generated text alone. The symbols in the "Soft-Thinking" row and the mix of latent and text tokens in the last two rows point to attempts to bridge internal model representations and human-interpretable reasoning steps. The "Ours" approach, annotated "Confidence-Driven, Context-Prediction Fusion", likely represents the novel contribution, aimed at improving how latent tokens are generated, although the diagram alone does not show how this is done. The labels "Que." and "Ans." are a simplification that reduces each method to an input-process-output flow.