# Technical Document Extraction: Latent Token Generation Methods

## Diagram Overview

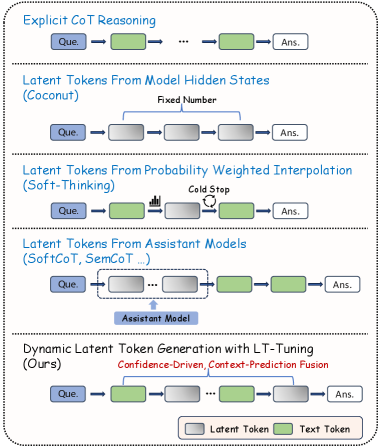

The figure compares five approaches to generating latent tokens in language models. Each approach is drawn as a flow from a question input to an answer output, with color-coded rectangles marking the token types involved.

## Key Components

1. **Token Representation**

   - **Latent Tokens**: Gray rectangles (Legend: "Latent Token")

   - **Text Tokens**: Green rectangles (Legend: "Text Token")

   - **Question Input**: Blue rectangle labeled "Que."

   - **Answer Output**: White rectangle labeled "Ans."

2. **Methodology Sections**

### 1. Explicit CoT Reasoning

- Flow: `Que. → Text Token → ... → Text Token → Ans.`

- Description: Traditional Chain-of-Thought reasoning using explicit text tokens

### 2. Latent Tokens From Model Hidden States (Coconut)

- Flow: `Que. → Gray Token (Fixed Number) → ... → Gray Token → Ans.`

- Description: A fixed number of latent tokens is taken directly from the model's hidden states
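
The flow above can be illustrated with a minimal sketch. It assumes that "latent tokens from model hidden states" means the last hidden state is appended to the input sequence as the next input vector instead of being decoded to text. The names `toy_hidden_state` and `latent_rollout`, the tanh layer, and the vector sizes are hypothetical stand-ins for a real transformer forward pass, not the method's actual implementation.

```python
import math
import random

def toy_hidden_state(inputs, weights):
    """Hypothetical stand-in for a forward pass: returns one hidden-state vector."""
    dim = len(weights)
    # Average the input vectors, then apply a single tanh layer.
    mean = [sum(vec[j] for vec in inputs) / len(inputs) for j in range(dim)]
    return [math.tanh(sum(weights[i][j] * mean[j] for j in range(dim)))
            for i in range(dim)]

def latent_rollout(question_embeddings, weights, num_latent):
    """Append a fixed number of hidden states to the sequence as latent tokens."""
    sequence = list(question_embeddings)
    for _ in range(num_latent):
        hidden = toy_hidden_state(sequence, weights)
        sequence.append(hidden)  # fed back as an input vector, never decoded to text
    return sequence

rng = random.Random(0)
dim = 4
weights = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]
question = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(3)]

sequence = latent_rollout(question, weights, num_latent=2)
print(len(sequence))  # 3 question vectors + 2 latent tokens
```

The number of latent steps is a fixed argument here, which mirrors the "Fixed Number" label in the flow.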

### 3. Latent Tokens From Probability Weighted Interpolation (Soft-Thinking)

- Flow: `Que. → Text Token → Gray Token (Probability Weighted) → ... → Text Token → Ans.`

- Description: Soft-thinking approach using probability-weighted latent token interpolation with cold stopping
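
As a rough sketch of the idea, a probability-weighted latent token can be written as the expectation of the embedding table under the next-token distribution. The stopping check below assumes that "cold stopping" refers to an entropy-based early exit that fires once the distribution has stayed confident for several consecutive steps. The function names, `threshold`, and `patience` are illustrative assumptions.

```python
import math

def soft_token(probs, embedding_table):
    """Probability-weighted mix of token embeddings: sum_i p_i * E[i]."""
    dim = len(embedding_table[0])
    return [sum(p * row[j] for p, row in zip(probs, embedding_table))
            for j in range(dim)]

def entropy(probs):
    """Shannon entropy in nats; zero-probability entries contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def should_stop(entropies, threshold, patience):
    """Assumed stopping rule: the last `patience` entropies are all below `threshold`."""
    recent = entropies[-patience:]
    return len(recent) == patience and all(h < threshold for h in recent)

# Toy vocabulary of three tokens with 2-dimensional embeddings.
embedding_table = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
probs = [0.5, 0.25, 0.25]

latent = soft_token(probs, embedding_table)
print(latent)  # [0.75, 0.5]
```

A one-hot distribution reduces `soft_token` to an ordinary embedding lookup, so a discrete text token is the limiting case of this interpolation.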

### 4. Latent Tokens From Assistant Models (SoftCoT, SemCoT ...)

- Flow: `Que. → Gray Token → ... → Gray Token → Text Token → ... → Text Token → Ans.`

- Description: Hybrid approach combining assistant models with text token generation
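
One way to picture this flow is sketched below, assuming the assistant model's hidden states are mapped by a linear projection into the main model's embedding space and placed after the question, before any text tokens are generated. The helper names `project` and `build_prompt` and the toy dimensions are hypothetical.

```python
def project(vector, matrix):
    """Linear map from the assistant's hidden size to the main model's embedding size."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def build_prompt(question_embeddings, assistant_states, projection):
    """Question vectors followed by projected latent tokens; text reasoning comes after."""
    latent_tokens = [project(state, projection) for state in assistant_states]
    return list(question_embeddings) + latent_tokens

# Assistant hidden size 2, main-model embedding size 3 (toy values).
projection = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
question_embeddings = [[0.0, 0.0, 1.0]]
assistant_states = [[2.0, 3.0], [1.0, -1.0]]

prompt = build_prompt(question_embeddings, assistant_states, projection)
print(prompt[1])  # [2.0, 3.0, 5.0]
```

The main model would then continue from `prompt` with ordinary text tokens, which matches the gray-then-green ordering in the flow.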

### 5. Dynamic Latent Token Generation with LT-Tuning (Ours)

- Flow: `Que. → Text Token → Gray Token → ... → Text Token → Ans.`

- Description: Confidence-driven, context-prediction fusion approach with LT-Tuning

- Unique Feature: Red text annotation "Confidence-Driven, Context-Prediction Fusion"
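
The figure does not specify how the confidence signal or the fusion is computed, so the following is only a sketch under stated assumptions: confidence is taken as the maximum next-token probability, and a latent token is a convex combination of the current hidden state (context) and the probability-weighted embedding (prediction). The function `next_input` and the parameters `threshold` and `alpha` are hypothetical.

```python
def next_input(probs, hidden_state, embedding_table, threshold=0.6, alpha=0.5):
    """Return ("text", embedding) when confident, else ("latent", fused vector)."""
    confidence = max(probs)  # assumption: confidence = top-1 probability
    if confidence >= threshold:
        token_id = probs.index(confidence)
        return "text", list(embedding_table[token_id])
    dim = len(hidden_state)
    # Prediction side: expected embedding under the next-token distribution.
    predicted = [sum(p * row[j] for p, row in zip(probs, embedding_table))
                 for j in range(dim)]
    # Assumed fusion: weighted sum of context (hidden state) and prediction.
    fused = [alpha * h + (1 - alpha) * e for h, e in zip(hidden_state, predicted)]
    return "latent", fused

embedding_table = [[1.0, 0.0], [0.0, 1.0]]
hidden_state = [0.0, 1.0]

confident = next_input([0.9, 0.1], hidden_state, embedding_table)
uncertain = next_input([0.5, 0.5], hidden_state, embedding_table)
print(confident)  # ('text', [1.0, 0.0])
print(uncertain)  # ('latent', [0.25, 0.75])
```

Because the choice is made per step, text and latent tokens can interleave freely, which is consistent with the mixed flow shown for this method.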

## Spatial Analysis

- **Legend Position**: Bottom-right corner

- **Color Consistency**:

  - All gray rectangles match "Latent Token" legend

  - All green rectangles match "Text Token" legend

## Methodological Progression

1. **Baseline**: Explicit text-based reasoning (CoT)

2. **Intermediate**:

   - Fixed latent token extraction (Coconut)

   - Probability-weighted latent tokens (Soft-Thinking)

   - Assistant model integration (SoftCoT/SemCoT)

3. **Advanced**: Dynamic LT-Tuning with confidence-driven fusion

## Technical Implications

- Shows a shift from purely text-based reasoning to approaches that mix latent and text tokens

- Highlights how token generation strategies grow more complex, from a fixed number of latent tokens to dynamically placed ones

- Emphasizes confidence and context prediction as the distinguishing elements of the proposed method

## Limitations

- No quantitative performance metrics provided

- No explicit comparison of computational efficiency

- No error analysis or failure modes discussed

## Conclusion

The diagram illustrates a progression toward more sophisticated latent token generation methods, culminating in the proposed LT-Tuning approach, which combines a confidence-driven mechanism with context-prediction fusion.