## Diagram Set: Neuro-Symbolic AI Integration Schematics

### Overview

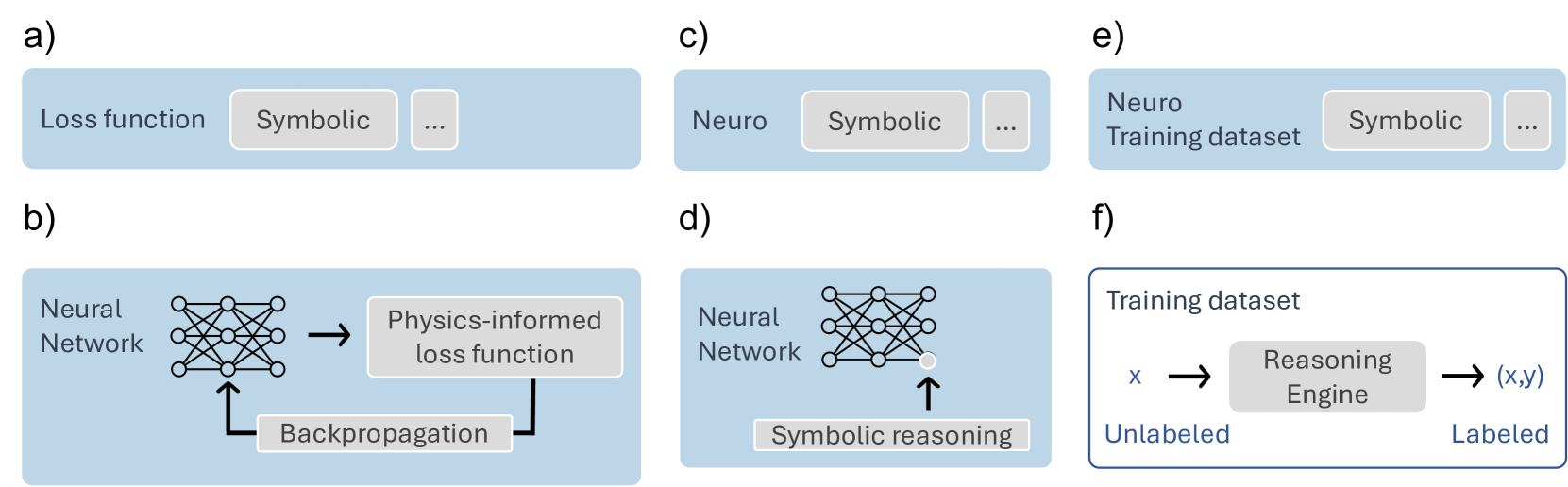

The image displays six schematic diagrams, labeled a) through f), illustrating different conceptual approaches to integrating neural ("Neuro") and symbolic ("Symbolic") components within artificial intelligence systems. The diagrams are arranged in a grid of two rows and three columns on a white background, with the labels running down each column: a), c), and e) form the top row, and b), d), and f) form the bottom row. Each diagram is contained within a light blue rectangular box, except for diagram f), which has a white background with a blue border. The diagrams use simple icons (a neural network graph, text boxes, arrows) to represent components and data flow.

### Components/Axes

There are no traditional chart axes. The components are labeled text boxes and icons. The key elements across all diagrams are:

* **Neural Network Icon:** A stylized graph of interconnected nodes, representing a neural network model.

* **Text Boxes:** Labeled with specific functions or components (e.g., "Loss function", "Symbolic", "Physics-informed loss function", "Reasoning Engine").

* **Arrows:** Indicate the direction of data flow, influence, or process steps.

* **Ellipsis (...):** Appears in diagrams a), c), and e), suggesting additional, unspecified components or steps.

### Detailed Analysis

The image is segmented into six independent panels. Each is analyzed below:

**a) Top-Left Panel**

* **Content:** A light blue box containing the text "Loss function" on the left, a gray box labeled "Symbolic" in the center, and a smaller gray box with an ellipsis "..." on the right.

* **Interpretation:** Suggests a symbolic component is part of or influences the loss function in a training process.
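One common way a symbolic component can enter a loss function is as a penalty for violating a logical rule. The following is a minimal sketch of that idea, not the method depicted in the panel: the rule "bird implies animal", the function names, and the `rule_weight` parameter are all hypothetical choices made for illustration.

```python
import math

def binary_cross_entropy(p, y):
    """Data term: cross-entropy for one predicted probability p and label y."""
    eps = 1e-12  # avoids log(0)
    return -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))

def rule_penalty(p_bird, p_animal):
    """Symbolic term for the hypothetical rule: bird -> animal.

    The rule is violated to the extent that p_bird exceeds p_animal.
    """
    return max(0.0, p_bird - p_animal)

def total_loss(p_bird, p_animal, y_bird, y_animal, rule_weight=1.0):
    """Data loss plus a weighted penalty for breaking the symbolic rule."""
    data = binary_cross_entropy(p_bird, y_bird) + binary_cross_entropy(p_animal, y_animal)
    return data + rule_weight * rule_penalty(p_bird, p_animal)

# Same two probabilities, assigned consistently and inconsistently with the rule.
consistent = total_loss(p_bird=0.8, p_animal=0.9, y_bird=1, y_animal=1)
violating = total_loss(p_bird=0.9, p_animal=0.8, y_bird=1, y_animal=1)
```

Both calls have the same data loss, so the gap between `violating` and `consistent` (about 0.1) comes entirely from the rule penalty.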

**b) Bottom-Left Panel**

* **Content:** A light blue box showing a process flow.

    * Left: Text "Neural Network" next to a neural network icon.

    * Center: A right-pointing arrow leads to a gray box labeled "Physics-informed loss function".

    * Bottom: A gray box labeled "Backpropagation" has an arrow pointing up to the neural network icon.

* **Flow:** Neural Network -> Physics-informed loss function. The loss function's output is used for Backpropagation, which updates the Neural Network.
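The loop in this panel (model output, physics-informed loss, gradient update) can be sketched in a few lines. This is a toy illustration under strong assumptions, not a real physics-informed network: a one-parameter model `u(t) = exp(w * t)` stands in for the neural network, the physical law is assumed to be `du/dt = -K * u` with a hypothetical constant `K`, and a hand-derived gradient stands in for backpropagation.

```python
import math

K = 2.0  # assumed decay constant in the physical law du/dt = -K * u

def residual(w, t):
    """Physics residual du/dt + K*u for the model u(t) = exp(w * t)."""
    u = math.exp(w * t)
    du_dt = w * u
    return du_dt + K * u

def physics_loss(w, ts):
    """Mean squared residual over the collocation points ts."""
    return sum(residual(w, t) ** 2 for t in ts) / len(ts)

def physics_loss_grad(w, ts):
    """Hand-derived dL/dw; plays the role of backpropagation in this toy."""
    total = 0.0
    for t in ts:
        u = math.exp(w * t)
        r = (w + K) * u                   # same value as residual(w, t)
        dr_dw = u * (1.0 + (w + K) * t)   # derivative of the residual w.r.t. w
        total += 2.0 * r * dr_dw
    return total / len(ts)

def train(w=0.0, lr=0.05, steps=3000):
    ts = [i / 10 for i in range(11)]  # collocation points in [0, 1]
    for _ in range(steps):
        w -= lr * physics_loss_grad(w, ts)  # gradient step updates the model
    return w, physics_loss(w, ts)

w_fit, final_loss = train()
```

No labeled data is used here: the parameter `w` is driven toward `-K` purely because that value makes the residual of the assumed physical law vanish.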

**c) Top-Center Panel**

* **Content:** A light blue box containing the text "Neuro" on the left, a gray box labeled "Symbolic" in the center, and a smaller gray box with an ellipsis "..." on the right.

* **Interpretation:** A high-level representation of a neuro-symbolic system, implying a "Neuro" component works alongside a "Symbolic" component and others.

**d) Bottom-Center Panel**

* **Content:** A light blue box.

    * Left: Text "Neural Network" next to a neural network icon.

    * Bottom: A gray box labeled "Symbolic reasoning" has an arrow pointing up to the neural network icon.

* **Flow:** Symbolic reasoning provides input or constraints directly to the Neural Network.
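The panel does not say how symbolic reasoning acts on the network. One possible reading, sketched below, is that symbolic rules constrain the network's outputs by ruling out classes before the softmax. Everything here is an assumption made for illustration: the rule base, the class names, and the logits are hypothetical.

```python
import math

def symbolic_allowed(classes, facts):
    """Hypothetical rule base: an object known to have wheels cannot be an animal."""
    animals = {"cat", "dog"}
    if facts.get("has_wheels"):
        return [c not in animals for c in classes]
    return [True] * len(classes)

def constrained_softmax(logits, allowed):
    """Softmax over the allowed classes only; forbidden classes get probability 0."""
    kept = [z for z, ok in zip(logits, allowed) if ok]
    if not kept:
        raise ValueError("the symbolic rules exclude every class")
    m = max(kept)  # subtract the max for numerical stability
    exps = [math.exp(z - m) if ok else 0.0 for z, ok in zip(logits, allowed)]
    total = sum(exps)
    return [e / total for e in exps]

classes = ["cat", "dog", "car", "bicycle"]
logits = [2.0, 1.0, 1.5, 0.5]  # stand-in for raw neural network outputs
mask = symbolic_allowed(classes, {"has_wheels": True})
probs = constrained_softmax(logits, mask)
```

Although "cat" has the highest raw logit, the rule removes it, and the remaining probability mass is shared between "car" and "bicycle".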

**e) Top-Right Panel**

* **Content:** A light blue box containing the text "Neuro Training dataset" on the left, a gray box labeled "Symbolic" in the center, and a smaller gray box with an ellipsis "..." on the right.

* **Interpretation:** Suggests a symbolic component is involved in the creation or processing of the training dataset for a neural model.

**f) Bottom-Right Panel**

* **Content:** A white box with a blue border, titled "Training dataset".

    * Left: Text "Unlabeled" below the variable "x".

    * Center: A right-pointing arrow leads to a gray box labeled "Reasoning Engine".

    * Right: A right-pointing arrow leads from the engine to the tuple "(x,y)", with the text "Labeled" below it.

* **Flow:** Unlabeled data `x` is processed by a Reasoning Engine to produce labeled data `(x,y)`. This depicts a symbolic system generating labels for a dataset.
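The flow in this panel can be sketched as a rule-based function that maps each unlabeled `x` to a pair `(x, y)`. The triangle-classification rules below are a hypothetical stand-in for the "Reasoning Engine"; the diagram does not specify what the engine reasons about.

```python
def reasoning_engine(x):
    """Hypothetical rule-based labeler: classify a triangle from its side lengths."""
    a, b, c = sorted(x)
    if a <= 0 or a + b <= c:
        return "invalid"        # violates the triangle inequality
    if a == c:
        return "equilateral"    # smallest equals largest, so all sides are equal
    if a == b or b == c:
        return "isosceles"
    return "scalene"

def label_dataset(unlabeled):
    """Turn each unlabeled x into a labeled pair (x, y)."""
    return [(x, reasoning_engine(x)) for x in unlabeled]

unlabeled = [(3, 3, 3), (3, 4, 4), (3, 4, 5), (1, 2, 3)]
labeled = label_dataset(unlabeled)
```

The resulting `labeled` list has the `(x, y)` shape shown on the right of the panel and could serve as training data for a neural model.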

### Key Observations

1. **Integration Points:** The diagrams highlight four primary integration points for neuro-symbolic AI:

    * **Loss Function Design** (a, b): Symbolic knowledge or physics constraints are embedded into the objective function.

    * **Direct Model Influence** (d): Symbolic reasoning modules directly influence the neural network's parameters or activations.

    * **Dataset Curation** (e, f): Symbolic systems are used to create, label, or structure training data.

    * **High-Level Architecture** (c): A generic representation of combined neuro and symbolic components.

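2. **Flow Direction:** Diagrams b), d), and f) explicitly show directional flow. In b), the network's output feeds the physics-informed loss function, and backpropagation carries the result back to the network. In d), symbolic reasoning points directly at the neural network. In f), the symbolic engine transforms data from unlabeled to labeled.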

3. **Visual Consistency:** The neural network icon is identical in b) and d). The "Symbolic" text box is visually consistent in a), c), and e). The ellipsis box is consistent in a), c), and e).

### Interpretation

This set of diagrams serves as a taxonomy of fundamental strategies for combining neural and symbolic AI paradigms. The top row (a, c, e) gives abstract representations, and the bottom row (b, d, f) gives more concrete process flows; each bottom panel appears to elaborate the panel directly above it.

* **What it demonstrates:** The core idea is that symbolic knowledge (rules, logic, physics) can be injected into the neural AI pipeline at different stages: during data preparation, within the model's objective function, or as a direct input to the model itself. Diagram f) is particularly notable as it shows a pure symbolic system ("Reasoning Engine") acting as a data labeler for a subsequent neural training process.

* **Relationships:** The diagrams contrast "soft" integration (where symbolic elements are part of a larger pipeline, as in a, b, e, f) with "hard" integration (where symbolic reasoning directly acts on the neural network, as in d). Panel b) specifically illustrates a popular sub-field: Physics-Informed Neural Networks (PINNs), where known physical laws (symbolic knowledge) are encoded into the loss function.

* **Notable Patterns:** The consistent use of the ellipsis in a), c), and e) implies these are simplified views of more complex systems. The shift from a blue background to a white background in f) may visually distinguish the data generation/labeling process from the model training processes shown in the other panels. No panel shows a neural network feeding a symbolic reasoning system (the forward arrow in b) ends at a loss function), which suggests the focus here is on using symbolic AI to enhance or structure neural AI, not the reverse.