## Diagram: Neuro-Symbolic System Architecture

### Overview

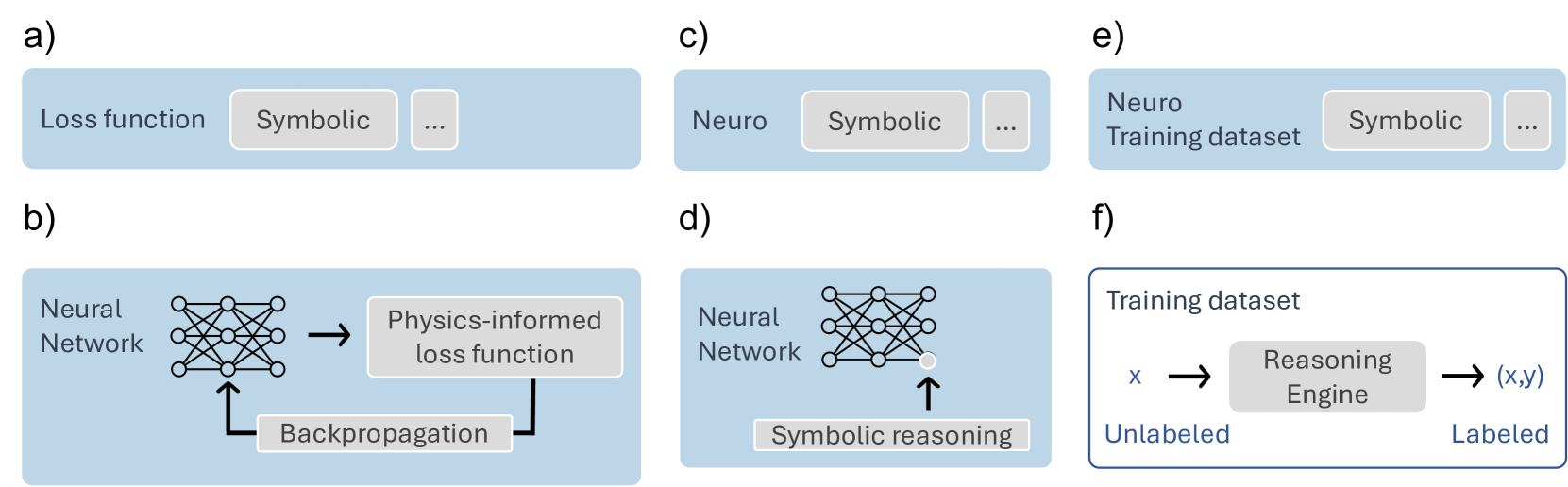

The image depicts a six-panel diagram illustrating the integration of neural networks with symbolic reasoning systems. Panels a-f show components like loss functions, neural networks, physics-informed training, and neuro-symbolic datasets.

### Components/Axes

- **Panel a**:
  - **Labels**: "Loss function", "Symbolic", "..."
  - **Description**: A horizontal bar representing a loss function with symbolic components.

- **Panel b**:
  - **Labels**: "Neural Network", "Physics-informed loss function", "Backpropagation"
  - **Description**: A neural network (6-layer) connected to a physics-informed loss function via backpropagation.

- **Panel c**:
  - **Labels**: "Neuro", "Symbolic", "..."
  - **Description**: A neuro-symbolic system combining neural and symbolic elements.

- **Panel d**:
  - **Labels**: "Neural Network", "Symbolic reasoning"
  - **Description**: A neural network with an upward arrow labeled "Symbolic reasoning," indicating integration.

- **Panel e**:
  - **Labels**: "Neuro Training dataset", "Symbolic", "..."
  - **Description**: A dataset combining neuro and symbolic elements.

- **Panel f**:
  - **Labels**: "Training dataset", "x → Reasoning Engine → (x,y)", "Unlabeled", "Labeled"
  - **Description**: A dataset processed by a reasoning engine to generate labeled outputs.

### Detailed Analysis

- **Panel b**: The physics-informed loss function is linked to the neural network by backpropagation: gradients of the loss flow back through the network to update its weights, so physics constraints guide training.

- **Panel d**: Symbolic reasoning is positioned as an output or feedback mechanism for the neural network.

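- **Panel f**: The reasoning engine maps unlabeled inputs (x) to labeled pairs (x, y), so labels are derived automatically rather than annotated by hand. This resembles weak or semi-supervised learning, although the figure does not name the training regime.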
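The training loop suggested by Panel b can be sketched in a few lines. This is only an illustration under stated assumptions, not the method behind the figure: a quadratic model stands in for the six-layer network, the physics constraint is free fall (`u''(t) = -G`), gradients are written by hand where a real framework would use backpropagation, and all names (`model`, `physics_residual`, `total_loss`, `train`, the weight `lam`) are hypothetical.

```python
# Toy physics-informed training sketch (all names and values are assumptions).
G = 9.81  # gravitational acceleration in m/s^2


def model(params, t):
    """Quadratic stand-in for a neural network: u(t) = a + b*t + c*t^2."""
    a, b, c = params
    return a + b * t + c * t * t


def data_loss(params, ts, ys):
    """Mean squared error between predictions and observations."""
    return sum((model(params, t) - y) ** 2 for t, y in zip(ts, ys)) / len(ts)


def physics_residual(params):
    """Free fall requires u''(t) = -G; for this model u''(t) = 2c."""
    return 2.0 * params[2] + G


def total_loss(params, ts, ys, lam=1.0):
    """Data term plus a weighted penalty on the physics residual."""
    return data_loss(params, ts, ys) + lam * physics_residual(params) ** 2


def gradient(params, ts, ys, lam=1.0):
    """Hand-derived gradient of total_loss (autodiff would do this for a real network)."""
    n = len(ts)
    ga = gb = gc = 0.0
    for t, y in zip(ts, ys):
        err = model(params, t) - y
        ga += 2.0 * err / n
        gb += 2.0 * err * t / n
        gc += 2.0 * err * t * t / n
    gc += lam * 2.0 * physics_residual(params) * 2.0  # chain rule on (2c + G)^2
    return (ga, gb, gc)


def train(ts, ys, steps=2000, lr=0.05, lam=1.0):
    """Plain gradient descent starting from zero parameters."""
    params = (0.0, 0.0, 0.0)
    for _ in range(steps):
        grads = gradient(params, ts, ys, lam)
        params = tuple(p - lr * g for p, g in zip(params, grads))
    return params


ts = [0.0, 0.25, 0.5, 0.75, 1.0]
ys = [10.0 - 0.5 * G * t * t for t in ts]  # synthetic observations of a dropped object
fitted = train(ts, ys)
```

The physics term penalizes any parameter setting whose second derivative disagrees with gravity, even at times where no observation exists, which is the sense in which the constraint guides training.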

### Key Observations

1. **Symbolic Integration**: Symbolic elements appear in loss functions (a), datasets (e), and reasoning engines (f), indicating hybrid architectures.

2. **Physics Constraints**: Panel b explicitly ties neural training to physics, suggesting domain-specific optimization.

3. **Data Labeling**: Panel f shows a reasoning engine converting unlabeled inputs into labeled (x, y) pairs, which would reduce reliance on manual annotation.
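The labeling flow in Panel f (x → Reasoning Engine → (x, y)) might look like the following sketch. The figure does not say what the engine reasons about, so the triangle-classification rules here are purely an assumed example, and `reasoning_engine` and `label_dataset` are hypothetical names.

```python
from typing import List, Optional, Tuple

Sides = Tuple[float, float, float]


def reasoning_engine(x: Sides) -> Optional[str]:
    """Derive a label from symbolic rules; return None to abstain."""
    a, b, c = sorted(x)
    if a <= 0 or a + b <= c:
        return None  # the rules cannot label an invalid triangle
    if a == c:  # sorted, so smallest == largest means all three are equal
        return "equilateral"
    if a == b or b == c:
        return "isosceles"
    return "scalene"


def label_dataset(unlabeled: List[Sides]) -> List[Tuple[Sides, str]]:
    """Turn unlabeled inputs x into labeled pairs (x, y), skipping abstentions."""
    labeled = []
    for x in unlabeled:
        y = reasoning_engine(x)
        if y is not None:
            labeled.append((x, y))
    return labeled


unlabeled = [(3, 3, 3), (3, 4, 5), (2, 2, 3), (1, 2, 8)]
labeled = label_dataset(unlabeled)
```

Letting the engine abstain keeps inputs it cannot reason about out of the training set instead of assigning them a guessed label.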

### Interpretation

The figure depicts a neuro-symbolic framework in which neural networks are trained with physics-informed loss functions (b) and combined with symbolic reasoning (d). The reasoning engine (f) enables scalable data labeling, while symbolic loss terms (a), the neuro-symbolic combination (c), and neuro-symbolic datasets (e) bridge data-driven and rule-based learning. The system likely aims to improve generalization by incorporating domain knowledge (physics) and logical reasoning, addressing limitations of purely neural approaches.
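One way a loss function can carry a symbolic component, as Panel a suggests, is to add a penalty for violating a logical rule. The figure does not specify the form of that component, so the sketch below is an assumption: it relaxes a rule A → B with the Łukasiewicz implication, whose violation degree is `max(0, p_a - p_b)`, and the names `implication_violation`, `symbolic_loss`, and `rule_weight` are hypothetical.

```python
def implication_violation(p_a: float, p_b: float) -> float:
    """Degree to which the rule A -> B is violated (Lukasiewicz relaxation)."""
    return max(0.0, p_a - p_b)


def symbolic_loss(predictions, targets, rule_weight=0.5):
    """Squared-error data term plus a weighted penalty for breaking A -> B.

    predictions: list of (p_a, p_b) probabilities output by a model
    targets:     list of (t_a, t_b) ground-truth values in {0, 1}
    """
    n = len(predictions)
    data = sum(
        (pa - ta) ** 2 + (pb - tb) ** 2
        for (pa, pb), (ta, tb) in zip(predictions, targets)
    ) / n
    rule = sum(implication_violation(pa, pb) for pa, pb in predictions) / n
    return data + rule_weight * rule


# Example rule: "penguin -> bird". The first prediction is consistent with
# the rule, the second claims penguin with high confidence but bird with low.
consistent = symbolic_loss([(0.9, 0.95)], [(1, 1)])
inconsistent = symbolic_loss([(0.9, 0.2)], [(1, 1)])
```

The penalty is zero whenever the model assigns B at least as much probability as A, so it only pushes on predictions that contradict the rule.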