## Scatter Plots: Top 10 Safety Heads on Undiff Attention and Scaling Continuity

### Overview

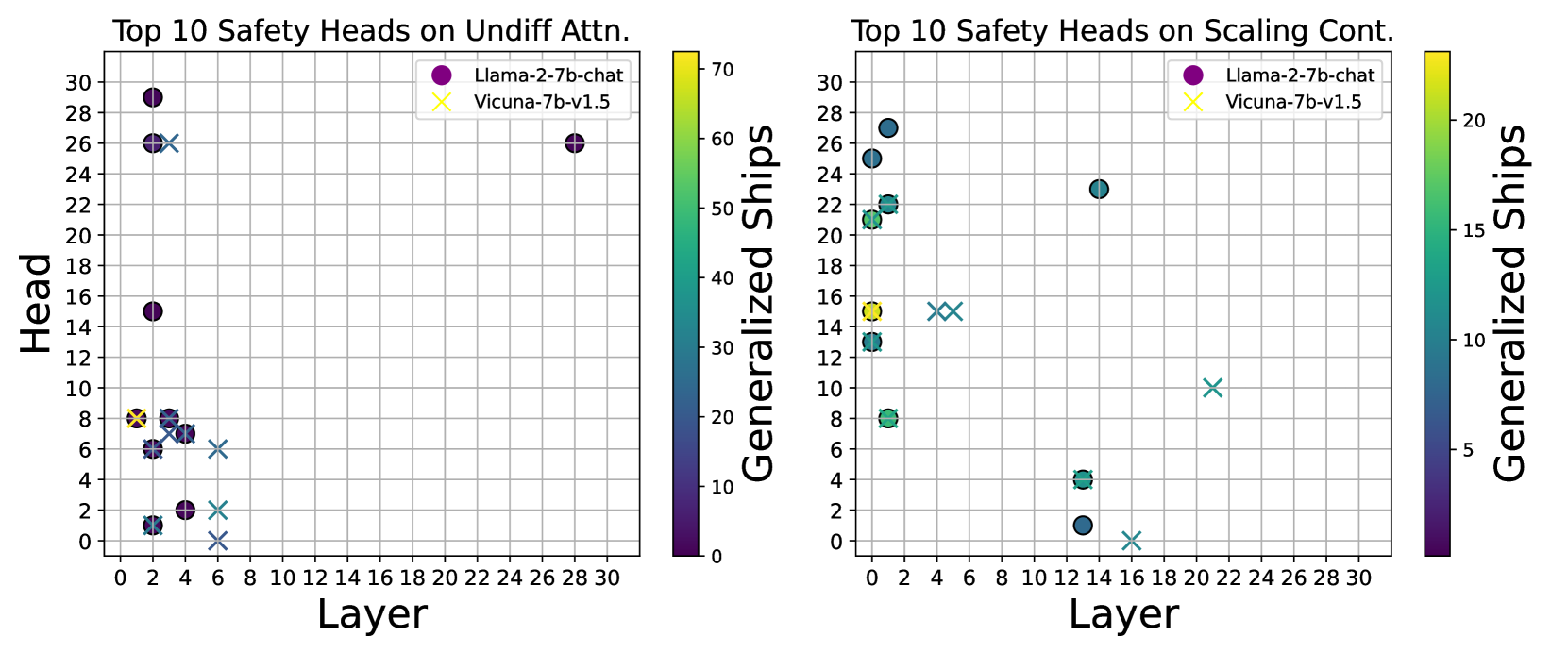

The figure shows two side-by-side scatter plots of the top 10 safety heads, positioned by layer and head index, for two models: Llama-2-7b-chat (purple circles) and Vicuna-7b-v1.5 (yellow crosses). The left plot covers "Undiff Attention" safety heads and the right plot covers "Scaling Continuity" safety heads. A color bar labeled "Generalized Ships" runs from 0 (dark purple) to 70 (bright yellow) and encodes each point's score.

---

### Components/Axes

#### Left Plot (Undiff Attention)

- **X-axis**: Layer (0–30, integer increments)

- **Y-axis**: Head (0–30, integer increments)

- **Legend**:

  - Purple circles: Llama-2-7b-chat

  - Yellow crosses: Vicuna-7b-v1.5

- **Color Bar**: "Generalized Ships" (0–70, darker = lower, brighter = higher)

#### Right Plot (Scaling Continuity)

- **X-axis**: Layer (0–30, integer increments)

- **Y-axis**: Head (0–30, integer increments)

- **Legend**: Same as left plot

- **Color Bar**: Same scale as left plot

---

### Detailed Analysis

#### Left Plot (Undiff Attention)

- **Llama-2-7b-chat** (purple circles):

  - Concentrated in upper layers (26–30) at high head indices (24–28).

  - One outlier at layer 2, head 14.

  - Color: darker (lower Generalized Ships) in upper layers, brighter (higher Generalized Ships) in lower layers.

- **Vicuna-7b-v1.5** (yellow crosses):

  - Spread across lower layers (0–6) at head indices 0–8.

  - One point sits at layer 4, head 8, the top of that head range.

  - Color: brighter (higher Generalized Ships) in lower layers.

#### Right Plot (Scaling Continuity)

- **Llama-2-7b-chat** (purple circles):

  - Clustered in middle layers (12–14) at head indices 14–16.

  - Additional points in upper layers (26–30) at head indices 24–26.

  - Color: darker (lower Generalized Ships) in middle layers, brighter in upper layers.

- **Vicuna-7b-v1.5** (yellow crosses):

  - Concentrated in lower layers (4–6) at head indices 8–10.

  - One outlier at layer 20, head 12.

  - Color: brighter (higher Generalized Ships) in lower layers.

---

### Key Observations

1. **Layer Distribution**:

   - Llama-2-7b-chat safety heads sit mainly in **upper layers** (26–30) in both plots, with an additional **middle-layer** cluster (12–14) in the scaling continuity plot.

   - Vicuna-7b-v1.5 safety heads sit in **lower layers** in both plots (0–6 for undiff attention, 4–6 for scaling continuity), apart from a single point at layer 20.

2. **Head Numbers**:

   - Llama-2-7b-chat points fall at higher head indices (14–28) than Vicuna-7b-v1.5 points (0–12).

3. **Color Intensity**:

   - Vicuna-7b-v1.5 points are generally brighter (higher "Generalized Ships") in lower layers, which may indicate stronger safety signals there.

4. **Outliers**:

   - Llama-2-7b-chat has an outlier at layer 2 (head 14) in the undiff attention plot, far from its upper-layer cluster.

   - Vicuna-7b-v1.5 has an outlier at layer 20 (head 12) in the scaling continuity plot, deviating from its lower-layer trend.
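
The layer-distribution comparison above can also be tallied programmatically. The following is a minimal sketch, not the procedure used to produce the figure: the band boundaries, the function names (`layer_band`, `top_k_heads`, `band_counts`), and the example scores are all hypothetical, and it assumes per-head scores are available as a mapping from `(layer, head)` to a number.

```python
from collections import Counter

# Hypothetical band edges for layer indices 0-31; these thresholds are an
# assumption made for illustration, not values taken from the figure.
BANDS = (("lower", 0, 10), ("middle", 11, 21), ("upper", 22, 31))


def layer_band(layer):
    """Return the name of the band that contains a layer index."""
    for name, lo, hi in BANDS:
        if lo <= layer <= hi:
            return name
    raise ValueError(f"layer {layer} is outside 0-31")


def top_k_heads(scores, k=10):
    """Return the k highest-scoring (layer, head) pairs, best first."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [position for position, _ in ranked[:k]]


def band_counts(heads):
    """Count how many (layer, head) pairs fall in each layer band."""
    return Counter(layer_band(layer) for layer, _ in heads)


# Placeholder scores invented for this example (not read from the plots).
example_scores = {(2, 14): 5.0, (26, 24): 3.0, (28, 26): 2.0, (13, 15): 1.0}
top3 = top_k_heads(example_scores, k=3)
print(top3)
print(band_counts(top3))
```

With real per-head scores for each model, comparing the two `band_counts` results would give a numeric version of the lower/middle/upper contrast described in the list above.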

---

### Interpretation

1. **Model Behavior**:

   - Llama-2-7b-chat’s safety heads in upper layers (undiff attention) and middle/upper layers (scaling continuity) may indicate specialized safety mechanisms in later processing stages.

   - Vicuna-7b-v1.5’s lower-layer concentration suggests its safety features are more active in early computational stages.

2. **Generalized Ships**:

   - The color gradient implies that Vicuna-7b-v1.5’s safety signals are more pronounced (higher Generalized Ships) in lower layers. For Llama-2-7b-chat the pattern differs by plot: upper-layer points are darker under undiff attention but brighter under scaling continuity.

3. **Anomalies**:

   - The Vicuna-7b-v1.5 outlier at layer 20 (scaling continuity) may reflect an unexpected safety mechanism or a data artifact.

---

### Conclusion

The plots show distinct safety head distributions for the two models: Llama-2-7b-chat's heads sit mostly in upper layers, with a middle-layer cluster under scaling continuity, while Vicuna-7b-v1.5's heads sit mostly in lower layers. The "Generalized Ships" color scale adds how strongly each head scores, and that strength also varies by layer and by plot.