## Line Chart: Agreement with Bayesian Assistant vs. Number of Interactions for Different LLMs

### Overview

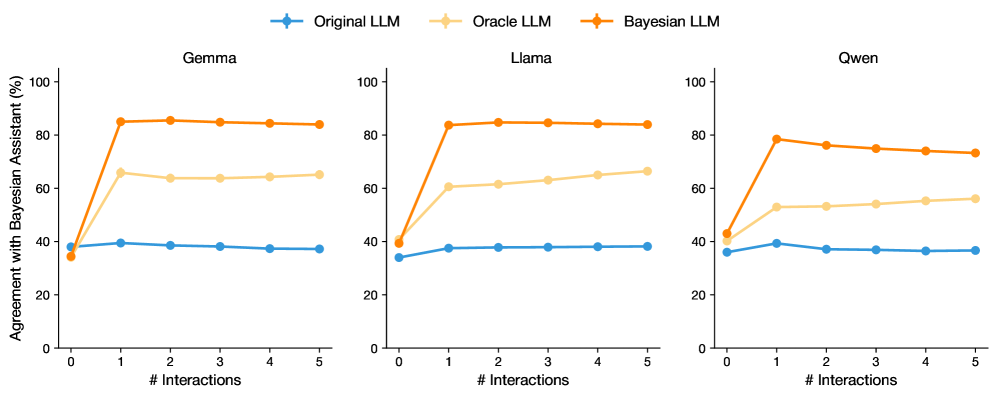

The image presents three line charts, one per model family (Gemma, Llama, and Qwen). Each chart compares how closely three variants of a large language model (LLM) agree with a Bayesian Assistant: Original LLM, Oracle LLM, and Bayesian LLM. The x-axis represents the number of interactions (0 to 5), and the y-axis represents the agreement percentage (0% to 100%).

### Components/Axes

* **Title:** Agreement with Bayesian Assistant (%) vs. # Interactions

* **X-axis:** # Interactions, with markers at 0, 1, 2, 3, 4, and 5.

* **Y-axis:** Agreement with Bayesian Assistant (%), with markers at 0, 20, 40, 60, 80, and 100.

* **Chart Titles (Top):** Gemma, Llama, Qwen

* **Legend (Top):**

    * Blue line with circle marker: Original LLM

    * Light Orange line with circle marker: Oracle LLM

    * Orange line with circle marker: Bayesian LLM

### Detailed Analysis

**Gemma**

* **Original LLM (Blue):** Relatively flat, hovering around 40%.

    * 0 Interactions: ~38%

    * 1 Interaction: ~40%

    * 5 Interactions: ~38%

* **Oracle LLM (Light Orange):** Increases sharply from 0 to 1 interaction, then plateaus around 65%.

    * 0 Interactions: ~38%

    * 1 Interaction: ~65%

    * 5 Interactions: ~65%

* **Bayesian LLM (Orange):** Increases sharply from 0 to 1 interaction, then plateaus around 85%.

    * 0 Interactions: ~35%

    * 1 Interaction: ~85%

    * 5 Interactions: ~85%

**Llama**

* **Original LLM (Blue):** Relatively flat, hovering around 38%.

    * 0 Interactions: ~35%

    * 1 Interaction: ~38%

    * 5 Interactions: ~38%

* **Oracle LLM (Light Orange):** Increases sharply from 0 to 1 interaction (to about 60%), then rises gradually to about 65%.

    * 0 Interactions: ~40%

    * 1 Interaction: ~60%

    * 5 Interactions: ~65%

* **Bayesian LLM (Orange):** Increases sharply from 0 to 1 interaction, then plateaus around 85%.

    * 0 Interactions: ~40%

    * 1 Interaction: ~85%

    * 5 Interactions: ~83%

**Qwen**

* **Original LLM (Blue):** Relatively flat, hovering around 38%.

    * 0 Interactions: ~36%

    * 1 Interaction: ~38%

    * 5 Interactions: ~37%

* **Oracle LLM (Light Orange):** Increases by roughly 10 points from 0 to 1 interaction, then levels off in the mid-50s.

    * 0 Interactions: ~42%

    * 1 Interaction: ~53%

    * 5 Interactions: ~57%

* **Bayesian LLM (Orange):** Increases sharply from 0 to 1 interaction, then stays in the mid-70s, ending slightly lower at about 73%.

    * 0 Interactions: ~40%

    * 1 Interaction: ~78%

    * 5 Interactions: ~73%
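To make the size of each jump concrete, the approximate readings listed above can be collected into a small table and differenced. The following is a minimal Python sketch, not part of the original figure: the names `READINGS`, `change`, and `summarize` are hypothetical, and the numbers are the eyeballed values from this section (only interactions 0, 1, and 5 are listed), so the results are approximate.

```python
# Approximate agreement (%) transcribed from the readings listed in this section.
# Only interactions 0, 1, and 5 are given; values are eyeballed, not exact.
READINGS = {
    "Gemma": {
        "Original LLM": {0: 38, 1: 40, 5: 38},
        "Oracle LLM": {0: 38, 1: 65, 5: 65},
        "Bayesian LLM": {0: 35, 1: 85, 5: 85},
    },
    "Llama": {
        "Original LLM": {0: 35, 1: 38, 5: 38},
        "Oracle LLM": {0: 40, 1: 60, 5: 65},
        "Bayesian LLM": {0: 40, 1: 85, 5: 83},
    },
    "Qwen": {
        "Original LLM": {0: 36, 1: 38, 5: 37},
        "Oracle LLM": {0: 42, 1: 53, 5: 57},
        "Bayesian LLM": {0: 40, 1: 78, 5: 73},
    },
}


def change(family, variant, start, end):
    """Change in agreement (percentage points) between two interaction counts."""
    points = READINGS[family][variant]
    return points[end] - points[start]


def summarize():
    """Return (family, variant, change 0->1, change 1->5) for every line."""
    rows = []
    for family, variants in READINGS.items():
        for variant in variants:
            rows.append(
                (family, variant,
                 change(family, variant, 0, 1),
                 change(family, variant, 1, 5))
            )
    return rows


if __name__ == "__main__":
    for family, variant, first, later in summarize():
        print(f"{family:6} {variant:13} 0->1: {first:+3d} pts   1->5: {later:+3d} pts")
```

With these readings, the Bayesian LLM gains roughly 38 to 50 points from the first interaction, the Oracle LLM roughly 11 to 27 points, and the Original LLM only 2 to 3 points; the change from 1 to 5 interactions is within about 5 points for every line.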

### Key Observations

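* At 0 interactions, the three variants start close together (roughly 35% to 42%) in every panel, with no consistent ordering among them.

* From 1 interaction onward, the "Original LLM" shows the lowest agreement percentage in all three model families (Gemma, Llama, Qwen), staying near 40%.

* From 1 interaction onward, the "Bayesian LLM" shows the highest agreement percentage in all three model families: around 85% for Gemma and Llama, and in the mid-70s for Qwen.

* The "Oracle LLM" falls between the "Original LLM" and the "Bayesian LLM" once interactions begin: around 60% to 65% for Gemma and Llama, and in the mid-50s for Qwen.

* For all three model families, most of the change for the "Bayesian LLM" and "Oracle LLM" occurs between 0 and 1 interaction (roughly 40 to 50 points and 10 to 30 points, respectively), after which both curves are nearly flat. The "Original LLM" remains relatively flat throughout.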
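The ordering described in these observations can be checked against the approximate readings from the Detailed Analysis. This is a small Python sketch under the same caveat that the values are eyeballed from the chart; `APPROX_AGREEMENT`, `ordering_holds`, and `check_all` are hypothetical names introduced only for illustration.

```python
VARIANTS = ("Original LLM", "Oracle LLM", "Bayesian LLM")
INTERACTIONS = (0, 1, 5)

# family -> interaction count -> approximate agreement (%), in VARIANTS order.
# Values are the eyeballed readings from the Detailed Analysis, not exact data.
APPROX_AGREEMENT = {
    "Gemma": {0: (38, 38, 35), 1: (40, 65, 85), 5: (38, 65, 85)},
    "Llama": {0: (35, 40, 40), 1: (38, 60, 85), 5: (38, 65, 83)},
    "Qwen": {0: (36, 42, 40), 1: (38, 53, 78), 5: (37, 57, 73)},
}


def ordering_holds(family, interactions):
    """True if Original < Oracle < Bayesian (strictly) at this interaction count."""
    original, oracle, bayesian = APPROX_AGREEMENT[family][interactions]
    return original < oracle < bayesian


def check_all():
    """Map each listed interaction count to whether the ordering holds in every family."""
    return {
        n: all(ordering_holds(family, n) for family in APPROX_AGREEMENT)
        for n in INTERACTIONS
    }


if __name__ == "__main__":
    print(check_all())
```

On these readings, the strict ordering Original < Oracle < Bayesian holds in every family at 1 and 5 interactions but not at 0 interactions, where the three lines start within a few points of one another.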

### Interpretation

The data suggest that the "Bayesian LLM" variant agrees with the Bayesian Assistant far more closely than the "Original LLM" or the "Oracle LLM" once any interaction has occurred, and that this holds across all three model families (Gemma, Llama, Qwen). The sharp increase in agreement from 0 to 1 interaction for the "Bayesian LLM" and "Oracle LLM" indicates that a single interaction accounts for most of their gain; additional interactions change agreement by only a few points. The "Original LLM" stays within a few points of its starting value regardless of the number of interactions, suggesting that it does not make use of the interaction history in the way the other two variants do.