# Technical Data Extraction: Throughput Comparison Chart

## 1. Image Overview

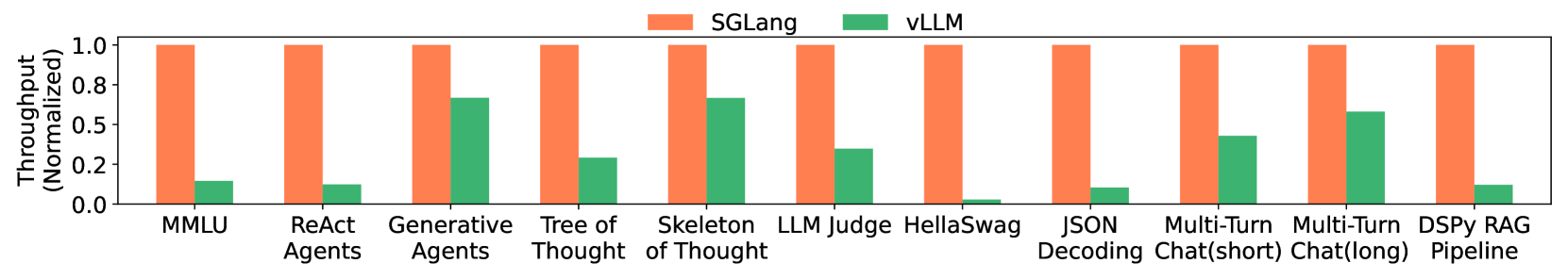

This image is a grouped bar chart comparing the normalized throughput of two LLM inference and serving frameworks, **SGLang** and **vLLM**, across eleven Large Language Model (LLM) workloads and benchmarks.

## 2. Component Isolation

### Header / Legend

* **Location:** Top center of the chart.

* **Legend Items:**

  * **SGLang:** Represented by an orange bar.

  * **vLLM:** Represented by a green bar.

### Main Chart Area

* **Y-Axis Label:** Throughput (Normalized)

* **Y-Axis Scale:** Linear, ranging from 0.0 to 1.0 with markers at [0.0, 0.2, 0.5, 0.8, 1.0].

* **X-Axis Categories:** Eleven distinct benchmarks/tasks.

* **Data Representation:** For every category, SGLang is normalized to 1.0 (full height), and vLLM is shown as a relative fraction of that performance.
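The per-category normalization works as follows: each category's vLLM throughput is divided by SGLang's throughput in that same category, which pins SGLang at 1.0. The chart reports only the normalized values, so the raw throughputs below and the `normalize_to_baseline` helper are hypothetical and serve only as illustration.

```python
def normalize_to_baseline(baseline: float, other: float) -> tuple[float, float]:
    """Scale both throughputs so that the baseline (SGLang) becomes 1.0."""
    if baseline <= 0:
        raise ValueError("baseline throughput must be positive")
    return 1.0, other / baseline


# Hypothetical raw throughputs (requests/s); the chart does not report raw values.
sglang_raw = 200.0
vllm_raw = 70.0
print(normalize_to_baseline(sglang_raw, vllm_raw))  # (1.0, 0.35)
```

Because each category is normalized independently, bar heights can be compared within a category but say nothing about absolute throughput across categories.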

## 3. Data Table Extraction

The following table reconstructs the visual data points. Values for vLLM are estimated based on their alignment with the Y-axis markers.

| Benchmark / Task | SGLang (Orange) | vLLM (Green) | Visual Trend Description |
| :--- | :---: | :---: | :--- |
| **MMLU** | 1.0 | ~0.15 | SGLang significantly outperforms vLLM. |
| **ReAct Agents** | 1.0 | ~0.12 | SGLang significantly outperforms vLLM. |
| **Generative Agents** | 1.0 | ~0.68 | vLLM performs relatively well but remains below SGLang. |
| **Tree of Thought** | 1.0 | ~0.30 | SGLang maintains a large lead. |
| **Skeleton of Thought** | 1.0 | ~0.68 | vLLM performs better here than in most other tasks. |
| **LLM Judge** | 1.0 | ~0.35 | SGLang delivers roughly 3x the throughput of vLLM. |
| **HellaSwag** | 1.0 | ~0.03 | vLLM shows near-zero throughput relative to SGLang. |
| **JSON Decoding** | 1.0 | ~0.10 | SGLang significantly outperforms vLLM. |
| **Multi-Turn Chat (short)** | 1.0 | ~0.45 | vLLM reaches nearly half the throughput of SGLang. |
| **Multi-Turn Chat (long)** | 1.0 | ~0.60 | vLLM performs better relative to SGLang than on the short chat task. |
| **DSPy RAG Pipeline** | 1.0 | ~0.12 | SGLang significantly outperforms vLLM. |
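Since SGLang is fixed at 1.0, each vLLM cell can be converted into an implied SGLang-to-vLLM throughput ratio by taking its reciprocal (for example, 1 / 0.35 ≈ 2.9 for LLM Judge). The sketch below applies that conversion to the eyeballed estimates from the table; the dictionary and the `implied_speedup` helper are illustrative names, not part of the source.

```python
# Approximate vLLM values read off the chart (SGLang = 1.0 in every category).
VLLM_NORMALIZED = {
    "MMLU": 0.15,
    "ReAct Agents": 0.12,
    "Generative Agents": 0.68,
    "Tree of Thought": 0.30,
    "Skeleton of Thought": 0.68,
    "LLM Judge": 0.35,
    "HellaSwag": 0.03,
    "JSON Decoding": 0.10,
    "Multi-Turn Chat (short)": 0.45,
    "Multi-Turn Chat (long)": 0.60,
    "DSPy RAG Pipeline": 0.12,
}


def implied_speedup(vllm_fraction: float) -> float:
    """Return the SGLang/vLLM throughput ratio implied by a normalized vLLM bar."""
    return 1.0 / vllm_fraction


for task, frac in VLLM_NORMALIZED.items():
    print(f"{task:<26} {implied_speedup(frac):5.1f}x")
```

The reciprocal is very sensitive for short bars: reading HellaSwag as 0.02 instead of 0.04 moves the implied ratio from 25x to 50x, so large ratios derived from visual estimates should be treated as order-of-magnitude figures.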

## 4. Key Findings and Trends

* **Baseline Performance:** SGLang serves as the performance baseline (1.0) across all tested scenarios.

* **Performance Gap:** SGLang consistently outperforms vLLM in every category shown.

* **Largest Gaps:** The largest performance gaps appear in **HellaSwag** (~0.03), **JSON Decoding** (~0.10), **ReAct Agents** (~0.12), and **DSPy RAG Pipeline** (~0.12), where vLLM reaches only about 3% to 12% of SGLang's throughput.

* **Smallest Gaps:** vLLM is most competitive in **Generative Agents** (~0.68), **Skeleton of Thought** (~0.68), and **Multi-Turn Chat (long)** (~0.60), though it never exceeds ~70% of SGLang's throughput.
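The grouping into largest and smallest gaps can be reproduced by sorting categories by their estimated vLLM fraction, where a lower fraction means a larger SGLang lead. This is a minimal sketch over the approximate table values; `rank_by_gap` is a hypothetical helper, and ties (such as ReAct Agents and DSPy RAG Pipeline at ~0.12) keep their table order because Python's sort is stable.

```python
# Approximate vLLM values read off the chart (SGLang = 1.0 in every category).
VLLM_NORMALIZED = {
    "MMLU": 0.15,
    "ReAct Agents": 0.12,
    "Generative Agents": 0.68,
    "Tree of Thought": 0.30,
    "Skeleton of Thought": 0.68,
    "LLM Judge": 0.35,
    "HellaSwag": 0.03,
    "JSON Decoding": 0.10,
    "Multi-Turn Chat (short)": 0.45,
    "Multi-Turn Chat (long)": 0.60,
    "DSPy RAG Pipeline": 0.12,
}


def rank_by_gap(fractions: dict[str, float]) -> list[tuple[str, float]]:
    """Sort categories from largest to smallest SGLang lead (lowest vLLM fraction first)."""
    return sorted(fractions.items(), key=lambda item: item[1])


ranked = rank_by_gap(VLLM_NORMALIZED)
print("Largest gaps:", [name for name, _ in ranked[:4]])
print("Smallest gaps:", [name for name, _ in ranked[-3:]])
print("Best vLLM fraction:", ranked[-1][1])
```

With these estimates, the four lowest fractions and the three highest match the categories named in the findings above.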