
## Diagram: Architecture of an LLM-Empowered Agent

### Overview

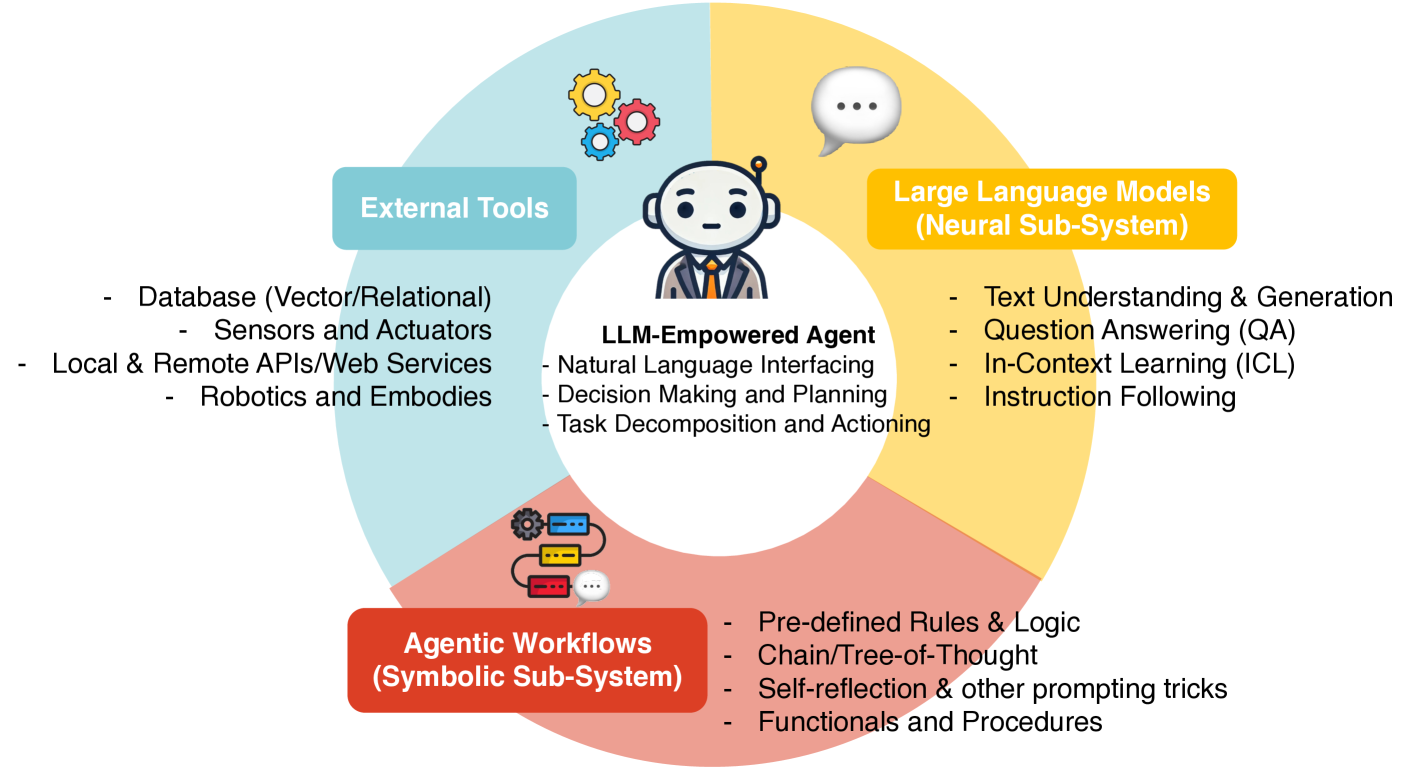

The image is a conceptual diagram illustrating the architecture of an "LLM-Empowered Agent." It depicts the agent as a central entity integrating three distinct but interconnected subsystems: External Tools, Large Language Models (Neural Sub-System), and Agentic Workflows (Symbolic Sub-System). The diagram uses a circular layout with the agent at the core and the three subsystems as colored segments surrounding it.

### Components

The diagram is structured around a central circle containing an icon of a robot/agent and text. This central hub is surrounded by three colored segments, each representing a subsystem with its own icon and list of components.

**Central Hub:**

* **Icon:** A stylized robot head and shoulders wearing a suit and tie.

* **Title:** `LLM-Empowered Agent`

* **List of Core Functions:**
    * Natural Language Interfacing
    * Decision Making and Planning
    * Task Decomposition and Actioning

**Surrounding Subsystems (Clockwise from Top-Left):**

1. **Blue Segment (Top-Left): External Tools**
    * **Icon:** Three interlocking gears (yellow, red, blue).
    * **List of Components:**
        * Database (Vector/Relational)
        * Sensors and Actuators
        * Local & Remote APIs/Web Services
        * Robotics and Embodies

2. **Yellow Segment (Top-Right): Large Language Models (Neural Sub-System)**
    * **Icon:** A speech bubble with three dots inside.
    * **List of Capabilities:**
        * Text Understanding & Generation
        * Question Answering (QA)
        * In-Context Learning (ICL)
        * Instruction Following

3. **Red Segment (Bottom): Agentic Workflows (Symbolic Sub-System)**
    * **Icon:** A flowchart with connected boxes and a gear.
    * **List of Components:**
        * Pre-defined Rules & Logic
        * Chain/Tree-of-Thought
        * Self-reflection & other prompting tricks
        * Functionals and Procedures

### Detailed Analysis

The diagram presents a modular, integrated architecture with the **LLM-Empowered Agent** as the central orchestrator. Its listed core functions (Natural Language Interfacing, Decision Making and Planning, Task Decomposition and Actioning) suggest it acts as the "brain" that interprets goals, breaks them into steps, and acts on them.

The three surrounding subsystems provide the agent with different types of capabilities:

* **External Tools (Blue):** This segment represents the agent's interface with the physical and digital world. It includes data storage (Databases), physical interaction (Sensors/Actuators, Robotics), and software interaction (APIs/Web Services). This is the "hands and senses" of the agent.

* **Large Language Models (Yellow):** Labeled as the "Neural Sub-System," this provides the core cognitive and linguistic abilities. The listed capabilities (Text Understanding, QA, ICL, Instruction Following) are the foundational AI model skills that enable the agent to process information and communicate.

* **Agentic Workflows (Red):** Labeled as the "Symbolic Sub-System," this provides structured, rule-based, and procedural reasoning. Components like "Chain/Tree-of-Thought" and "Pre-defined Rules & Logic" suggest methods for enhancing the LLM's reasoning with more deterministic or step-by-step processes. This acts as the "structured reasoning engine."
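The composition described above can be sketched in Python as three small components held by one agent object. This is only an illustrative sketch, not something taken from the diagram: the class names (`ExternalTools`, `NeuralSubsystem`, `SymbolicSubsystem`, `Agent`), the keyword-based rule format, and the stubbed model are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class ExternalTools:
    """Blue segment: named callables standing in for databases, APIs, sensors."""
    registry: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def call(self, name: str, argument: str) -> str:
        if name not in self.registry:
            raise KeyError(f"unknown tool: {name}")
        return self.registry[name](argument)


@dataclass
class NeuralSubsystem:
    """Yellow segment: wraps a text-in, text-out model (a stub in this sketch)."""
    generate: Callable[[str], str]


@dataclass
class SymbolicSubsystem:
    """Red segment: pre-defined rules mapping a task keyword to a tool name."""
    rules: Dict[str, str] = field(default_factory=dict)

    def select_tool(self, task: str) -> Optional[str]:
        for keyword, tool_name in self.rules.items():
            if keyword in task.lower():
                return tool_name
        return None


@dataclass
class Agent:
    """Central hub: routes a task through rules, tools, and the model."""
    tools: ExternalTools
    llm: NeuralSubsystem
    workflows: SymbolicSubsystem

    def handle(self, task: str) -> str:
        tool_name = self.workflows.select_tool(task)
        if tool_name is None:
            # No rule matched: answer with the model alone.
            return self.llm.generate(task)
        observation = self.tools.call(tool_name, task)
        return self.llm.generate(f"{task}\nObservation: {observation}")


# Hypothetical wiring: one fake tool, one echoing model, one rule.
agent = Agent(
    tools=ExternalTools({"lookup": lambda query: "42 rows"}),
    llm=NeuralSubsystem(lambda prompt: f"[model reply to: {prompt}]"),
    workflows=SymbolicSubsystem({"database": "lookup"}),
)
```

Calling `agent.handle("Query the database")` routes through the `lookup` tool before the model is invoked, while `agent.handle("Say hello")` matches no rule and goes straight to the model. Keeping the three parts as separate objects mirrors the diagram's separation of segments and makes each one replaceable on its own.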

### Key Observations

1. **Tripartite Integration:** The architecture explicitly separates capabilities into Neural (LLM), Symbolic (Workflows), and Physical/Digital (Tools) domains, with the central agent integrating them.

2. **Agent as Orchestrator:** The central agent is not just an LLM but a system that *uses* an LLM alongside other components to perform complex tasks requiring planning, tool use, and structured reasoning.

3. **Bidirectional Flow Implied:** The diagram draws no arrows, so any flow is an inference from the layout. A plausible reading is that the agent receives a task, uses the LLM to understand it, employs workflows to plan, and executes actions via external tools, with the results feeding back into later steps.

4. **Complementary Subsystems:** The Neural Sub-System (LLM) handles fuzzy, language-based tasks, while the Symbolic Sub-System (Workflows) handles structured, logical tasks. They are presented as complementary, not competing.
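One way to picture the implied flow from observation 3 is a short loop in which each tool result is fed into the next step. The sketch below is an assumption-laden toy: `understand`, `plan`, `TOOLS`, and `run` are hypothetical names, the "LLM" step is a plain string normalisation, and the planner simply splits the request on the word "then".

```python
from typing import Callable, Dict, List, Tuple


def understand(task: str) -> str:
    """Stand-in for the LLM step: normalise the request text."""
    return task.strip().lower()


def plan(goal: str) -> List[Tuple[str, str]]:
    """Stand-in for the workflow step: split into (tool, argument) steps."""
    steps = []
    for part in goal.split(" then "):
        tool, _, argument = part.partition(" ")
        steps.append((tool, argument))
    return steps


# Stand-ins for external tools; both are fakes for illustration only.
TOOLS: Dict[str, Callable[[str], str]] = {
    "read": lambda arg: f"contents of {arg}",
    "count": lambda arg: str(len(arg.split())),
}


def run(task: str) -> List[str]:
    """Understand, plan, act, and feed each observation into the next step."""
    goal = understand(task)
    log: List[str] = []
    feedback = ""
    for tool, argument in plan(goal):
        # Feedback loop: a step with no argument uses the previous observation.
        observation = TOOLS[tool](argument or feedback)
        log.append(f"{tool} -> {observation}")
        feedback = observation
    return log
```

For example, `run("Read notes.txt then count")` returns `["read -> contents of notes.txt", "count -> 3"]`: the second step has no argument of its own, so it operates on the first step's observation.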

### Interpretation

This diagram presents a blueprint for building autonomous AI agents. Its implied argument is that a Large Language Model alone, however capable, is insufficient for complex, real-world tasks, and that agency requires integrating three pillars:

1. **Perception & Action (External Tools):** The ability to gather data from and affect the environment is fundamental for any agent that must operate beyond a text interface.

2. **Core Cognition (LLMs):** The neural network provides the flexible, human-like understanding and generation of language, which is the primary interface for defining goals and communicating results.

3. **Structured Reasoning (Agentic Workflows):** Symbolic methods and predefined workflows provide reliability, explainability, and enhanced reasoning capabilities that can compensate for the occasional unpredictability or logical shortcomings of pure neural approaches.

The architecture suggests that practical AI agents are best built as **hybrid systems** that combine the strengths of neural networks (flexibility, language understanding) with those of symbolic AI (structure, reliability) and robust tool-use frameworks. The central "LLM-Empowered Agent" is the synthesizer that makes this combination coherent and goal-directed. The model moves beyond treating an LLM as a chatbot and reframes it as one critical component within a larger, more capable autonomous system.
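The claim that symbolic checks can compensate for unpredictable neural output can be illustrated with a small guard that validates each model reply against a deterministic rule and retries on failure. This is a hedged sketch rather than a method shown in the diagram: `guarded_generate`, `is_valid_iso_date`, and `make_stub_model` are hypothetical names, and the model is a stub that replays canned replies.

```python
import re
from typing import Callable, Iterable, Optional


def is_valid_iso_date(text: str) -> bool:
    """Pre-defined rule: the output must look like YYYY-MM-DD."""
    return re.fullmatch(r"\d{4}-\d{2}-\d{2}", text) is not None


def guarded_generate(
    model: Callable[[str], str],
    prompt: str,
    rule: Callable[[str], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first reply that passes the rule, or None if all attempts fail."""
    for _ in range(max_attempts):
        candidate = model(prompt)
        if rule(candidate):
            return candidate
        # Self-reflection style retry: tell the model what went wrong.
        prompt = f"{prompt}\nPrevious answer {candidate!r} broke the format rule. Try again."
    return None


def make_stub_model(replies: Iterable[str]) -> Callable[[str], str]:
    """Stub standing in for an LLM: returns canned replies in order."""
    iterator = iter(replies)
    return lambda prompt: next(iterator)
```

With a stub that first replies `"March 3rd"` and then `"2024-03-03"`, `guarded_generate` rejects the first reply and returns the second. If every attempt fails the rule, it returns `None`, so the caller gets an explicit failure instead of a malformed answer.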