## Line Chart: Benchmark: OlympiadBench

### Overview

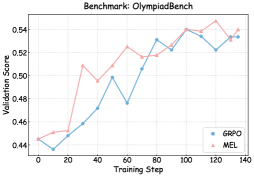

The line chart compares the validation scores of two methods, GRPO and MEL, over the course of training on the "OlympiadBench" benchmark. It tracks performance from step 0 to approximately step 140.

### Components/Axes

* **Chart Title:** "Benchmark: OlympiadBench" (Top center)
* **Y-Axis:**
  * **Label:** "Validation Score" (Left side, rotated vertically)
  * **Scale:** Linear, with labeled major tick marks every 0.02 from 0.44 to 0.54 (0.44, 0.46, 0.48, 0.50, 0.52, 0.54). The plotted data reach slightly above the top tick (MEL's ~0.545 at step 120).
* **X-Axis:**
  * **Label:** "Training Step" (Bottom center)
  * **Scale:** Linear, from 0 to 140, with major tick marks every 20 steps (0, 20, 40, 60, 80, 100, 120, 140).
* **Legend:** Located in the bottom-right corner of the plot area.
  * **GRPO:** Blue line with circular markers.
  * **MEL:** Red line with triangular markers.

### Detailed Analysis

**Data Series Trends & Approximate Points:**

1. **GRPO (Blue Line, Circles):**
   * **Trend:** Starts slightly below MEL (~0.445 vs. ~0.450), dips to the lowest value on the chart (~0.440) at step 20, then rises with moderate fluctuations. Its largest setback is a dip at step 60 (from ~0.500 to ~0.480), after which it recovers to ~0.515 by step 70.
   * **Approximate Data Points:**
     * Step 0: ~0.445
     * Step 10: ~0.450
     * Step 20: ~0.440 (Lowest point on chart)
     * Step 30: ~0.455
     * Step 40: ~0.470
     * Step 50: ~0.500
     * Step 60: ~0.480 (Significant dip)
     * Step 70: ~0.515
     * Step 80: ~0.530
     * Step 90: ~0.525
     * Step 100: ~0.540 (Peak)
     * Step 110: ~0.535
     * Step 120: ~0.520
     * Step 130: ~0.535
     * Step 140: ~0.535

2. **MEL (Red Line, Triangles):**
   * **Trend:** Starts slightly above GRPO (~0.450), dips to ~0.445 at step 10, then climbs sharply to ~0.510 by step 30. From there it rises in a sawtooth pattern, reversing direction at almost every sampled step; at the sampled points, however, its swings after step 30 (at most ~0.020) are no larger than GRPO's (up to ~0.035). It achieves the highest score on the chart (~0.545 at step 120).
   * **Approximate Data Points:**
     * Step 0: ~0.450
     * Step 10: ~0.445 (Local minimum)
     * Step 20: ~0.460
     * Step 30: ~0.510
     * Step 40: ~0.500
     * Step 50: ~0.520
     * Step 60: ~0.510
     * Step 70: ~0.525
     * Step 80: ~0.520
     * Step 90: ~0.530
     * Step 100: ~0.540
     * Step 110: ~0.530
     * Step 120: ~0.545 (Highest point on chart)
     * Step 130: ~0.530
     * Step 140: ~0.540
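The approximate points above can be transcribed into a short Python sketch to check the annotated extrema and the net change of each series. The values are this document's eyeballed chart readings (about 0.005 resolution), not logged training data, and the names `STEPS`, `GRPO`, `MEL`, and `summarize` are illustrative.

```python
# Approximate chart readings at every 10th training step (eyeballed, ~0.005 resolution).
STEPS = list(range(0, 150, 10))  # 0, 10, ..., 140
GRPO = [0.445, 0.450, 0.440, 0.455, 0.470, 0.500, 0.480, 0.515,
        0.530, 0.525, 0.540, 0.535, 0.520, 0.535, 0.535]
MEL = [0.450, 0.445, 0.460, 0.510, 0.500, 0.520, 0.510, 0.525,
       0.520, 0.530, 0.540, 0.530, 0.545, 0.530, 0.540]


def summarize(scores):
    """Return (min_step, min_score, max_step, max_score, net_change).

    Ties resolve to the earliest step, because min() and max() keep the first winner.
    """
    idx = range(len(scores))
    lo = min(idx, key=scores.__getitem__)
    hi = max(idx, key=scores.__getitem__)
    return STEPS[lo], scores[lo], STEPS[hi], scores[hi], scores[-1] - scores[0]


for name, series in (("GRPO", GRPO), ("MEL", MEL)):
    lo_step, lo, hi_step, hi, net = summarize(series)
    print(f"{name}: min {lo:.3f} @ {lo_step}, max {hi:.3f} @ {hi_step}, net {net:+.3f}")
# GRPO: min 0.440 @ 20, max 0.540 @ 100, net +0.090
# MEL: min 0.445 @ 10, max 0.545 @ 120, net +0.090
```

On these readings both series gain about 0.09 between steps 0 and 140, and GRPO's step-20 reading is the lowest value on the chart.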

### Key Observations

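1. **Overall Improvement:** Both GRPO and MEL show a clear positive trend: GRPO rises from ~0.445 at step 0 to ~0.535 at step 140 and MEL from ~0.450 to ~0.540, a gain of roughly 0.09 each. Validation scores on OlympiadBench therefore improve with continued training for both methods.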

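2. **Performance Crossover:** MEL starts with a slight advantage and, apart from step 10, leads through step 70; it reaches ~0.510 at step 30, a level GRPO does not reach until step 70. From step 80 onward the lead alternates: GRPO is ahead at steps 80, 110, and 130, MEL at steps 90, 120, and 140, and the two are tied at step 100. These repeated crossings indicate closely competitive performance in the later phase.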

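3. **Volatility Difference:** The MEL series (red) changes direction more often, giving it a sawtooth shape, most visibly between steps 20-40 (a sharp rise to ~0.510, then a dip to ~0.500) and 110-130 (~0.530, ~0.545, ~0.530). The GRPO series (blue) reverses less often and looks smoother, although its single largest drop, the dip at step 60 (~0.020), exceeds any drop in the MEL series (at most ~0.015).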

4. **Peak Performance:** The highest recorded validation score (~0.545) is achieved by MEL at approximately step 120, only ~0.005 above GRPO's peak (~0.540 at step 100), which is about the precision of these readings. Both methods end the tracked period (step 140) at a similar high level (~0.535-0.540).

5. **Initial Phase:** Both methods dip early, MEL at step 10 (~0.445) and GRPO at step 20 (~0.440), before beginning their sustained ascent.
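The crossover and volatility observations can be made concrete with a few simple statistics on the same approximate readings. This is a sketch: the readings are eyeballed, the metrics (leader at each sampled step, mean absolute step-to-step change, largest single drop, and number of direction reversals) are illustrative choices rather than anything defined by the chart's source, and the helper names are hypothetical.

```python
# Approximate chart readings at every 10th training step (eyeballed, ~0.005 resolution).
STEPS = list(range(0, 150, 10))
GRPO = [0.445, 0.450, 0.440, 0.455, 0.470, 0.500, 0.480, 0.515,
        0.530, 0.525, 0.540, 0.535, 0.520, 0.535, 0.535]
MEL = [0.450, 0.445, 0.460, 0.510, 0.500, 0.520, 0.510, 0.525,
       0.520, 0.530, 0.540, 0.530, 0.545, 0.530, 0.540]
TOL = 1e-9  # differences at or below this count as exact ties (guards float noise)


def leaders(a, b, name_a="GRPO", name_b="MEL"):
    """Label each sampled step with the series that is ahead, or 'tie'."""
    return ["tie" if abs(x - y) <= TOL else (name_a if x > y else name_b)
            for x, y in zip(a, b)]


def lead_changes(labels):
    """Steps where the leader differs from the most recent non-tie leader."""
    changes, prev = [], None
    for step, who in zip(STEPS, labels):
        if who == "tie":
            continue
        if prev is not None and who != prev:
            changes.append(step)
        prev = who
    return changes


def step_changes(scores):
    """Differences between consecutive sampled scores."""
    return [b - a for a, b in zip(scores, scores[1:])]


def mean_abs_change(scores):
    """Average size of a step-to-step move, ignoring direction."""
    d = step_changes(scores)
    return sum(abs(x) for x in d) / len(d)


def largest_drop(scores):
    """Size of the largest single decrease (0.0 if the series never falls)."""
    return max(0.0, -min(step_changes(scores)))


def reversals(scores):
    """Count sign flips between consecutive non-zero step changes."""
    rising = [d > 0 for d in step_changes(scores) if abs(d) > TOL]
    return sum(1 for s, t in zip(rising, rising[1:]) if s != t)


labels = leaders(GRPO, MEL)
print("leader by step:", dict(zip(STEPS, labels)))
print("lead changes at steps:", lead_changes(labels))
for name, s in (("GRPO", GRPO), ("MEL", MEL)):
    print(f"{name}: mean |change| {mean_abs_change(s):.4f}, "
          f"largest drop {largest_drop(s):.3f}, reversals {reversals(s)}")
```

On these readings the lead changes hands eight times, the two series have the same mean absolute step change (about 0.014), MEL reverses direction more often (11 times versus 8 for GRPO), and GRPO's largest single drop (~0.020, at step 60) exceeds MEL's (~0.015). With only 15 eyeballed points per series, these numbers are indicative at best.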

### Interpretation

The chart compares the learning curves of two algorithms on a challenging benchmark. The data suggest that while both methods are effective, they exhibit different learning dynamics:

* **MEL** reaches the highest single score (~0.545 at step 120), although its margin over GRPO's peak (~0.540) is within the precision of these readings. It also improves faster early on, leading for most of the first 70 steps. Its frequent changes of direction suggest less step-to-step stability, which could imply sensitivity to specific training batches or a more aggressive optimization strategy.

* **GRPO** changes direction less often and appears to be the steadier learner. Its dip at step 60 is an anomaly that warrants investigation: it could correspond to a difficult subset of data, a learning rate issue, or a temporary instability in the optimization process. It recovers quickly, reaching ~0.515 by step 70 (above its pre-dip ~0.500), which suggests robustness.

Because both methods finish in a similar range (~0.535-0.540 at step 140), the choice between them on this benchmark may come down to secondary factors. If steady, predictable progress is valued, GRPO might be preferred; if faster early gains and occasional higher peaks are worth more frequent fluctuations, MEL could be the better candidate. The early dips in both curves are notable and might indicate a common challenge at the start of training on this task, such as overcoming a local minimum or adapting from a pre-trained state.
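One way to quantify "a similar final performance range" is to average each series over its last few sampled steps and compare the gap with the reading precision. This sketch assumes a window of the last five readings (steps 100-140) and a precision of 0.005; both choices, and the name `tail_mean`, are illustrative rather than taken from the chart's source.

```python
# Approximate chart readings at every 10th training step (eyeballed, ~0.005 resolution).
GRPO = [0.445, 0.450, 0.440, 0.455, 0.470, 0.500, 0.480, 0.515,
        0.530, 0.525, 0.540, 0.535, 0.520, 0.535, 0.535]
MEL = [0.450, 0.445, 0.460, 0.510, 0.500, 0.520, 0.510, 0.525,
       0.520, 0.530, 0.540, 0.530, 0.545, 0.530, 0.540]
WINDOW = 5          # assumed window: the last five readings (steps 100-140)
RESOLUTION = 0.005  # assumed precision of the eyeballed readings


def tail_mean(scores, window=WINDOW):
    """Mean of the last `window` readings of a series."""
    tail = scores[-window:]
    return sum(tail) / len(tail)


grpo_end, mel_end = tail_mean(GRPO), tail_mean(MEL)
gap = mel_end - grpo_end
print(f"GRPO {grpo_end:.4f}, MEL {mel_end:.4f}, gap {gap:+.4f}")
print("within reading resolution" if abs(gap) < RESOLUTION else "exceeds reading resolution")
```

On these readings the averages over steps 100-140 are about 0.533 (GRPO) and 0.537 (MEL), a gap smaller than the precision of the readings themselves, which is consistent with treating the two methods as finishing at a similar level. A firmer conclusion would need the logged scores or repeated runs.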