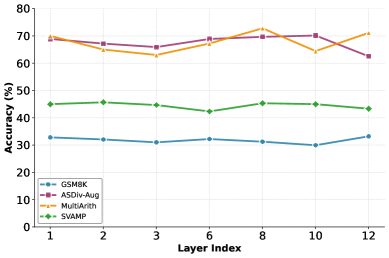

## Line Chart: Accuracy (%) vs. Layer Index for Various Benchmarks

### Overview

The image is a line chart comparing accuracy (%) on four benchmarks (GSM8K, MATH (Aqua), MMLU, and SVAMP) across layer indices from 1 to 12. Values are plotted at layers 1, 2, 3, 6, 8, 10, and 12. The chart shows how accuracy on each benchmark changes as the layer index increases.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.
* **X-Axis:**
  * **Title:** "Layer Index"
  * **Scale:** Linear, with major tick marks and labels at indices 1, 2, 3, 6, 8, 10, and 12.
* **Y-Axis:**
  * **Title:** "Accuracy (%)"
  * **Scale:** Linear, from 0 to 80, with major tick marks every 10 units.
* **Legend:**
  * **Position:** Bottom-left corner of the chart area.
  * **Entries (top to bottom as listed in the legend):**
    1. **GSM8K:** Blue line with circle markers.
    2. **MATH (Aqua):** Orange line with square markers.
    3. **MMLU:** Purple line with diamond markers.
    4. **SVAMP:** Green line with triangle markers.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

1. **GSM8K (Blue line, circles):**
   * **Trend:** Relatively flat, hovering around 30% (between roughly 29% and 32%) with minor fluctuations.
   * **Data Points:**
     * Layer 1: ~32%
     * Layer 2: ~31%
     * Layer 3: ~30%
     * Layer 6: ~31%
     * Layer 8: ~30%
     * Layer 10: ~29%
     * Layer 12: ~32%

2. **MATH (Aqua) (Orange line, squares):**
   * **Trend:** Falls from ~69% at layer 1 to a low of ~62% at layer 3, climbs to a peak of ~73% at layer 8, dips to ~64% at layer 10, and recovers to ~71% at layer 12. It is the highest-scoring series only at layers 8 and 12; MMLU is higher at every other plotted layer.
   * **Data Points:**
     * Layer 1: ~69%
     * Layer 2: ~65%
     * Layer 3: ~62%
     * Layer 6: ~68%
     * Layer 8: ~73% (Peak)
     * Layer 10: ~64%
     * Layer 12: ~71%

3. **MMLU (Purple line, diamonds):**
   * **Trend:** Starts high (~70%), dips to ~66% at layer 3, recovers to ~69% to 70% from layer 6 through layer 10, then drops sharply to ~61% at layer 12.
   * **Data Points:**
     * Layer 1: ~70%
     * Layer 2: ~68%
     * Layer 3: ~66%
     * Layer 6: ~69%
     * Layer 8: ~70%
     * Layer 10: ~70%
     * Layer 12: ~61% (Significant drop)

4. **SVAMP (Green line, triangles):**
   * **Trend:** Very stable and flat, staying between roughly 42% and 46% across all layers.
   * **Data Points:**
     * Layer 1: ~45%
     * Layer 2: ~46%
     * Layer 3: ~45%
     * Layer 6: ~42%
     * Layer 8: ~45%
     * Layer 10: ~45%
     * Layer 12: ~43%
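The approximate readings above can be collected into a small Python structure to cross-check the trend descriptions. This is a minimal sketch: the values are the eyeballed estimates from this section, not the chart's underlying data, and the names `LAYERS`, `ACCURACY`, `leader_per_layer`, and `spread` are hypothetical. It reports which series scores highest at each plotted layer and the max-minus-min spread of each series in percentage points.

```python
# Approximate readings transcribed from the chart (percent accuracy).
# These are eyeballed estimates, not the chart's underlying data.
LAYERS = [1, 2, 3, 6, 8, 10, 12]
ACCURACY = {
    "GSM8K": [32, 31, 30, 31, 30, 29, 32],
    "MATH (Aqua)": [69, 65, 62, 68, 73, 64, 71],
    "MMLU": [70, 68, 66, 69, 70, 70, 61],
    "SVAMP": [45, 46, 45, 42, 45, 45, 43],
}


def leader_per_layer(acc):
    """Map each plotted layer to the series with the highest reading there."""
    leaders = {}
    for i, layer in enumerate(LAYERS):
        # max() is evaluated immediately, so the lambda sees the current i.
        leaders[layer] = max(acc, key=lambda name: acc[name][i])
    return leaders


def spread(acc):
    """Max minus min reading for each series, in percentage points."""
    return {name: max(values) - min(values) for name, values in acc.items()}


if __name__ == "__main__":
    for layer, name in leader_per_layer(ACCURACY).items():
        print(f"Layer {layer:>2}: {name}")
    print(spread(ACCURACY))
```

With these readings, MMLU leads at layers 1, 2, 3, 6, and 10 and MATH (Aqua) at layers 8 and 12; the spreads are 3 points (GSM8K), 11 (MATH (Aqua)), 9 (MMLU), and 4 (SVAMP). Because several gaps between the top two series are only 1 to 3 points, small reading errors could change individual leaders.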

### Key Observations

* **Performance Hierarchy:** MATH (Aqua) and MMLU consistently achieve the highest accuracy (roughly 61% to 73%), followed by SVAMP (~42% to 46%), with GSM8K lowest (~29% to 32%). These three tiers never cross at any plotted layer.

* **Stability:** SVAMP and GSM8K are remarkably stable, varying by only about 3 to 4 percentage points across layers. In contrast, MATH (Aqua) and MMLU are more volatile, spanning about 11 and 9 points, respectively.

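* **Notable Anomaly:** MMLU drops sharply, by approximately 9 percentage points, from ~70% at Layer 10 to ~61% at Layer 12. MATH (Aqua) shows a dip of similar size between Layers 8 and 10 (~73% to ~64%) but recovers by Layer 12, whereas the MMLU drop occurs at the last plotted layer, with no later point to show a recovery.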

* **Peak Performance:** The highest single accuracy point on the chart is achieved by MATH (Aqua) at Layer 8 (~73%).

* **Layer Sensitivity:** The chart suggests that the performance of MATH (Aqua) and MMLU is more sensitive to the specific layer index than that of SVAMP or GSM8K.
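The arithmetic behind these observations can be reproduced from the approximate readings listed in the Detailed Analysis. The following minimal sketch assumes those eyeballed values are close enough for rough comparison; `ranking_by_mean`, `global_peak`, and `large_drops` are hypothetical helper names, and the 5-point threshold for a "large" drop is an arbitrary choice. It ranks series by mean accuracy over the plotted layers, finds the single highest reading, and lists every drop of at least the threshold between consecutive plotted layers.

```python
# Approximate readings transcribed from the chart (percent accuracy).
# Eyeballed estimates, not the chart's underlying data.
LAYERS = [1, 2, 3, 6, 8, 10, 12]
ACCURACY = {
    "GSM8K": [32, 31, 30, 31, 30, 29, 32],
    "MATH (Aqua)": [69, 65, 62, 68, 73, 64, 71],
    "MMLU": [70, 68, 66, 69, 70, 70, 61],
    "SVAMP": [45, 46, 45, 42, 45, 45, 43],
}


def ranking_by_mean(acc):
    """Series names sorted from highest to lowest mean accuracy."""
    return sorted(acc, key=lambda name: sum(acc[name]) / len(acc[name]), reverse=True)


def global_peak(acc):
    """(series, layer, value) for the single highest reading on the chart."""
    return max(
        ((name, layer, value)
         for name, values in acc.items()
         for layer, value in zip(LAYERS, values)),
        key=lambda item: item[2],
    )


def large_drops(acc, threshold=5):
    """All decreases of at least `threshold` points between consecutive plotted layers."""
    drops = []
    for name, values in acc.items():
        for i in range(len(LAYERS) - 1):
            drop = values[i] - values[i + 1]
            if drop >= threshold:
                drops.append((name, LAYERS[i], LAYERS[i + 1], drop))
    return drops


if __name__ == "__main__":
    print(ranking_by_mean(ACCURACY))
    print(global_peak(ACCURACY))
    print(large_drops(ACCURACY))
```

On these readings the mean ranking is MMLU (~67.7%), MATH (Aqua) (~67.4%), SVAMP (~44.4%), and GSM8K (~30.7%), so the top two are effectively tied. The peak is MATH (Aqua) at layer 8 (~73%), and two drops reach ~9 points: MATH (Aqua) from layer 8 to 10, and MMLU from layer 10 to 12.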

### Interpretation

This chart likely visualizes the performance of a multi-layer neural network model (or models) on different reasoning or knowledge benchmarks. The "Layer Index" probably corresponds to the depth within the model's architecture.

* **What the data suggests:** The model's ability to solve different types of problems (as categorized by the benchmarks) is not uniform and evolves differently across its layers. The higher but more variable accuracy on MATH (Aqua) and MMLU may indicate that the information these tasks depend on is distributed unevenly across depth. The stability of SVAMP and GSM8K suggests these tasks rely on features that are either learned early and preserved, or processed in a largely layer-agnostic manner.

* **How elements relate:** The divergence in trends implies that deeper layers (e.g., 10 to 12) may be specializing or degrading for certain tasks (like MMLU) while other tasks recover (MATH (Aqua) rises from ~64% at layer 10 to ~71% at layer 12). The consistent gap between benchmark groups may reflect differences in task difficulty or in the model's inductive biases.

* **Notable Outlier:** The sharp decline in MMLU accuracy at the last plotted layer (12) is the most striking feature of the chart. It could indicate overfitting, a breakdown in the representation for that specific task at extreme depth, or a mismatch between the model's training objective and the MMLU benchmark in the deepest layers. This warrants further investigation into the model's internal representations at layers 10 through 12.