## Chart Type: Line Chart - Agent Performance in Goal Achievement

### Overview

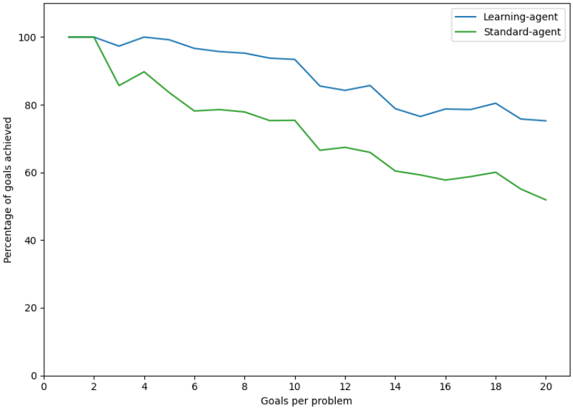

This image displays a line chart comparing the "Percentage of goals achieved" by two different agents, a "Learning-agent" and a "Standard-agent," as the "Goals per problem" increases. The chart illustrates how the performance of these agents changes with increasing problem complexity, represented by the number of goals per problem.

### Components/Axes

The chart consists of a main plotting area, an X-axis, a Y-axis, and a legend.

* **X-axis Label**: "Goals per problem"

* **X-axis Range**: From 0 to 20.

* **X-axis Tick Markers**: Major ticks are present at 0, 2, 4, 6, 8, 10, 12, 14, 16, 18, and 20.

* **Y-axis Label**: "Percentage of goals achieved"

* **Y-axis Range**: From 0 to 100.

* **Y-axis Tick Markers**: Major ticks are present at 0, 20, 40, 60, 80, and 100.

* **Legend**: Located in the top-right corner of the plotting area.

  * **Blue Line**: Labeled "Learning-agent"

  * **Green Line**: Labeled "Standard-agent"

### Detailed Analysis

The chart presents two distinct data series, each representing the performance of an agent.

**1. Learning-agent (Blue Line)**

The blue line, representing the "Learning-agent," generally shows a high percentage of goals achieved, starting at 100% and gradually declining with some fluctuations.

* **Trend**: The "Learning-agent" starts at peak performance and stays within about three percentage points of it through 6 goals per problem, then declines gradually, with some fluctuation, as the number of goals per problem increases. From 3 goals per problem onward it outperforms the "Standard-agent" at every data point.

* **Data Points (approximate values for 'Goals per problem' vs. 'Percentage of goals achieved'):**

* 1 Goal/problem: ~100%

* 2 Goals/problem: ~99%

* 3 Goals/problem: ~97%

* 4 Goals/problem: ~100%

* 5 Goals/problem: ~99%

* 6 Goals/problem: ~97%

* 7 Goals/problem: ~95%

* 8 Goals/problem: ~94%

* 9 Goals/problem: ~92%

* 10 Goals/problem: ~92%

* 11 Goals/problem: ~85%

* 12 Goals/problem: ~84%

* 13 Goals/problem: ~86%

* 14 Goals/problem: ~85%

* 15 Goals/problem: ~78%

* 16 Goals/problem: ~79%

* 17 Goals/problem: ~79%

* 18 Goals/problem: ~81%

* 19 Goals/problem: ~76%

* 20 Goals/problem: ~76%

**2. Standard-agent (Green Line)**

The green line, representing the "Standard-agent," also starts at 100% but declines more steeply and more persistently than the "Learning-agent."

* **Trend**: The "Standard-agent" begins with perfect performance but drops about 15 percentage points between 2 and 3 goals per problem. Its performance then follows a more pronounced downward trend as problem complexity increases, staying below the "Learning-agent" at every data point beyond 2 goals per problem.

* **Data Points (approximate values for 'Goals per problem' vs. 'Percentage of goals achieved'):**

* 1 Goal/problem: ~100%

* 2 Goals/problem: ~100%

* 3 Goals/problem: ~85%

* 4 Goals/problem: ~89%

* 5 Goals/problem: ~82%

* 6 Goals/problem: ~78%

* 7 Goals/problem: ~79%

* 8 Goals/problem: ~77%

* 9 Goals/problem: ~76%

* 10 Goals/problem: ~76%

* 11 Goals/problem: ~67%

* 12 Goals/problem: ~68%

* 13 Goals/problem: ~65%

* 14 Goals/problem: ~60%

* 15 Goals/problem: ~59%

* 16 Goals/problem: ~57%

* 17 Goals/problem: ~58%

* 18 Goals/problem: ~60%

* 19 Goals/problem: ~55%

* 20 Goals/problem: ~52%
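
The two series listed above can be transcribed into a small script for side-by-side comparison. The following is a minimal sketch in Python. The values are the approximate readings from this description, not exact source data, and the names `learning`, `standard`, `gap_by_goals`, and `lead_from` are illustrative choices rather than anything taken from the chart.

```python
# Approximate readings from the chart (percentage of goals achieved),
# ordered by goals per problem 1..20. These are visual estimates, not source data.
learning = [100, 99, 97, 100, 99, 97, 95, 94, 92, 92,
            85, 84, 86, 85, 78, 79, 79, 81, 76, 76]
standard = [100, 100, 85, 89, 82, 78, 79, 77, 76, 76,
            67, 68, 65, 60, 59, 57, 58, 60, 55, 52]

# Gap (Learning-agent minus Standard-agent) in percentage points,
# keyed by goals per problem.
gap_by_goals = {
    goals: l - s
    for goals, (l, s) in enumerate(zip(learning, standard), start=1)
}

# Smallest goal count from which the Learning-agent leads at every later point.
lead_from = next(
    g for g in gap_by_goals
    if all(gap_by_goals[h] > 0 for h in gap_by_goals if h >= g)
)

if __name__ == "__main__":
    for goals, gap in gap_by_goals.items():
        print(f"{goals:2d} goals/problem: gap = {gap:+d} points")
    print("Learning-agent leads from", lead_from, "goals per problem onward")
```

Because the inputs are estimates read off the plot, the computed gaps should be treated as accurate to within a point or two at best.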

### Key Observations

* Both agents start at or near 100% goal achievement for 1 and 2 goals per problem (the "Learning-agent" reads ~99% at 2 goals per problem).

* The "Standard-agent" experiences a sharp drop in performance between 2 and 3 goals per problem (from 100% to ~85%), while the "Learning-agent" shows a much smaller dip (from ~99% to ~97%) before recovering.

* From 3 goals per problem onwards, the "Learning-agent" consistently outperforms the "Standard-agent."

* Both agents show a general downward trend in the percentage of goals achieved as the number of goals per problem increases, indicating that higher complexity reduces performance for both.

* The "Learning-agent" maintains a higher floor for its performance, ending at approximately 76% for 20 goals per problem, whereas the "Standard-agent" drops to about 52% for the same complexity.

* The "Learning-agent" exhibits more resilience to increasing complexity: its curve is flatter, losing about 24 percentage points between 1 and 20 goals per problem versus about 48 for the "Standard-agent," and its point-to-point swings are generally smaller.
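
The resilience comparison in the last observation can be checked against the approximate readings. The following is a minimal sketch, assuming the estimated values from the Detailed Analysis section. The helper names `total_decline` and `step_volatility` are hypothetical, and the standard deviation of point-to-point changes is only one possible measure of volatility, chosen here for illustration.

```python
from statistics import pstdev

# Approximate readings from the chart, goals per problem 1..20 (estimates only).
learning = [100, 99, 97, 100, 99, 97, 95, 94, 92, 92,
            85, 84, 86, 85, 78, 79, 79, 81, 76, 76]
standard = [100, 100, 85, 89, 82, 78, 79, 77, 76, 76,
            67, 68, 65, 60, 59, 57, 58, 60, 55, 52]


def total_decline(series):
    """Drop in percentage points from the first to the last reading."""
    return series[0] - series[-1]


def step_volatility(series):
    """Population standard deviation of consecutive differences."""
    steps = [b - a for a, b in zip(series, series[1:])]
    return pstdev(steps)


if __name__ == "__main__":
    for name, series in (("Learning-agent", learning),
                         ("Standard-agent", standard)):
        print(f"{name}: decline = {total_decline(series)} points, "
              f"step volatility = {step_volatility(series):.2f}")
```

With these readings, the "Learning-agent" loses 24 points overall against 48 for the "Standard-agent," and its step-to-step changes are smaller by this particular measure. A different volatility measure, or more precise source data, could shift the comparison.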

### Interpretation

The data suggests that the "Learning-agent" is considerably more robust and effective at handling increasing problem complexity (more goals per problem) than the "Standard-agent."

The near-identical performance at low complexity (1-2 goals/problem) indicates that both agents handle simple tasks about equally well. Beyond 2 goals per problem, however, the "Standard-agent" falters quickly, dropping about 15 percentage points by 3 goals per problem. This could imply that the "Standard-agent" relies on a fixed set of rules or heuristics that become less effective or computationally intractable as the problem space expands.

In contrast, the "Learning-agent" demonstrates a more graceful degradation in performance. While its goal achievement percentage does decrease with complexity, the decline is less steep and its overall performance remains considerably higher. This suggests that the "Learning-agent" possesses mechanisms (e.g., adaptability, generalization, or more sophisticated problem-solving strategies) that allow it to cope better with novel or more complex scenarios. The "Learning-agent" might be leveraging machine learning techniques to adapt its strategy, whereas the "Standard-agent" might be a rule-based or traditional AI system.

The performance gap, which grows from roughly 12 percentage points at 3 goals per problem to roughly 24 at 20 goals per problem (though not monotonically), highlights the potential practical advantage of the "Learning-agent" in real-world applications, where problems often involve multiple interacting objectives. The "Learning-agent" is better equipped to maintain a high level of success even when faced with challenging, multi-goal problems.
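
One way to make the widening gap concrete is to average it over a lower and a higher complexity range. This is a rough sketch based on the approximate readings in this description. The function name `mean_gap` is hypothetical, and the split at 10 goals per problem is an arbitrary choice made for illustration.

```python
# Approximate readings from the chart, goals per problem 1..20 (estimates only).
learning = [100, 99, 97, 100, 99, 97, 95, 94, 92, 92,
            85, 84, 86, 85, 78, 79, 79, 81, 76, 76]
standard = [100, 100, 85, 89, 82, 78, 79, 77, 76, 76,
            67, 68, 65, 60, 59, 57, 58, 60, 55, 52]


def mean_gap(first_goal, last_goal):
    """Average Learning-minus-Standard gap (percentage points)
    over an inclusive range of goals per problem."""
    gaps = [learning[g - 1] - standard[g - 1]
            for g in range(first_goal, last_goal + 1)]
    return sum(gaps) / len(gaps)


early = mean_gap(3, 10)   # moderate complexity, after the gap first opens
late = mean_gap(11, 20)   # higher complexity

if __name__ == "__main__":
    print(f"mean gap, 3-10 goals per problem:  {early:.1f} points")
    print(f"mean gap, 11-20 goals per problem: {late:.1f} points")
```

A larger average in the higher range is consistent with a widening gap, but with estimated values and no error bars it should be read as indicative rather than conclusive.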