
## Diagram: Language Model Decoding Steps

### Overview

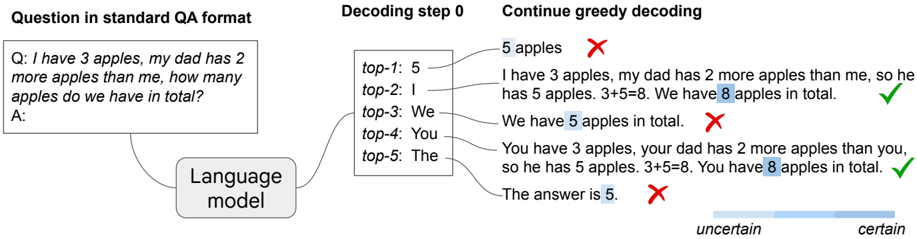

The image illustrates the decoding process of a language model when answering a question. It shows a question in a standard Question-Answer (QA) format, the initial decoding step, and a continuation of the decoding process using greedy decoding. The diagram highlights potential answer candidates and indicates whether they are correct or incorrect. A certainty scale is also present.

### Components/Axes

The diagram is divided into three main sections:

1. **Question in standard QA format:** Displays the input question and the expected answer space.

2. **Decoding step 0:** Shows the top 5 potential next tokens (words) generated by the language model at the initial decoding step.

3. **Continue greedy decoding:** Demonstrates the iterative process of selecting the most probable token at each step, along with correctness indicators.

A certainty scale is located at the bottom, ranging from "uncertain" to "certain".

### Detailed Analysis

**Question in standard QA format:**

* **Q:** "I have 3 apples, my dad has 2 more apples than me, how many apples do we have in total?"

* **A:** (Empty space for the answer)
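
The expected answer can be checked with a few lines of arithmetic. This is only a sketch of the calculation the question asks for; the variable names are illustrative and do not appear in the diagram.

```python
# Worked arithmetic for the question shown in the diagram.
my_apples = 3                    # "I have 3 apples"
dad_apples = my_apples + 2       # "my dad has 2 more apples than me"
total = my_apples + dad_apples   # "how many apples do we have in total?"

print(f"dad: {dad_apples}, total: {total}")
```

The intermediate value (the dad's 5 apples) is the number that the incorrect continuations report as the total.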

**Decoding step 0:**

* **top-1:** 5 (Probability not explicitly shown)

* **top-2:** I

* **top-3:** We

* **top-4:** You

* **top-5:** The
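
The ranking above can be sketched as a top-k selection over a next-token distribution. The diagram does not show probabilities, so the logits below are hypothetical values chosen only to reproduce the displayed order; `softmax` and `top_k` are illustrative helpers, not part of any particular library.

```python
import math

def softmax(logits):
    """Convert a token -> logit mapping into a token -> probability mapping."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(v - peak) for tok, v in logits.items()}
    norm = sum(exps.values())
    return {tok: e / norm for tok, e in exps.items()}

def top_k(probs, k):
    """Return the k most probable (token, probability) pairs, best first."""
    return sorted(probs.items(), key=lambda kv: kv[1], reverse=True)[:k]

# Hypothetical logits: the diagram only shows the ranking, not the values.
logits = {"5": 2.0, "I": 1.5, "We": 1.2, "You": 1.0, "The": 0.8, "A": 0.1}

probs = softmax(logits)
tokens = [tok for tok, _ in top_k(probs, 5)]
print(tokens)
```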

**Continue greedy decoding:**

The diagram shows five continuations, one for each of the top-5 first tokens from decoding step 0, each completed with greedy decoding. Correctness indicators mark each one (red 'X' for incorrect, green checkmark for correct), and the numbers highlighted in blue are the predicted answers.

* "5 apples" - Incorrect (marked with a red 'X')

* "I have 3 apples, my dad has 2 more apples than me, so he has 5 apples. 3+5=8. We have 8 apples in total." - Correct (marked with a green checkmark)

* "We have 5 apples in total." - Incorrect (marked with a red 'X')

* "You have 3 apples, your dad has 2 more apples than you, so he has 5 apples. 3+5=8. You have 8 apples in total." - Correct (marked with a green checkmark)

* "The answer is 5." - Incorrect (marked with a red 'X')
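
The branching shown above (fix one of the top-k first tokens, then continue greedily) can be sketched with a toy model. Everything here is an assumption made for illustration: `TOY_MODEL` is a hand-written lookup table with made-up probabilities, tokens are whole phrases rather than real subword tokens, and `greedy_continue` and `branch_then_greedy` are hypothetical helper names.

```python
# Toy next-token table: prefix (tuple of tokens) -> hypothetical probabilities.
# Any prefix missing from the table ends the sequence.
TOY_MODEL = {
    (): {"5": 0.35, "I": 0.25, "We": 0.20, "You": 0.12, "The": 0.08},
    ("5",): {"apples": 0.9, "<eos>": 0.1},
    ("I",): {"have 3 apples, my dad has 2 more apples than me, "
             "so he has 5 apples. 3+5=8. We have 8 apples in total.": 0.8,
             "<eos>": 0.2},
    ("We",): {"have 5 apples in total.": 0.8, "<eos>": 0.2},
    ("You",): {"have 3 apples, your dad has 2 more apples than you, "
               "so he has 5 apples. 3+5=8. You have 8 apples in total.": 0.8,
               "<eos>": 0.2},
    ("The",): {"answer is 5.": 0.8, "<eos>": 0.2},
}

def next_probs(prefix):
    """Look up the next-token distribution for a prefix."""
    return TOY_MODEL.get(tuple(prefix), {"<eos>": 1.0})

def greedy_continue(prefix, max_steps=10):
    """Repeatedly append the most probable next token until <eos>."""
    tokens = list(prefix)
    for _ in range(max_steps):
        probs = next_probs(tokens)
        best = max(probs, key=probs.get)
        if best == "<eos>":
            break
        tokens.append(best)
    return " ".join(tokens)

def branch_then_greedy(k):
    """Start one branch per top-k first token, then decode each greedily."""
    first = sorted(next_probs([]).items(), key=lambda kv: kv[1], reverse=True)[:k]
    return [greedy_continue([tok]) for tok, _ in first]

outputs = branch_then_greedy(5)
for text in outputs:
    print(text)
```

In this sketch `outputs[0]` is the ordinary greedy answer, since it starts from the top-1 token; the remaining entries are the alternative branches.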

**Certainty Scale:**

The scale is a gradient from "uncertain" on the left to "certain" on the right.
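
The diagram does not state how certainty is measured. One possible proxy, offered here purely as an assumption, is the gap between the two most probable tokens at the position where the answer is produced: a large gap means the model strongly prefers one token. The function name `top2_margin` and both example distributions are hypothetical.

```python
def top2_margin(probs):
    """Gap between the two largest probabilities (larger = more certain)."""
    ranked = sorted(probs.values(), reverse=True)
    if len(ranked) < 2:
        return ranked[0] if ranked else 0.0
    return ranked[0] - ranked[1]

# Hypothetical distributions over the answer token; not taken from the diagram.
confident = {"8": 0.90, "5": 0.05, "6": 0.05}
unsure = {"5": 0.40, "8": 0.35, "6": 0.25}

print(top2_margin(confident), top2_margin(unsure))
```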

### Key Observations

* The language model initially considers several possible next tokens: a number ("5"), pronouns ("I", "We", "You"), and an article ("The").

* Greedy decoding, where the most probable token is selected at each step, does not always lead to the correct answer: here the path starting from the top-1 token "5" yields "5 apples", which is incorrect.

* The two correct completions (starting from "I" and "You") spell out intermediate steps (the dad has 5 apples, then 3+5=8), while the three incorrect completions state 5 directly.

* The certainty scale suggests that the model's confidence in its predictions can vary.

### Interpretation

This diagram shows how the choice of the first token shapes the rest of a language model's answer. The model does not look up the answer; it generates text token by token from a probability distribution conditioned on the question and on what it has already produced. The three incorrect continuations ("5 apples", "We have 5 apples in total.", "The answer is 5.") state a number directly and stop at the intermediate quantity 5, the dad's apples, which is a plausible but wrong total. The two correct continuations, which begin with "I" and "You", restate the problem, compute the dad's count, and then add 3+5=8.

The correct answer is therefore reachable without changing the prompt, but not along the purely greedy path that starts from the top-1 token. The certainty scale suggests that the model's confidence varies across continuations, so it is worth evaluating the intermediate steps and the confidence level as well as the final answer. The simple arithmetic problem makes each continuation easy to verify.