## Diagram: Language Model Greedy Decoding Process

### Overview

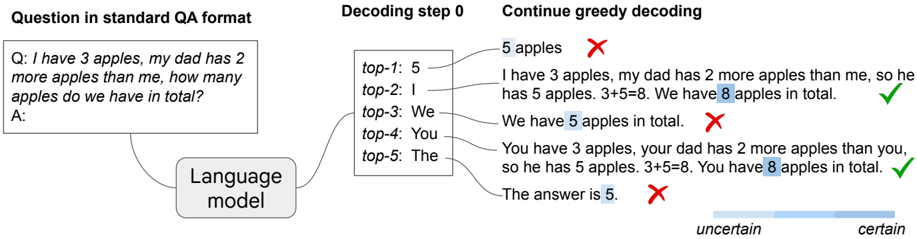

The image is a technical diagram illustrating the process of greedy decoding in a language model, using a simple arithmetic word problem as an example. It demonstrates how the model's top-5 token predictions at the first decoding step lead to different generated answers, some correct and some incorrect, and visualizes the model's confidence in these outcomes.

### Components/Axes

The diagram is organized into three main vertical sections, connected by arrows indicating flow:

1. **Left Section (Input):**
   * **Label:** "Question in standard QA format"
   * **Content:** A box containing a question and answer prompt.
   * **Text:** "Q: I have 3 apples, my dad has 2 more apples than me, how many apples do we have in total? A:"
   * **Connection:** An arrow points from this box to a central box labeled "Language model".

2. **Middle Section (Decoding Step 0):**
   * **Label:** "Decoding step 0"
   * **Content:** A box showing the language model's top-5 predicted next tokens and their associated probability scores.
   * **Data Table:**

     | Rank | Token | Approx. Probability Score |
     | :--- | :--- | :--- |
     | top-1 | 5 | 0.35 |
     | top-2 | I | 0.25 |
     | top-3 | We | 0.15 |
     | top-4 | You | 0.10 |
     | top-5 | The | 0.05 |

3. **Right Section (Output Generation):**
   * **Label:** "Continue greedy decoding"
   * **Content:** Five generated text sequences, each starting with one of the top-5 tokens from the middle section. Each sequence is followed by a symbol indicating correctness (✅ for correct, ❌ for incorrect).
   * **Generated Text & Outcomes:**
     1. **Token "5":** "5 apples" ❌
     2. **Token "I":** "I have 3 apples, my dad has 2 more apples than me, so he has 5 apples. 3+5=8. We have 8 apples in total." ✅
     3. **Token "We":** "We have 5 apples in total." ❌
     4. **Token "You":** "You have 3 apples, your dad has 2 more apples than you, so he has 5 apples. 3+5=8. You have 8 apples in total." ✅
     5. **Token "The":** "The answer is 5." ❌
   * **Visual Highlight:** The numbers "5" and "8" within the generated text are highlighted with a light blue background.
   * **Confidence Gradient:** A horizontal bar at the bottom of this section, labeled "uncertain" on the left and "certain" on the right, with a blue gradient fill that is stronger on the right side.
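
The flow from the middle section to the right section can be sketched as "branch on the top-k tokens at step 0, then finish each branch with greedy decoding". This is a minimal sketch under stated assumptions: `top_k`, `branch_then_greedy`, and the `continue_greedily` callback are hypothetical names, the probabilities are the approximate values from the table above, and a lookup of the diagram's own continuations stands in for a real model.

```python
from typing import Callable

def top_k(dist: dict[str, float], k: int) -> list[tuple[str, float]]:
    """Return the k most probable (token, probability) pairs, highest first."""
    return sorted(dist.items(), key=lambda item: item[1], reverse=True)[:k]

def branch_then_greedy(
    step0_dist: dict[str, float],
    k: int,
    continue_greedily: Callable[[str], str],
) -> list[tuple[str, float, str]]:
    """Branch on the top-k tokens at step 0, then finish each branch greedily.

    `continue_greedily` is a hypothetical callback that runs greedy (argmax)
    decoding from the given first token and returns the full generated text.
    """
    return [(tok, p, continue_greedily(tok)) for tok, p in top_k(step0_dist, k)]

# Approximate step-0 probabilities, as read off the diagram.
step0 = {"5": 0.35, "I": 0.25, "We": 0.15, "You": 0.10, "The": 0.05}

# Stand-in for a real model: the diagram's continuation for each first token.
diagram_continuations = {
    "5": "5 apples",
    "I": "I have 3 apples, my dad has 2 more apples than me, so he has 5 apples. "
         "3+5=8. We have 8 apples in total.",
    "We": "We have 5 apples in total.",
    "You": "You have 3 apples, your dad has 2 more apples than you, so he has "
           "5 apples. 3+5=8. You have 8 apples in total.",
    "The": "The answer is 5.",
}

branches = branch_then_greedy(
    step0, k=5, continue_greedily=lambda t: diagram_continuations[t]
)
for token, prob, text in branches:
    print(f"top token {token!r} (p={prob:.2f}): {text}")
```

Pure greedy decoding corresponds to keeping only `branches[0]`, the "5" branch; the other four branches are reached only by looking beyond the top-1 token at step 0.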

### Detailed Analysis

The diagram traces how the token chosen at a single decoding step (step 0) shapes the final answer.

* **Input:** A math word problem whose answer is the speaker's 3 apples plus the dad's 3 + 2 = 5 apples, i.e. 3 + (3 + 2) = 8.

* **Model's First Guess (top-1):** The token "5" (probability ~0.35), which leads directly to the incorrect answer "5 apples". Five is the dad's count alone, not the combined total.

* **Correct Paths:** The tokens "I" (top-2) and "You" (top-4) initiate coherent reasoning chains that correctly parse the problem, perform the calculation (3+5=8), and state the correct total (8 apples).

* **Incorrect Paths:** The tokens "We" (top-3) and "The" (top-5) lead to incorrect final answers (5 apples).

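* **Confidence Visualization:** The gradient bar suggests that the model is more confident in the final answer (8) at the end of the longer, correct reasoning chains than in the answer (5) given by the short, incorrect ones; it plausibly serves as a legend for the highlighting of those answer values.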
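
The ✅/❌ marks can be reproduced with a few lines of arithmetic. This sketch assumes, for simplicity, that the last integer in each generated text is its final answer; the `final_answer` helper is hypothetical and not part of the diagram.

```python
import re
from typing import Optional

# Arithmetic behind the question: dad has 3 + 2 = 5 apples, total is 3 + 5 = 8.
my_apples = 3
dads_apples = my_apples + 2
expected_total = my_apples + dads_apples

def final_answer(text: str) -> Optional[int]:
    """Simplifying assumption: the last integer in the text is the final answer."""
    numbers = re.findall(r"\d+", text)
    return int(numbers[-1]) if numbers else None

# Generated texts from the diagram, in top-1 .. top-5 order.
outputs = [
    "5 apples",
    "I have 3 apples, my dad has 2 more apples than me, so he has 5 apples. "
    "3+5=8. We have 8 apples in total.",
    "We have 5 apples in total.",
    "You have 3 apples, your dad has 2 more apples than you, so he has "
    "5 apples. 3+5=8. You have 8 apples in total.",
    "The answer is 5.",
]

grades = [final_answer(text) == expected_total for text in outputs]
print(grades)  # [False, True, False, True, False]
```

Every incorrect path stops at 5, the intermediate value, which is why the last-integer heuristic is enough to separate the two groups here.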

### Key Observations

1. **Non-Intuitive Top Prediction:** The most probable single token ("5") leads to an incorrect answer, while less probable tokens ("I", "You") lead to correct solutions.

2. **Importance of Reasoning Chain:** Both correct answers come from full sequences that reason step by step, while all three incorrect paths state a number without showing any work.

3. **Highlighting of Key Values:** The values that carry the answer are highlighted in the generated text: 5 (the dad's count in the correct paths, and the final answer in the incorrect ones) and 8 (the correct total).

4. **Confidence Correlation:** The diagram implies that paths containing explicit reasoning end in answers the model is more certain about than the bare answers produced by the other paths.

### Interpretation

This diagram serves as a pedagogical tool to explain the mechanics and pitfalls of greedy decoding in language models. It demonstrates that:

* **Greedy decoding is myopic:** Selecting the single most probable next token at each step (the "top-1" choice) does not guarantee the best final outcome. The locally optimal choice ("5") can lead to a globally incorrect answer.

* **The value of exploration:** Considering alternative, slightly less probable first tokens (like "I" or "You") can unlock reasoning pathways that lead to correct solutions. This hints at why decoding strategies that explore several paths, such as the top-k branching shown here or beam search, can outperform pure greedy decoding.

* **Language models as reasoners:** On the correct paths, the model does not just retrieve an answer; it writes out the intermediate steps, first identifying the dad's apple count (5) and then summing the two amounts (3+5=8).

* **Uncertainty and confidence:** The gradient bar visually argues that the model can be more "certain" about the answer at the end of a complete, logical explanation than about a bare, incorrect number, even though that number came from its top-1 initial prediction. The diagram therefore advocates evaluating model outputs by the reasoning that supports the final answer, not by the answer alone.
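
The diagram does not say how "certainty" is measured. One plausible measure, assumed here, is the average gap between the top-1 and top-2 token probabilities at a path's answer tokens; ranking paths by that gap is then an alternative to trusting the top-1 first token. The per-path probabilities below are invented for illustration only, and `answer_confidence` is a hypothetical helper.

```python
def answer_confidence(answer_token_probs: list[tuple[float, float]]) -> float:
    """Mean gap between the top-1 and top-2 probabilities over the answer tokens.

    A larger gap means the model strongly prefers its chosen answer token(s).
    """
    gaps = [top1 - top2 for top1, top2 in answer_token_probs]
    return sum(gaps) / len(gaps)

# Hypothetical (top-1, top-2) probabilities at each path's answer token,
# keyed by the path's first token. These numbers are invented for
# illustration and are NOT read from the diagram.
paths = {
    "5": [(0.40, 0.35)],
    "I": [(0.95, 0.03)],
    "We": [(0.45, 0.30)],
    "You": [(0.90, 0.05)],
    "The": [(0.50, 0.30)],
}

ranked = sorted(paths, key=lambda tok: answer_confidence(paths[tok]), reverse=True)
print(ranked)  # ['I', 'You', 'The', 'We', '5']
```

Under these invented numbers the two reasoning paths rank first, matching the pattern the gradient bar implies; with a real model the probabilities would come from its output distribution at the answer positions.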