## Flowchart: Language Model Decoding Process for a Math Word Problem

### Overview

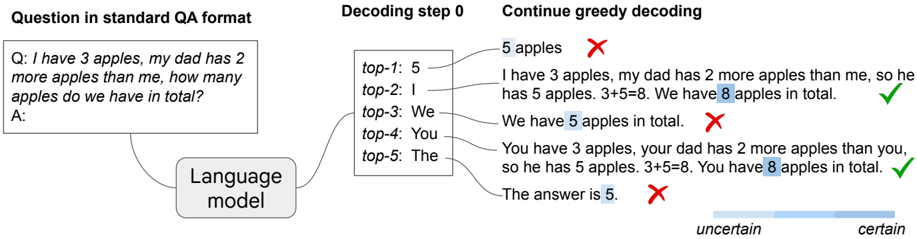

The image illustrates a language model's decoding process on a math word problem. It shows the question, the top-5 predictions at the first decoding step, the greedy continuation generated from each prediction (annotated as correct or incorrect), and an uncertainty bar. The problem asks for the total number of apples when one person has 3 apples and the other has 2 more (that is, 5), so the correct answer is 3 + 5 = 8.
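
The arithmetic the question requires is short enough to check directly. A minimal Python sketch (the variable names are illustrative, not taken from the figure):

```python
# Quantities stated in the question
my_apples = 3
dad_apples = my_apples + 2  # "my dad has 2 more apples than me"

# The question asks for the combined total, not the dad's count
total = my_apples + dad_apples

print(f"dad: {dad_apples}, total: {total}")  # prints "dad: 5, total: 8"
```

The intermediate value 5 is exactly the number that the incorrect paths in the figure report as their final answer.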

### Components/Axes

1. **Question Section** (Top-left):

   - Text: "Q: I have 3 apples, my dad has 2 more apples than me, how many apples do we have in total? A:"

   - Format: Standard Q/A prompt, with the answer left open after "A:"

2. **Decoding Step 0** (Center-left):

   - Top-5 Predictions:

     - Top-1: "5 apples" (red cross ✗)

     - Top-2: "I"

     - Top-3: "We"

     - Top-4: "You"

     - Top-5: "The"

   - Annotation: Red crosses indicate incorrect answers

3. **Greedy Decoding** (Right):

   - Generated sentences with annotations (each begins with one of the step-0 predictions "I", "We", "You", "The", in rank order):

     - "I have 3 apples, my dad has 2 more apples than me, so he has 5 apples. 3+5=8. We have 8 apples in total." (green check ✓)

     - "We have 5 apples in total." (red cross ✗)

     - "You have 3 apples, your dad has 2 more apples than you, so he has 5 apples. 3+5=8. You have 8 apples in total." (green check ✓)

     - "The answer is 5." (red cross ✗)

   - Uncertainty bar (bottom):

     - Gradient from light blue (uncertain) to dark blue (certain)

     - Correct answers (✓) align with darker blue regions

     - Incorrect answers (✗) align with lighter blue regions
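
Each sentence in the Greedy Decoding panel begins with one of the lower-ranked step-0 predictions, which suggests the figure branches on the top-k first tokens and then decodes greedily from each. A minimal Python sketch of that procedure, assuming a hypothetical model interface (any function mapping a token prefix to a dictionary of next-token probabilities); `top_k_branches` and `toy_model` are illustrative names, and the toy distributions are invented rather than read off the figure:

```python
from typing import Callable, Dict, List

# Hypothetical model interface: maps a token prefix to a dict of
# next-token probabilities. A real LM would compute this from its logits.
NextTokenFn = Callable[[List[str]], Dict[str, float]]


def top_k_branches(model: NextTokenFn, prompt: List[str], k: int,
                   max_new_tokens: int, eos: str = "<eos>") -> List[List[str]]:
    """Branch on the k most probable first tokens, then decode each greedily."""
    first = model(prompt)
    # Step 0: the k highest-probability first tokens (stable sort keeps ties in order)
    starts = sorted(first, key=first.get, reverse=True)[:k]
    paths = []
    for token in starts:
        path = [token]
        # Later steps: plain greedy decoding, always taking the argmax token
        while len(path) < max_new_tokens and path[-1] != eos:
            dist = model(prompt + path)
            path.append(max(dist, key=dist.get))
        paths.append(path)
    return paths


def toy_model(prefix: List[str]) -> Dict[str, float]:
    # Invented distributions for illustration; not the figure's numbers
    if prefix[-1] == "A:":
        return {"5": 0.5, "I": 0.3, "We": 0.2}
    if prefix[-1] == "5":
        return {"apples": 0.8, "<eos>": 0.2}
    return {"<eos>": 1.0}


prompt = ["Q:", "how", "many?", "A:"]
print(top_k_branches(toy_model, prompt, k=2, max_new_tokens=5))
# [['5', 'apples', '<eos>'], ['I', '<eos>']]
```

Setting `k=1` reduces this to ordinary greedy decoding, which keeps only the top-1 path.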

### Detailed Analysis

1. **Top-5 Predictions**:

   - The top-1 prediction, "5 apples", is incorrect: 5 is the dad's count, not the total

   - Pronouns ("I", "We", "You") and the article "The" occupy ranks 2 to 5

   - None of the top-5 predictions is the correct answer, 8

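2. **Greedy Decoding Outputs**:

   - The first correct answer appears on the branch that starts with the top-2 token "I" (✓)

   - Correctness alternates across the ranked branches: top-1 ✗, top-2 ✓, top-3 ✗, top-4 ✓, top-5 ✗

   - Both correct branches spell out the intermediate step ("so he has 5 apples. 3+5=8"), while the incorrect branches state a number directly

   - The lowest-ranked branch ("The answer is 5.") ends on the same incorrect answer as the top-1 path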

3. **Uncertainty Visualization**:

   - Correct answers coincide with higher certainty (darker blue)

   - Incorrect answers coincide with lower certainty (lighter blue)

   - The shading suggests the model is more confident in its final answer on the branches that reason their way to 8
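
The ✓/✗ annotations can be reproduced from the decoded text alone. The sketch below assumes that the last integer in each path is its answer (a simple heuristic, not something the figure specifies) and compares it with the correct total of 8; `final_number` is a hypothetical helper name:

```python
import re

# Decoded paths as listed in the figure, in rank order (top-1 to top-5)
paths = [
    "5 apples",
    "I have 3 apples, my dad has 2 more apples than me, so he has 5 apples. "
    "3+5=8. We have 8 apples in total.",
    "We have 5 apples in total.",
    "You have 3 apples, your dad has 2 more apples than you, so he has 5 apples. "
    "3+5=8. You have 8 apples in total.",
    "The answer is 5.",
]


def final_number(text: str):
    """Return the last integer in the text, or None if it contains none."""
    numbers = re.findall(r"\d+", text)
    return int(numbers[-1]) if numbers else None


EXPECTED = 8  # 3 + (3 + 2)
marks = ["✓" if final_number(p) == EXPECTED else "✗" for p in paths]
print(marks)  # ['✗', '✓', '✗', '✓', '✗']
```

The heuristic works here because every path ends with its answer; paths that mention other numbers after the answer would need a stricter extraction rule.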

### Key Observations

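1. **Initial Prediction Bias**: The top-ranked path answers "5 apples", the dad's count rather than the total, without any intermediate reasoning

2. **Pronoun-Led Paths**: Pronouns rank below the numeric top-1 prediction, yet two of them ("I", "You") open the only branches that reach the correct answer

3. **Correct Lower-Ranked Branches**: The model does not revise a single answer; instead, some lower-ranked first tokens lead to correct step-by-step solutions

4. **Uncertainty Correlation**: Branches that reach the correct answer show higher confidence

5. **Persistent Error**: The top-1 path, which plain greedy decoding would return, is wrong, and the lowest-ranked branch repeats the same wrong answer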
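
The figure does not say how the shading in the uncertainty bar is computed. One simple measure, assumed here rather than taken from the figure, is the average gap between the two most probable tokens at each position of the final answer: a large gap means the model strongly prefers one token. A minimal sketch (`answer_confidence` is a hypothetical name):

```python
from typing import Sequence


def answer_confidence(answer_token_probs: Sequence[Sequence[float]]) -> float:
    """Average (top-1 minus top-2) probability margin over answer tokens.

    Each inner sequence holds the next-token probabilities (or at least the
    largest few) at one position inside the final answer.
    """
    if not answer_token_probs:
        raise ValueError("need at least one answer token")
    margins = []
    for probs in answer_token_probs:
        ranked = sorted(probs, reverse=True)
        runner_up = ranked[1] if len(ranked) > 1 else 0.0
        margins.append(ranked[0] - runner_up)
    return sum(margins) / len(margins)


# Hypothetical distributions for a two-token answer, not read off the figure
print(answer_confidence([[0.9, 0.05, 0.05], [0.6, 0.3, 0.1]]))  # about 0.575
```

Under this measure, an answer whose tokens are nearly tied with alternatives scores close to 0, which would correspond to the lighter end of the bar.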

### Interpretation

The diagram reveals critical limitations in the language model's mathematical reasoning capabilities:

1. **Answer-First Errors**: The model can do the addition (3+5=8) when it reasons step by step, yet its top-ranked path commits immediately to 5

2. **Ranking Mismatch**: The first tokens that lead to correct reasoning ("I", "You") rank below the prediction that commits to a wrong number

3. **Decoding Instability**: Whether the model reaches 8 depends on which first token is followed; three of the five branches end at 5

4. **Confidence Calibration**: The uncertainty bar suggests the model is more confident when it is correct, but the top-1 ranking does not reflect this, so greedy decoding still returns an incorrect answer

5. **Instruction Following**: The model restates the problem and computes 3+5=8 on two branches, but does not do so consistently across branches
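
If confidence tracks correctness as the uncertainty bar suggests, one way to act on it is to decode several branches and keep the one the model is most confident about, instead of always keeping the top-1 path. A minimal sketch of that selection step; `pick_most_confident` is a hypothetical name and the scores are placeholders, not values from the figure:

```python
from typing import List, Tuple


def pick_most_confident(scored_paths: List[Tuple[str, float]]) -> str:
    """Return the decoded text whose confidence score is highest."""
    if not scored_paths:
        raise ValueError("no decoded paths to choose from")
    best_text, _ = max(scored_paths, key=lambda pair: pair[1])
    return best_text


# Hypothetical (path, confidence) pairs for illustration only
scored = [
    ("5 apples", 0.2),
    ("3+5=8. We have 8 apples in total.", 0.9),
    ("The answer is 5.", 0.3),
]
print(pick_most_confident(scored))  # 3+5=8. We have 8 apples in total.
```

Ties go to the earliest (highest-ranked) path, because `max` returns the first maximal element.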

This analysis highlights the need for stronger mathematical reasoning in language models handling quantitative tasks, and for decoding methods that use the model's confidence signal rather than relying on the top-1 prediction alone.