## Configuration/Data Structure: Software Configuration Settings

### Overview

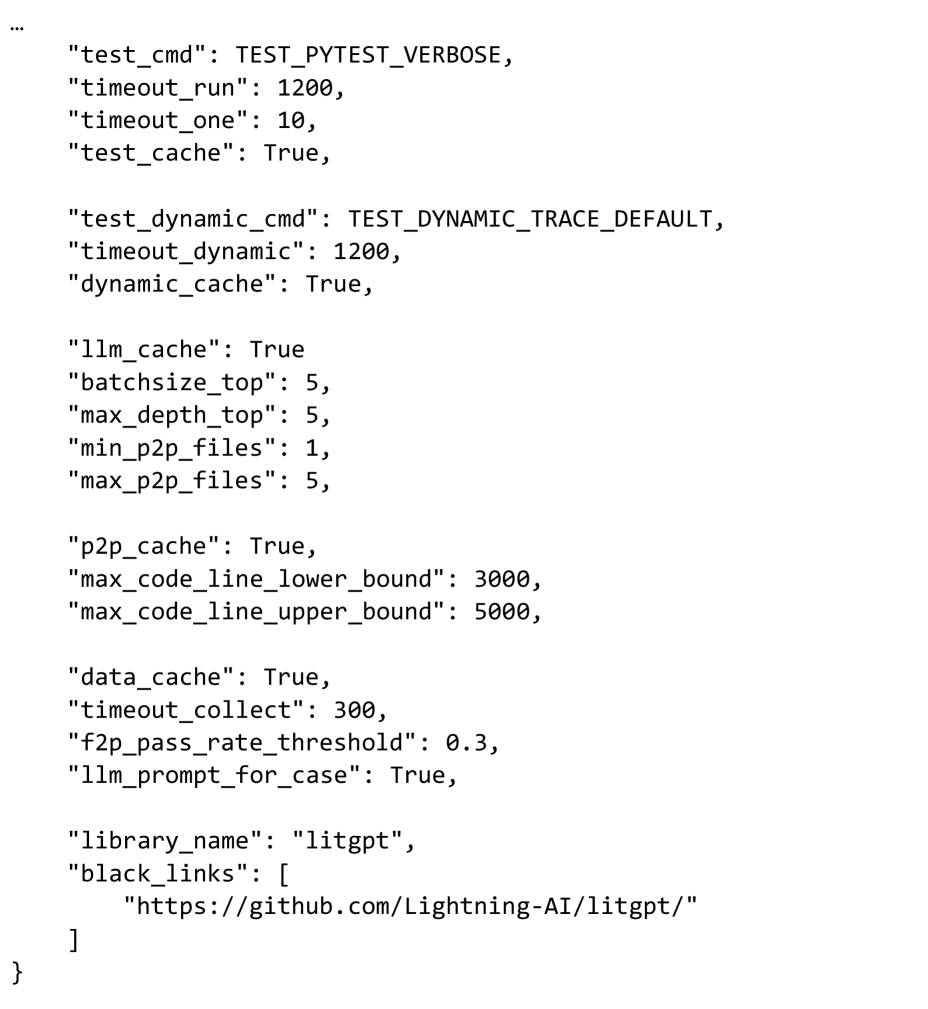

This image displays a block of text formatted as a key-value pair structure, highly resembling a JSON object or a configuration file. It outlines various parameters and settings, likely for a software application, testing framework, or a system involving Large Language Models (LLMs) and caching mechanisms. The settings cover aspects like test commands, timeouts, caching strategies, batch sizes, file limits, code line bounds, data collection, and library metadata.

### Structure/Format

The content is a single block of text. The opening curly brace `{` is implied (the first line is indented as if it were already inside an object), and an explicit closing curly brace `}` appears at the bottom left. Each line holds one key-value pair: a double-quoted string key, a colon, and a value. Values are strings, integers, a float, booleans, or a list of strings. The booleans are written as `True`, which is Python literal syntax rather than strict JSON (`true`). Pairs are separated by commas, except for the last pair in the object and the last element of the list. Indentation is used to visually group related settings.

### Content Details

The following key-value pairs are extracted:

```python
{
    "test_cmd": "TEST_PYTEST_VERBOSE",
    "timeout_run": 1200,
    "timeout_one": 10,
    "test_cache": True,
    "test_dynamic_cmd": "TEST_DYNAMIC_TRACE_DEFAULT",
    "timeout_dynamic": 1200,
    "dynamic_cache": True,
    "llm_cache": True,
    "batchsize_top": 5,
    "max_depth_top": 5,
    "min_p2p_files": 1,
    "max_p2p_files": 5,
    "p2p_cache": True,
    "max_code_line_lower_bound": 3000,
    "max_code_line_upper_bound": 5000,
    "data_cache": True,
    "timeout_collect": 300,
    "f2p_pass_rate_threshold": 0.3,
    "llm_prompt_for_case": True,
    "library_name": "litgpt",
    "black_links": [
        "https://github.com/Lightning-AI/litgpt/"
    ]
}
```
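Because the booleans are capitalized (`True`), the extracted text is a Python dict literal rather than strict JSON, so a JSON parser would reject it. The following is a minimal sketch of loading such text safely, assuming it is available as a string. The name `CONFIG_TEXT`, the helper `load_config`, and the abbreviated contents are illustrative and do not come from the image.

```python
import ast
import json

# Abbreviated copy of the extracted settings; CONFIG_TEXT is a hypothetical name.
CONFIG_TEXT = """
{
    "test_cmd": "TEST_PYTEST_VERBOSE",
    "timeout_run": 1200,
    "test_cache": True,
    "f2p_pass_rate_threshold": 0.3,
    "library_name": "litgpt",
    "black_links": [
        "https://github.com/Lightning-AI/litgpt/"
    ]
}
"""


def load_config(text: str) -> dict:
    """Parse a Python-style dict literal without executing arbitrary code."""
    config = ast.literal_eval(text.strip())
    if not isinstance(config, dict):
        raise ValueError("expected a dict literal at the top level")
    return config


config = load_config(CONFIG_TEXT)

# Strict JSON parsing fails on the capitalized boolean.
try:
    json.loads(CONFIG_TEXT)
    is_valid_json = True
except json.JSONDecodeError:
    is_valid_json = False
```

`ast.literal_eval` accepts only literals (strings, numbers, booleans, lists, dicts, and so on), which makes it a safer choice than `eval` for text of unknown origin.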

**Detailed Breakdown of Key-Value Pairs:**

* **"test_cmd"**: "TEST_PYTEST_VERBOSE" (String) - Specifies a command for testing, likely using Pytest in verbose mode.

* **"timeout_run"**: 1200 (Integer) - A timeout value, possibly in seconds, for a general test run.

* **"timeout_one"**: 10 (Integer) - A shorter timeout value, perhaps for a single test case or operation.

* **"test_cache"**: True (Boolean) - Indicates that a test-related cache is enabled.

* **"test_dynamic_cmd"**: "TEST_DYNAMIC_TRACE_DEFAULT" (String) - Specifies a command for dynamic testing, using a default trace.

* **"timeout_dynamic"**: 1200 (Integer) - A timeout value for dynamic testing.

* **"dynamic_cache"**: True (Boolean) - Indicates that a dynamic cache is enabled.

* **"llm_cache"**: True (Boolean) - Indicates that a cache specifically for Large Language Models (LLMs) is enabled.

* **"batchsize_top"**: 5 (Integer) - A batch size parameter, possibly for top-level operations or LLM processing.

* **"max_depth_top"**: 5 (Integer) - A maximum depth parameter, likely for a hierarchical structure or processing.

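* **"min_p2p_files"**: 1 (Integer) - The minimum number of "p2p" files. The image does not define the abbreviation; given the `f2p` key elsewhere in the block, it most plausibly means pass-to-pass (as in SWE-bench-style test terminology) rather than peer-to-peer.

* **"max_p2p_files"**: 5 (Integer) - The maximum number of "p2p" files.

* **"p2p_cache"**: True (Boolean) - Indicates that a cache for "p2p" results is enabled.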

* **"max_code_line_lower_bound"**: 3000 (Integer) - A lower bound for the number of code lines.

* **"max_code_line_upper_bound"**: 5000 (Integer) - An upper bound for the number of code lines.

* **"data_cache"**: True (Boolean) - Indicates that a general data cache is enabled.

* **"timeout_collect"**: 300 (Integer) - A timeout value for data collection.

* **"f2p_pass_rate_threshold"**: 0.3 (Float) - A threshold of 0.3 (30%) on an "f2p" pass rate. "f2p" most plausibly means fail-to-pass, though the image does not define it.

* **"llm_prompt_for_case"**: True (Boolean) - Likely enables LLM prompting on a per-case basis; the exact behavior is not stated.

* **"library_name"**: "litgpt" (String) - The name of the library being configured.

* **"black_links"**: ["https://github.com/Lightning-AI/litgpt/"] (List of Strings) - A list of links, here the GitHub repository URL for "litgpt". The key name suggests a blocklist of links to exclude, but the image does not confirm this.
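Several of the settings above come in pairs or have natural ranges, so a typed view with basic consistency checks can catch mistakes early. The sketch below is one way to do that. The class name `FileSelectionSettings` and the validation rules are assumptions made for illustration, not something shown in the image.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileSelectionSettings:
    """Hypothetical typed view over a few of the settings listed above."""

    min_p2p_files: int = 1
    max_p2p_files: int = 5
    max_code_line_lower_bound: int = 3000
    max_code_line_upper_bound: int = 5000
    f2p_pass_rate_threshold: float = 0.3

    def __post_init__(self) -> None:
        # Assumed invariants: each min/lower value must not exceed its max/upper value.
        if self.min_p2p_files > self.max_p2p_files:
            raise ValueError("min_p2p_files must not exceed max_p2p_files")
        if self.max_code_line_lower_bound > self.max_code_line_upper_bound:
            raise ValueError("code line lower bound must not exceed upper bound")
        # Assumed invariant: a pass rate is a fraction between 0 and 1.
        if not 0.0 <= self.f2p_pass_rate_threshold <= 1.0:
            raise ValueError("f2p_pass_rate_threshold must be within [0, 1]")


# The defaults mirror the values in the extracted block.
settings = FileSelectionSettings()
```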

### Key Observations

* **Modular Configuration**: Keys share prefixes and suffixes (`timeout_*`, `*_cache`, `*_p2p_files`), which suggests that the underlying system is organized into separate stages, each with its own settings.

* **Extensive Caching**: Five caching flags are enabled (`test_cache`, `dynamic_cache`, `llm_cache`, `p2p_cache`, `data_cache`), suggesting that intermediate results are expensive to recompute.

* **Timeouts**: Several timeout parameters (`timeout_run`, `timeout_one`, `timeout_dynamic`, `timeout_collect`) are defined, suggesting operations that could hang or run too long.

* **LLM Integration**: `llm_cache` and `llm_prompt_for_case` show that the system uses Large Language Models. `batchsize_top` and `max_depth_top` may relate to the same component, but the image does not say so.

* **Code Analysis/Processing**: `max_code_line_lower_bound` and `max_code_line_upper_bound` imply that the system analyzes or processes code, possibly in connection with the "p2p" settings ("p2p" is undefined in the image and most plausibly means pass-to-pass tests rather than peer-to-peer).

* **Library Specifics**: The `library_name` "litgpt" and its associated GitHub link give the configuration a clear target: Lightning AI's open-source library for working with large language models.
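The naming patterns noted above make it straightforward to group related settings programmatically. The sketch below collects the cache flags and timeouts by key suffix and prefix. The variable names are illustrative, and only the relevant keys from the extracted block are reproduced.

```python
# Subset of the extracted settings that follow the *_cache and timeout_* patterns.
config = {
    "timeout_run": 1200,
    "timeout_one": 10,
    "test_cache": True,
    "timeout_dynamic": 1200,
    "dynamic_cache": True,
    "llm_cache": True,
    "p2p_cache": True,
    "data_cache": True,
    "timeout_collect": 300,
}

# Group keys by naming convention.
cache_flags = {key: value for key, value in config.items() if key.endswith("_cache")}
timeouts = {key: value for key, value in config.items() if key.startswith("timeout_")}

all_caches_enabled = all(cache_flags.values())
longest_timeout = max(timeouts.values())
```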

### Interpretation

This configuration describes how a system that targets the `litgpt` library (Lightning AI's open-source library for working with large language models) is intended to operate. Every `_cache` setting is `True`, which points to reuse of results across phases: standard testing, dynamic testing, LLM calls, "p2p" file handling, and data handling.

The `test_cmd` and `test_dynamic_cmd` parameters, each with its own timeout, suggest that the system runs tests in two modes: a standard pytest run and a dynamic tracing run. Assuming the values are in seconds (the image gives no unit), `timeout_run` and `timeout_dynamic` are both 1200 (20 minutes), which may be a shared ceiling for full runs, while `timeout_one` (10) appears to bound a single test or operation. `timeout_collect` (300, or 5 minutes) bounds a collection step, which may refer to test collection or data collection.
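To make the role of such timeouts concrete, the sketch below runs a command under a time limit using the standard library. It is not the tool's actual implementation: the helper `run_with_timeout` is hypothetical, the unit of seconds is an assumption, and the command is a stand-in because the image does not show what `TEST_PYTEST_VERBOSE` expands to.

```python
from __future__ import annotations

import subprocess
import sys


def run_with_timeout(cmd: list[str], timeout_s: float) -> tuple[bool, str]:
    """Run cmd and return (finished_in_time, captured stdout)."""
    try:
        result = subprocess.run(cmd, capture_output=True, text=True, timeout=timeout_s)
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child process before raising.
        return False, ""
    return True, result.stdout


TIMEOUT_ONE = 10  # value of "timeout_one"; seconds is an assumption

# Stand-in command: a short Python one-liner instead of a real test command.
finished, output = run_with_timeout([sys.executable, "-c", "print('ok')"], TIMEOUT_ONE)
```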

The `llm_cache` and `llm_prompt_for_case` parameters show that an LLM is part of the workflow: its responses are apparently cached, and prompting can be enabled per case. `batchsize_top` and `max_depth_top` (both 5) suggest batched processing and a traversal or recursion limited to a fixed depth, although the image does not say what is being batched or traversed.

The `p2p_cache` and `min_p2p_files`/`max_p2p_files` parameters, combined with `max_code_line_lower_bound`/`max_code_line_upper_bound`, hint at a system that selects between 1 and 5 "p2p" files and constrains the amount of code involved. Given the `f2p` key, "p2p" most plausibly means pass-to-pass (tests that pass both before and after a change) rather than peer-to-peer, but the image does not define it. The code line bounds (3000 and 5000) could be used for filtering, chunking, or limiting the scope of code analysis.

Finally, `f2p_pass_rate_threshold` is 0.3 (30%). If "f2p" means fail-to-pass, as in SWE-bench-style benchmarks, this is probably a quality gate on the share of such tests that pass, though the direction of the comparison is not shown. The `black_links` entry contains the GitHub repository URL for `litgpt`; the name suggests a blocklist of links to exclude rather than a reference list, but the image does not confirm this.
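The two settings discussed above can be illustrated with a short sketch. Both readings are assumptions: the threshold is treated as a minimum pass rate (the comparison could equally run the other way), and `black_links` is treated as a list of URL prefixes to exclude. The function names are hypothetical.

```python
F2P_PASS_RATE_THRESHOLD = 0.3  # value of "f2p_pass_rate_threshold"
BLACK_LINKS = ["https://github.com/Lightning-AI/litgpt/"]  # value of "black_links"


def pass_rate(passed: int, total: int) -> float:
    """Fraction of tests that passed; 0.0 when there are no tests."""
    return passed / total if total else 0.0


def meets_threshold(passed: int, total: int, threshold: float = F2P_PASS_RATE_THRESHOLD) -> bool:
    # Assumption: the gate requires the pass rate to be at least the threshold.
    return pass_rate(passed, total) >= threshold


def is_blocked(url: str, black_links: list = BLACK_LINKS) -> bool:
    # Assumption: a URL is excluded when it starts with any listed prefix.
    return any(url.startswith(prefix) for prefix in black_links)
```

Under these assumptions, 3 passing tests out of 10 would meet the gate and 2 out of 10 would not, and any URL under the `litgpt` repository would be excluded.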

In short, the configuration describes a test-driven, LLM-assisted workflow over the `litgpt` codebase, with caching enabled at every stage and explicit timeouts on each long-running step.