## Configuration Snippet: LitGPT Test & Benchmark Parameters

### Overview

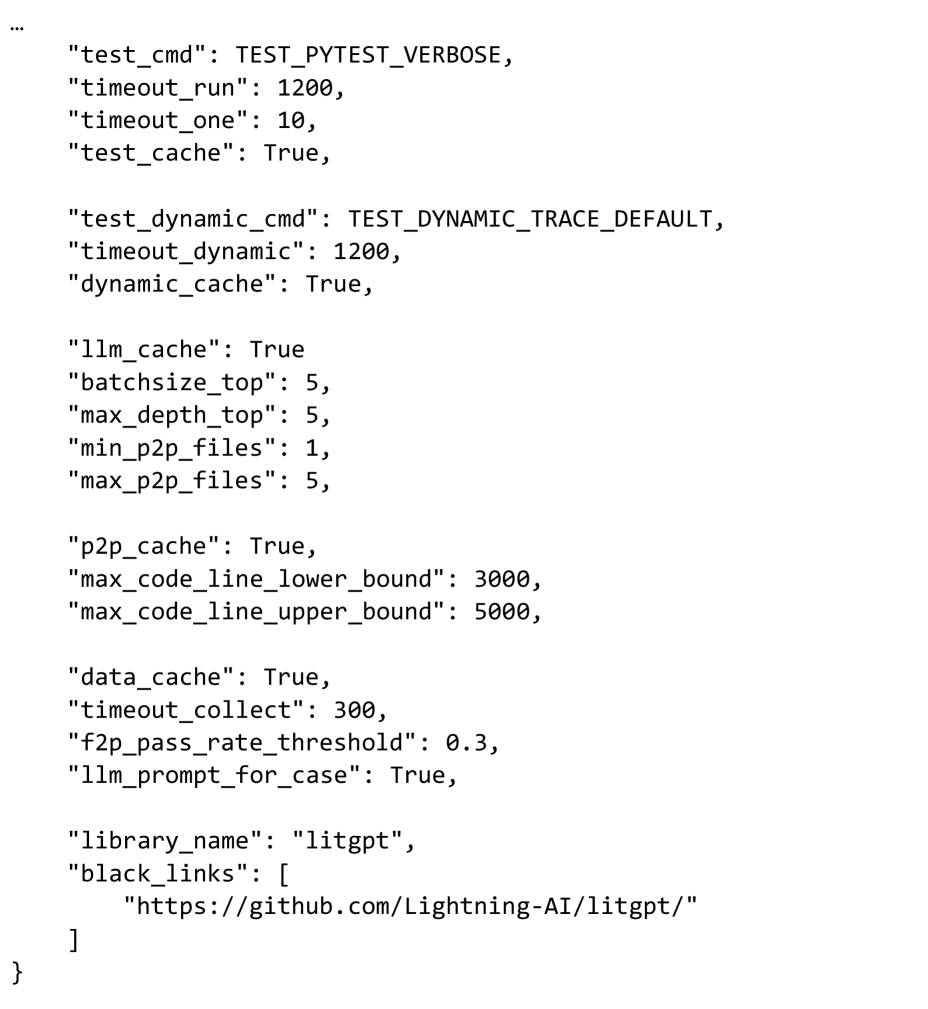

The image displays a fragment of a JSON configuration object, likely from a Python script or settings file. It contains parameters for controlling test execution, caching behavior, and resource limits for a project named "litgpt". The snippet begins with an ellipsis (`...`), indicating it is a continuation of a larger structure.

### Components/Axes

The content is structured as a series of JSON key-value pairs. The keys are strings, and the values are a mix of booleans (`True`), integers, and strings. The configuration is grouped thematically into several blocks.

### Detailed Analysis

The configuration parameters are transcribed below in the order they appear:

**Test Execution & Caching:**

* `"test_cmd": TEST_PYTEST_VERBOSE` - The command for running tests, set to a constant for verbose pytest output.

* `"timeout_run": 1200` - Timeout for a test run, likely in seconds (20 minutes).

* `"timeout_one": 10` - Timeout for a single test, likely in seconds.

* `"test_cache": True` - Enables caching for test results.

**Dynamic Tracing:**

* `"test_dynamic_cmd": TEST_DYNAMIC_TRACE_DEFAULT` - Command for dynamic tracing tests.

* `"timeout_dynamic": 1200` - Timeout for dynamic tracing, likely in seconds (20 minutes).

* `"dynamic_cache": True` - Enables caching for dynamic tracing results.

**LLM & Batch Processing:**

* `"llm_cache": True` - Enables caching for Large Language Model operations.

* `"batchsize_top": 5` - Batch size for top-level operations.

* `"max_depth_top": 5` - Maximum recursion or processing depth for top-level operations.

* `"min_p2p_files": 1` - Minimum number of files for peer-to-peer (P2P) operations.

* `"max_p2p_files": 5` - Maximum number of files for P2P operations.

**Peer-to-Peer (P2P) & Code Analysis:**

* `"p2p_cache": True` - Enables caching for P2P operations.

* `"max_code_line_lower_bound": 3000` - Lower bound for a maximum code line count threshold.

* `"max_code_line_upper_bound": 5000` - Upper bound for a maximum code line count threshold.

**Data Collection & Prompting:**

* `"data_cache": True` - Enables caching for collected data.

* `"timeout_collect": 300` - Timeout for data collection, likely in seconds (5 minutes).

* `"f2p_pass_rate_threshold": 0.3` - A pass rate threshold (30%) for a metric abbreviated as "f2p".

* `"llm_prompt_for_case": True` - A flag indicating whether to use LLM prompting for test cases.

**Library Identification:**

* `"library_name": "litgpt"` - The name of the software library this configuration pertains to.

* `"black_links": [ "https://github.com/Lightning-AI/litgpt/" ]` - A list containing a single URL, presumably a repository link to be excluded or treated specially ("black_links").

### Key Observations

1. **Caching Pervades the System:** Almost every major operation (test, dynamic trace, LLM, P2P, data) has an associated cache flag set to `True`, indicating a strong emphasis on performance optimization through result reuse.

2. **Generous Timeouts:** The primary run and dynamic tracing timeouts are set to 1200 seconds (20 minutes), suggesting the processes are expected to be computationally intensive or long-running.

3. **Constrained Resource Parameters:** Parameters like `batchsize_top`, `max_depth_top`, and `max_p2p_files` are set to small integers (5), indicating controlled resource usage or a focus on smaller-scale, manageable operations.

4. **Specific Thresholds:** The configuration defines precise numerical bounds for code lines (3000-5000) and a pass rate (0.3), which are likely critical for automated quality gates or filtering logic.

### Interpretation

This configuration snippet defines the operational envelope for a testing and benchmarking framework associated with the **LitGPT** library (from Lightning AI). The parameters suggest a system designed for:

* **Performance:** Heavy use of caching to speed up iterative testing and data collection.

* **Stability:** Long timeouts to accommodate potentially slow LLM operations or complex code analysis.

* **Control:** Tight limits on batch sizes, recursion depth, and file counts to prevent resource exhaustion.

* **Quality Control:** Explicit thresholds for code size and pass rates, which could be used to filter or prioritize test cases.

The presence of `llm_cache` and `llm_prompt_for_case` strongly indicates that the testing framework itself leverages or evaluates Large Language Models, possibly for generating test cases, analyzing code, or as the subject of the benchmarks. The "black_links" field pointing to the LitGPT GitHub repository might be used to exclude this specific link from certain link-checking or validation processes within the test suite. Overall, this is a configuration for a sophisticated, resource-aware, and performance-optimized automated testing pipeline for an LLM-related codebase.