## Diagram: System Architecture for LLM Interaction

### Overview

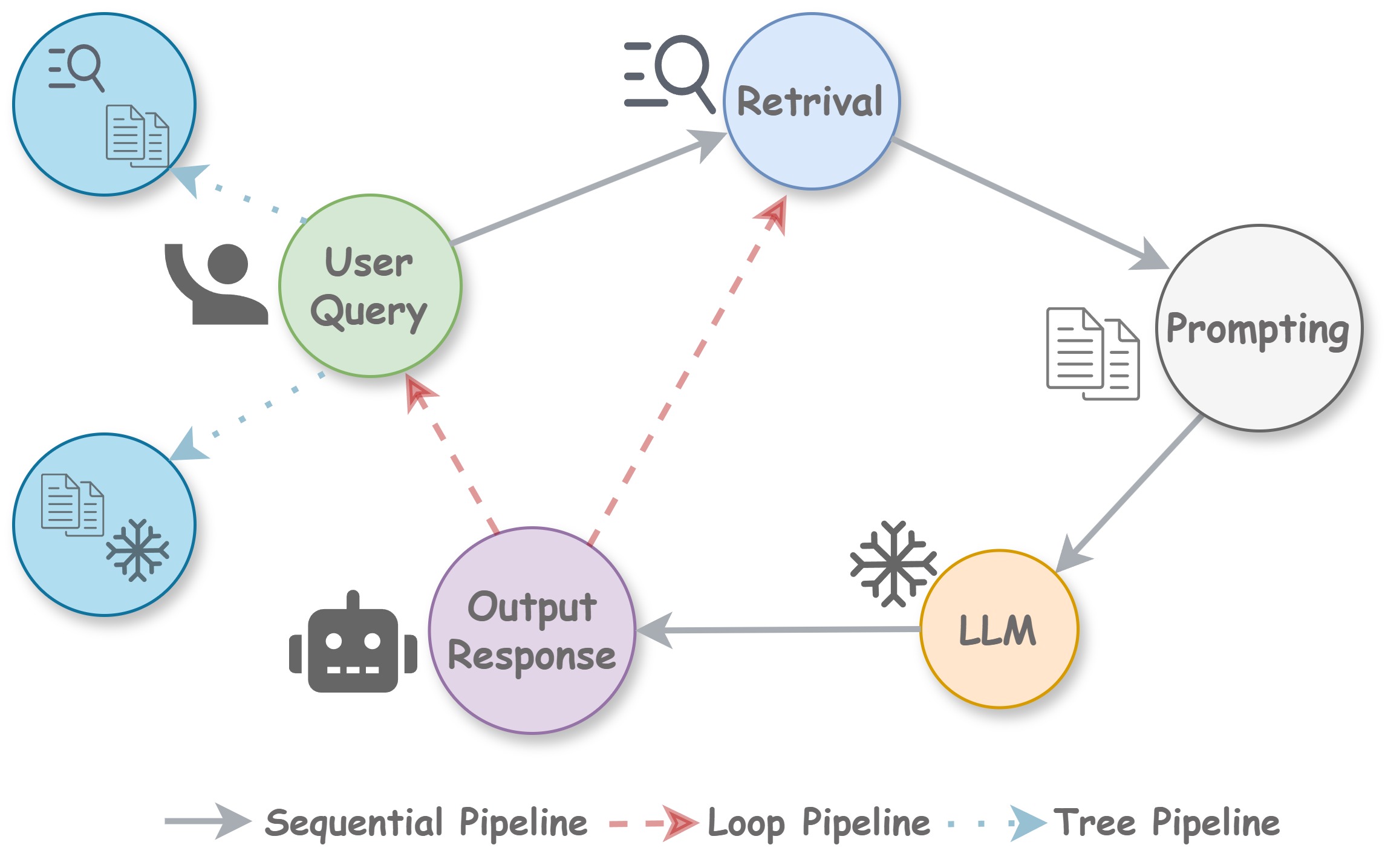

The diagram shows the architecture of a system in which a Large Language Model (LLM) answers a user. It traces the flow of information between five components: a user query, a retrieval mechanism, prompting, the LLM itself, and the final output response. Arrows connect the components, and the arrow style marks the type of pipeline: sequential, loop, or tree.

### Components

The diagram consists of the following components:

* **User Query:** Represented by a light-green oval in the top-left, containing an icon of a person speaking and a document with a search symbol.

* **Retrieval:** Represented by a light-blue oval at the top-center, containing a search icon and a document.

* **Prompting:** Represented by a light-yellow oval on the top-right, containing a document with lines.

* **LLM:** Represented by a yellow circle in the center-right, containing a snowflake icon.

* **Output Response:** Represented by a light-purple oval at the bottom-center, containing a robot icon.

The diagram also includes a legend at the bottom:

* **Sequential Pipeline:** Represented by a solid gray arrow.

* **Loop Pipeline:** Represented by a dashed red arrow.

* **Tree Pipeline:** Represented by a dotted gray arrow.

### Detailed Analysis

The diagram illustrates the following information flow:

1. **User Query to Retrieval:** A dotted gray arrow (Tree Pipeline) connects the "User Query" to the "Retrieval" component.

2. **User Query to Output Response:** A dotted gray arrow (Tree Pipeline) connects the "User Query" to the "Output Response" component.

3. **Retrieval to Prompting:** A solid gray arrow (Sequential Pipeline) connects the "Retrieval" component to the "Prompting" component.

4. **Prompting to LLM:** A solid gray arrow (Sequential Pipeline) connects the "Prompting" component to the "LLM" component.

5. **LLM to Output Response:** A solid gray arrow (Sequential Pipeline) connects the "LLM" component to the "Output Response" component.

6. **Output Response to User Query:** A dashed red arrow (Loop Pipeline) connects the "Output Response" component back to the "User Query" component.

7. **LLM to Retrieval:** A dashed red arrow (Loop Pipeline) connects the "LLM" component to the "Retrieval" component.
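The seven connections listed above can be written down as a small edge list, which makes it easy to query the diagram by pipeline type. This is only a sketch of one possible encoding: the names `Pipeline`, `EDGES`, `edges_of`, and `follow` are illustrative and do not come from the diagram.

```python
from enum import Enum


class Pipeline(Enum):
    """Arrow styles from the legend (values are the described line styles)."""
    SEQUENTIAL = "solid gray"
    LOOP = "dashed red"
    TREE = "dotted gray"


# (source, target, pipeline type), in the order the connections are listed.
EDGES = [
    ("User Query", "Retrieval", Pipeline.TREE),
    ("User Query", "Output Response", Pipeline.TREE),
    ("Retrieval", "Prompting", Pipeline.SEQUENTIAL),
    ("Prompting", "LLM", Pipeline.SEQUENTIAL),
    ("LLM", "Output Response", Pipeline.SEQUENTIAL),
    ("Output Response", "User Query", Pipeline.LOOP),
    ("LLM", "Retrieval", Pipeline.LOOP),
]


def edges_of(kind):
    """Return the (source, target) pairs drawn with one pipeline type."""
    return [(src, dst) for src, dst, k in EDGES if k is kind]


def follow(start, kind):
    """Walk edges of one type from `start` until no unvisited successor is left.

    Assumes each node has at most one outgoing edge of that type, which holds
    for the sequential edges but not for the tree edges.
    """
    successor = dict(edges_of(kind))
    path = [start]
    while path[-1] in successor and successor[path[-1]] not in path:
        path.append(successor[path[-1]])
    return path


print(follow("Retrieval", Pipeline.SEQUENTIAL))
```

Following the sequential edges from "Retrieval" visits "Prompting", "LLM", and "Output Response" in that order, which matches connections 3 through 5.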

### Key Observations

The LLM sits at the center of the processing flow. The sequential pipeline is the standard path: retrieval, then prompting, then the LLM, then the output response. The loop and tree pipelines add two further behaviors: the system can refine its responses based on previous outputs (loop) and explore multiple paths to retrieve information (tree).
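To make the three pipeline types concrete, the sketch below expresses each one as a control-flow pattern over stub components. The diagram does not specify how any component is implemented, so `retrieve`, `build_prompt`, and `llm` are hypothetical placeholders, and the loop and tree semantics shown here (fixed-round feedback, one pass per branch) are assumptions about what the arrows might mean.

```python
def retrieve(query):
    """Hypothetical retriever: returns placeholder documents for a query."""
    return [f"doc about {query}"]


def build_prompt(query, docs):
    """Hypothetical prompt builder: combines retrieved context with the query."""
    context = "; ".join(docs)
    return f"Context: {context}\nQuestion: {query}"


def llm(prompt):
    """Hypothetical model call: echoes the question line of the prompt."""
    return f"answer to [{prompt.splitlines()[-1]}]"


def sequential(query):
    """One pass: Retrieval -> Prompting -> LLM -> Output Response."""
    return llm(build_prompt(query, retrieve(query)))


def loop(query, rounds=2):
    """Feed each LLM output back into retrieval for a fixed number of rounds."""
    docs, answer = retrieve(query), ""
    for _ in range(rounds):
        answer = llm(build_prompt(query, docs))
        docs = docs + retrieve(answer)  # assumed LLM -> Retrieval feedback
    return answer, docs


def tree(query, branches):
    """Run one sequential pass per branch and collect the results by branch."""
    return {b: sequential(f"{query} ({b})") for b in branches}


print(sequential("rag"))
print(tree("rag", ["definition", "examples"]))
```

A real system would replace the fixed `rounds` with a stopping criterion and would need some way to merge the tree branches into a single response; neither detail is shown in the diagram.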

### Interpretation

The diagram describes an LLM system designed to be iterative and adaptive. The sequential pipeline (Retrieval, Prompting, LLM, Output Response) is the core processing flow. The loop pipelines allow the system to refine its responses: the arrow from Output Response back to User Query is consistent with refinement driven by user feedback, and the arrow from LLM back to Retrieval is consistent with refinement driven by internal evaluation. The tree pipelines let the system explore multiple sources of information to produce a more comprehensive and accurate response.

This architecture is likely used in applications such as question answering, chatbots, or content generation, where refining responses and drawing on diverse information sources are crucial. The Retrieval component suggests that the LLM does not rely solely on its internal knowledge but augments it with external data. The diagram contains no quantitative data; it shows only the functional relationships between the components.