## Diagram Type: Flowchart

### Overview

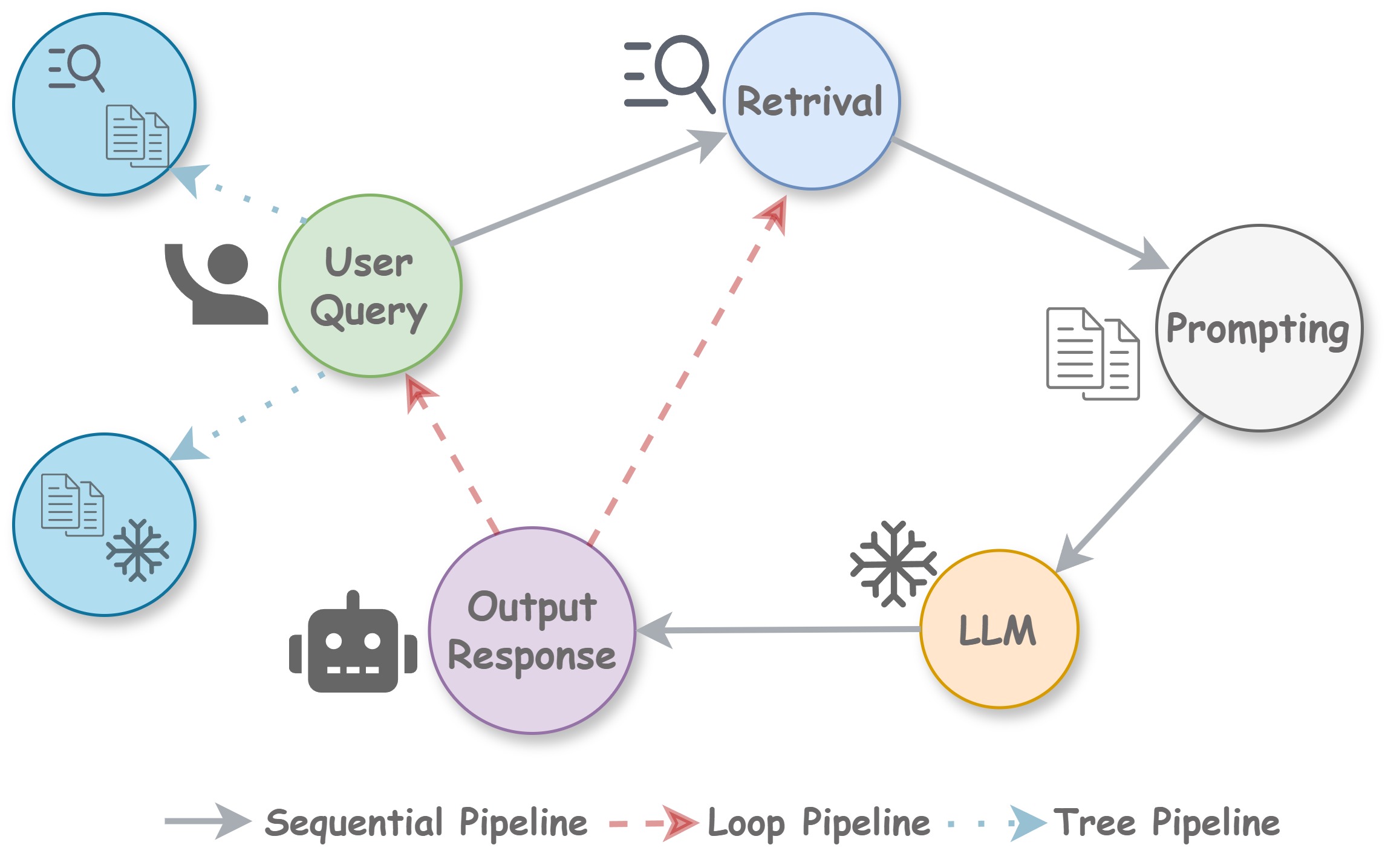

The diagram shows how a large language model (LLM) answers a user query with the help of a retrieval step. It presents three pipeline types: Sequential, Loop, and Tree.

### Components

- **User Query**: Represented by a magnifying glass icon, indicating the user's input.

- **Retrieval**: A central node with a magnifying glass icon, representing the retrieval of relevant information.

- **Prompting**: A node with a paper icon, representing the user's prompt to the LLM.

- **LLM**: A node with a snowflake icon, representing the language model.

- **Output Response**: A node with a robot icon, representing the LLM's response.

- **Sequential Pipeline**: A dashed line connecting User Query to Retrieval, Retrieval to Prompting, Prompting to LLM, and LLM to Output Response.

- **Loop Pipeline**: A dashed line connecting User Query to Retrieval, Retrieval to Prompting, Prompting to LLM, LLM to Output Response, and Output Response back to Retrieval.

- **Tree Pipeline**: A dashed line connecting User Query to Retrieval, Retrieval to Prompting, Prompting to LLM, LLM to Output Response, and Output Response back to Retrieval, with additional branches for Loop and Tree pipelines.

### Detailed Analysis

- The **User Query** is the starting point of the process.

- The **Retrieval** node is where relevant information is retrieved based on the user's query.

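- The **Prompting** node is where the prompt for the LLM is assembled. Because it follows Retrieval, it presumably combines the user's query with the retrieved information.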

- The **LLM** processes the prompt and generates an **Output Response**.

- In the Loop and Tree pipelines, the **Output Response** is then sent back to the **Retrieval** node for further processing. In the Sequential pipeline, it is the final step.
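The steps above can be sketched as a single pass through the Sequential pipeline. This is a minimal illustration, not the system behind the diagram: `retrieve`, `build_prompt`, `stub_llm`, and `sequential_pipeline` are hypothetical names, the retriever is a toy word-overlap filter, and the LLM is a stub that only reports how much context it received.

```python
from typing import Callable, List


def retrieve(query: str, corpus: List[str]) -> List[str]:
    """Toy retriever (assumption): keep documents sharing at least one word with the query."""
    words = set(query.lower().split())
    return [doc for doc in corpus if words & set(doc.lower().split())]


def build_prompt(query: str, docs: List[str]) -> str:
    """Prompting step: combine the retrieved documents with the user's query."""
    context = "\n".join(f"- {doc}" for doc in docs)
    return f"Context:\n{context}\n\nQuestion: {query}"


def stub_llm(prompt: str) -> str:
    """Stand-in for a real model: reports how many context lines it was given."""
    n_docs = sum(1 for line in prompt.splitlines() if line.startswith("- "))
    return f"[answer based on {n_docs} document(s)]"


def sequential_pipeline(query: str, corpus: List[str], llm: Callable[[str], str]) -> str:
    """User Query -> Retrieval -> Prompting -> LLM -> Output Response, in one pass."""
    docs = retrieve(query, corpus)
    prompt = build_prompt(query, docs)
    return llm(prompt)


corpus = ["Paris is the capital of France", "Bananas are yellow"]
print(sequential_pipeline("capital of France", corpus, stub_llm))
```

Passing the model in as a callable keeps the sketch independent of any particular LLM API; a real client could be swapped in for `stub_llm` without changing the pipeline function.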

### Key Observations

- The **Loop Pipeline** and **Tree Pipeline** both involve a loop where the output response is sent back to the retrieval node, allowing for iterative processing.

- The **Sequential Pipeline** is a linear process in which each step runs once, in a fixed order, with no feedback from the output.
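The feedback edge that separates the Loop pipeline from the Sequential one can be illustrated with a short sketch. Everything here is an assumption made for illustration: the function names are hypothetical, the retriever is a toy word-overlap filter, the stub model simply repeats its context, and the stopping rule (stop when retrieval returns nothing new, or after `max_rounds`) is one plausible choice rather than something the diagram specifies.

```python
from typing import Callable, List


def retrieve(query: str, corpus: List[str]) -> List[str]:
    """Toy retriever (assumption): keep documents sharing at least one word with the query."""
    words = set(query.lower().split())
    return [doc for doc in corpus if words & set(doc.lower().split())]


def build_prompt(query: str, docs: List[str]) -> str:
    """Prompting step: combine the retrieved documents with the user's query."""
    context = "\n".join(f"- {doc}" for doc in docs)
    return f"Context:\n{context}\n\nQuestion: {query}"


def stub_llm(prompt: str) -> str:
    """Stand-in for a real model: echoes the context lines it was given."""
    return " ".join(line[2:] for line in prompt.splitlines() if line.startswith("- "))


def loop_pipeline(query: str, corpus: List[str], llm: Callable[[str], str],
                  max_rounds: int = 3) -> str:
    """Like the sequential pass, but each response is fed back into retrieval."""
    response = ""
    previous_docs: List[str] = []
    for _ in range(max_rounds):
        # Output Response -> Retrieval: the last response widens the next search.
        docs = retrieve(f"{query} {response}", corpus)
        if docs == previous_docs:
            break  # retrieval found nothing new, so another round would not help
        previous_docs = docs
        response = llm(build_prompt(query, docs))
    return response


corpus = ["alice works at acme", "acme is based in berlin", "bananas are yellow"]
print(loop_pipeline("where alice", corpus, stub_llm, max_rounds=1))
print(loop_pipeline("where alice", corpus, stub_llm, max_rounds=3))
```

With `max_rounds=1` the function behaves like the Sequential pipeline and only finds the first document. With more rounds, the first response mentions "acme", which lets the second retrieval pull in the second document.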

### Interpretation

The diagram presents three ways to organize the same retrieval-and-generation steps. In every pipeline, relevant information is retrieved for the user's query, a prompt is passed to the LLM, and the LLM generates an output response. The pipelines differ in what happens next: the Sequential pipeline stops after a single pass, while the Loop and Tree pipelines send the output response back to the retrieval node, which allows iterative processing. The Tree pipeline additionally introduces branches.