## Flowchart: Information Retrieval and Response Generation System

### Overview

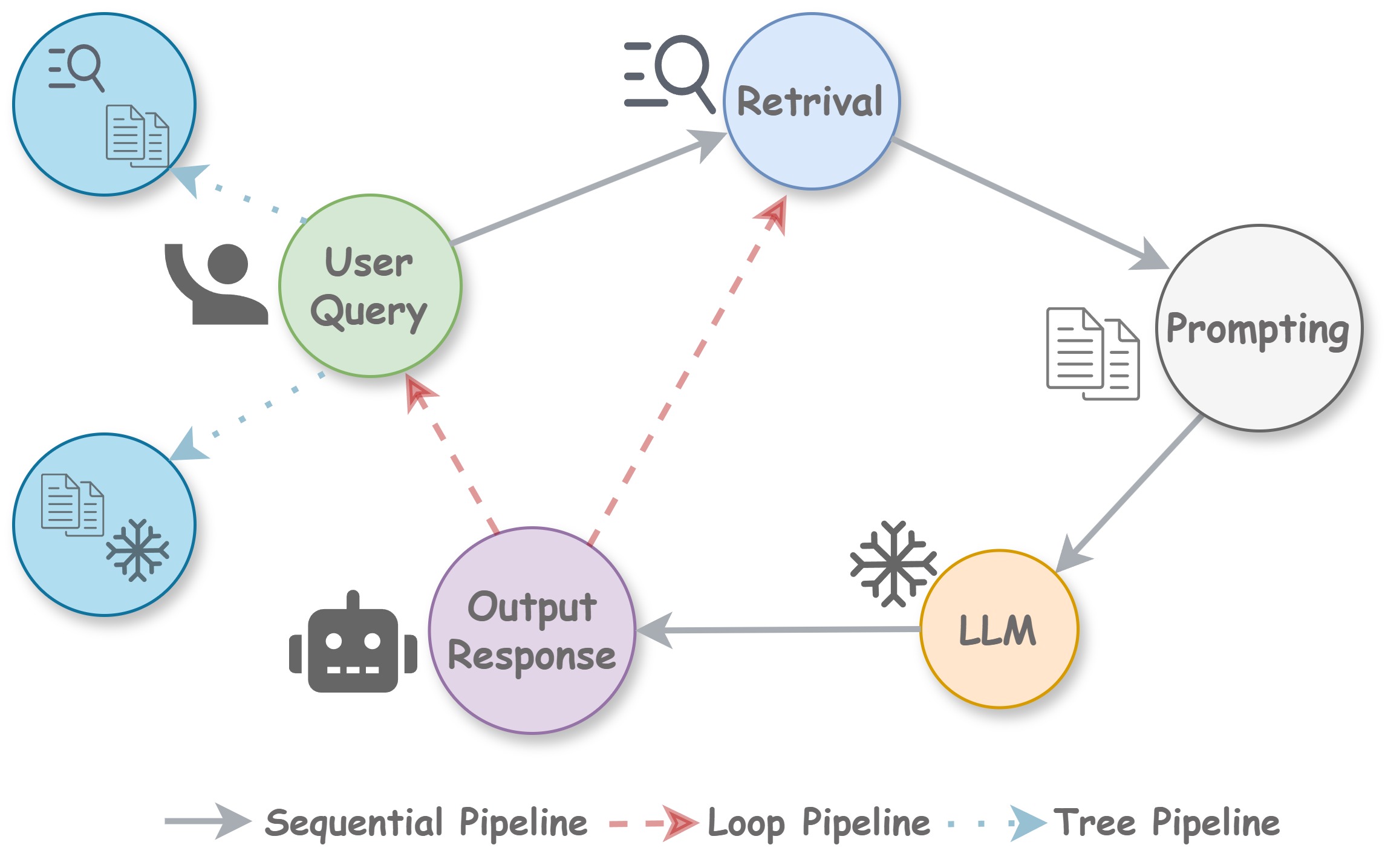

The diagram illustrates a multi-stage process for handling user queries through information retrieval, prompting, and large language model (LLM) interaction. It distinguishes three pipeline types (sequential, loop, and tree), each drawn with a different arrow style.

### Components/Axes

**Nodes (Process Stages):**

1. **User Query** (Green circle with person icon) - Top-left

2. **Retrieval** (Blue circle with search icon) - Center-top

3. **Prompting** (Gray circle with document icon) - Right-center

4. **LLM** (Yellow circle with robot icon) - Bottom-right

5. **Output Response** (Purple circle with robot icon) - Bottom-center

**Arrows (Pipeline Types):**

- **Sequential Pipeline** (Solid gray arrows)

- **Loop Pipeline** (Dashed red arrows)

- **Tree Pipeline** (Dotted blue arrows)

**Legend Position:** Bottom of diagram, clearly labeling arrow types

### Detailed Analysis

1. **User Query** (Green) initiates the process, connected via:

- Sequential pipeline (solid gray) to **Retrieval**

- Loop pipeline (dashed red) back to itself (self-loop)

2. **Retrieval** (Blue) connects to:

- Sequential pipeline to **Prompting**

- Loop pipeline back to **User Query**

3. **Prompting** (Gray) connects to:

- Tree pipeline (dotted blue) to **LLM**

- Sequential pipeline to **Output Response**

4. **LLM** (Yellow) connects via:

- Sequential pipeline to **Output Response**

5. **Output Response** (Purple) has no outgoing connections
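The connections listed above can be written down as a typed edge list, which makes the reading of the diagram easy to check mechanically. This is a minimal sketch; the names `EDGES`, `NODES`, `outgoing`, and `edges_of_type` are illustrative and do not come from the figure.

```python
from collections import defaultdict

# Edges as read from the diagram: (source, target, pipeline_type).
EDGES = [
    ("User Query", "Retrieval", "sequential"),
    ("User Query", "User Query", "loop"),  # self-loop
    ("Retrieval", "Prompting", "sequential"),
    ("Retrieval", "User Query", "loop"),
    ("Prompting", "LLM", "tree"),
    ("Prompting", "Output Response", "sequential"),
    ("LLM", "Output Response", "sequential"),
]

NODES = ["User Query", "Retrieval", "Prompting", "LLM", "Output Response"]


def outgoing(edges):
    """Map each node to its list of (target, pipeline_type) pairs."""
    adj = defaultdict(list)
    for src, dst, kind in edges:
        adj[src].append((dst, kind))
    return adj


def edges_of_type(edges, kind):
    """Return the (source, target) pairs drawn with the given arrow style."""
    return [(src, dst) for src, dst, k in edges if k == kind]


adj = outgoing(EDGES)
sinks = [n for n in NODES if not adj[n]]
print(sinks)                         # ['Output Response']
print(edges_of_type(EDGES, "tree"))  # [('Prompting', 'LLM')]
```

Listing the sinks confirms that Output Response is the only node without outgoing arrows, and filtering by type shows that Prompting → LLM is the single tree-pipeline edge.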

### Key Observations

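- The system features three distinct pipeline architectures:
  - **Sequential Flow**: Main path from User Query → Retrieval → Prompting → LLM → Output Response; the Prompting → LLM hop is drawn as a tree-pipeline (dotted blue) arrow, and Prompting also has a direct sequential arrow to Output Response
  - **Loop Mechanism**: Dashed red arrows from Retrieval back to User Query, plus a self-loop on User Query, allow iterative refinement of the query
  - **Branching Path**: The tree pipeline from Prompting to LLM marks a branch point, suggesting possible parallel processing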

- No numerical data points are present; the diagram focuses on process flow rather than quantitative metrics
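The three arrow styles correspond to three common ways of composing processing stages. The sketch below expresses each as a small higher-order function; the names `sequential`, `loop`, and `tree`, and the stopping rule in `loop`, are assumptions made for illustration, since the diagram does not define how each pipeline terminates or merges.

```python
from typing import Any, Callable, List

Stage = Callable[[Any], Any]


def sequential(*stages: Stage) -> Stage:
    """Sequential pipeline: feed each stage's output into the next stage."""
    def run(x: Any) -> Any:
        for stage in stages:
            x = stage(x)
        return x
    return run


def loop(stage: Stage, done: Callable[[Any], bool], max_iters: int = 3) -> Stage:
    """Loop pipeline: re-apply `stage` until `done(x)` holds or the budget runs out."""
    def run(x: Any) -> Any:
        for _ in range(max_iters):
            if done(x):
                break
            x = stage(x)
        return x
    return run


def tree(*branches: Stage) -> Callable[[Any], List[Any]]:
    """Tree pipeline: fan one input out to every branch and collect all outputs."""
    def run(x: Any) -> List[Any]:
        return [branch(x) for branch in branches]
    return run


# Toy usage: refine a string in a loop, then branch it two ways.
pipeline = sequential(
    loop(lambda q: q + "!", done=lambda q: q.endswith("!!")),
    tree(str.upper, str.lower),
)
print(pipeline("hi"))  # ['HI!!', 'hi!!']
```

Bounding the loop with `max_iters` is a design choice: a feedback path like the User Query ↔ Retrieval loop needs some termination guarantee even when the `done` condition is never met.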

### Interpretation

This flowchart represents a hybrid information processing system combining:

1. **Iterative Refinement**: The loop pipeline between User Query and Retrieval suggests a feedback mechanism for query optimization

2. **Parallel Processing**: The tree pipeline from Prompting to LLM indicates potential for concurrent model interactions

3. **Linear Execution**: The sequential pipeline forms the primary execution path through the system
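A toy end-to-end flow can make this interpretation concrete: a bounded loop refines the query until retrieval returns enough documents, a sequential hop builds the prompt, and a tree fan-out issues several LLM calls concurrently. Everything here is hypothetical: `CORPUS`, `retrieve`, `refine_query`, `build_prompt`, `call_llm`, and `answer` are stand-ins, not components named in the figure, and the sketch does not model the direct Prompting → Output Response arrow.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical toy corpus standing in for a real document index.
CORPUS = {
    "rag": "Retrieval-augmented generation grounds answers in retrieved text.",
    "llm": "A large language model generates text from a prompt.",
}


def retrieve(query):
    """Retrieval stub: return documents whose key word appears in the query."""
    q = query.lower()
    return [doc for key, doc in CORPUS.items() if key in q]


def refine_query(query):
    """Hypothetical refinement step: broaden the query with an extra keyword."""
    return query + " llm"


def build_prompt(query, docs):
    """Prompting stage: place the retrieved context ahead of the question."""
    context = "\n".join(docs)
    return f"Context:\n{context}\n\nQuestion: {query}"


def call_llm(prompt, style):
    """LLM stub: a real system would send `prompt` to a model here."""
    return f"{style}: {prompt.splitlines()[-1]}"


def answer(query, min_docs=2, max_rounds=3, styles=("concise", "detailed")):
    # Loop pipeline: refine the query until retrieval returns enough documents
    # or the round budget is exhausted.
    docs = retrieve(query)
    rounds = 0
    while len(docs) < min_docs and rounds < max_rounds:
        query = refine_query(query)
        docs = retrieve(query)
        rounds += 1

    # Sequential hop: Retrieval -> Prompting.
    prompt = build_prompt(query, docs)

    # Tree pipeline: fan the prompt out to several LLM calls that run concurrently.
    with ThreadPoolExecutor(max_workers=max(1, len(styles))) as pool:
        candidates = list(pool.map(lambda style: call_llm(prompt, style), styles))

    # Output Response: return every candidate; the figure does not show how
    # branch outputs are merged.
    return candidates


print(answer("What is RAG?"))
# ['concise: Question: What is RAG? llm', 'detailed: Question: What is RAG? llm']
```

`ThreadPoolExecutor.map` returns results in input order, so the candidates line up with `styles` even though the calls may finish in any order.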

The architecture implies a balance between:

- **Efficiency** (through sequential processing)

- **Adaptability** (via loop-based query refinement)

- **Complexity Handling** (through tree-based model interactions)

The absence of quantitative metrics suggests this is a conceptual workflow rather than a performance benchmark. The design appears to prioritize flexibility in handling different query types and processing requirements.