## Bar Chart Grid: Attack Success Rates of Various Defense Methods Against Different Adversarial Attacks

### Overview

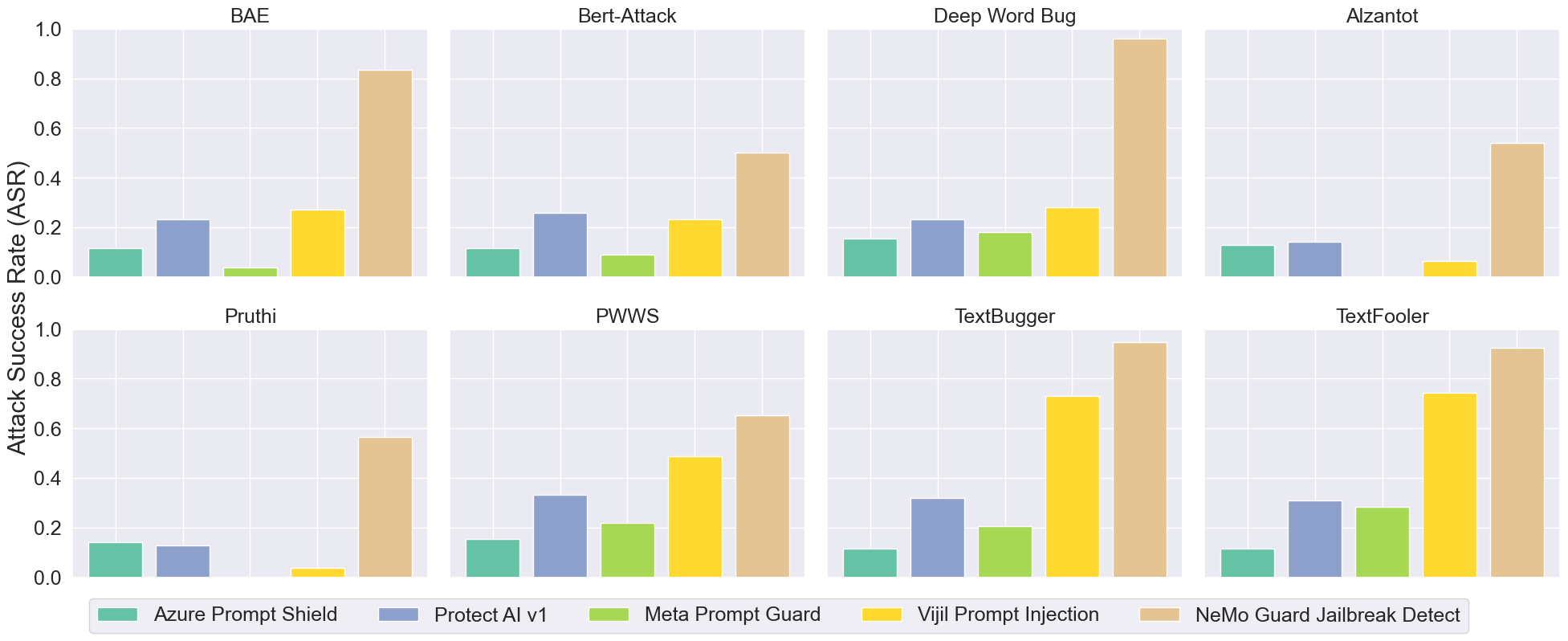

The image displays a 2x4 grid of bar charts, each comparing the performance of five different defense mechanisms against a specific adversarial text attack method. The primary metric is the Attack Success Rate (ASR), where a higher bar indicates the attack was more successful (i.e., the defense was less effective). The overall purpose is to benchmark and compare the robustness of these AI safety tools.
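As a minimal sketch of how a metric like this is usually computed, the snippet below treats ASR as the fraction of adversarial inputs that a detector fails to flag. That definition, the function name `attack_success_rate`, and the sample outcomes are assumptions for illustration; the figure itself does not state how its ASR was calculated.

```python
from typing import Sequence


def attack_success_rate(flagged: Sequence[bool]) -> float:
    """Fraction of adversarial inputs the detector did NOT flag.

    Assumed definition: an attack "succeeds" when the detector misses it.
    """
    if not flagged:
        raise ValueError("need at least one adversarial sample")
    evaded = sum(1 for was_flagged in flagged if not was_flagged)
    return evaded / len(flagged)


# Hypothetical detector outputs for five adversarial prompts (True = flagged).
outcomes = [True, False, True, True, False]
asr = attack_success_rate(outcomes)
print(f"ASR = {asr:.2f}")  # 2 of 5 prompts evaded detection
```

Under this reading, a bar near 1.0 means almost every adversarial input slipped past the defense.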

### Components/Axes

* **Y-Axis (Common to all subplots):** Labeled "Attack Success Rate (ASR)". The scale runs from 0.0 to 1.0, with major gridlines at intervals of 0.2.

* **X-Axis (Implicit):** Each subplot represents a different attack method. The five bars within each subplot correspond to the five defense methods, ordered consistently.

* **Subplot Titles (Attack Methods):** The eight attack methods are, from top-left to bottom-right: BAE, Bert-Attack, Deep Word Bug, Alzantot, Pruthi, PWWS, TextBugger, TextFooler.

* **Legend (Defense Methods):** Located at the bottom of the entire figure. It maps colors to defense methods:

    * **Teal/Green:** Azure Prompt Shield

    * **Blue-Grey:** Protect AI v1

    * **Light Green:** Meta Prompt Guard

    * **Yellow:** Vijil Prompt Injection

    * **Tan/Beige:** NeMo Guard Jailbreak Detect

### Detailed Analysis

Below are the approximate ASR values for each defense method within each attack subplot. Values are estimated from the bar heights relative to the y-axis gridlines.

**1. BAE Attack:**

* Azure Prompt Shield: ~0.12

* Protect AI v1: ~0.23

* Meta Prompt Guard: ~0.03 (very low)

* Vijil Prompt Injection: ~0.27

* NeMo Guard Jailbreak Detect: ~0.85 (highest)

**2. Bert-Attack:**

* Azure Prompt Shield: ~0.12

* Protect AI v1: ~0.26

* Meta Prompt Guard: ~0.09

* Vijil Prompt Injection: ~0.23

* NeMo Guard Jailbreak Detect: ~0.50

**3. Deep Word Bug:**

* Azure Prompt Shield: ~0.15

* Protect AI v1: ~0.23

* Meta Prompt Guard: ~0.18

* Vijil Prompt Injection: ~0.28

* NeMo Guard Jailbreak Detect: ~0.97 (near maximum)

**4. Alzantot:**

* Azure Prompt Shield: ~0.13

* Protect AI v1: ~0.14

* Meta Prompt Guard: ~0.00 (no visible bar, likely 0)

* Vijil Prompt Injection: ~0.06

* NeMo Guard Jailbreak Detect: ~0.54

**5. Pruthi:**

* Azure Prompt Shield: ~0.14

* Protect AI v1: ~0.13

* Meta Prompt Guard: ~0.00 (no visible bar, likely 0)

* Vijil Prompt Injection: ~0.04

* NeMo Guard Jailbreak Detect: ~0.57

**6. PWWS:**

* Azure Prompt Shield: ~0.16

* Protect AI v1: ~0.33

* Meta Prompt Guard: ~0.22

* Vijil Prompt Injection: ~0.49

* NeMo Guard Jailbreak Detect: ~0.65

**7. TextBugger:**

* Azure Prompt Shield: ~0.11

* Protect AI v1: ~0.32

* Meta Prompt Guard: ~0.21

* Vijil Prompt Injection: ~0.73

* NeMo Guard Jailbreak Detect: ~0.95

**8. TextFooler:**

* Azure Prompt Shield: ~0.11

* Protect AI v1: ~0.31

* Meta Prompt Guard: ~0.29

* Vijil Prompt Injection: ~0.74

* NeMo Guard Jailbreak Detect: ~0.93
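The estimates above can be collected into one structure to make per-defense comparisons easier. The sketch below is only a convenience for reading the numbers: the values are the approximate bar heights listed above (not exact data), and the names `ATTACKS`, `ASR`, and `summarize` are made up for this example.

```python
from statistics import mean

ATTACKS = ["BAE", "Bert-Attack", "Deep Word Bug", "Alzantot",
           "Pruthi", "PWWS", "TextBugger", "TextFooler"]

# Approximate ASR per defense, in the same order as ATTACKS (read off the bars).
ASR = {
    "Azure Prompt Shield":         [0.12, 0.12, 0.15, 0.13, 0.14, 0.16, 0.11, 0.11],
    "Protect AI v1":               [0.23, 0.26, 0.23, 0.14, 0.13, 0.33, 0.32, 0.31],
    "Meta Prompt Guard":           [0.03, 0.09, 0.18, 0.00, 0.00, 0.22, 0.21, 0.29],
    "Vijil Prompt Injection":      [0.27, 0.23, 0.28, 0.06, 0.04, 0.49, 0.73, 0.74],
    "NeMo Guard Jailbreak Detect": [0.85, 0.50, 0.97, 0.54, 0.57, 0.65, 0.95, 0.93],
}


def summarize(values):
    """Unweighted mean, minimum, and maximum ASR across the eight attacks."""
    return {"mean": mean(values), "min": min(values), "max": max(values)}


summary = {name: summarize(values) for name, values in ASR.items()}
for name, stats in summary.items():
    print(f"{name:28s} mean={stats['mean']:.3f} "
          f"min={stats['min']:.2f} max={stats['max']:.2f}")
```

Because every input is an eyeballed estimate, the resulting means and ranges carry the same uncertainty and should be read as rough indicators only.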

### Key Observations

1. **Consistent Underperformer:** The **NeMo Guard Jailbreak Detect** (tan bar) shows the highest Attack Success Rate in all eight subplots, ranging from ~0.50 (Bert-Attack) to ~0.97 (Deep Word Bug). Its ASR is at or above ~0.85 against BAE, Deep Word Bug, TextBugger, and TextFooler.

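2. **Consistent Performers:** **Azure Prompt Shield** (teal) and **Protect AI v1** (blue-grey) maintain lower ASRs, staying at or below ~0.33 in every subplot. Azure is the steadier of the two (~0.11 to ~0.16) and has the lowest ASR against Deep Word Bug, PWWS, TextBugger, and TextFooler; Protect AI v1 ranges from ~0.13 to ~0.33.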

3. **Variable Performance:**

    * **Meta Prompt Guard** (light green) shows extreme variability. It is highly effective (ASR ~0.00-0.03) against BAE, Alzantot, and Pruthi, but less so against others like TextFooler (~0.29).

    * **Vijil Prompt Injection** (yellow) also varies significantly. It performs relatively well against Alzantot and Pruthi but is highly vulnerable to TextBugger and TextFooler (ASR >0.70).

4. **Attack Potency:** The **TextBugger** and **TextFooler** attacks appear to be the most potent overall, exceeding 0.70 against both NeMo Guard and Vijil, although Azure holds near ~0.11 against both. The **Pruthi** and **Alzantot** attacks are the least effective against this set of defenses, staying at or below ~0.14 against every defense except NeMo Guard.
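The observations above can be checked against the estimated values with a few lines of code. This is a sketch under two assumptions: the numbers are the approximate bar heights from the Detailed Analysis, and "potency" is taken as the unweighted mean ASR across the five defenses, which is one reasonable choice among several. The names `DEFENSES`, `BY_ATTACK`, `potency`, and `weakest` are illustrative.

```python
DEFENSES = ["Azure Prompt Shield", "Protect AI v1", "Meta Prompt Guard",
            "Vijil Prompt Injection", "NeMo Guard Jailbreak Detect"]

# Approximate ASR per attack, in the same order as DEFENSES.
BY_ATTACK = {
    "BAE":           [0.12, 0.23, 0.03, 0.27, 0.85],
    "Bert-Attack":   [0.12, 0.26, 0.09, 0.23, 0.50],
    "Deep Word Bug": [0.15, 0.23, 0.18, 0.28, 0.97],
    "Alzantot":      [0.13, 0.14, 0.00, 0.06, 0.54],
    "Pruthi":        [0.14, 0.13, 0.00, 0.04, 0.57],
    "PWWS":          [0.16, 0.33, 0.22, 0.49, 0.65],
    "TextBugger":    [0.11, 0.32, 0.21, 0.73, 0.95],
    "TextFooler":    [0.11, 0.31, 0.29, 0.74, 0.93],
}


def mean_asr(attack):
    """Unweighted mean ASR of one attack across all defenses."""
    values = BY_ATTACK[attack]
    return sum(values) / len(values)


# Attacks ordered from most to least potent under the mean-ASR assumption.
potency = sorted(BY_ATTACK, key=mean_asr, reverse=True)

# Defense with the highest ASR (i.e. the weakest defense) for each attack.
weakest = {attack: DEFENSES[values.index(max(values))]
           for attack, values in BY_ATTACK.items()}

for attack in potency:
    print(f"{attack:14s} mean ASR={mean_asr(attack):.3f} weakest={weakest[attack]}")
```

With these estimates, TextFooler and TextBugger rank first and second, Pruthi and Alzantot rank last, and NeMo Guard is the weakest defense for every attack.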

### Interpretation

This chart provides a comparative security analysis of AI prompt defense systems. The data suggests a significant disparity in effectiveness:

* **NeMo Guard Jailbreak Detect** appears to be the least robust defense among those tested against this suite of adversarial text attacks. Its high failure rate indicates it may be vulnerable to a wide range of jailbreaking or prompt injection techniques.

* **Azure Prompt Shield** and **Protect AI v1** demonstrate more consistent, though not perfect, resilience. Their relatively low and stable ASRs suggest they employ more generalized or robust detection mechanisms.

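* The performance of **Meta Prompt Guard** and **Vijil Prompt Injection** is highly attack-dependent. This could indicate sensitivity to particular perturbation styles rather than broad-spectrum coverage. Attack category alone does not explain the pattern, however: Meta Prompt Guard is near zero against the word-substitution attacks BAE and Alzantot, yet reaches ~0.22 to ~0.29 against PWWS and TextFooler, which are also word-substitution attacks.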

* From a security perspective, the high ASRs for **TextBugger** and **TextFooler** mark them as particularly dangerous attack methods that require strong, specialized defenses. The near-total failure of NeMo Guard against these attacks is a critical finding.

**Conclusion:** The chart supports the view that defense selection must be informed by the expected threat model. No single defense has the lowest ASR against every attack (Azure Prompt Shield leads on four attacks and Meta Prompt Guard on the other four), so a layered or adaptive approach may be necessary for robust protection. The stark contrast between NeMo Guard and the other defenses warrants further investigation into their underlying methodologies.
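To make the layering idea concrete, here is a rough sketch of what stacking two detectors could do to ASR. It assumes an OR-ensemble (a prompt is blocked if any detector flags it) and that detectors fail independently; the second assumption is strong and probably optimistic, since real detectors tend to miss similar inputs. The function names are hypothetical, and the inputs are the approximate TextFooler values from the chart.

```python
from math import prod

# Approximate TextFooler ASRs read from the chart.
textfooler_asr = {
    "Azure Prompt Shield": 0.11,
    "Meta Prompt Guard": 0.29,
    "Vijil Prompt Injection": 0.74,
}


def layered_asr_independent(asrs):
    """Estimated ASR of an OR-ensemble if detectors fail independently.

    An attack must evade every layer, so the individual rates multiply.
    """
    if not asrs:
        raise ValueError("need at least one detector")
    return prod(asrs)


def layered_asr_upper_bound(asrs):
    """Worst case: the ensemble is never worse than its best single layer."""
    if not asrs:
        raise ValueError("need at least one detector")
    return min(asrs)


pair = [textfooler_asr["Azure Prompt Shield"], textfooler_asr["Meta Prompt Guard"]]
print(f"independent estimate: {layered_asr_independent(pair):.3f}")
print(f"upper bound:          {layered_asr_upper_bound(pair):.3f}")
```

The true combined ASR would fall somewhere between the two figures depending on how correlated the detectors' misses are. An OR-ensemble also raises the false-positive rate on benign prompts, which this chart does not measure.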