# Technical Document Extraction: Roofline Model (Llama 13B, A6000)

## 1. Document Header

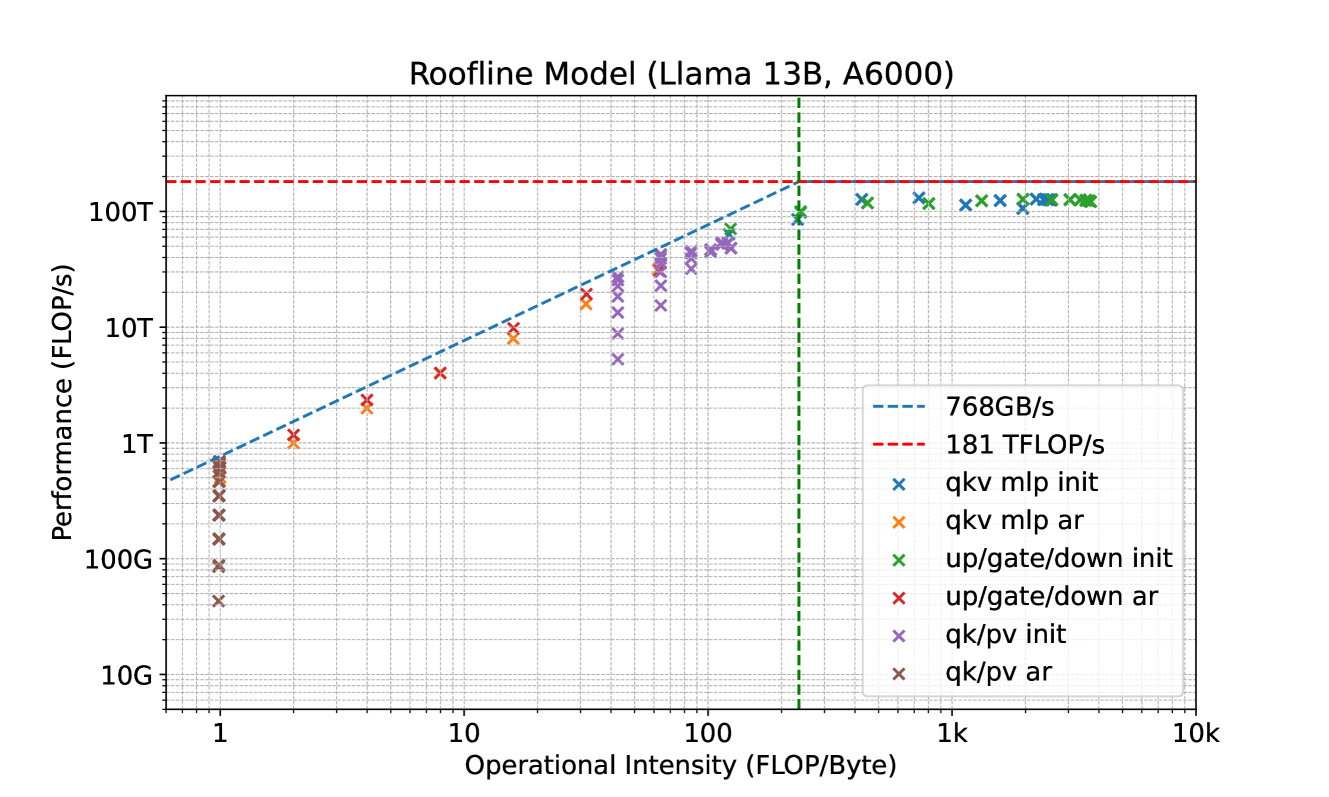

* **Title:** Roofline Model (Llama 13B, A6000)

* **Language:** English

## 2. Chart Specifications

This is a **Roofline Model** chart: a log-log plot that bounds the attainable performance of a specific workload (Llama 13B model) on a computing system (NVIDIA A6000 GPU) by the hardware's peak compute throughput and memory bandwidth.

### Axis Definitions

* **Y-Axis (Vertical):** Performance (FLOP/s)
    * **Scale:** Logarithmic (Base 10)
    * **Range:** 10G to ~300T
    * **Major Markers:** 10G, 100G, 1T, 10T, 100T

* **X-Axis (Horizontal):** Operational Intensity (FLOP/Byte)
    * **Scale:** Logarithmic (Base 10)
    * **Range:** ~0.6 to 10k
    * **Major Markers:** 1, 10, 1k, 10k

### Legend and Thresholds

The legend is located in the bottom-right quadrant of the plot area.

| Legend Item | Color/Style | Description | Value or Chart Region |
| :--- | :--- | :--- | :--- |
| **768GB/s** | Blue Dashed Line | Memory Bandwidth Limit (Slope) | 768 GB/s |
| **181 TFLOP/s** | Red Dashed Line | Peak Compute Performance (Ceiling) | 181 TFLOP/s |
| **qkv mlp init** | Blue 'x' | Data points for QKV/MLP initialization | High Intensity/High Perf |
| **qkv mlp ar** | Orange 'x' | Data points for QKV/MLP auto-regressive | Mid Intensity/Mid Perf |
| **up/gate/down init** | Green 'x' | Data points for Up/Gate/Down initialization | High Intensity/High Perf |
| **up/gate/down ar** | Red 'x' | Data points for Up/Gate/Down auto-regressive | Low Intensity/Low Perf |
| **qk/pv init** | Purple 'x' | Data points for QK/PV initialization | Mid Intensity/Mid Perf |
| **qk/pv ar** | Brown 'x' | Data points for QK/PV auto-regressive | Low Intensity/Low Perf |

---

## 3. Component Analysis

### The "Roofline" Structure

1. **Memory-Bound Region (The Slope):** Represented by the blue dashed diagonal line rising from the bottom left. It follows $\text{Performance} = \text{Bandwidth} \times \text{Intensity}$. Any data point on or near this line is limited by how fast data can be moved from memory (768 GB/s), not by compute.

2. **Compute-Bound Region (The Ceiling):** Represented by the horizontal red dashed line at the top. It marks the hardware's maximum theoretical throughput (181 TFLOP/s).

3. **Ridge Point:** The two lines intersect at an Operational Intensity of approximately **235 FLOP/Byte** (181 TFLOP/s ÷ 768 GB/s ≈ 235.7), indicated by a vertical green dashed line. Workloads to the left of this point are memory-bound; workloads to the right are compute-bound.
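The roofline structure described above can be sketched in a few lines of Python. This is a minimal illustration, not the code used to produce the chart: the function names `ridge_point` and `attainable_flops` are hypothetical, and the only inputs are the two limits read off the legend (768 GB/s and 181 TFLOP/s).

```python
PEAK_FLOPS = 181e12   # compute ceiling from the legend, in FLOP/s
BANDWIDTH = 768e9     # memory bandwidth from the legend, in Byte/s


def ridge_point(peak_flops: float = PEAK_FLOPS, bandwidth: float = BANDWIDTH) -> float:
    """Operational intensity (FLOP/Byte) at which the slope meets the ceiling."""
    return peak_flops / bandwidth


def attainable_flops(intensity: float,
                     peak_flops: float = PEAK_FLOPS,
                     bandwidth: float = BANDWIDTH) -> float:
    """Roofline bound: the lower of the bandwidth slope and the compute ceiling."""
    return min(peak_flops, bandwidth * intensity)


if __name__ == "__main__":
    print(f"ridge point: {ridge_point():.1f} FLOP/Byte")
    for oi in (1, 10, 100, 1000):
        bound = attainable_flops(oi)
        print(f"intensity {oi:>5} FLOP/Byte -> at most {bound / 1e12:.3f} TFLOP/s")
```

Taking the minimum of the two limits reproduces the shape of the chart: a rising diagonal on the left that flattens into the ceiling once the intensity passes the ridge point.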

### Data Series Trends and Distribution

* **Initialization (init) Phases:**
    * **Trend:** These points (Blue, Green, Purple 'x') sit to the right of their auto-regressive counterparts, at higher Operational Intensity.
    * **Observation:** Most "init" points for `qkv mlp` and `up/gate/down` lie on the horizontal "ceiling," meaning they are compute-bound and running close to the GPU's peak of 181 TFLOP/s. The `qk/pv` init points sit at mid intensity, below the ridge point, and so remain near the memory-bound slope.

* **Auto-regressive (ar) Phases:**
    * **Trend:** These points (Orange, Red, Brown 'x') cluster toward the left side of the graph (Low Operational Intensity).
    * **Observation:** These points follow the diagonal blue dashed line. This indicates that the auto-regressive decoding phase of the Llama 13B model is memory-bandwidth bound, operating far below the peak TFLOP/s of the A6000.
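One way to see why the two phases land so far apart is to estimate the operational intensity of a single linear layer as a function of how many tokens it processes at once. The sketch below is a back-of-the-envelope model, not the method used to measure the chart's data points. It assumes 16-bit weights and activations (2 bytes per element), assumes each operand crosses the memory bus exactly once, and uses a 5120 × 13824 weight shape, which matches the published Llama 13B hidden and feed-forward sizes but is not stated in the chart. The helper name `linear_intensity` is hypothetical.

```python
def linear_intensity(tokens: int, d_in: int, d_out: int,
                     bytes_per_elem: int = 2) -> float:
    """Estimated FLOP/Byte of a (tokens x d_in) @ (d_in x d_out) matmul.

    Assumption: weights, inputs, and outputs are each moved once,
    and every element occupies `bytes_per_elem` bytes.
    """
    flops = 2 * tokens * d_in * d_out                  # one multiply + one add per MAC
    elems_moved = d_in * d_out + tokens * d_in + tokens * d_out
    return flops / (bytes_per_elem * elems_moved)


if __name__ == "__main__":
    d_in, d_out = 5120, 13824   # assumed Llama 13B up/gate projection shape
    for tokens in (1, 8, 128, 2048):
        oi = linear_intensity(tokens, d_in, d_out)
        print(f"{tokens:>5} tokens -> {oi:8.1f} FLOP/Byte")
```

Under these assumptions a single decoded token yields an intensity just under 1 FLOP/Byte, because the whole weight matrix is read to produce one output vector. Processing 2048 tokens together reuses the same weights and lifts the estimate to roughly 1,300 FLOP/Byte, well past the ~235 FLOP/Byte ridge point.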

---

## 4. Data Point Extraction (Approximate Values)

| Category | Operational Intensity (FLOP/Byte) | Performance (FLOP/s) | Bottleneck |
| :--- | :--- | :--- | :--- |
| **up/gate/down ar** | ~1 to 10 | 100G to 5T | Memory (768GB/s) |
| **qk/pv ar** | ~1 (Vertical stack) | 40G to 800G | Memory (768GB/s) |
| **qkv mlp ar** | ~2 to 40 | 1T to 20T | Memory (768GB/s) |
| **qk/pv init** | ~40 to 150 | 10T to 80T | Transition/Memory |
| **qkv mlp init** | ~800 to 3k | ~150T to 181T | Compute (181 TFLOP/s) |
| **up/gate/down init** | ~200 to 4k | ~100T to 181T | Compute (181 TFLOP/s) |
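The bottleneck column can be cross-checked by comparing each intensity range with the ridge point. The following is a rough sketch under stated assumptions: the ranges are the approximate values from the table above (read off a log-scale chart, so they carry visual error), and the `regime` helper and its three labels are hypothetical names chosen for illustration.

```python
PEAK_FLOPS = 181e12              # ceiling from the legend, FLOP/s
BANDWIDTH = 768e9                # slope from the legend, Byte/s
RIDGE = PEAK_FLOPS / BANDWIDTH   # about 235.7 FLOP/Byte

# Approximate (low, high) operational-intensity ranges from the table above.
INTENSITY_RANGES = {
    "up/gate/down ar": (1, 10),
    "qk/pv ar": (1, 1),
    "qkv mlp ar": (2, 40),
    "qk/pv init": (40, 150),
    "qkv mlp init": (800, 3000),
    "up/gate/down init": (200, 4000),
}


def regime(low: float, high: float, ridge: float = RIDGE) -> str:
    """Classify an intensity range by where it falls relative to the ridge point."""
    if high < ridge:
        return "memory-bound"
    if low > ridge:
        return "compute-bound"
    return "straddles ridge"


if __name__ == "__main__":
    for name, (low, high) in INTENSITY_RANGES.items():
        # Roofline bound at the upper end of the range, for comparison with the table.
        bound = min(PEAK_FLOPS, BANDWIDTH * high)
        print(f"{name:<18} {regime(low, high):<16} bound <= {bound / 1e12:.2f} TFLOP/s")
```

With these inputs, all three auto-regressive series and `qk/pv init` fall entirely left of the ridge point, `qkv mlp init` falls entirely right of it, and the `up/gate/down init` range (~200 to 4k) begins slightly below the ridge before crossing into the compute-bound region.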

## 5. Summary of Findings

The chart demonstrates that for a Llama 13B model on an A6000 GPU:

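1. **Initialization** of the `qkv mlp` and `up/gate/down` layers is highly efficient and reaches or approaches the hardware's compute ceiling (181 TFLOP/s); `qk/pv init` stays below the ridge point, at roughly 10T to 80T FLOP/s.

2. **Auto-regressive decoding** makes poor use of the available compute because it is bottlenecked by the 768 GB/s memory bandwidth.

3. The **qk/pv ar** (Brown 'x') operations are the least efficient, clustered at the lowest operational intensity (~1 FLOP/Byte), where the roofline caps performance at about 768 GFLOP/s, under 0.5% of the 181 TFLOP/s peak.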