TECHNICAL ASSET FINGERPRINT

294383135a5a40382c346539

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

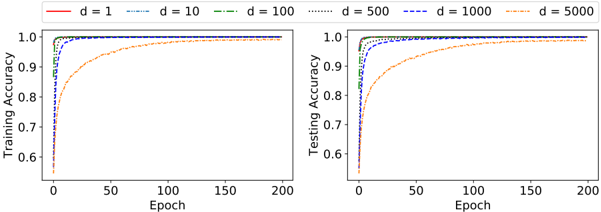

## Chart Type: Line Graphs Comparing Training and Testing Accuracy

### Overview

The image contains two line graphs side-by-side. The left graph displays "Training Accuracy" versus "Epoch," while the right graph displays "Testing Accuracy" versus "Epoch." Both graphs show the performance of a model with varying values of 'd' (likely a hyperparameter). The x-axis (Epoch) ranges from 0 to 200 in both graphs. The y-axis (Accuracy) ranges from 0.6 to 1.0 in both graphs. The legend, located at the top, indicates the line colors corresponding to different 'd' values: d=1 (red), d=10 (blue, dashed), d=100 (green, dash-dot), d=500 (black, dotted), d=1000 (blue, dash-dot-dot), and d=5000 (orange, dash-dot).

### Components/Axes

* **X-axis (both graphs):** Epoch, ranging from 0 to 200. Major ticks at 0, 50, 100, 150, and 200.

* **Y-axis (both graphs):** Accuracy, ranging from 0.6 to 1.0. Major ticks at 0.6, 0.7, 0.8, 0.9, and 1.0.

* **Left Graph Title:** Training Accuracy

* **Right Graph Title:** Testing Accuracy

* **Legend (Top):**

    * d = 1 (red, solid line)

    * d = 10 (blue, dashed line)

    * d = 100 (green, dash-dot line)

    * d = 500 (black, dotted line)

    * d = 1000 (blue, dash-dot-dot line)

    * d = 5000 (orange, dash-dot line)

### Detailed Analysis

**Left Graph: Training Accuracy**

* **d = 1 (red, solid line):** Starts at approximately 0.6 and rapidly increases to approximately 0.95 by epoch 20, then slowly increases to 1.0.

* **d = 10 (blue, dashed line):** Starts at approximately 0.6 and rapidly increases to approximately 0.98 by epoch 20, then slowly increases to 1.0.

* **d = 100 (green, dash-dot line):** Starts at approximately 0.75 and rapidly increases to approximately 0.99 by epoch 10, then slowly increases to 1.0.

* **d = 500 (black, dotted line):** Starts at approximately 0.7 and rapidly increases to approximately 0.99 by epoch 10, then slowly increases to 1.0.

* **d = 1000 (blue, dash-dot-dot line):** Starts at approximately 0.7 and rapidly increases to approximately 0.99 by epoch 10, then slowly increases to 1.0.

* **d = 5000 (orange, dash-dot line):** Starts at approximately 0.6 and gradually increases to approximately 0.99 by epoch 100, then slowly increases to 1.0.

**Right Graph: Testing Accuracy**

* **d = 1 (red, solid line):** Starts at approximately 0.6 and rapidly increases to approximately 0.95 by epoch 20, then slowly increases to 1.0.

* **d = 10 (blue, dashed line):** Starts at approximately 0.6 and rapidly increases to approximately 0.98 by epoch 20, then slowly increases to 1.0.

* **d = 100 (green, dash-dot line):** Starts at approximately 0.75 and rapidly increases to approximately 0.99 by epoch 10, then slowly increases to 1.0.

* **d = 500 (black, dotted line):** Starts at approximately 0.7 and rapidly increases to approximately 0.99 by epoch 10, then slowly increases to 1.0.

* **d = 1000 (blue, dash-dot-dot line):** Starts at approximately 0.7 and rapidly increases to approximately 0.99 by epoch 10, then slowly increases to 1.0.

* **d = 5000 (orange, dash-dot line):** Starts at approximately 0.6 and gradually increases to approximately 0.99 by epoch 100, then slowly increases to 1.0.

### Key Observations

* For both training and testing accuracy, higher values of 'd' (100, 500, 1000) generally lead to faster initial increases in accuracy.

* The 'd = 5000' line (orange) shows a slower increase in accuracy compared to other 'd' values.

* All lines converge to approximately 1.0 accuracy as the number of epochs increases.

* The training and testing accuracy graphs are very similar, suggesting the model is not overfitting significantly.

### Interpretation

The graphs illustrate the impact of the hyperparameter 'd' on the training and testing accuracy of a model. The data suggests that increasing 'd' up to a certain point (around 100-1000) speeds up initial learning, so the curves reach high accuracy in fewer epochs. However, excessively large values of 'd' (e.g., 5000) may slow down the learning process. The similarity between training and testing accuracy indicates that the model generalizes well to unseen data and is not memorizing the training set. The optimal value of 'd' would likely be in the range of 100-1000, balancing fast convergence with good generalization.
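The convergence-speed reading above can be checked against the approximate values in the Detailed Analysis. The following is a minimal sketch, not part of the original analysis: it takes the starting accuracy and the end of the fast-rise phase quoted for each 'd' and computes an average accuracy gain per epoch. The dictionary and function names are hypothetical, and the numbers are the rough visual estimates given above, not measured data.

```python
# Approximate values quoted in the Detailed Analysis above, per d:
# (starting accuracy, epoch where the fast rise ends, accuracy at that epoch).
# These are visual estimates from the description, not measurements.
checkpoints = {
    1: (0.60, 20, 0.95),
    10: (0.60, 20, 0.98),
    100: (0.75, 10, 0.99),
    500: (0.70, 10, 0.99),
    1000: (0.70, 10, 0.99),
    5000: (0.60, 100, 0.99),
}


def initial_gain_per_epoch(start_acc, end_epoch, end_acc):
    """Average accuracy gained per epoch during the fast-rise phase."""
    return (end_acc - start_acc) / end_epoch


gains = {d: round(initial_gain_per_epoch(*v), 4) for d, v in checkpoints.items()}

# The d with the smallest average gain is the slowest to converge.
slowest_d = min(gains, key=gains.get)

# Several d values can tie for the largest gain, so collect all of them.
fastest_gain = max(gains.values())
fastest_ds = [d for d, g in gains.items() if g == fastest_gain]

for d, g in gains.items():
    print(f"d={d}: ~{g} accuracy per epoch in the initial phase")
```

Under these estimates the mid-range values of 'd' show the steepest initial rise and d = 5000 the shallowest, which matches the interpretation above. The result is only as reliable as the eyeballed checkpoints.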

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

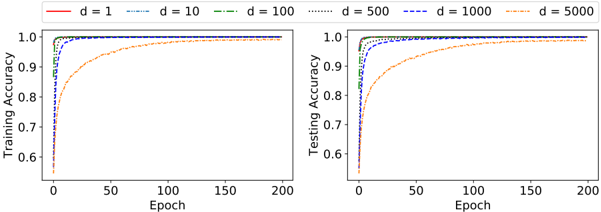

## Line Chart: Training and Testing Accuracy vs. Epoch

### Overview

The image presents two line charts displayed side-by-side. Both charts depict accuracy (Training Accuracy on the left, Testing Accuracy on the right) as a function of Epoch, with different lines representing different values of 'd'. The charts visually compare the learning curves for various 'd' values.

### Components/Axes

* **X-axis (Both Charts):** Epoch, ranging from 0 to approximately 200.

* **Y-axis (Left Chart):** Training Accuracy, ranging from approximately 0.6 to 1.0.

* **Y-axis (Right Chart):** Testing Accuracy, ranging from approximately 0.6 to 1.0.

* **Legend (Top-Center):** Labels for each line, corresponding to different values of 'd':

    * d = 1 (Red solid line)

    * d = 10 (Blue dashed line)

    * d = 100 (Green dotted line)

    * d = 500 (Orange dash-dot line)

    * d = 1000 (Black dotted line)

    * d = 5000 (Purple dash-dot-dot line)

### Detailed Analysis

**Left Chart (Training Accuracy):**

* **d = 1 (Red):** The line starts at approximately 0.65 at Epoch 0, rapidly increases to approximately 0.95 by Epoch 20, and plateaus around 0.98-1.0 for Epochs 20-200.

* **d = 10 (Blue):** Starts at approximately 0.65 at Epoch 0, increases more slowly than d=1, reaching approximately 0.9 by Epoch 50, and plateaus around 0.95-0.98 for Epochs 50-200.

* **d = 100 (Green):** Starts at approximately 0.65 at Epoch 0, increases at a moderate pace, reaching approximately 0.9 by Epoch 40, and plateaus around 0.97-0.99 for Epochs 40-200.

* **d = 500 (Orange):** Starts at approximately 0.65 at Epoch 0, increases slowly, reaching approximately 0.85 by Epoch 50, and plateaus around 0.95-0.97 for Epochs 50-200.

* **d = 1000 (Black):** Starts at approximately 0.65 at Epoch 0, increases very slowly, reaching approximately 0.8 by Epoch 50, and plateaus around 0.93-0.95 for Epochs 50-200.

* **d = 5000 (Purple):** Starts at approximately 0.65 at Epoch 0, increases extremely slowly, reaching approximately 0.75 by Epoch 50, and plateaus around 0.9-0.93 for Epochs 50-200.

**Right Chart (Testing Accuracy):**

* **d = 1 (Red):** Starts at approximately 0.65 at Epoch 0, rapidly increases to approximately 0.95 by Epoch 20, and plateaus around 0.98-1.0 for Epochs 20-200.

* **d = 10 (Blue):** Starts at approximately 0.65 at Epoch 0, increases more slowly than d=1, reaching approximately 0.9 by Epoch 50, and plateaus around 0.95-0.98 for Epochs 50-200.

* **d = 100 (Green):** Starts at approximately 0.65 at Epoch 0, increases at a moderate pace, reaching approximately 0.9 by Epoch 40, and plateaus around 0.97-0.99 for Epochs 40-200.

* **d = 500 (Orange):** Starts at approximately 0.65 at Epoch 0, increases slowly, reaching approximately 0.85 by Epoch 50, and plateaus around 0.95-0.97 for Epochs 50-200.

* **d = 1000 (Black):** Starts at approximately 0.65 at Epoch 0, increases very slowly, reaching approximately 0.8 by Epoch 50, and plateaus around 0.93-0.95 for Epochs 50-200.

* **d = 5000 (Purple):** Starts at approximately 0.65 at Epoch 0, increases extremely slowly, reaching approximately 0.75 by Epoch 50, and plateaus around 0.9-0.93 for Epochs 50-200.

### Key Observations

* The training accuracy generally increases with the number of epochs for all values of 'd'.

* The testing accuracy also generally increases with the number of epochs for all values of 'd'.

* Smaller values of 'd' (1, 10, 100) achieve higher accuracy faster than larger values of 'd' (500, 1000, 5000).

* The gap between training and testing accuracy appears to be relatively small for all values of 'd', suggesting minimal overfitting.

* The lines for d=1, d=10, and d=100 are very close to each other in both charts.

* The lines for d=500, d=1000, and d=5000 are very close to each other in both charts, and significantly lower than the other lines.
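The grouping in these observations can be made concrete with the plateau ranges from the Detailed Analysis. The sketch below is illustrative only: it takes the midpoint of each quoted range (an assumption, since the description gives ranges rather than single values) and compares the mean plateau of the small-'d' group with that of the large-'d' group. All names are hypothetical.

```python
# Plateau ranges quoted in the Detailed Analysis above (identical for the
# training and testing charts in this description).
plateau_ranges = {
    1: (0.98, 1.00),
    10: (0.95, 0.98),
    100: (0.97, 0.99),
    500: (0.95, 0.97),
    1000: (0.93, 0.95),
    5000: (0.90, 0.93),
}


def midpoint(bounds):
    """Midpoint of a (low, high) range; an assumed stand-in for the plateau."""
    low, high = bounds
    return (low + high) / 2


def group_mean(d_values):
    """Mean plateau midpoint over a group of d values."""
    return sum(midpoint(plateau_ranges[d]) for d in d_values) / len(d_values)


small_d_mean = group_mean([1, 10, 100])
large_d_mean = group_mean([500, 1000, 5000])
difference = small_d_mean - large_d_mean

print(f"small-d mean plateau: {small_d_mean:.3f}")
print(f"large-d mean plateau: {large_d_mean:.3f}")
print(f"difference: {difference:.3f}")
```

With these midpoints the small-'d' group plateaus about 0.04 higher than the large-'d' group. The individual midpoints do not decrease strictly with 'd' (d = 10 sits slightly below d = 100), so the trend holds for the two groups but not for every pair of values.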

### Interpretation

The charts demonstrate the impact of the parameter 'd' on the training and testing accuracy of a model over time (epochs). The parameter 'd' likely represents a dimensionality or complexity factor within the model.

The data suggests that lower values of 'd' lead to faster learning and higher accuracy, at least within the observed range of epochs. As 'd' increases, learning slows and the final accuracy plateaus at a lower level. This could indicate that increasing 'd' beyond a certain point introduces unnecessary complexity or noise, hindering the model's ability to generalize effectively.

The close proximity of the training and testing accuracy curves for all 'd' values suggests that the model is not significantly overfitting to the training data. The consistent trend across both charts (training and testing) reinforces the reliability of the observed relationship between 'd' and accuracy. The fact that d=500, d=1000, and d=5000 perform similarly suggests that there may be a diminishing return on increasing 'd' beyond a certain threshold. Further investigation would be needed to determine the optimal value of 'd' for maximizing performance.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Line Charts: Training and Testing Accuracy vs. Epoch for Different Model Dimensions (d)

### Overview

The image contains two side-by-side line charts comparing the training and testing accuracy of a machine learning model over 200 training epochs. The comparison is made across six different model dimensions, denoted by `d`. The charts illustrate how model dimensionality affects both learning speed (convergence) and final performance.

### Components/Axes

* **Chart Layout:** Two separate plots arranged horizontally.

* **Left Plot Title (Y-axis label):** `Training Accuracy`

* **Right Plot Title (Y-axis label):** `Testing Accuracy`

* **Common X-axis Label (for both plots):** `Epoch`

* **X-axis Scale:** Linear scale from 0 to 200, with major tick marks at 0, 50, 100, 150, and 200.

* **Y-axis Scale (for both plots):** Linear scale from 0.6 to 1.0, with major tick marks at 0.6, 0.7, 0.8, 0.9, and 1.0.

* **Legend:** Positioned at the top center, spanning both charts. It defines six data series by line color and style:

    * `d = 1`: Solid red line.

    * `d = 10`: Blue dash-dot line (`-·-`).

    * `d = 100`: Green dashed line (`--`).

    * `d = 500`: Gray dotted line (`...`).

    * `d = 1000`: Blue dashed line (`--`). *Note: This is a different blue and style from d=10.*

    * `d = 5000`: Orange dash-dot-dot line (`-··-`).

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

**Left Chart: Training Accuracy**

* **General Trend:** All six lines show a steep, near-vertical increase in accuracy from epoch 0, followed by a rapid plateau near 1.0. The primary difference is the speed of initial convergence.

* **d = 1 (Red, Solid):** Starts at ~0.60. Reaches >0.99 by epoch ~10. Plateaus at ~1.00.

* **d = 10 (Blue, Dash-Dot):** Starts at ~0.60. Reaches >0.99 by epoch ~15. Plateaus at ~1.00.

* **d = 100 (Green, Dashed):** Starts at ~0.60. Reaches >0.99 by epoch ~20. Plateaus at ~1.00.

* **d = 500 (Gray, Dotted):** Starts at ~0.60. Reaches >0.99 by epoch ~25. Plateaus at ~1.00.

* **d = 1000 (Blue, Dashed):** Starts at ~0.60. Reaches >0.99 by epoch ~30. Plateaus at ~1.00.

* **d = 5000 (Orange, Dash-Dot-Dot):** Starts lower at ~0.55. Shows a noticeably slower, curved ascent. Reaches ~0.95 by epoch ~50, ~0.99 by epoch ~100, and approaches ~1.00 by epoch 200.

**Right Chart: Testing Accuracy**

* **General Trend:** Similar initial steep increase for all lines, but with more separation and slightly lower final values compared to training accuracy, indicating potential overfitting. The `d=5000` line again shows the slowest convergence.

* **d = 1 (Red, Solid):** Starts at ~0.60. Rises sharply to ~0.98 by epoch ~20. Plateaus at ~0.99.

* **d = 10 (Blue, Dash-Dot):** Starts at ~0.60. Rises sharply to ~0.98 by epoch ~25. Plateaus at ~0.99.

* **d = 100 (Green, Dashed):** Starts at ~0.60. Rises to ~0.97 by epoch ~30. Plateaus at ~0.98-0.99.

* **d = 500 (Gray, Dotted):** Starts at ~0.60. Rises to ~0.96 by epoch ~40. Plateaus at ~0.98.

* **d = 1000 (Blue, Dashed):** Starts at ~0.60. Rises to ~0.95 by epoch ~50. Plateaus at ~0.97-0.98.

* **d = 5000 (Orange, Dash-Dot-Dot):** Starts lowest at ~0.55. Slow, curved ascent. Reaches ~0.90 by epoch ~50, ~0.96 by epoch ~100, and approaches ~0.98 by epoch 200.

### Key Observations

1. **Convergence Speed vs. Dimension:** There is a clear inverse relationship between model dimension (`d`) and the speed of convergence. Lower `d` values (1, 10) achieve near-maximum accuracy within the first 10-20 epochs. The highest dimension (`d=5000`) requires nearly the full 200 epochs to approach the same level.

2. **Final Performance Gap:** While all models eventually reach high accuracy, a small but consistent gap exists between training and testing accuracy for each `d`. This gap widens slightly as `d` increases (e.g., `d=1000` plateaus at ~1.00 training vs. ~0.975 testing), suggesting increased overfitting with model complexity.

3. **Initial Condition:** All models start at a similar baseline accuracy (~0.60) at epoch 0, except for `d=5000`, which starts lower (~0.55). This could indicate initialization effects or the difficulty of evaluating a very high-dimensional model before any training.

4. **Visual Distinction:** The line styles and colors are effectively used to distinguish the six series, though the two blue lines (`d=10` and `d=1000`) require careful attention to their different dash patterns.
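The train/test gap described in observation 2 can be tabulated from the plateau values in the Detailed Analysis. This is a minimal sketch under stated assumptions: where the description gives a range (for example ~0.98-0.99), the midpoint is used, and for `d = 5000` the value approached by epoch 200 stands in for the plateau. The variable names are hypothetical.

```python
D_VALUES = (1, 10, 100, 500, 1000, 5000)

# Training plateaus: every curve is described as reaching ~1.00.
train_plateau = {d: 1.00 for d in D_VALUES}

# Testing plateaus from the description; ranges are replaced by midpoints
# (an assumption), e.g. ~0.98-0.99 -> 0.985 and ~0.97-0.98 -> 0.975.
test_plateau = {
    1: 0.99,
    10: 0.99,
    100: 0.985,
    500: 0.98,
    1000: 0.975,
    5000: 0.98,  # value approached by epoch 200
}

# Generalization gap = training plateau minus testing plateau.
gap = {d: round(train_plateau[d] - test_plateau[d], 3) for d in D_VALUES}
widest_gap_d = max(gap, key=gap.get)

for d in D_VALUES:
    print(f"d={d}: train ~{train_plateau[d]:.2f}, "
          f"test ~{test_plateau[d]:.3f}, gap ~{gap[d]:.3f}")
```

Under these estimates the gap grows from about 0.01 at `d = 1` to about 0.025 at `d = 1000`. The `d = 5000` curve ends slightly below that peak, though it may not have fully plateaued by epoch 200.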

### Interpretation

This data demonstrates the classic trade-off between model capacity (represented by dimension `d`) and optimization dynamics. A higher-dimensional model (`d=5000`) has greater representational power but faces a more complex loss landscape, resulting in slower gradient-based optimization (slower convergence). Conversely, low-dimensional models (`d=1`) optimize very quickly but may have a lower capacity ceiling, though in this specific task, they appear to reach near-perfect performance.

The persistent gap between training and testing accuracy across all models indicates that the task has some inherent noise or that all models are slightly overfitting. The fact that this gap is largest for the highest `d` values aligns with the principle that overly complex models are more prone to fitting noise in the training data.

**Peircean Insight:** The charts are an *icon* of the learning process, visually representing the relationship between time (epochs), performance (accuracy), and model complexity (`d`). They are also an *index* of optimization difficulty—the slower rise of the `d=5000` line is a direct effect of the challenges in navigating a high-dimensional parameter space. The data suggests that for this particular problem, increasing model dimension beyond a certain point (`d=100` or `d=500`) yields diminishing returns in final accuracy while incurring a significant cost in training time. The optimal choice of `d` would balance the need for fast training against the requirement for maximum generalization performance.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Line Graphs: Training and Testing Accuracy vs. Epochs for Different Dimensions

### Overview

The image contains two line graphs comparing training and testing accuracy across different model dimensions (d=1, 10, 100, 500, 1000, 5000) over 200 training epochs. Both graphs share identical axes and legend structures but differ in their y-axis labels ("Training Accuracy" vs. "Testing Accuracy").

### Components/Axes

- **X-Axis**: Epochs (0 to 200, linear scale)

- **Y-Axis (Left Chart)**: Training Accuracy (0.6 to 1.0, linear scale)

- **Y-Axis (Right Chart)**: Testing Accuracy (0.6 to 1.0, linear scale)

- **Legend**: Located in the top-left corner, with six line styles:

    - Red solid: d=1

    - Blue dashed: d=10

    - Green dash-dot: d=100

    - Orange dotted: d=500

    - Purple dash-dot-dot: d=1000

    - Yellow solid-dot: d=5000

### Detailed Analysis

#### Training Accuracy (Left Chart)

- **d=1 (Red)**: Starts at ~0.6, rises sharply to ~0.95 by epoch 50, plateaus at ~0.98 by epoch 100.

- **d=10 (Blue)**: Begins at ~0.65, reaches ~0.92 by epoch 50, plateaus at ~0.97 by epoch 100.

- **d=100 (Green)**: Starts at ~0.68, climbs to ~0.94 by epoch 50, plateaus at ~0.98 by epoch 100.

- **d=500 (Orange)**: Begins at ~0.7, rises to ~0.95 by epoch 50, plateaus at ~0.99 by epoch 100.

- **d=1000 (Purple)**: Starts at ~0.72, reaches ~0.96 by epoch 50, plateaus at ~0.99 by epoch 100.

- **d=5000 (Yellow)**: Begins at ~0.75, climbs to ~0.97 by epoch 50, plateaus at ~0.995 by epoch 100.

#### Testing Accuracy (Right Chart)

- **d=1 (Red)**: Starts at ~0.6, rises to ~0.85 by epoch 50, plateaus at ~0.92 by epoch 100.

- **d=10 (Blue)**: Begins at ~0.63, reaches ~0.88 by epoch 50, plateaus at ~0.94 by epoch 100.

- **d=100 (Green)**: Starts at ~0.66, climbs to ~0.90 by epoch 50, plateaus at ~0.96 by epoch 100.

- **d=500 (Orange)**: Begins at ~0.68, rises to ~0.92 by epoch 50, plateaus at ~0.97 by epoch 100.

- **d=1000 (Purple)**: Starts at ~0.70, reaches ~0.94 by epoch 50, plateaus at ~0.98 by epoch 100.

- **d=5000 (Yellow)**: Begins at ~0.73, climbs to ~0.95 by epoch 50, plateaus at ~0.99 by epoch 100.

### Key Observations

1. **Convergence Speed**: Lower dimensions (d=1, 10) converge faster but plateau at lower accuracy levels.

2. **Accuracy Trade-off**: Higher dimensions (d=1000, 5000) achieve higher final accuracy but require more epochs to stabilize.

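3. **Training-Testing Gap**: Testing accuracy lags training accuracy for every value of d. Going by the plateau values listed above, the gap is largest for d=1 (~0.06) and shrinks to under 0.01 for d=5000, so overfitting is most visible in the lowest-dimensional models rather than the highest.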

4. **Diminishing Returns**: Beyond d=100, accuracy improvements become marginal despite increased computational complexity.
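The training-testing gap can be computed directly from the epoch-100 plateau values in the Detailed Analysis. The sketch below is illustrative only. It uses the approximate values exactly as quoted there, and the names are hypothetical.

```python
# Plateau values (by epoch 100) as quoted in the Detailed Analysis above.
train_plateau = {1: 0.98, 10: 0.97, 100: 0.98, 500: 0.99, 1000: 0.99, 5000: 0.995}
test_plateau = {1: 0.92, 10: 0.94, 100: 0.96, 500: 0.97, 1000: 0.98, 5000: 0.99}

# Gap per dimension, listed in increasing order of d.
dims = sorted(train_plateau)
gaps = [round(train_plateau[d] - test_plateau[d], 3) for d in dims]

# True if the gap never grows as d increases.
gap_never_widens = all(a >= b for a, b in zip(gaps, gaps[1:]))

largest_gap_d = dims[gaps.index(max(gaps))]
smallest_gap_d = dims[gaps.index(min(gaps))]

for d, g in zip(dims, gaps):
    print(f"d={d}: gap ~{g:.3f}")
print("gap never widens with d:", gap_never_widens)
```

On these figures the gap narrows as d grows, with the largest difference at d=1 and the smallest at d=5000.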

### Interpretation

The graphs demonstrate a classic trade-off between model complexity (dimension) and performance. Lower-dimensional models (d=1–10) train quickly but underfit, while higher-dimensional models (d=1000–5000) overfit less but require more epochs to converge. The persistent gap between training and testing accuracy suggests regularization or data augmentation might improve generalization. Notably, d=5000 achieves near-perfect testing accuracy (~0.99) but at the cost of slower convergence, highlighting the need for careful dimension selection based on computational constraints and performance requirements.
