## Flowchart: Model Evaluation Loop for FrontierMath Problems

### Overview

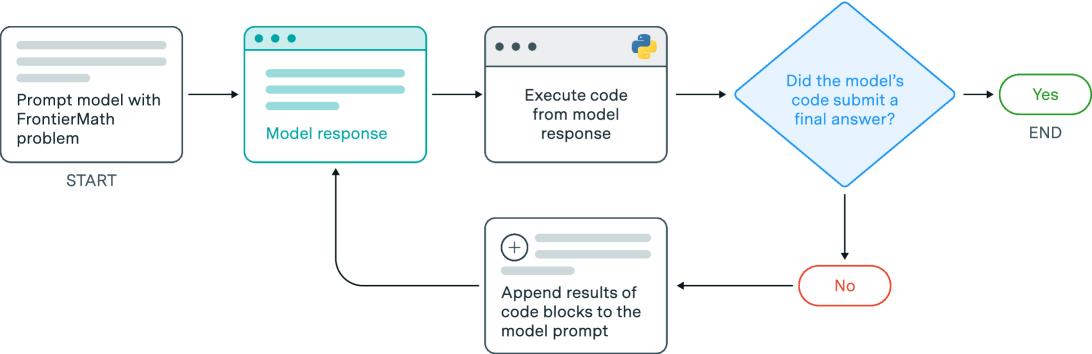

The image is a flowchart of an iterative process for evaluating a model on a "FrontierMath" problem. The model is prompted, the code in its response is executed, and the process checks whether a final answer was submitted. If none was, the execution results are fed back to the model and the cycle repeats.

### Components/Axes

The diagram consists of six primary components connected by directional arrows, indicating the flow of the process. There are no numerical axes or data series.

1. **Start Node (Rectangle, Top-Left):**

* **Text:** "Prompt model with FrontierMath problem"

* **Sub-label:** "START" (positioned below the rectangle).

* **Visual:** A simple white rectangle with a light gray border and horizontal lines suggesting text.

2. **Model Response Node (Browser Window, Center-Left):**

* **Text:** "Model response"

* **Visual:** A stylized browser window with a teal border and three dots in the top-left corner. The interior shows horizontal lines representing text.

3. **Execution Node (Rectangle, Center):**

* **Text:** "Execute code from model response"

* **Visual:** A white rectangle with a light gray border. It contains a small Python logo (blue and yellow snakes) in the top-right corner.

4. **Decision Node (Diamond, Center-Right):**

* **Text:** "Did the model's code submit a final answer?"

* **Visual:** A blue-outlined diamond shape.

5. **Termination Node (Oval, Far-Right):**

* **Text:** "END"

* **Visual:** A green-outlined oval. The path leading to it is labeled "Yes".

6. **Feedback Loop Node (Rectangle, Bottom-Center):**

* **Text:** "Append results of code blocks to the model prompt"

* **Visual:** A white rectangle with a light gray border. It contains a plus sign inside a circle (`⊕`) and horizontal lines.

* **Flow:** This node is reached via the "No" path from the decision diamond (marked by a red-outlined oval). An arrow leads from this node back to the "Model response" node, creating a loop.
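
The six components and their arrows can be written down as a small directed graph. The sketch below is only an illustration of the structure described above: the node identifiers (`start`, `model_response`, and so on) and the helper `find_cycle` are hypothetical names, not labels taken from the diagram.

```python
# Adjacency list for the flowchart; keys and values are hypothetical node ids.
FLOW = {
    "start": ["model_response"],
    "model_response": ["execute_code"],
    "execute_code": ["decision"],
    "decision": ["end", "append_results"],
    "append_results": ["model_response"],
    "end": [],
}

# Labels on the two arrows leaving the decision diamond.
BRANCH_LABELS = {
    ("decision", "end"): "Yes",
    ("decision", "append_results"): "No",
}


def find_cycle(graph, node, path=()):
    """Depth-first search; return the first cycle found as a list of nodes, else None."""
    if node in path:
        # The cycle starts where this node first appeared on the current path.
        return list(path[path.index(node):]) + [node]
    for successor in graph[node]:
        cycle = find_cycle(graph, successor, path + (node,))
        if cycle:
            return cycle
    return None


loop = find_cycle(FLOW, "start")
print(" -> ".join(loop))
```

Walking the graph from `start` finds exactly the loop the diagram draws: the "No" branch passes through the append step and re-enters at the model response.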

### Detailed Analysis

The flow is linear except for one conditional branch, which either ends the process or loops back:

1. **Initiation:** The process begins at the "START" node, where a model is prompted with a "FrontierMath problem."

2. **Model Generation:** The model generates a response, which is captured in the "Model response" step.

3. **Code Execution:** The code contained within the model's response is executed.

4. **Decision Point:** The system checks the outcome: "Did the model's code submit a final answer?"

* **Path A (Yes):** If the answer is affirmative, the process moves to the "END" node, concluding the evaluation.

* **Path B (No):** If the answer is negative, the process enters a feedback loop. The results of the executed code blocks are appended to the model prompt. The updated prompt is then fed back into the "Model response" step, and the cycle (Generate -> Execute -> Check) repeats.
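
The four steps above can be sketched as a short loop. This is a minimal illustration under stated assumptions, not the benchmark's actual harness: the diagram does not say how code is extracted from the response, how an answer is submitted, or what the appended text looks like. Here `model` is any callable from prompt to response, code is assumed to arrive in fenced `python` blocks, and `submit_answer` is a hypothetical hook made available to the executed code.

```python
import contextlib
import io
import re
import traceback

FENCE = "`" * 3  # built at runtime so this example contains no literal fence
CODE_BLOCK = re.compile(FENCE + r"python\n(.*?)" + FENCE, re.DOTALL)


def run_blocks(response):
    """Execute each fenced Python block; return (captured output, submitted answers)."""
    submitted = []
    # Hypothetical submission hook exposed to the model's code.
    namespace = {"submit_answer": submitted.append}
    buffer = io.StringIO()
    for source in CODE_BLOCK.findall(response):
        with contextlib.redirect_stdout(buffer):
            try:
                # A real harness would run this in a sandbox, not in-process.
                exec(source, namespace)
            except Exception:
                # Errors become part of the feedback the model sees next.
                buffer.write(traceback.format_exc())
    return buffer.getvalue(), submitted


def evaluate(problem, model):
    """Generate -> Execute -> Check, repeating until an answer is submitted."""
    prompt = problem  # START: prompt the model with the problem
    while True:
        response = model(prompt)                 # Model response
        output, submitted = run_blocks(response)  # Execute code from response
        if submitted:                            # Decision: final answer?
            return submitted[0]                  # Yes -> END
        # No -> append results to the prompt. Keeping the response in the
        # transcript as well is an assumption; the diagram mentions only results.
        prompt = prompt + "\n" + response + "\n[execution output]\n" + output
```

Like the diagram, this loop has no exit other than a submitted answer, so a model that never calls the hook would keep it running indefinitely.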

### Key Observations

* **Iterative Feedback Loop:** The core mechanism is a closed loop designed to give the model another chance by providing it with the results of its previous attempt. This suggests the evaluation is not a single-pass test but allows for refinement.

* **Conditional Termination:** The diagram shows a single exit: the process terminates only when the model's code submits a final answer. Whether that answer is correct is not part of the diagram.

* **Visual Coding:** Colors are used functionally: teal for the model's output, blue for the decision point, green for termination, and red for the "No" path that triggers the loop.

* **Specific Problem Domain:** The problem is explicitly named "FrontierMath," indicating a specialized or benchmark dataset.

### Interpretation

This flowchart depicts an **iterative evaluation protocol** for testing an AI model's mathematical problem-solving capabilities, specifically on the "FrontierMath" benchmark.

* **Purpose:** It moves beyond a single-shot test. The loop acknowledges that complex problem-solving may require multiple attempts. By feeding execution results back into the prompt, the system simulates a "debugging" or "refinement" cycle, testing the model's ability to make use of its own errors or incomplete attempts.

* **Underlying Assumption:** The design assumes that a model's initial code might fail at runtime, or run cleanly yet be logically incomplete or incorrect, and that providing the runtime results (e.g., error messages, partial outputs) as context can help it converge on a final answer.

* **What it Measures:** This process likely evaluates not just final accuracy, but also **persistence, error correction, and iterative reasoning**. A model that submits an answer on the first pass needed no feedback. A model that submits after several loops has shown it can use feedback. How the submitted answer is graded is outside the diagram.

* **Notable Absence:** The flowchart does not specify a maximum number of iterations. In a practical implementation, there would likely be a loop counter or timeout to prevent infinite cycles, but this abstract diagram focuses on the logical flow rather than implementation constraints.
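
One way such a limit might look is sketched below. Everything here is an assumption added for illustration: the diagram specifies no cap, and the names `run_with_budget`, `execute`, and `max_iterations`, along with the default of 5, are hypothetical. The `execute` callable stands in for whatever runs the model's code and reports an answer if one was submitted.

```python
from typing import Callable, Optional, Tuple


def run_with_budget(
    problem: str,
    model: Callable[[str], str],
    execute: Callable[[str], Tuple[str, Optional[str]]],
    max_iterations: int = 5,  # hypothetical cap; the diagram gives none
) -> Optional[str]:
    """Run the loop at most max_iterations times.

    Returns the submitted answer, or None if the budget ran out first.
    """
    prompt = problem
    for _ in range(max_iterations):
        response = model(prompt)
        output, answer = execute(response)
        if answer is not None:
            return answer          # "Yes" branch: END
        prompt += "\n" + output    # "No" branch: append results and retry
    return None                    # budget exhausted, no answer submitted
```

Returning `None` on exhaustion keeps "no answer submitted" distinct from any submitted value, so a caller can score the two outcomes separately.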

In essence, this is a blueprint for a **self-correcting evaluation harness** that treats model output as executable code and uses its runtime behavior to dynamically guide the problem-solving process toward a conclusion.