## Diagram: Two-Stage Process for Generating High-Quality Solutions and Training Data

### Overview

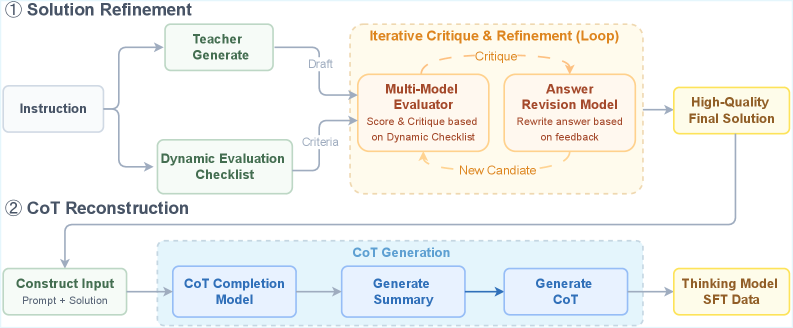

The image is a technical flowchart illustrating a two-stage process for refining solutions and generating training data for a "Thinking Model." The process is divided into two main phases: **① Solution Refinement** and **② CoT Reconstruction**. The diagram uses a combination of solid and dashed boxes, directional arrows, and color-coded labels to depict the flow of data and operations between various models and components.

### Components

The diagram is structured into two horizontal sections, each with a numbered title.

**Section ①: Solution Refinement**

* **Input:** A box labeled **"Instruction"** on the far left.

* **Primary Process Branches:** The "Instruction" feeds into two parallel green-outlined boxes:

    1. **"Teacher Generate"** (top branch)

    2. **"Dynamic Evaluation Checklist"** (bottom branch)

* **Core Iterative Loop:** A large, dashed orange box titled **"Iterative Critique & Refinement (Loop)"**. This loop contains two orange-outlined boxes:

    * **"Multi-Model Evaluator"** with sub-text: "Score & Critique based on Dynamic Checklist".

    * **"Answer Revision Model"** with sub-text: "Rewrite answer based on feedback".

* **Loop Arrows & Labels:**

    * An arrow from "Teacher Generate" to the loop is labeled **"Draft"**.

    * An arrow from "Dynamic Evaluation Checklist" to the loop is labeled **"Criteria"**.

    * An arrow from "Multi-Model Evaluator" to "Answer Revision Model" is labeled **"Critique"**.

    * An arrow from "Answer Revision Model" back to "Multi-Model Evaluator" is labeled **"New Candidate"**.

* **Output:** A yellow-outlined box on the far right labeled **"High-Quality Final Solution"**.

**Section ②: CoT Reconstruction**

* **Input:** A green-outlined box on the left labeled **"Construct Input"** with sub-text: "Prompt + Solution". This input is derived from the "High-Quality Final Solution" above, indicated by a connecting arrow.

* **Core Process:** A large, dashed blue box titled **"CoT Generation"**. This contains three blue-outlined boxes in sequence:

    1. **"CoT Completion Model"**

    2. **"Generate Summary"**

    3. **"Generate CoT"**

* **Output:** A yellow-outlined box on the far right labeled **"Thinking Model SFT Data"**.

### Detailed Analysis

The diagram describes a pipeline that produces supervised fine-tuning (SFT) data in two stages.

1. **Solution Refinement Stage:** A teacher model generates a draft solution from the "Instruction." In parallel, a dynamic checklist is generated from the same instruction to serve as evaluation criteria. Both feed into an iterative loop in which a "Multi-Model Evaluator" scores and critiques the current answer against the checklist. The critique is passed to an "Answer Revision Model," which rewrites the answer, and the resulting "New Candidate" is returned to the evaluator. The loop ends by emitting the "High-Quality Final Solution"; the diagram does not show the stopping condition.

2. **CoT (Chain-of-Thought) Reconstruction Stage:** The refined solution from Stage 1 is combined with the original prompt in the "Construct Input" step (Prompt + Solution). A "CoT Completion Model" processes this input, followed by two generation steps: "Generate Summary" and then "Generate CoT." The final output is "Thinking Model SFT Data," presumably used to train a model to reason step by step.
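
The Stage 1 loop can be sketched as follows. This is a minimal illustration, not the pipeline's actual implementation: the diagram names the components but gives no API, so every function name, the mean-score aggregation, the `threshold` stopping rule, and the `max_rounds` budget are assumptions. The `toy_*` functions are stand-ins for real models.

```python
from typing import Callable, List, Tuple

# Hypothetical interface: (answer, checklist) -> (score in [0, 1], critique text).
Evaluator = Callable[[str, List[str]], Tuple[float, str]]


def refine(
    instruction: str,
    generate: Callable[[str], str],
    make_checklist: Callable[[str], List[str]],
    evaluators: List[Evaluator],
    revise: Callable[[str, str, str], str],
    threshold: float = 0.9,  # assumed stopping rule, not shown in the diagram
    max_rounds: int = 3,     # assumed cap on the number of revisions
) -> Tuple[str, float]:
    """Return the final candidate and its mean evaluator score."""
    candidate = generate(instruction)        # "Draft"
    checklist = make_checklist(instruction)  # "Criteria"
    for round_idx in range(max_rounds + 1):
        results = [evaluate(candidate, checklist) for evaluate in evaluators]
        score = sum(s for s, _ in results) / len(results)
        if score >= threshold or round_idx == max_rounds:
            return candidate, score
        # Merge the distinct, non-empty critiques into one feedback string.
        critique = "; ".join(sorted({c for _, c in results if c}))
        candidate = revise(instruction, candidate, critique)  # "New Candidate"
    raise ValueError("max_rounds must be >= 0")


# Toy stand-ins so the sketch runs without any model.
def toy_generate(instruction: str) -> str:
    return "The answer is 42."


def toy_checklist(instruction: str) -> List[str]:
    return ["answer", "units"]


def toy_evaluator(answer: str, checklist: List[str]) -> Tuple[float, str]:
    # Score is the fraction of checklist keywords found in the answer.
    missing = [item for item in checklist if item not in answer.lower()]
    return 1.0 - len(missing) / len(checklist), ", ".join(missing)


def toy_revise(instruction: str, answer: str, critique: str) -> str:
    # A real revision model would rewrite the answer; this just appends the feedback.
    return f"{answer} [{critique}]"


final, score = refine(
    "How far is it?", toy_generate, toy_checklist,
    [toy_evaluator, toy_evaluator], toy_revise,
)
print(final, score)
```

Capping the number of rounds is a design choice of this sketch: it guarantees termination even if the evaluators never reach the threshold, in which case the last candidate is returned with its score.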

### Key Observations

* **Iterative Core:** The heart of the first stage is a closed-loop system ("Critique" -> "Revision" -> "New Candidate"), emphasizing continuous improvement over a single-pass generation.

* **Dynamic Evaluation:** The use of a "Dynamic Evaluation Checklist" suggests the criteria for a good solution are not static but are generated or adapted based on the specific instruction.

* **Two-Stage Pipeline:** The process is explicitly sequential. The output of the solution refinement stage is a mandatory input for the chain-of-thought reconstruction stage.

* **Color Coding:** Green is used for input/generation components, orange for the iterative evaluation/revision loop, blue for the CoT generation pipeline, and yellow for final outputs.
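
To make the "Dynamic Evaluation" observation concrete, the sketch below shows one way an instruction-dependent checklist could be scored by several evaluators. The diagram does not say how the checklist is built or how evaluator outputs are combined, so the keyword-based checklist builder, the per-criterion majority vote, and all names here are assumptions chosen for illustration.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical interface: (answer, criterion) -> is the criterion satisfied?
Judge = Callable[[str, str], bool]


def build_checklist(instruction: str) -> List[str]:
    """Toy stand-in for a model that writes criteria for one instruction."""
    text = instruction.lower()
    items = ["states a final answer"]
    if "prove" in text:
        items.append("justifies each step")
    if "code" in text:
        items.append("includes runnable code")
    return items


def score_against_checklist(
    answer: str, checklist: List[str], judges: List[Judge]
) -> Tuple[float, List[str]]:
    """Return the fraction of criteria met and the list of unmet criteria."""
    verdicts: Dict[str, bool] = {}
    for criterion in checklist:
        votes = [judge(answer, criterion) for judge in judges]
        verdicts[criterion] = sum(votes) * 2 > len(votes)  # strict majority
    met = sum(verdicts.values())
    unmet = [c for c, ok in verdicts.items() if not ok]
    return met / len(checklist), unmet


# Toy judges: one keyword matcher, one always lenient, one always strict.
KEYWORDS = {
    "states a final answer": "answer:",
    "justifies each step": "because",
    "includes runnable code": "def ",
}


def keyword_judge(answer: str, criterion: str) -> bool:
    return KEYWORDS[criterion] in answer.lower()


def lenient_judge(answer: str, criterion: str) -> bool:
    return True


def strict_judge(answer: str, criterion: str) -> bool:
    return False


checklist = build_checklist("Write code to add two numbers")
score, unmet = score_against_checklist(
    "def add(a, b): return a + b",
    checklist,
    [keyword_judge, lenient_judge, strict_judge],
)
print(checklist, score, unmet)
```

The unmet criteria returned here play the role of the "Critique" passed to the revision model; a real evaluator would presumably return free-text feedback rather than a list of labels.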

### Interpretation

This diagram outlines a methodology for creating training data for reasoning models. The **Solution Refinement** stage acts as a quality-improvement step, using multi-model critique and iterative revision to turn an initial draft into a higher-quality solution. It appears aimed at the common problem of noisy or low-quality data in model training.

The **CoT Reconstruction** stage then takes this polished solution and reverse-engineers a reasoning process (the Chain-of-Thought) that could lead to it. The result is supervised data that includes reasoning traces: instead of training a model only on the final answer (the solution), the model is also trained on the intermediate reasoning steps (the CoT). Training on such traces is widely reported to help on complex, multi-step tasks, although the diagram itself presents no results.
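
A minimal sketch of how Stage 2 might assemble one training record is shown below. It follows the order of the boxes in the diagram (summary first, then CoT), but the prompt template, the record fields, and the `complete` callable standing in for the "CoT Completion Model" are all assumptions; the diagram does not specify the data format.

```python
import json
from typing import Callable, Dict


def construct_input(prompt: str, solution: str, task: str) -> str:
    """'Construct Input' step: Prompt + Solution, plus an assumed task tag."""
    return f"Task: {task}\nPrompt: {prompt}\nSolution: {solution}"


def reconstruct(
    prompt: str, solution: str, complete: Callable[[str], str]
) -> Dict[str, str]:
    """Build one SFT record; field names are hypothetical."""
    summary = complete(construct_input(prompt, solution, "summary"))  # "Generate Summary"
    cot = complete(construct_input(prompt, solution, "cot"))          # "Generate CoT"
    return {"prompt": prompt, "cot": cot, "summary": summary, "solution": solution}


def toy_complete(text: str) -> str:
    # Stand-in for a real completion model: echoes which task was requested.
    task = text.splitlines()[0].split(": ", 1)[1]
    return f"[stub {task}]"


record = reconstruct("What is 6 x 7?", "42", toy_complete)
line = json.dumps(record)  # one JSON object per line, as in a JSONL file
print(line)
```

Writing one JSON object per line is only a convenient convention for SFT datasets; the actual "Thinking Model SFT Data" format may differ.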

The overall pipeline suggests a focus on **data quality over quantity**. By investing computational resources in refining solutions and reconstructing their reasoning traces, the resulting "Thinking Model SFT Data" is likely to be more effective for training models that need to perform deliberate, step-by-step problem-solving. The "Dynamic Evaluation Checklist" is a notable design element: it implies that evaluation criteria are generated per instruction rather than taken from a fixed rubric, which would make the process adaptable to a wide variety of instructions.